ploeh blog danish software design

Table-driven tennis scoring

Probably the most boring implementation of the tennis kata I've ever written.

Regular readers of this blog will know that I keep coming back to the tennis kata. It's an interesting little problem to attack from various angles.

The tennis scoring rules essentially describe a finite state machine, and while I was thinking about the state transitions involved, I came across an article by Michael McCandless about scoring tennis using finite-state automata.

This isn't the first time I've thought about simply enumerating all possible states in the state machine, but I decided to spend half an hour on actually doing it. While Michael McCandless shows that an optimisation is possible, his minimised version doesn't enable us to report all intermediary states with the correct labels. For example, he optimises away thirty-all by replacing it with deuce. The end result is still the same, in the sense that the minimised state machine will arrive at the same winner for the same sequence of balls, but it can't correctly report the score while the game is in progress.

For that reason, I decided to use his non-optimised state machine as a launch pad.

States #

I used F# to enumerate all twenty states:

type Score =
    | LoveAll
    | FifteenLove
    | LoveFifteen
    | ThirtyLove
    | FifteenAll
    | LoveThirty
    | FortyLove
    | ThirtyFifteen
    | FifteenThirty
    | LoveForty
    | FortyFifteen
    | ThirtyAll
    | FifteenForty
    | GamePlayerOne
    | FortyThirty
    | ThirtyForty
    | GamePlayerTwo
    | AdvantagePlayerOne
    | Deuce
    | AdvantagePlayerTwo

Utterly boring, yes, but perhaps boring code might be good code.

Table-driven methods #

Code Complete describes a programming technique called table-driven methods. The idea is to replace branching instructions such as if, else, and switch with a lookup table. The book assumes that the table exists in memory, but in this case, we can implement the table lookup with pattern matching:

// Score -> Score
let ballOne = function
    | LoveAll            -> FifteenLove
    | FifteenLove        -> ThirtyLove
    | LoveFifteen        -> FifteenAll
    | ThirtyLove         -> FortyLove
    | FifteenAll         -> ThirtyFifteen
    | LoveThirty         -> FifteenThirty
    | FortyLove          -> GamePlayerOne
    | ThirtyFifteen      -> FortyFifteen
    | FifteenThirty      -> ThirtyAll
    | LoveForty          -> FifteenForty
    | FortyFifteen       -> GamePlayerOne
    | ThirtyAll          -> FortyThirty
    | FifteenForty       -> ThirtyForty
    | GamePlayerOne      -> GamePlayerOne
    | FortyThirty        -> GamePlayerOne
    | ThirtyForty        -> Deuce
    | GamePlayerTwo      -> GamePlayerTwo
    | AdvantagePlayerOne -> GamePlayerOne
    | Deuce              -> AdvantagePlayerOne
    | AdvantagePlayerTwo -> Deuce

The ballOne function returns the new score when player one wins a ball. It takes the old score as input.

I'm going to leave ballTwo as an exercise to the reader.
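For readers who'd like to see the in-memory variant that Code Complete has in mind, here's a minimal C# sketch. It isn't part of the F# code base above; the Score enum and the TennisTable class are hypothetical names that mirror the F# cases, and only player one's transitions are shown.

```csharp
using System.Collections.Generic;

// Hypothetical C# mirror of the F# Score type.
public enum Score
{
    LoveAll, FifteenLove, LoveFifteen, ThirtyLove, FifteenAll, LoveThirty,
    FortyLove, ThirtyFifteen, FifteenThirty, LoveForty, FortyFifteen,
    ThirtyAll, FifteenForty, GamePlayerOne, FortyThirty, ThirtyForty,
    GamePlayerTwo, AdvantagePlayerOne, Deuce, AdvantagePlayerTwo
}

public static class TennisTable
{
    // Lookup table: old score -> new score when player one wins the ball.
    private static readonly IReadOnlyDictionary<Score, Score> ballOne =
        new Dictionary<Score, Score>
        {
            [Score.LoveAll] = Score.FifteenLove,
            [Score.FifteenLove] = Score.ThirtyLove,
            [Score.LoveFifteen] = Score.FifteenAll,
            [Score.ThirtyLove] = Score.FortyLove,
            [Score.FifteenAll] = Score.ThirtyFifteen,
            [Score.LoveThirty] = Score.FifteenThirty,
            [Score.FortyLove] = Score.GamePlayerOne,
            [Score.ThirtyFifteen] = Score.FortyFifteen,
            [Score.FifteenThirty] = Score.ThirtyAll,
            [Score.LoveForty] = Score.FifteenForty,
            [Score.FortyFifteen] = Score.GamePlayerOne,
            [Score.ThirtyAll] = Score.FortyThirty,
            [Score.FifteenForty] = Score.ThirtyForty,
            [Score.GamePlayerOne] = Score.GamePlayerOne,
            [Score.FortyThirty] = Score.GamePlayerOne,
            [Score.ThirtyForty] = Score.Deuce,
            [Score.GamePlayerTwo] = Score.GamePlayerTwo,
            [Score.AdvantagePlayerOne] = Score.GamePlayerOne,
            [Score.Deuce] = Score.AdvantagePlayerOne,
            [Score.AdvantagePlayerTwo] = Score.Deuce
        };

    // No branching: the transition is a single dictionary lookup.
    public static Score BallOne(Score score) => ballOne[score];
}
```

One trade-off of this variant is that the C# compiler doesn't check that the dictionary covers every enum value. A missing entry only shows up at run time as a KeyNotFoundException, whereas the F# compiler warns about an incomplete pattern match.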

Smoke test #

Does it work, then? Here are a few interactions with the API in F# Interactive:

> ballOne LoveAll;;
val it : Score = FifteenLove

> LoveAll |> ballOne |> ballTwo;;
val it : Score = FifteenAll

> LoveAll |> ballOne |> ballTwo |> ballTwo;;
val it : Score = FifteenThirty

> LoveAll |> ballOne |> ballTwo |> ballTwo |> ballTwo;;
val it : Score = FifteenForty

> LoveAll |> ballOne |> ballTwo |> ballTwo |> ballTwo |> ballOne;;
val it : Score = ThirtyForty

> LoveAll |> ballOne |> ballTwo |> ballTwo |> ballTwo |> ballOne |> ballTwo;;
val it : Score = GamePlayerTwo

It looks like it's working.

Automated tests #

Should I be writing unit tests for this implementation?

I don't see how a test would be anything but a duplication of the two 'transition tables'. Given that the score is thirty-love, when player one wins the ball, then the new score should be forty-love. Indeed, the ballOne function already states that.

We trust tests because they are simple. When the implementation is as simple as the test that would exercise it, then what's the benefit of the test?

To be clear, there are still compelling reasons to write tests for some simple implementations, but that's another discussion. I don't think those reasons apply here. I'll write no tests.

Code size #

While this code is utterly dull, it takes up some space. In all, it runs to 67 lines of code.

For comparison, the code base that evolves throughout my Types + Properties = Software article series is 65 lines of code, not counting the tests. When I also count the tests, that entire code base contains around 300 lines of code. That's more than four times as much code.

Preliminary research implies that bug count correlates linearly with line count. The more lines of code, the more bugs.

While I believe that this is probably a simplistic rule of thumb, there's much to like about smaller code bases. Once tests are counted, this utterly dull implementation is actually smaller than a comparable implementation built from small functions.

Conclusion #

Many software problems can be modelled as finite state machines. I find that this is often the case in my own field of line-of-business software and web services.

It's not always possible to exhaustively enumerate all states, because each 'type' of state carries data that can't practically be enumerated. For example, polling consumers need to carry timing statistics. These statistics influence how the state machine transitions, but the range of possible values is so vast that it can't be enumerated as types.

It may not happen often that you can fully enumerate all states and transitions of a finite state machine, but I think it's worthwhile to be aware of such refactoring opportunities. It might make your code dully simple.

The dispassionate developer

Caring for your craft is fine, but should you work for free?

I've met many passionate developers in my career. Programmers who are deeply interested in technology, programming languages, methodology, and self-improvement. I've also seen many online profiles where people present themselves as 'passionate developers'.

These are the people who organise and speak at user groups. They write blog posts and host podcasts. They contribute to open source development in their free time.

I suppose that I can check many of those boxes myself. In the last few years, though, I've become increasingly sceptical that this is a good idea.

Working for free #

In the last five years or so, I've noticed what looks like a new trend. Programmers contact me to ask about paid mentorship. They offer to pay me out of their own pocket to mentor them.

I find that flattering, but it also makes me increasingly disenchanted with the software development industry. To be clear, this isn't an attack on the good people who care so much about their craft that they are willing to spend their hard-earned cash on improving their skill. This is more a reflection on employers.

For reasons that are complicated and that I don't fully understand, the software development community in the eighties and nineties developed a culture of anti-capitalism and liberal values that put technology on a pedestal for its own sake. Open source good; commercial software bad. Free software good; commercial software bad.

I'm not entirely unsympathetic to such ideas, but it seems clear, now, that these ideas have had unintended consequences. The idea of free software, for example, has led to a software economy where you, the user, are no longer the customer, but the product.

The idea of open source, too, seems largely defunct as a means of 'sticking it to the man'. The big tech companies now embrace open source. Despite initial enmity towards open source, Microsoft now owns GitHub and is one of the most active contributors. Google and Facebook control popular front-end platforms such as Angular and React, as well as many other technologies such as Android or GraphQL. Continue the list at your own leisure.

Developing open source is seen as a way to establish credibility, not only for companies, but for individuals as well. Would you like a cool job in tech? Show me your open-source portfolio.

Granted, the focus on open-source contributions as a replacement for a CV seems to have peaked, and good riddance.

I deliberately chose to use the word portfolio, above. Like a struggling artist, you're expected to show up with such a stunning sample of your work that you amaze your potential new employer and blow away your competition. Unlike struggling artists, though, you've already given away everything in your portfolio, and so have other job applicants. Employers benefit from this. You work for free.

The passion ethos #

You're expected to 'contribute' to open source software. Why? Because employers want employees who are passionate about their craft.

The more you ponder the implied ethos, the stranger it gets. Would you like engineers to be passionate as they design new bridges? Would you like a surgeon to be passionate as she operates on you? Would you like judges to be passionate as they pass sentence on your friend?

I'd like such people to care about their vocation, but I'd prefer that they keep a cool head and make as rational decisions as possible.

Why should programmers be passionate?

I don't think that it's in our interest to be passionate, but it is in employers' interest. Not only are passionate people expected to work for free, they're also easier to manipulate. Tell a passionate person something he wants to hear, and he may turn off further critical thinking because the praise feels good.

Some open-source maintainers have created crucial software that runs everywhere. Companies make millions off that free software, while maintainers are often left with an increasing support burden and no money.

They do, however, often get a pat on the back. They get invited to speak at conferences, and can add creator of Xyz to their social media bios.

Until they burn out, that is. Passion, after all, comes from the Latin for suffering.

Self-improvement #

I remember consulting with a development organisation, helping them adopt some new technology. As my engagement was winding down, I had a meeting with the manager to discuss how they should be able to carry on without me. This was back in my Microsoft days, so I suggested that they institute a training programme for the employees. To give it structure, they could, for example, study for some Microsoft certifications.

The development manager immediately shot down that idea: "If we do that, they'll leave us once they have the certification."

I was flabbergasted.

You've probably seen quotes like this:

"What happens if we train our people and they leave?"

"What happens if we don't and they stay?"

This is one of those bon mots that seem impossible to attribute to a particular source, but the idea is clear enough. The sentiment doesn't seem to represent mainstream behaviour, though.

Granted, I've met more than one visionary leader willing to invest in employees' careers, but most managers don't.

While I teach and coach internationally, I naturally have more experience with my home region of Copenhagen, and more broadly Scandinavia. Here, it's a common position that anything that relates to work should only happen during work hours. If the employer doesn't allow training on the job, then most employees don't train.

What happens if you don't keep up to date with new methodologies, new frameworks, new programming languages? Your skill set becomes obsolete. Not overnight, but over the years. Finding a new job becomes harder and harder.

As your marketability atrophies, your employer can treat you worse and worse. After all, where are you going to go?

If you're tired of working with legacy code without tests, most of your suggestions for improvements will be met by a shrug. We don't have time for that now. It's more important to deliver value to the customer.

You'll have to work long hours and weekends fire-fighting 'unexpected' issues in production while still meeting deadlines.

A sufficiently cynical employer may have no qualms keeping employees busy this way.

To be clear, I'm not saying that it's good business sense to treat skilled employees like this, and I'm not saying that this is how all employers conduct business, but I've seen enough development organisations that fit the description.

As disappointing as it may be, keeping up to date with technology is your responsibility, and if you can't sneak in some time for self-improvement at work, you'll have to do it on your own time.

This has little to do with passion, but much to do with self-preservation.

Can I help you? #

The programmers who contact me (and others) for mentorship are the enlightened ones who've already figured this out.

That doesn't mean that I'm comfortable taking people's hard-earned money. If I teach you something that improves your productivity, your employer benefits, too. I think that your employer should pay for that.

I'm aware that most companies don't want to do that. It's also my experience that while most employers couldn't care less whether you pay me for mentorship, they don't want you to show me their code. This basically means that I can't really mentor you, unless you can reproduce the problems you're having as anonymised code examples.

But if you can do that, you can ask the whole internet. You can try asking on Stack Overflow and then ping me. You're also welcome to ask me. If your minimal working example is interesting, I may turn it into a blog post, and you pay nothing.

People also ask me how they can convince their managers or colleagues to do things differently. I often wonder why they don't make technical decisions already, but this may be my cultural bias talking. In Denmark you can often get away with the ask-for-forgiveness-rather-than-permission attitude, but it may not be a good idea in your culture.

Can I magically convince your manager to do things differently? Not magically, but I do know an effective trick: get him or her to hire me (or another expensive consultant). Most people don't heed advice given for free, but if they pay dearly for it, they tend to pay attention.

Other than that, I can only help you as I've passionately tried to help the world-wide community for decades: by blogging, answering questions on Stack Overflow, writing books, speaking at user groups and conferences, publishing videos, and so on.

Ticking most of the boxes #

Yes, I know that I fit the mould of the passionate developer. I've blogged regularly since 2006, I've answered thousands of questions on Stack Overflow, I've given more than a hundred presentations, been a podcast guest, and co-written a book, none of which has made me rich. If I don't do it for the passion, then why do I do it?

Sometimes it's hard and tedious work, but even so, I do much of it because I can't really help it. I like to write and teach. I suppose that makes me passionate.

My point with this article isn't that there's anything wrong with being passionate about software development. The point is that you might want to regard it as a weakness rather than an asset. If you are passionate, beware that someone doesn't take advantage of you.

I realise that I didn't view the world like this when I started blogging in January 2006. I was driven by my passion. In retrospect, though, I think that I have been both privileged and fortunate. I'm not sure my career path is reproducible today.

When I started blogging, it was a new-fangled thing. Just the fact that you blogged was enough to get a little attention. I was in the right place at the right time.

The same is true for Stack Overflow. The site was still fairly new when I started, and a lot of frequently asked questions were first asked on my watch. I still get upvotes on answers from 2009, because these are questions that people still ask. I was just lucky enough to be around the first time they were asked on the site.

I'm also privileged by being an able-bodied man born into the middle class in one of the world's richest countries. I received a free education. Denmark has free health care and generous social security. Taking some chances with your career in such an environment isn't even reckless. I've worked for more than one startup. That's not risky here. Twice, I've worked for a company that went out of business; in neither case did I lose money.

Yes, I've been fortunate, but my point is that you should probably not use my career as a model for yours, just as you shouldn't use those of Robert C. Martin, Kent Beck, or Martin Fowler. It's hardly a reproducible career path.

Conclusion #

What can you do, then, if you want to stand out from the crowd? How do you advance your software development career?

I don't know. I never claimed that this was easy.

Being good at something helps, but you must also make sure that the right people know what you're good at. You're probably still going to have to invest some of your 'free' time to make that happen.

Just beware that you aren't being taken advantage of. Be dispassionate.

Comments

Thanks Mark for your post.

I really relate to your comment about portfolio. I am still a young developer, not even 30 years old. A few years ago, I had an unhealthy obsession, that I should have a portfolio, otherwise I would be having a hard time finding job.

I am not entirely sure where this thought was coming from, but it is not important in what I want to convey. I was worrying that I do not have a portfolio and that anxiety itself, prevented me from doing any real work to have anything to showcase. Kinda vicious cycle.

Anyways, even without a portfolio, I didn't have any troubles switching jobs. I focused on presenting what I have learned in every project I worked on. What was good about it, what were the struggles. I presented myself not as a just a "mercenary" if you will. I always gave my best at jobs and at the interviews and somehow managed to prove to myself that a portfolio is not a must.

Granted, everybody's experience is different and we all work in different market conditions. But my takeaway is - don't fixate on a thing, if it's not an issue. That's kinda what I was doing a few years back.

Pendulum swing: pure by default

Favour pure functions over polymorphic dependencies.

This is an article in a small series of articles about personal pendulum swings. Here, I'll discuss another contemporary one-eighty. This one is older than the other two I've discussed in this article series, but I believe that it deserves to be included.

Once upon a time, I used to consider Dependency Injection (DI) and injected interfaces an unequivocal good: the more, the merrier. These days, I tend to only model true application dependencies as injected dependencies. For the rest, I use pure functions.

Background #

When I started my programming career, I'd barely taught myself to program. I worked in Visual Basic, VBScript, and C++ before I encountered the concept of an interface. What C++ I wrote was entirely procedural, and I don't recall being aware of inheritance. Visual Basic 6 didn't have inheritance, and I'm fairly sure that VBScript didn't, either.

I vaguely recall first being introduced to the concept of an interface in Visual Basic. It took me some time to wrap my head around it, and while I thought it seemed clever, I couldn't find any practical use for it.

I think that I wrote my first professional C# code base in 2002. We didn't use Dependency Injection or interfaces. I don't even recall that we used much inheritance.

Inject all the things #

When I discovered test-driven development (TDD) the year after, it didn't take me too long to figure out that I'd need to isolate units from their dependencies. Based on initial successes, I even wrote an article about mock objects for MSDN Magazine October 2004.

At that time I'd made interfaces a part of my active technique. I still struggled with how to replace a unit's 'real' dependencies with the mock objects. Initially, I used what I later, in Dependency Injection in .NET, called Bastard Injection. As I also described in the book, things took a dark turn for a while as I discovered the Service Locator anti-pattern - only, at that time, I didn't realise that it was an anti-pattern. Soon after, fortunately, I discovered Pure DI.

That problem solved, I began an era of my programming career where everything became an interface. It does enable unit testing, so it's better than not being able to test, but after some years I began to sense the limits.

Perhaps the worst problem is that you get a deluge of interfaces. Many of these interfaces have similar-sounding names like IReservationsManager and IRestaurantManager. This makes discoverability harder: Which of these interfaces should you use? One defines a TrySave method, the other a Check method, and they aren't that different.

This wasn't clear to me when I worked in teams with one or two programmers. Once I saw how this played out in larger teams, however, I began to understand that one developer's interface remained undiscovered by other team members. When existing 'abstractions' are unclear, it leads to frequent reinvention of interfaces to implement the same functionality. Duplication abounds.

Designing with many fine-grained dependencies also has a tendency to drag into existence many factory interfaces, a well-known design smell.

Have a sandwich #

It's remarkable how effectively you can lie to yourself. As late as 2017 I still concluded that fine-grained dependencies were best, despite most of my arguments pointing in another direction.

I first encountered functional programming in 2010, but was off to a slow start. It took me years before I realised that Dependency Injection isn't functional. There are other ways to address the problem of separating pure functions from impure actions, the simplest of which is the impureim sandwich.

Which parts of the application architecture are inherently impure? The usual suspects: the system clock, random number generators, the file system, databases, network resources. Notice how these are the dependencies that you usually need to replace with Test Doubles in order to make unit tests deterministic.

It makes sense to model these as dependencies. I still define interfaces for those and use Dependency Injection to control them. I do, however, use the impureim sandwich architecture to deal with the impure actions first, so that I can then delegate all the complex decision logic to pure functions.

Pure functions are intrinsically testable, so that solves many of the problems with testability. There's still a need to test how the impure actions interact with the pure functions. Here I take a step up in the Test Pyramid and write just enough state-based integration tests to render it probable that the integration works as intended. You can see an example of such a test here.
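To make the shape of the sandwich concrete, here's a minimal C# sketch. It isn't taken from any of my code bases; the names Reservation, IReservationStore, ReservationPolicy, and ReservationsService are assumptions made up for illustration, and the capacity rule is deliberately simplistic.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public sealed record Reservation(DateTime At, int Quantity);

// Impure dependency (a database, say): modelled as an injected interface.
public interface IReservationStore
{
    IReadOnlyCollection<Reservation> ReadReservations(DateTime date);
    void Create(Reservation reservation);
}

public static class ReservationPolicy
{
    // Pure function: the result depends only on the arguments.
    public static bool WillAccept(
        int capacity,
        IEnumerable<Reservation> existing,
        Reservation candidate)
    {
        int reserved = existing.Sum(r => r.Quantity);
        return reserved + candidate.Quantity <= capacity;
    }
}

public sealed class ReservationsService
{
    private readonly IReservationStore store;
    private readonly int capacity;

    public ReservationsService(IReservationStore store, int capacity)
    {
        this.store = store;
        this.capacity = capacity;
    }

    public bool TryReserve(Reservation candidate)
    {
        // Impure: gather the data the decision needs.
        var existing = store.ReadReservations(candidate.At.Date);

        // Pure: make the decision.
        bool accept =
            ReservationPolicy.WillAccept(capacity, existing, candidate);

        // Impure: act on the decision.
        if (accept)
            store.Create(candidate);
        return accept;
    }
}
```

The decision logic in WillAccept can be unit tested without any Test Double, since it only takes values as input. Only TryReserve needs the injected store, and that's the part I'd cover with a handful of integration tests.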

Conclusion #

From having favoured fine-grained Dependency Injection, I now write all decision logic as pure functions by default. These only need to implement interfaces if you need the logic of the system to be interchangeable, which isn't that often. I do still use Dependency Injection for the impure dependencies of the system. There's usually only a handful of those.

Pendulum swing: sealed by default

Inheritance is evil. Seal your classes.

This is an article in a small series of articles about personal pendulum swings. Here, I document another recent change of heart that's been a long time coming. In short, I now seal C# classes whenever I remember to do it.

The code shown here is part of the sample code base that accompanies my book Code That Fits in Your Head.

Background #

After I discovered test-driven development (TDD) (circa 2003) I embarked on a quest for proper ways to enable testability. Automated tests should be deterministic, but real software systems rarely are. Software depends on the system clock, random number generators, the file system, the states of databases, web services, and so on. All of these may change independently of the software, making it difficult to express an automated systems test in a deterministic manner.

This is a known problem in TDD. In order to get the system under test (SUT) under control, you have to introduce what Michael Feathers calls seams. In C#, there have traditionally been two ways you could do that: extract and override, and interfaces.

The original Framework Design Guidelines explicitly recommended base classes over interfaces, and I wasn't wise to how unfortunate that recommendation was. For a long time, I'd define abstractions with (abstract) base classes. I was even envious of Java, where instance members are virtual (overridable) by default. In C# you must explicitly declare a method virtual to make it overridable.

Abstract base classes aren't too bad if you leave them completely empty, but I never had much success with non-abstract base classes and virtual members and the whole extract-and-override manoeuvre. I soon concluded that Dependency Injection with interfaces was a better alternative.

Even after I changed to exclusively relying on interfaces (instead of abstract base classes), remnants of the rule stuck with me for years: unsealed good; sealed bad. Even today, the framework design guidelines favour unsealed classes:

"CONSIDER using unsealed classes with no added virtual or protected members as a great way to provide inexpensive yet much appreciated extensibility to a framework."

I can no longer agree with this guidance; I think it's poor advice.

You don't need inheritance #

Base classes imply class inheritance as a reuse and extensibility mechanism. We've known since 1994, though, that inheritance probably isn't the best design principle.

"Favor object composition over class inheritance."

In single-inheritance languages like C# and Java, inheritance is just evil. Once you decide to inherit from a base class, you exclude all other base classes. Inheritance signifies a single 'yes' and an infinity of 'noes'. This is particularly problematic if you rely on inheritance for reuse. You can only 'reuse' a single base class, which again leads to duplication or bloated base classes.

It's been years (probably more than a decade) since I stopped relying on base classes for anything. You don't need inheritance. Haskell doesn't have it at all, and I only use it in C# when a framework forces me to derive from some base class.

There's little you can do with an abstract class that you can't do in some other way. Abstract classes are isomorphic with Dependency Injection up to accessibility.

Seal #

If I already follow a design principle of not relying on inheritance, then why keep classes unsealed? Explicit is better than implicit, so why not make that principle visible? Seal classes.

It doesn't have any immediate impact on the code, but it might make it clearer to other programmers that an explicit decision was made.

You already saw examples in the previous article: Both Month and Seating are sealed classes. They're also immutable records. I seal more than record types, too:

public sealed class HomeController

I seal Controllers, as well as services:

public sealed class SmtpPostOffice : IPostOffice

Another example is an ASP.NET filter named UrlIntegrityFilter.

A common counter-argument is that 'you may need extensibility in the future':

"by using "sealed" and not virtual in libs dev says "I thought of all extension point" which seems arrogant"

I agree that it'd be arrogant to claim that you've thought about all extension points. Trying to predict future need is futile.

I don't agree, however, that making everything virtual is a good idea, but it's because I disagree with the underlying premise. The presupposition is that extensibility should be enabled through inheritance. If it's not already clear, I believe that this has many undesirable consequences. There are better ways to enable extensibility than through inheritance.
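As an illustration of one such alternative, here's a minimal C# sketch of extension by composition. The types INotifier, ConsoleNotifier, and RecordingNotifier are hypothetical and not part of the book's code base; the point is only that both classes can stay sealed while behaviour is still added from the outside.

```csharp
using System;
using System.Collections.Generic;

public interface INotifier
{
    void Notify(string message);
}

// A sealed implementation: nobody can derive from it.
public sealed class ConsoleNotifier : INotifier
{
    public void Notify(string message) => Console.WriteLine(message);
}

// Adds behaviour by wrapping any INotifier instead of inheriting from one.
public sealed class RecordingNotifier : INotifier
{
    private readonly INotifier inner;
    private readonly List<string> log = new List<string>();

    public RecordingNotifier(INotifier inner)
    {
        this.inner = inner;
    }

    // Messages seen so far, in the order they were sent.
    public IReadOnlyList<string> Log => log;

    public void Notify(string message)
    {
        log.Add(message);
        inner.Notify(message);
    }
}
```

Because RecordingNotifier depends on the interface rather than on a base class, it composes with any other implementation, and several such wrappers can be stacked. Inheritance would only allow a single base class.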

Conclusion #

I've begun to routinely seal new classes. I don't always remember to do it, but I think that I ought to. As I also explained in the previous article, this is only my default. If something has to be a base class, that's still an option. Likewise, just because a class starts out sealed doesn't mean that it has to stay sealed forever. While sealing an unsealed class is a breaking change, unsealing a sealed class isn't.

I can't think of any reason why I'd do that, though.

Pendulum swing: internal by default

Declare new C# classes as internal by default, and public by choice.

This is an article in a small series of articles about personal pendulum swings. Here, I document a recent change of heart that's been a long time coming. In short, I now declare C# classes as internal unless they're driven by tests.

The code shown here is part of the sample code base that accompanies my book Code That Fits in Your Head.

Background #

When you create a new class in Visual Studio, the default accessibility is internal. In fact, Visual Studio's default templates don't add an access modifier at all, but if no access modifier is present, it implies internal.
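A small sketch of what that means in practice. The class names are made up for illustration, and the comments apply to top-level (non-nested) classes.

```csharp
// No access modifier: a top-level class is internal by default.
class ImplicitlyInternal { }

// Equivalent to the above, but the decision is spelled out.
internal class ExplicitlyInternal { }

// Only public types are visible to other assemblies, such as a test library.
public class VisibleToOtherAssemblies { }
```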

When I started out programming C#, I don't recall thinking much about accessibility modifiers. By default, then, I'd be using mostly internal classes. What little I knew about encapsulation (information hiding, anyone?) led me to believe that the more internal my code was, the better encapsulation it had.

It's possible that I make my past self more ignorant than I actually was. It's almost twenty years ago: I don't recall all the details.

Public all the things #

When I discovered test-driven development (TDD) (circa 2003) all my classes became public. They had to. When tests are interacting with code in another library, they can only exercise the system under test (SUT) if they can reach it. The tests make the SUT classes public.

Yes, it's technically possible to test internal classes in .NET, but I don't believe that you should. I've yet to change my mind about that; no imminent pendulum swing there. You're testing something you care about. If the internal code serves any, any, purpose, it must be somehow observable. If so, verify that such observable behaviour takes place; if not, delete the code. (I'm sure you can dream up some corner cases where this doesn't hold; fine: I'm painting with a broad brush, here.)

For years, I applied TDD, but I wasn't aware of the red-green-refactor cycle. I rarely changed the public API that the tests interacted with, and when I did, I made sure to adjust the tests accordingly. If a refactoring gave rise to new classes, I'd often write tests for those new classes as well.

Imagine, for example, invoking the Extract Class refactoring. The new class would be as covered by tests as before the extraction, but what happens next is typically that you need to tweak it. When that happened to me, I'd typically write completely new tests to cover it. To do that, I'd need the extracted class to be public.

In this phase of my professional life, my classes were almost exclusively public, with internal classes only making a rare appearance.

One problem this tends to cause is that it makes code bases more brittle. Every type change is a potential breaking change. When every public class is covered by tests, this makes tests brittle.

I think that it's relevant to consider the context of the code base. At this phase of my professional life, I maintained AutoFixture, a fairly popular open-source library. I wanted that library to be stable so that users could trust it. I considered the test suite a guard of the contract. As long as a change didn't break any test, I considered it likely that it wasn't a breaking change. Thus, I was already conservative when it came to editing tests. I considered tests to be append-only in principle.

I still consider it prudent to be conservative when it comes to a library with a public API. This doesn't mean, however, that this line of thinking carries over to code bases without a public (language-level) API. This may include web sites and services, but could also include installed apps. As long as there's no public API, there's no contract to break.

Internal by default #

In 2020 I wrote a REST API of middling complexity. I used outside-in TDD as a major driver. In the spirit of behaviour-driven development I favour describing the observable behaviour of the system. I use self-hosted state-based integration tests for this purpose. Only when I find that these tests get too complex do I grudgingly drop down to the unit-test level.

The things that I test with unit tests have to be public. This still leaves plenty of room for behaviour described by the integration tests to have internal implementation details. The code base I mentioned has several examples of that. Some of them I've already described here on the blog.

For example, notice that the LinksFilter shown here is an internal class. Its behaviour is covered by abundant integration tests, so I'm not afraid to refactor it if need be. Those LinkToYear, LinkToMonth, and LinkToDay extension methods that it uses are internal too.

Another example is the UrlIntegrityFilter seen here. The class itself is internal and its behaviour is composed from private helper functions. Its counterpart SigningUrlHelper is also internal. (Its companion SigningUrlHelperFactory, shown in the same article, is public, but that's an oversight on my part. It can easily be internal as well.) All that URL-signing behaviour is, again, covered by tests that verify the behaviour of the REST API.

Another example from the same code base can be found in its so-called calendar feature. The system is an online restaurant reservation system. It allows clients to browse a day, a month, or even a year to see if there are any free spots for a given time slot. You can see an example here. While I test-drove the calendar feature with integration tests, it quickly dawned on me that I had three disparate cases (day, month, year) that essentially represented the same concept: a period.

A period is a closed set of heterogeneous data. A year contains only a single datum: the year itself (e.g. 2021). A month contains both a month and a year, and so on. A closed set of heterogeneous data describes a sum type, and since I know that in object-oriented programming, sum types can be encoded as Visitors, I introduced a Visitor API:

    internal interface IPeriod
    {
        T Accept<T>(IPeriodVisitor<T> visitor);
    }

    internal interface IPeriodVisitor<T>
    {
        T VisitYear(int year);
        T VisitMonth(int year, int month);
        T VisitDay(int year, int month, int day);
    }

I decided, however, to keep this API internal, since this isn't the only possible way to model this feature. As is the case with the other examples I've shown here, the behaviour is covered by integration tests. I feel free to refactor. In fact, this Visitor-based API is actually the result of a refactoring from something more ad hoc that I didn't like.

Here's one of the three IPeriod implementations, in case you're curious:

    internal sealed class Month : IPeriod
    {
        private readonly int year;
        private readonly int month;

        public Month(int year, int month)
        {
            this.year = year;
            this.month = month;
        }

        public T Accept<T>(IPeriodVisitor<T> visitor)
        {
            return visitor.VisitMonth(year, month);
        }

        public override bool Equals(object? obj)
        {
            return obj is Month month &&
                   year == month.year &&
                   this.month == month.month;
        }

        public override int GetHashCode()
        {
            return HashCode.Combine(year, month);
        }
    }

This class, too, is internal, as are its two companions Day and Year. I'll leave it as an exercise for the interested reader to implement these two classes, as well as IPeriodVisitor<T> implementations that return the next or previous period, or the first or last tick of the period, etcetera.
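One possible answer to that exercise is sketched below. It is not the code from the actual code base: the Year and Day classes, NextPeriodVisitor, and FormatVisitor are all assumptions made for illustration, and the Equals and GetHashCode overrides are left out for brevity. The point is only to show how a closed set of cases forces every visitor to handle year, month, and day.

```csharp
using System;

internal interface IPeriod
{
    T Accept<T>(IPeriodVisitor<T> visitor);
}

internal interface IPeriodVisitor<T>
{
    T VisitYear(int year);
    T VisitMonth(int year, int month);
    T VisitDay(int year, int month, int day);
}

// Hypothetical implementations of the three cases of the sum type.
internal sealed class Year : IPeriod
{
    private readonly int year;
    public Year(int year) { this.year = year; }
    public T Accept<T>(IPeriodVisitor<T> visitor) { return visitor.VisitYear(year); }
}

internal sealed class Month : IPeriod
{
    private readonly int year;
    private readonly int month;
    public Month(int year, int month) { this.year = year; this.month = month; }
    public T Accept<T>(IPeriodVisitor<T> visitor) { return visitor.VisitMonth(year, month); }
}

internal sealed class Day : IPeriod
{
    private readonly int year;
    private readonly int month;
    private readonly int day;
    public Day(int year, int month, int day) { this.year = year; this.month = month; this.day = day; }
    public T Accept<T>(IPeriodVisitor<T> visitor) { return visitor.VisitDay(year, month, day); }
}

// Hypothetical visitor: returns the period that follows the visited one.
// DateTime does the calendar arithmetic (month lengths, leap years).
internal sealed class NextPeriodVisitor : IPeriodVisitor<IPeriod>
{
    public IPeriod VisitYear(int year) { return new Year(year + 1); }

    public IPeriod VisitMonth(int year, int month)
    {
        var next = new DateTime(year, month, 1).AddMonths(1);
        return new Month(next.Year, next.Month);
    }

    public IPeriod VisitDay(int year, int month, int day)
    {
        var next = new DateTime(year, month, day).AddDays(1);
        return new Day(next.Year, next.Month, next.Day);
    }
}

// Hypothetical visitor: renders a period as text, so results can be inspected.
internal sealed class FormatVisitor : IPeriodVisitor<string>
{
    public string VisitYear(int year)
    {
        return FormattableString.Invariant($"{year:D4}");
    }

    public string VisitMonth(int year, int month)
    {
        return FormattableString.Invariant($"{year:D4}-{month:D2}");
    }

    public string VisitDay(int year, int month, int day)
    {
        return FormattableString.Invariant($"{year:D4}-{month:D2}-{day:D2}");
    }
}
```

With these definitions, new Month(2020, 12).Accept(new NextPeriodVisitor()) produces a Month that FormatVisitor renders as 2021-01. Since everything is internal, any of it can be replaced by a different model without touching the tests.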

Public by choice #

This shifted emphasis of mine isn't a return to a simpler time. It's not internal all the things! It's about shifting the default for classes that are not driven by tests. Those classes that are artefacts of TDD are still public since I don't directly unit test internal classes.

Other classes may start out as internal and then get promoted to public by choice. For example, I'd introduced a Seating class in the code base to model how long a seating was supposed to take:

    internal sealed class Seating
    {
        internal Seating(TimeSpan seatingDuration, Reservation reservation)
        {
            SeatingDuration = seatingDuration;
            Reservation = reservation;
        }

        // Members follow...

Some restaurants have second seatings (or more). They give you a predefined duration after which you're supposed to be done so that they can reuse your table for another party. I'd used the Seating class to encapsulate some logic related to that, such as the Overlaps method:

    internal DateTime Start
    {
        get { return Reservation.At; }
    }

    internal DateTime End
    {
        get { return Start + SeatingDuration; }
    }

    internal bool Overlaps(Reservation other)
    {
        var otherSeating = new Seating(SeatingDuration, other);
        return Start < otherSeating.End && otherSeating.Start < End;
    }

While I considered this a well-designed little class with good encapsulation, I kept it internal simply because there was no need to make it public. It was indirectly covered by test cases, but it was a result of a refactoring and not directly test-driven.

As I started to add a new feature, I realised that I'd be able to write new unit tests in a better way if I could reuse Seating and a variation of its Overlaps method. I considered it carefully and decided to make the class and its members public:

    public sealed class Seating
    {
        public Seating(TimeSpan seatingDuration, Reservation reservation)
        {
            SeatingDuration = seatingDuration;
            Reservation = reservation;
        }

        // Members follow...

I made this decision after explicit deliberation. It didn't take long, but I did briefly stop to consider whether this seemed like a good idea. This code base isn't a reusable library in the wild, so I wasn't concerned about misuse of the API. I did consider, on the other hand, how this would increase coupling between the tests and the production code base. It didn't take me long to decide that in this case, I was okay with that.

Seating had already existed as an internal class for some time and had proven useful and stable. Putting on my DDD hat, I also thought that Seating represented a proper domain concept.

Conclusion #

You can go back and forth on how you write code; which rules of thumb you apply. For many years, I favoured public classes. I think that I even, at one time, tweaked the Visual Studio templates to explicitly create new classes as public.

Now, I've changed my heuristic. Classes driven into existence by tests are public; they have to be. Other classes I now make internal by default, and public by choice.

This is going to be my rule until I change it.

Pendulum swings

The software development industry goes back and forth on how to do things, and so do I.

I've been working with something IT-related since 1994, and I've been a professional programmer since 1999. When you observe the software development industry over decades, you may start to notice some trends. One decade, service-oriented architecture (SOA) is cool; the next, consolidation sets in; then it's micro-services; and, as far as I can tell, monoliths are on the way in again, although I'm sure that we'll find something else to call them.

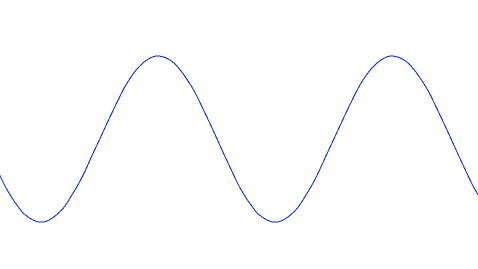

It's as if a pendulum swings from one extreme to the other. Sooner or later, it comes back, only to then continue its swing in the other direction. If you view it over time and assume no loss to friction, a pendulum describes a sine wave.

There are probably several reasons for this motion. The benign interpretation is that it's still a young industry and we're still learning. It's not uncommon to see oscillations in dynamic systems, particularly when feedback isn't immediate.

Software architecture tends to produce slow feedback. Architecture solves more than one problem, including scalability, but a major motivation to think about architecture is to pick a way to organise the source code so that you don't have to rewrite from scratch every 2-3 years. Tautologically, then, it takes years before you know whether or not you succeeded.

While waiting for feedback, you may continue doing what you believe is right: micro-services versus monoliths, unit tests versus acceptance tests, etcetera. Once you discover that a particular way to work has problems, you may overcompensate by going too far in the other direction.

Once you discover the problem with that, you may begin to pull back towards the original position. Because feedback is delayed, the pendulum once more swings too far.

If we manage to learn from our mistakes, one could hope that the oscillations we currently observe will dampen until we reach equilibrium in the future. The industry is still so young, though, that the pendulum makes wide swings. Perhaps it'll take decades, or even centuries, before the oscillations die down.

The more cynical interpretation is that most software developers have only a few years of professional experience, and aren't taught the experiences of past generations.

In this light, the industry keeps regurgitating the same ideas over and over, never learning from past mistakes. "Those who cannot remember the past are condemned to repeat it."

The truth is probably a mix of both explanations.

Personal pendulum #

I've noticed a similar tendency in myself. I work in a particular way until I run into the limitations of that way. Then, after a time of frustration, I change direction.

As an example, I'm an autodidact programmer. In the beginning of my career, I'd just throw together code until I thought it worked, then launch the software with the debugger attached only to discover that it didn't, then go back and tweak some more, and so on.

Then I discovered test-driven development (TDD) and for years, it was the only way I could conceive of working. As my experience with TDD grew, I started to notice that it wasn't the panacea that I believed when it was all new. I wrote about that as early as late 2010. Knowing myself, I'd probably started to notice problems with TDD before that. I have cognitive biases just like the next person. You can lie to yourself for years before the problems become so blatant that you can no longer ignore them.

To be clear, I never lost faith in TDD, but I began to glimpse the contours of its limitations. It's good for many circumstances, and it's still my preferred technique for developing new production code, but I use other techniques for e.g. prototyping.

In 2020 I wrote a code base of middling complexity, and I noticed that I'd started to change my position on some other long-standing practices. As I've tried to explain, it may look like pendulum swings, but I hope that they are, at least, dampened swings. I intend to observe what happens so that I can learn from these new directions.

In the following, I'll be writing about these new approaches that I'm trying on, and so far like:

- Pendulum swing: internal by default

- Pendulum swing: sealed by default

- Pendulum swing: pure by default

Conclusion #

One year TDD is all the rage; a few years later, it's BDD. One year it's SOA, then it's ports and adapters (which implies consolidated deployment), then it's micro-services. One year, it's XML, then it's JSON, then it's YAML. One decade it's structured programming, then it's object-orientation, then it's functional programming, and so on ad nauseam.

Hopefully, this is just a symptom of growing pains. Hopefully, we'll learn from all these wild swings so that we don't have to rewrite applications when older developers leave.

The only course of action that I can see for myself here is to document how I work so that I, and others, can learn from those experiences.

When properties are easier than examples

Sometimes, describing the properties of a function is easier than coming up with examples.

Instead of the term test-driven development you may occasionally encounter the phrase example-driven development. The idea is that each test is an example of how the system under test ought to behave. As you add more tests, you add more examples.

I've noticed that beginners often find it difficult to come up with good examples. This is the reason I've developed the Devil's advocate technique. It's meant as a heuristic that may help you identify the next good example. It's particularly effective if you combine it with the Transformation Priority Premise (TPP) and equivalence partitioning.

I've noticed, however, that translating concrete examples into code is not always straightforward. In the following, I'll describe an experience I had in 2020 while developing an online restaurant reservation system.

The code shown here is part of the sample code base that accompanies my book Code That Fits in Your Head.

Problem outline #

I'm going to start by explaining what it was that I was trying to do. I wanted to present the maître d' (or other restaurant staff) with a schedule of a day's reservations. It should take the form of a list of time entries, one entry for every time one or more new reservations would start. I also wanted to list, for each entry, all reservations that were currently ongoing, or would soon start. Here's a simple example, represented as JSON:

    {
      "date": "2023-08-23",
      "entries": [
        {
          "time": "20:00:00",
          "reservations": [
            {
              "id": "af5feb35f62f475cb02df2a281948829",
              "at": "2023-08-23T20:00:00.0000000",
              "email": "crystalmeth@example.net",
              "name": "Crystal Metheney",
              "quantity": 3
            },
            {
              "id": "eae39bc5b3a7408eb2049373b2661e32",
              "at": "2023-08-23T20:30:00.0000000",
              "email": "x.benedict@example.org",
              "name": "Benedict Xavier",
              "quantity": 4
            }
          ]
        },
        {
          "time": "20:30:00",
          "reservations": [
            {
              "id": "af5feb35f62f475cb02df2a281948829",
              "at": "2023-08-23T20:00:00.0000000",
              "email": "crystalmeth@example.net",
              "name": "Crystal Metheney",
              "quantity": 3
            },
            {
              "id": "eae39bc5b3a7408eb2049373b2661e32",
              "at": "2023-08-23T20:30:00.0000000",
              "email": "x.benedict@example.org",
              "name": "Benedict Xavier",
              "quantity": 4
            }
          ]
        }
      ]
    }

To keep the example simple, there are only two reservations for that particular day: one for 20:00 and one for 20:30. Since something happens at both of these times, both times have an entry. My intent isn't necessarily that a user interface should show the data in this way, but I wanted to make the relevant data available so that a user interface could show it if it needed to.

The first entry for 20:00 shows both reservations. It shows the reservation for 20:00 for obvious reasons, and it shows the reservation for 20:30 to indicate that the staff can expect a party of four at 20:30. Since this restaurant runs with a single seating per evening, this effectively means that although the reservation hasn't started yet, it still reserves a table. This gives a user interface an opportunity to show the state of the restaurant at that time. The table for the 20:30 party isn't active yet, but it's effectively reserved.

For restaurants with shorter seating durations, the schedule should reflect that. If the seating duration is, say, two hours, and someone has a reservation for 20:00, you can sell that table to another party at 18:00, but not at 18:30. I wanted the functionality to take such things into account.
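The arithmetic behind that rule can be sketched as a check on half-open time intervals. This is only an illustration: SeatingMath and its Overlaps method are hypothetical names, and the sketch assumes that a seating occupies its table from its start time up to, but not including, its end time.

```csharp
using System;

public static class SeatingMath
{
    // Hypothetical helper: true when a seating that starts at 'candidate'
    // collides with one that starts at 'existing', both lasting
    // 'seatingDuration'. Intervals are treated as half-open, so a seating
    // that ends at 20:00 doesn't collide with one that starts at 20:00.
    public static bool Overlaps(
        DateTime existing,
        DateTime candidate,
        TimeSpan seatingDuration)
    {
        var existingEnd = existing + seatingDuration;
        var candidateEnd = candidate + seatingDuration;
        return existing < candidateEnd && candidate < existingEnd;
    }
}
```

With a two-hour seating duration and an existing reservation at 20:00, a candidate at 18:00 ends at 20:00 and doesn't overlap, while a candidate at 18:30 ends at 20:30 and does.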

The other entry in the above example is for 20:30. Again, both reservations are shown because one is ongoing (and takes up a table) and the other is just starting.

Desired API #

A major benefit of test-driven development (TDD) is that you get fast feedback on the API you intend for the system under test (SUT). You write a test against the intended API, and besides a pass-or-fail result, you also learn something about the interaction between client code and the SUT. You often learn that the original design you had in mind isn't going to work well once it meets the harsh realities of an actual programming language.

In TDD, you often have to revise the design multiple times during the process.

This doesn't mean that you can't have a plan. You can't write the initial test if you have no inkling of what the API should look like. For the schedule feature, I did have a plan. It turned out to hold, more or less. I wanted the API to be a method on a class called MaitreD, which already had these four fields and the constructors to support them:

    public TimeOfDay OpensAt { get; }
    public TimeOfDay LastSeating { get; }
    public TimeSpan SeatingDuration { get; }
    public IEnumerable<Table> Tables { get; }

I planned to implement the new feature as a new instance method on that class:

public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(IEnumerable<Reservation> reservations)

This plan turned out to hold in general, although I ultimately decided to simplify the return type by getting rid of the Occurrence container. It's going to be present throughout this article, however, so I need to briefly introduce it. I meant to use it as a generic container of anything, but with a time-stamp associated with the value:

    public sealed class Occurrence<T>
    {
        public Occurrence(DateTime at, T value)
        {
            At = at;
            Value = value;
        }

        public DateTime At { get; }
        public T Value { get; }

        public Occurrence<TResult> Select<TResult>(Func<T, TResult> selector)
        {
            if (selector is null)
                throw new ArgumentNullException(nameof(selector));

            return new Occurrence<TResult>(At, selector(Value));
        }

        public override bool Equals(object? obj)
        {
            return obj is Occurrence<T> occurrence &&
                   At == occurrence.At &&
                   EqualityComparer<T>.Default.Equals(Value, occurrence.Value);
        }

        public override int GetHashCode()
        {
            return HashCode.Combine(At, Value);
        }
    }

You may notice that due to the presence of the Select method this is a functor.
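As a small sketch of what that buys you, the following trimmed-down copy of Occurrence<T> (without the equality members) shows that Select maps the value while leaving the time-stamp alone, and that C# query syntax works with it. OccurrenceDemo and NameLength are hypothetical names used only for this illustration.

```csharp
using System;

public sealed class Occurrence<T>
{
    public Occurrence(DateTime at, T value)
    {
        At = at;
        Value = value;
    }

    public DateTime At { get; }
    public T Value { get; }

    public Occurrence<TResult> Select<TResult>(Func<T, TResult> selector)
    {
        if (selector is null)
            throw new ArgumentNullException(nameof(selector));

        return new Occurrence<TResult>(At, selector(Value));
    }
}

public static class OccurrenceDemo
{
    // The compiler translates this query expression to
    // o.Select(s => s.Length), so any type with a suitable Select
    // method can take part in query syntax.
    public static Occurrence<int> NameLength(Occurrence<string> o)
    {
        return from s in o select s.Length;
    }
}
```

Mapping an occurrence of "Crystal" at 20:00 yields an occurrence of 7 at 20:00. Mapping with the identity function, o.Select(x => x), should likewise give back an occurrence with the same At and Value, which is the first functor law.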

There's also a little extension method that we may later encounter:

public static Occurrence<T> At<T>(this T value, DateTime at) { return new Occurrence<T>(at, value); }

The plan, then, is to return a collection of occurrences, each of which may contain a collection of tables that are relevant to include at that time entry.

Examples #

When I embarked on developing this feature, I thought that it was a good fit for example-driven development. Since the input for Schedule requires a collection of Reservation objects, each of which comes with some data, I expected the test cases to become verbose. So I decided to bite the bullet right away and define test cases using xUnit.net's [ClassData] feature. I wrote this test:

    [Theory, ClassData(typeof(ScheduleTestCases))]
    public void Schedule(
        MaitreD sut,
        IEnumerable<Reservation> reservations,
        IEnumerable<Occurrence<Table[]>> expected)
    {
        var actual = sut.Schedule(reservations);
        Assert.Equal(
            expected.Select(o => o.Select(ts => ts.AsEnumerable())),
            actual);
    }

This is almost as simple as it can be: Call the method and verify that expected is equal to actual. The only slightly complicated piece is the nested projection of expected from IEnumerable<Occurrence<Table[]>> to IEnumerable<Occurrence<IEnumerable<Table>>>. There are ugly reasons for this that I don't want to discuss here, since they have no bearing on the actual topic, which is coming up with tests.

I also added the ScheduleTestCases class and a single test case:

    private class ScheduleTestCases :
        TheoryData<MaitreD, IEnumerable<Reservation>, IEnumerable<Occurrence<Table[]>>>
    {
        public ScheduleTestCases()
        {
            // No reservations, so no occurrences:
            Add(new MaitreD(
                    TimeSpan.FromHours(18),
                    TimeSpan.FromHours(21),
                    TimeSpan.FromHours(6),
                    Table.Communal(12)),
                Array.Empty<Reservation>(),
                Array.Empty<Occurrence<Table[]>>());
        }
    }

The simplest implementation that passed that test was this:

public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(IEnumerable<Reservation> reservations) { yield break; }

Okay, hardly rocket science, but this was just a test case to get started. So I added another one:

    private void SingleReservationCommunalTable()
    {
        var table = Table.Communal(12);
        var r = Some.Reservation;
        Add(new MaitreD(
                TimeSpan.FromHours(18),
                TimeSpan.FromHours(21),
                TimeSpan.FromHours(6),
                table),
            new[] { r },
            new[] { new[] { table.Reserve(r) }.At(r.At) });
    }

This test case adds a single reservation to a restaurant with a single communal table. The expected result is now a single occurrence with that reservation. In true TDD fashion, this new test case caused a test failure, and I now had to adjust the Schedule method to pass all tests:

    public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(
        IEnumerable<Reservation> reservations)
    {
        if (reservations.Any())
        {
            var r = reservations.First();
            yield return new[] { Table.Communal(12).Reserve(r) }.AsEnumerable().At(r.At);
        }
        yield break;
    }

You might have wanted to jump to something prettier right away, but I wanted to proceed according to the Devil's advocate technique. I was concerned that I was going to mess up the implementation if I moved too fast.

And that was when I basically hit a wall.

Property-based testing to the rescue #

I couldn't figure out how to proceed from there. Which test case ought to be the next? I wanted to follow the spirit of the TPP and pick a test case that would cause another incremental step in the right direction. The sheer number of possible combinations overwhelmed me, though. Should I adjust the reservations? The table configuration for the MaitreD class? The SeatingDuration?

It's possible that you'd be able to conjure up the perfect next test case, but I couldn't. I actually let it stew for a couple of days before I decided to give up on the example-driven approach. While I couldn't see a clear path forward with concrete examples, I had a vivid vision of how to proceed with property-based testing.

I left the above tests in place and instead added a new test class to my code base. Its only purpose: to test the Schedule method. The test method itself is only a composition of various data definitions and the actual test code:

    [Property]
    public Property Schedule()
    {
        return Prop.ForAll(
            GenReservation.ArrayOf().ToArbitrary(),
            ScheduleImp);
    }

This uses FsCheck 2.14.3, which is written in F# and composes better if you also write the tests in F#. In order to make things a little more palatable for C# developers, I decided to implement the building blocks for the property using methods and class properties.

The ScheduleImp method, for example, actually implements the test. This method runs a hundred times (FsCheck's default value) with randomly generated input values:

    private static void ScheduleImp(Reservation[] reservations)
    {
        // Create a table for each reservation, to ensure that all
        // reservations can be allotted a table.
        var tables = reservations.Select(r => Table.Standard(r.Quantity));
        var sut = new MaitreD(
            TimeSpan.FromHours(18),
            TimeSpan.FromHours(21),
            TimeSpan.FromHours(6),
            tables);

        var actual = sut.Schedule(reservations);

        Assert.Equal(
            reservations.Select(r => r.At).Distinct().Count(),
            actual.Count());
    }

The step you see in the first line of code is an example of a trick that I find myself doing often with property-based testing: instead of trying to find some good test values for a particular set of circumstances, I create a set of circumstances that fits the randomly generated test values. As the code comment explains, given a set of Reservation values, it creates a table that fits each reservation. In that way I ensure that all the reservations can be allocated a table.

I'll soon return to how those random Reservation values are generated, but first let's discuss the rest of the test body. Given a valid MaitreD object it calls the Schedule method. In the assertion phase, it so far only verifies that there are as many time entries in actual as there are distinct At values in reservations.

That's hardly a comprehensive description of the SUT, but it's a start. The following implementation passes both the new property, as well as the two examples above.

    public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(
        IEnumerable<Reservation> reservations)
    {
        return
            from r in reservations
            group Table.Communal(12).Reserve(r) by r.At into g
            select g.AsEnumerable().At(g.Key);
    }

I know that many C# programmers don't like query syntax, but I've always had a soft spot for it. I liked it, but wasn't sure that I'd be able to keep it up as I added more constraints to the property.

Generators #

Before we get to that, though, I promised to show you how the random reservations are generated. FsCheck has an API for that, and it's also query-syntax-friendly:

    private static Gen<Email> GenEmail =>
        from s in Arb.Default.NonWhiteSpaceString().Generator
        select new Email(s.Item);

    private static Gen<Name> GenName =>
        from s in Arb.Default.StringWithoutNullChars().Generator
        select new Name(s.Item);

    private static Gen<Reservation> GenReservation =>
        from id in Arb.Default.Guid().Generator
        from d in Arb.Default.DateTime().Generator
        from e in GenEmail
        from n in GenName
        from q in Arb.Default.PositiveInt().Generator
        select new Reservation(id, d, e, n, q.Item);

GenReservation is a generator of Reservation values (for a simplified explanation of how such a generator might work, see The Test Data Generator functor). It's composed from smaller generators, among these GenEmail and GenName. The rest of the generators are general-purpose generators defined by FsCheck.

If you refer back to the Schedule property above, you'll see that it uses GenReservation to produce an array generator. This is another general-purpose combinator provided by FsCheck. It turns any single-object generator into a generator of arrays containing such objects. Some of these arrays will be empty, which is often desirable, because it means that you'll automatically get coverage of that edge case.

Iterative development #

As I already discovered in 2015, some problems are just much better suited for property-based development than example-driven development. As I expected, this one turned out to be just such a problem. (Recently, Hillel Wayne identified a set of problems with no clear properties as rho problems. I wonder if we should pick another Greek letter for the type of problems that almost ooze properties. Sigma problems? Maybe we should just call them describable problems...)

For the next step, I didn't have to write a completely new property. I only had to add a new assertion, thereby strengthening the postconditions of the Schedule method:

Assert.Equal( actual.Select(o => o.At).OrderBy(d => d), actual.Select(o => o.At));

I added the above assertion to ScheduleImp after the previous assertion. It simply states that actual should be sorted in ascending order.
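The idea behind that assertion, that a sequence is sorted exactly when sorting it changes nothing, can be isolated in a few lines. This is a stand-alone sketch; SortedCheck and IsAscending are hypothetical names, not part of the code base.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class SortedCheck
{
    // True when the sequence is already in ascending order:
    // ordering it produces the same sequence, element by element.
    public static bool IsAscending(IEnumerable<DateTime> times)
    {
        var list = times.ToList();
        return list.OrderBy(t => t).SequenceEqual(list);
    }
}
```

An empty sequence trivially counts as sorted, which fits the degenerate case where there are no reservations and therefore no occurrences.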

To pass this new requirement I added an ordering clause to the implementation:

    public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(
        IEnumerable<Reservation> reservations)
    {
        return
            from r in reservations
            group Table.Communal(12).Reserve(r) by r.At into g
            orderby g.Key
            select g.AsEnumerable().At(g.Key);
    }

It passes all tests. Commit to Git. Next.

Table configuration #

If you consider the current implementation, there's much not to like. The worst offence, I think, is that it conjures a hard-coded communal table out of thin air. The method ought to use the table configuration passed to the MaitreD object. This seems like an obvious flaw to address. I therefore added this to the property:

        Assert.All(actual, o => AssertTables(tables, o.Value));
    }

    private static void AssertTables(
        IEnumerable<Table> expected,
        IEnumerable<Table> actual)
    {
        Assert.Equal(expected.Count(), actual.Count());
    }

It's just another assertion that uses the helper assertion also shown. As a first pass, it's not enough to cheat the Devil, but it sets me up for my next move. The plan is to assert that no tables are generated out of thin air. Currently, AssertTables only verifies that the actual count of tables in each occurrence matches the expected count.

The Devil easily foils that plan by generating a table for each reservation:

    public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(
        IEnumerable<Reservation> reservations)
    {
        var tables = reservations.Select(r => Table.Communal(12).Reserve(r));
        return
            from r in reservations
            group r by r.At into g
            orderby g.Key
            select tables.At(g.Key);
    }

This (unfortunately) passes all tests, so commit to Git and move on.

The next move I made was to add an assertion to AssertTables:

Assert.Equal( expected.Sum(t => t.Capacity), actual.Sum(t => t.Capacity));

This new requirement states that the total capacity of the actual tables should be equal to the total capacity of the allocated tables. It doesn't prevent the Devil from generating tables out of thin air, but it makes it harder. At least, it makes it so hard that I found it more reasonable to use the supplied table configuration:

    public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(
        IEnumerable<Reservation> reservations)
    {
        var tables = reservations.Zip(Tables, (r, t) => t.Reserve(r));
        return
            from r in reservations
            group r by r.At into g
            orderby g.Key
            select tables.At(g.Key);
    }

The implementation of Schedule still cheats because it 'knows' that no tests (except for the degenerate test where there are no reservations) have surplus tables in the configuration. It takes advantage of that knowledge to zip the two collections, which is really not appropriate.

Still, it seems that things are moving in the right direction.

Generated SUT #

Until now, ScheduleImp has been using a hard-coded sut. It's time to change that.

To keep my steps as small as possible, I decided to start with the SeatingDuration since it was currently not being used by the implementation. This meant that I could start randomising it without affecting the SUT. Since this was a code change of middling complexity in the test code, I found it most prudent to move in such a way that I didn't have to change the SUT as well.

I completely extracted the initialisation of the sut to a method argument of the ScheduleImp method, and adjusted it accordingly:

    private static void ScheduleImp(MaitreD sut, Reservation[] reservations)
    {
        var actual = sut.Schedule(reservations);

        Assert.Equal(
            reservations.Select(r => r.At).Distinct().Count(),
            actual.Count());
        Assert.Equal(
            actual.Select(o => o.At).OrderBy(d => d),
            actual.Select(o => o.At));
        Assert.All(actual, o => AssertTables(sut.Tables, o.Value));
    }

This meant that I also had to adjust the calling property:

    public Property Schedule()
    {
        return Prop.ForAll(
            GenReservation
                .ArrayOf()
                .SelectMany(rs => GenMaitreD(rs).Select(m => (m, rs)))
                .ToArbitrary(),
            t => ScheduleImp(t.m, t.rs));
    }

You've already seen GenReservation, but GenMaitreD is new:

    private static Gen<MaitreD> GenMaitreD(IEnumerable<Reservation> reservations)
    {
        // Create a table for each reservation, to ensure that all
        // reservations can be allotted a table.
        var tables = reservations.Select(r => Table.Standard(r.Quantity));
        return
            from seatingDuration in Gen.Choose(1, 6)
            select new MaitreD(
                TimeSpan.FromHours(18),
                TimeSpan.FromHours(21),
                TimeSpan.FromHours(seatingDuration),
                tables);
    }

The only difference from before is that the new MaitreD object is now initialised from within a generator expression. The duration is randomly picked from the range of one to six hours (those numbers are my arbitrary choices).

Notice that it's possible to base one generator on values randomly generated by another generator. Here, reservations are randomly produced by GenReservation and merged to a tuple with SelectMany, as you can see above.
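To see why that composition works, here is a toy generator written from scratch. It is not FsCheck's Gen<T> and makes no claim about how FsCheck is implemented: ToyGen<T>, Choose, and QuantitiesWithTables are hypothetical names, and the sketch simply treats a generator as a function from a random source to a value. SelectMany runs the first generator and feeds its result to a function that picks the second generator.

```csharp
using System;
using System.Linq;

// Toy generator, NOT FsCheck's Gen<T>: a function from Random to a value.
public sealed class ToyGen<T>
{
    private readonly Func<Random, T> run;

    public ToyGen(Func<Random, T> run) { this.run = run; }

    public T Generate(Random rnd) { return run(rnd); }

    // Map each generated value.
    public ToyGen<TResult> Select<TResult>(Func<T, TResult> selector)
    {
        return new ToyGen<TResult>(rnd => selector(run(rnd)));
    }

    // Generate a value, then use it to choose the next generator.
    public ToyGen<TResult> SelectMany<TResult>(Func<T, ToyGen<TResult>> selector)
    {
        return new ToyGen<TResult>(rnd => selector(run(rnd)).Generate(rnd));
    }

    // Arrays of zero to maxLength elements, each drawn from this generator.
    public ToyGen<T[]> ArrayOf(int maxLength)
    {
        return new ToyGen<T[]>(rnd =>
        {
            var length = rnd.Next(0, maxLength + 1);
            var result = new T[length];
            for (var i = 0; i < length; i++)
                result[i] = run(rnd);
            return result;
        });
    }
}

public static class ToyGenDemo
{
    // Integers between min and max, both inclusive.
    public static ToyGen<int> Choose(int min, int max)
    {
        return new ToyGen<int>(rnd => rnd.Next(min, max + 1));
    }

    // Party sizes come first. The table capacities depend on them: one
    // table per party, followed by zero to five surplus tables.
    public static ToyGen<(int[] capacities, int[] quantities)> QuantitiesWithTables
    {
        get
        {
            return Choose(1, 12).ArrayOf(10).SelectMany(quantities =>
                Choose(1, 12).ArrayOf(5).Select(extra =>
                    (capacities: quantities.Concat(extra).ToArray(),
                     quantities: quantities)));
        }
    }
}
```

Calling ToyGenDemo.QuantitiesWithTables.Generate(new Random()) returns a tuple in which every party size has a table of exactly that capacity, no matter which random values were drawn. That is the same shape as pairing reservations with a MaitreD built to fit them.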

This in itself didn't impact the SUT, but set up the code for my next move, which was to generate more tables than reservations, so that there'd be some free tables left after the schedule allocation. I first added a more complex table generator:

    /// <summary>
    /// Generate a table configuration that can at minimum accommodate all
    /// reservations.
    /// </summary>
    /// <param name="reservations">The reservations to accommodate</param>
    /// <returns>A generator of valid table configurations.</returns>
    private static Gen<IEnumerable<Table>> GenTables(IEnumerable<Reservation> reservations)
    {
        // Create a table for each reservation, to ensure that all
        // reservations can be allotted a table.
        var tables = reservations.Select(r => Table.Standard(r.Quantity));
        return
            from moreTables in Gen.Choose(1, 12).Select(Table.Standard).ArrayOf()
            from allTables in Gen.Shuffle(tables.Concat(moreTables))
            select allTables.AsEnumerable();
    }

This function first creates standard tables that exactly accommodate each reservation. It then generates an array of moreTables, each fitting between one and twelve people. It then mixes those tables together with the ones that fit a reservation and returns the sequence. Since moreTables can be empty, it's possible that the entire sequence of tables only just accommodates the reservations.

I then modified GenMaitreD to use GenTables:

    private static Gen<MaitreD> GenMaitreD(IEnumerable<Reservation> reservations)
    {
        return
            from seatingDuration in Gen.Choose(1, 6)
            from tables in GenTables(reservations)
            select new MaitreD(
                TimeSpan.FromHours(18),
                TimeSpan.FromHours(21),
                TimeSpan.FromHours(seatingDuration),
                tables);
    }

This provoked a change in the SUT:

    public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(IEnumerable<Reservation> reservations)
    {
        return
            from r in reservations
            group r by r.At into g
            orderby g.Key
            select Allocate(g).At(g.Key);
    }

The Schedule method now calls a private helper method called Allocate. This method already existed, since it supports the algorithm used to decide whether or not to accept a reservation request.

Rinse and repeat #

I hope that a pattern starts to emerge. I kept adding more and more randomisation to the data generators, while I also added more and more assertions to the property. Here's what it looked like after a few more iterations:

    private static void ScheduleImp(MaitreD sut, Reservation[] reservations)
    {
        var actual = sut.Schedule(reservations);

        Assert.Equal(
            reservations.Select(r => r.At).Distinct().Count(),
            actual.Count());
        Assert.Equal(
            actual.Select(o => o.At).OrderBy(d => d),
            actual.Select(o => o.At));
        Assert.All(actual, o => AssertTables(sut.Tables, o.Value));
        Assert.All(
            actual,
            o => AssertRelevance(reservations, sut.SeatingDuration, o));
    }

While AssertTables didn't change further, I added another helper assertion called AssertRelevance. I'm not going to show it here, but it checks that each occurrence only contains reservations that overlap that point in time, give or take the SeatingDuration.

I also made the reservation generator more sophisticated. If you consider the one defined above, one flaw is that it generates reservations at random dates. The chance that it'll generate two reservations that are actually adjacent in time is minimal. To counter this problem, I added a function that would return a generator of adjacent reservations:

    /// <summary>
    /// Generate an adjacent reservation with a 25% chance.
    /// </summary>
    /// <param name="reservation">The candidate reservation</param>
    /// <returns>
    /// A generator of an array of reservations. The generated array is
    /// either a singleton or a pair. In 75% of the cases, the input
    /// <paramref name="reservation" /> is returned as a singleton array.
    /// In 25% of the cases, the array contains two reservations: the input
    /// reservation as well as another reservation adjacent to it.
    /// </returns>
    private static Gen<Reservation[]> GenAdjacentReservations(Reservation reservation)
    {
        return
            from adjacent in GenReservationAdjacentTo(reservation)
            from useAdjacent in Gen.Frequency(
                new WeightAndValue<Gen<bool>>(3, Gen.Constant(false)),
                new WeightAndValue<Gen<bool>>(1, Gen.Constant(true)))
            let rs = useAdjacent ?
                new[] { reservation, adjacent } :
                new[] { reservation }
            select rs;
    }

    private static Gen<Reservation> GenReservationAdjacentTo(Reservation reservation)
    {
        return
            from minutes in Gen.Choose(-6 * 4, 6 * 4) // 4: quarters/h
            from r in GenReservation
            select r.WithDate(
                reservation.At + TimeSpan.FromMinutes(minutes));
    }

Now that I look at it again, I wonder whether I could have expressed this in a simpler way... It gets the job done, though.
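One possible simplification, offered only as a sketch and not as the code I actually wrote, is to let Gen.Frequency choose between two array generators directly, instead of first generating a Boolean flag. To keep the example self-contained, an integer stands in for a reservation; WeightedGenerators and GenWithNeighbour are hypothetical names.

```csharp
using FsCheck;

public static class WeightedGenerators
{
    // Hypothetical stand-in for the reservation types: an int plays the
    // role of a reservation, and a nearby int plays the adjacent one.
    public static Gen<int[]> GenWithNeighbour(int x)
    {
        var alone = Gen.Constant(new[] { x });
        var withNeighbour =
            Gen.Choose(-24, 24).Select(d => new[] { x, x + d });

        // Weights of 3 and 1 give the pair roughly a 25% chance.
        return Gen.Frequency(
            new WeightAndValue<Gen<int[]>>(3, alone),
            new WeightAndValue<Gen<int[]>>(1, withNeighbour));
    }
}
```

Unlike GenAdjacentReservations, this variation only runs the neighbour generator when the pair is actually selected. Whether that reads as simpler is a matter of taste.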

I then defined a generator that would create either entirely random reservations, or random reservations with some adjacent ones mixed in:

    private static Gen<Reservation[]> GenReservations
    {
        get
        {
            var normalArrayGen = GenReservation.ArrayOf();
            var adjacentReservationsGen = GenReservation.ArrayOf()
                .SelectMany(rs => Gen
                    .Sequence(rs.Select(GenAdjacentReservations))
                    .SelectMany(rss => Gen.Shuffle(
                        rss.SelectMany(rs => rs))));
            return Gen.OneOf(normalArrayGen, adjacentReservationsGen);
        }
    }

I changed the property to use this generator instead:

    [Property]
    public Property Schedule()
    {
        return Prop.ForAll(
            GenReservations
                .SelectMany(rs => GenMaitreD(rs).Select(m => (m, rs)))
                .ToArbitrary(),
            t => ScheduleImp(t.m, t.rs));
    }

I could have kept at it longer, but this turned out to be good enough to bring about the change in the SUT that I was looking for.

Implementation #

These incremental changes iteratively brought me closer and closer to an implementation that I think has the correct behaviour:

    public IEnumerable<Occurrence<IEnumerable<Table>>> Schedule(IEnumerable<Reservation> reservations)
    {
        return
            from r in reservations
            group r by r.At into g
            orderby g.Key
            let seating = new Seating(SeatingDuration, g.Key)
            let overlapping = reservations.Where(seating.Overlaps)
            select Allocate(overlapping).At(g.Key);
    }

Contrary to my initial expectations, I managed to keep the implementation to a single query expression all the way through.
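The Seating class isn't shown in this article. As a rough sketch of the kind of overlap check the query relies on, assume that a seating occupies the half-open interval from its start time until the seating duration has passed. The SeatingSketch and OverlapDemo types below are hypothetical stand-ins that work on start times only; they aren't the real implementation.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-in for the Seating type used above.
public sealed class SeatingSketch
{
    public SeatingSketch(TimeSpan seatingDuration, DateTime at)
    {
        Start = at;
        End = at + seatingDuration;
    }

    public DateTime Start { get; }
    public DateTime End { get; }

    // Two half-open intervals [Start, End) overlap when each one starts
    // before the other one ends.
    public bool Overlaps(SeatingSketch other)
    {
        return Start < other.End && other.Start < End;
    }
}

public static class OverlapDemo
{
    // Returns those start times whose seatings overlap a seating at 'at',
    // assuming that all seatings share the same duration.
    public static IReadOnlyList<DateTime> Overlapping(
        TimeSpan seatingDuration,
        DateTime at,
        IEnumerable<DateTime> others)
    {
        var seating = new SeatingSketch(seatingDuration, at);
        return others
            .Where(o => seating.Overlaps(new SeatingSketch(seatingDuration, o)))
            .ToList();
    }
}
```

With a two-hour seating duration and a seating at 18:00, a reservation at 16:30 overlaps, while one at 16:00 doesn't, because it ends exactly when the other begins.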

Conclusion #

This was a problem that I was stuck on for a couple of days. I could describe the properties I wanted the function to have, but I had a hard time coming up with a good set of examples for unit tests.

You may think that using property-based testing looks even more complicated, and I admit that it's far from trivial. The problem itself, however, isn't easy, and while the property-based approach may look daunting, it turned an intractable problem into a manageable one. That's a win in my book.

It's also worth noting that this would all have looked more elegant in F#. There's an object-oriented tax to be paid when using FsCheck from C#.

Accountability and free speech

A most likely naive suggestion.

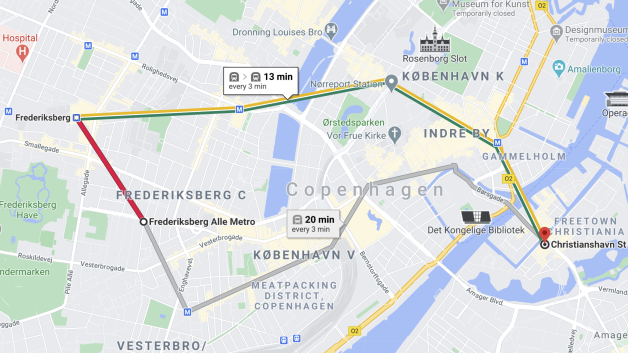

A few years ago, my mother (born 1940) went to Paris on vacation with her older sister. She was a little concerned about whether she'd be able to navigate the Métro, so she asked me to print out all sorts of maps in advance. I wasn't keen on another encounter with my nemesis, the printer, so I instead showed her how she could use Google Maps to get on-demand routes. Google Maps now includes timetables and line information for many metropolitan subway lines around the world. I've successfully used it to find my way around London, Paris, New York, Tokyo, Sydney, and Melbourne.

It even works in my backwater home-town:

It's rapidly turning into indispensable digital infrastructure, and that's beginning to trouble me.

You can still get paper maps of the various rapid transit systems around the world, but for how long?

Digital infrastructure #

If you're old enough, you may remember phone books. In the days of land lines and analogue phones, you'd look up a number and then dial it. As the internet became increasingly ubiquitous, phone directories went online as well. Paper phone books were seen as waste, and ultimately disappeared from everyday life.

You can think of paper phone books as a piece of infrastructure that's now gone. I don't miss them, but it's worth reflecting on what impact their disappearance has had. Today, if I need to find a phone number, Google is often the place I go. The physical infrastructure is gone, replaced by a digital infrastructure.

Google, in particular, now provides important infrastructure for modern society. Not only web search, but also maps, video sharing, translation, emails, and much more.

Other companies offer other services that verge on being infrastructure. Facebook is more than just updates to friends and families. Many organisations, including schools and universities, coordinate activities via Facebook, and the political discourse increasingly happens there and on Twitter.

We've come to rely on these 'free' services to a degree that resembles our reliance on physical infrastructure like the power grid, running water, roads, telephones, etcetera.

TANSTAAFL #

Robert A. Heinlein popularised the phrase There ain't no such thing as a free lunch (TANSTAAFL) in his excellent book The Moon is a Harsh Mistress. Indeed, the digital infrastructure isn't free.

Recent events have magnified some of the cost we're paying. In January, Facebook and Twitter suspended the then-president of the United States from the platforms. I've little sympathy for Donald Trump, who strikes me as an uncouth narcissist, but since I'm a Danish citizen living in Denmark, I don't think that I ought to have much of an opinion about American politics.

I do think, on the other hand, that the suspension sets an uncomfortable precedent.

Should we let a handful of tech billionaires control essential infrastructure? This time, the victim was someone half the world was glad to see go, but who's next? Might these companies suspend the accounts of politicians who work against them?

Never in the history of the world has decision power over so many people been so concentrated. I'll remind my readers that Facebook, Twitter, Google, etcetera are in worldwide use. The decisions of a handful of majority shareholders can now affect billions of people.

If you're suspended from one of these platforms, you may lose your ability to participate in school activities, to find your way through a foreign city, or to take part in the democratic discussion.

The case for regulation #

In this article, I mainly want to focus on the free-speech issue related mostly to Facebook and Twitter. These companies make money from ads. The longer you stay on the platform, the more ads they can show you, and they've discovered that nothing pulls you in like anger. These incentives are incredibly counter-productive for society.

Users are implicitly encouraged to create or spread anger, yet few are held accountable. One reason is that you can easily create new accounts without disclosing your real-world identity. This makes it difficult to hold users accountable for their utterances.

Instead of clear rules, users are suspended for inexplicable and arbitrary reasons. It seems that these are mostly the verdicts of algorithms, but as we've seen in the case of Donald Trump, a suspension can also be the result of an undemocratic but political decision.

Everyone can lose their opportunities for self-expression for arbitrary reasons. This is a free-speech issue.

Yes, free speech.

I'm well aware that Facebook and Twitter are private companies, and no-one has any right to an account on those platforms. That's why I started this essay by discussing how these services are turning into infrastructure.

More than a century ago, the telephone was new technology operated by private companies. I know the Danish history best, but it serves well as an example. KTAS, one of the world's first telephone companies, was a private company. Via mergers and acquisitions, it still is, but as it grew and became the backbone of the country's electronic communications network, the government introduced legislation. For decades, it and its sister companies were regional monopolies.

The government allowed the monopoly in exchange for various obligations. Even when the monopoly ended in 1996, the original monopoly companies inherited these legal obligations. For instance, every Danish citizen has the right to get a land-line installed, even if that installation by itself is a commercial loss for the company. This could be the case on certain remote islands or other rural areas. The Danish state compensates the telephone operator for this potential loss.

Many other utility companies run in a similar fashion. Some are semi-public, some are private, but common to water, electricity, garbage disposal, heating, and other operators is that they are regulated by government.

When a utility becomes important to the functioning of society, a sensible government steps in to regulate it to ensure the further smooth functioning of society.

I think that the services offered by Google, Facebook, and Twitter are fast approaching a level of significance that ought to trigger government regulation.

I don't say that lightly. I'm actually quite libertarian in my views, but I'm no anarcho-libertarian. I do acknowledge that a state offers essential services such as protection of property rights, a judicial system, and so on.

Accountability #

How should we regulate social media? I think we should start by exchanging accountability for the right to post.

Let's take another step back for a moment. For generations, it's been possible to send a letter to the editor of a regular newspaper. If the editor deems it worthy for publication, it'll be printed in the next issue. You don't have any right to get your letter published; this happens at the discretion of the editor.

Why does it work that way? It works that way because the editor is accountable for what's printed in the paper. Ultimately, he or she can go to jail for what's printed.

Freedom of speech is more complicated than it looks at first glance. For instance, censorship in Denmark was abolished with the 1849 constitution. This doesn't mean that you can freely say whatever you'd like; it only means that government has no right to prevent you from saying or writing something. You can still be prosecuted after the fact if you say or write something libellous, or if you incite violence, or if you disclose secrets that you've agreed to keep, etcetera.

This is accountability. It works when the person making the utterance is known and within reach of the law.

Notice, particularly, that an editor-in-chief is accountable for a newspaper's contents. Why isn't Facebook or Twitter accountable for content?