ploeh blog danish software design

Asynchronous Injection

How to combine asynchronous programming with Dependency Injection without leaky abstractions.

C# has decent support for asynchronous programming, but it ultimately leads to leaky abstractions. This is often conspicuous when combined with Dependency Injection (DI). This leads to frequently asked questions around the combination of DI and asynchronous programming. This article outlines the problem and suggests an alternative.

The code base supporting this article is available on GitHub.

A synchronous example

In this article, you'll see various stages of a small sample code base that pretends to implement the server-side behaviour of an on-line restaurant reservation system (my favourite example scenario). In the first stage, the code uses DI, but no asynchronous I/O.

At the boundary of the application, a Post method receives a Reservation object:

    public class ReservationsController : ControllerBase
    {
        public ReservationsController(IMaîtreD maîtreD)
        {
            MaîtreD = maîtreD;
        }

        public IMaîtreD MaîtreD { get; }

        public IActionResult Post(Reservation reservation)
        {
            int? id = MaîtreD.TryAccept(reservation);
            if (id == null)
                return InternalServerError("Table unavailable");
            return Ok(id.Value);
        }
    }

The Reservation object is just a simple bundle of properties:

    public class Reservation
    {
        public DateTimeOffset Date { get; set; }
        public string Email { get; set; }
        public string Name { get; set; }
        public int Quantity { get; set; }
        public bool IsAccepted { get; set; }
    }

In a production code base, I'd favour a separation of DTOs and domain objects with proper encapsulation, but in order to keep the code example simple, here the two roles are combined.

The Post method simply delegates most work to an injected IMaîtreD object, and translates the return value to an HTTP response.

The code example is overly simplistic, to the point where you may wonder what is the point of DI, since it seems that the Post method doesn't perform any work itself. A slightly more realistic example includes some input validation and mapping between layers.

The IMaîtreD implementation is this:

    public class MaîtreD : IMaîtreD
    {
        public MaîtreD(int capacity, IReservationsRepository repository)
        {
            Capacity = capacity;
            Repository = repository;
        }

        public int Capacity { get; }
        public IReservationsRepository Repository { get; }

        public int? TryAccept(Reservation reservation)
        {
            var reservations = Repository.ReadReservations(reservation.Date);
            int reservedSeats = reservations.Sum(r => r.Quantity);

            if (Capacity < reservedSeats + reservation.Quantity)
                return null;

            reservation.IsAccepted = true;
            return Repository.Create(reservation);
        }
    }

The protocol for the TryAccept method is that it returns the reservation ID if it accepts the reservation. If the restaurant has too little remaining Capacity for the requested date, it instead returns null. Regular readers of this blog will know that I'm no fan of null, but this keeps the example realistic. I'm also no fan of state mutation, but the example does that as well, by setting IsAccepted to true.

Introducing asynchrony

The above example is entirely synchronous, but perhaps you wish to introduce some asynchrony. For example, the IReservationsRepository implies synchrony:

    public interface IReservationsRepository
    {
        Reservation[] ReadReservations(DateTimeOffset date);
        int Create(Reservation reservation);
    }

In reality, though, you know that the implementation of this interface queries and writes to a relational database. Perhaps making this communication asynchronous could improve application performance. It's worth a try, at least.

How do you make something asynchronous in C#? You change the return type of the methods in question. Therefore, you have to change the IReservationsRepository interface:

    public interface IReservationsRepository
    {
        Task<Reservation[]> ReadReservations(DateTimeOffset date);
        Task<int> Create(Reservation reservation);
    }

The Repository methods now return Tasks. This is the first leaky abstraction. From the Dependency Inversion Principle it follows that

"clients [...] own the abstract interfaces"

The MaîtreD class is the client of the IReservationsRepository interface, which should be designed to support the needs of that class. MaîtreD doesn't need IReservationsRepository to be asynchronous.

The change of the interface has nothing to do with what MaîtreD needs, but rather with a particular implementation of the IReservationsRepository interface. Because this implementation queries and writes to a relational database, this implementation detail leaks into the interface definition. It is, therefore, a leaky abstraction.

On a more practical level, accommodating the change is easily done. Just add async and await keywords in appropriate places:

    public async Task<int?> TryAccept(Reservation reservation)
    {
        var reservations = await Repository.ReadReservations(reservation.Date);
        int reservedSeats = reservations.Sum(r => r.Quantity);

        if (Capacity < reservedSeats + reservation.Quantity)
            return null;

        reservation.IsAccepted = true;
        return await Repository.Create(reservation);
    }

In order to compile, however, you also have to fix the IMaîtreD interface:

    public interface IMaîtreD
    {
        Task<int?> TryAccept(Reservation reservation);
    }

This is the second leaky abstraction, and it's worse than the first. Perhaps you could successfully argue that it was conceptually acceptable to model IReservationsRepository as asynchronous. After all, a Repository conceptually represents a data store, and these are generally out-of-process resources that require I/O.

The IMaîtreD interface, on the other hand, is a domain object. It models how business is done, not how data should be accessed. Why should business logic be asynchronous?

It's hardly news that async and await are infectious. Once you introduce Tasks, it's async all the way!

That doesn't mean that asynchrony isn't one big leaky abstraction. It is.

You've probably already realised what this means in the context of the little example. You must also patch the Post method:

    public async Task<IActionResult> Post(Reservation reservation)
    {
        int? id = await MaîtreD.TryAccept(reservation);
        if (id == null)
            return InternalServerError("Table unavailable");
        return Ok(id.Value);
    }

Pragmatically, I'd be ready to accept the argument that this isn't a big deal. After all, you just replace all return values with Tasks, and add async and await keywords where they need to go. This hardly impacts the maintainability of a code base.

In C#, I'd be inclined to just acknowledge that, hey, there's a leaky abstraction. Moving on...

On the other hand, sometimes people imply that it has to be like this. That there is no other way.

Falsifiable claims like that often get my attention. Oh, really?!

Move impure interactions to the boundary of the system

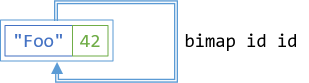

We can pretend that Task<T> forms a functor. It's also a monad. Monads are those incredibly useful programming abstractions that have been propagating from their origin in statically typed functional programming languages to more mainstream languages like C#.

In functional programming, impure interactions happen at the boundary of the system. Taking inspiration from functional programming, you can move the impure interactions to the boundary of the system.

In the interest of keeping the example simple, I'll only move the impure operations one level out: from MaîtreD to ReservationsController. The approach can be generalised, although you may have to look into how to handle pure interactions.

Where are the impure interactions in MaîtreD? They are in the two interactions with IReservationsRepository. The ReadReservations method is non-deterministic, because the same input value can return different results, depending on the state of the database when you call it. The Create method causes a side effect to happen, because it creates a row in the database. This is one way in which the state of the database could change, which makes ReadReservations non-deterministic. Additionally, Create also violates Command Query Separation (CQS) by returning the ID of the row it creates. This, again, is non-deterministic, because the same input value will produce a new return value every time the method is called. (Incidentally, you should design Create methods so that they don't violate CQS.)

Move reservations to a method argument

The first refactoring is the easiest. Move the ReadReservations method call to the application boundary. In the above state of the code, the TryAccept method unconditionally calls Repository.ReadReservations to populate the reservations variable. Instead of doing this from within TryAccept, just pass reservations as a method argument:

    public async Task<int?> TryAccept(
        Reservation[] reservations,
        Reservation reservation)
    {
        int reservedSeats = reservations.Sum(r => r.Quantity);

        if (Capacity < reservedSeats + reservation.Quantity)
            return null;

        reservation.IsAccepted = true;
        return await Repository.Create(reservation);
    }

This no longer compiles until you also change the IMaîtreD interface:

    public interface IMaîtreD
    {
        Task<int?> TryAccept(Reservation[] reservations, Reservation reservation);
    }

You probably think that this is a much worse leaky abstraction than returning a Task. I'd be inclined to agree, but trust me: ultimately, this will matter not at all.

When you move an impure operation outwards, it means that when you remove it from one place, you must add it to another. In this case, you'll have to query the Repository from the ReservationsController, which also means that you need to add the Repository as a dependency there:

    public class ReservationsController : ControllerBase
    {
        public ReservationsController(
            IMaîtreD maîtreD,
            IReservationsRepository repository)
        {
            MaîtreD = maîtreD;
            Repository = repository;
        }

        public IMaîtreD MaîtreD { get; }
        public IReservationsRepository Repository { get; }

        public async Task<IActionResult> Post(Reservation reservation)
        {
            var reservations = await Repository.ReadReservations(reservation.Date);
            int? id = await MaîtreD.TryAccept(reservations, reservation);
            if (id == null)
                return InternalServerError("Table unavailable");
            return Ok(id.Value);
        }
    }

This is a refactoring in the true sense of the word. It just reorganises the code without changing the overall behaviour of the system. Now the Post method has to query the Repository before it can delegate the business decision to MaîtreD.

Separate decision from effect

As far as I can tell, the main reason to use DI is because some impure interactions are conditional. This is also the case for the TryAccept method. Only if there's sufficient remaining capacity does it call Repository.Create. If it detects that there's too little remaining capacity, it immediately returns null and doesn't call Repository.Create.

In object-oriented code, DI is the most common way to decouple decisions from effects. Imperative code reaches a decision and calls a method on an object based on that decision. The effect of calling the method can vary because of polymorphism.

In functional programming, you typically use a functor like Maybe or Either to separate decisions from effects. You can do the same here.

The protocol of the TryAccept method already communicates the decision reached by the method. An int value is the reservation ID; this implies that the reservation was accepted. On the other hand, null indicates that the reservation was declined.

You can use the same sort of protocol, but instead of returning a Nullable<int>, you can return a Maybe<Reservation>:

    public async Task<Maybe<Reservation>> TryAccept(
        Reservation[] reservations,
        Reservation reservation)
    {
        int reservedSeats = reservations.Sum(r => r.Quantity);

        if (Capacity < reservedSeats + reservation.Quantity)
            return Maybe.Empty<Reservation>();

        reservation.IsAccepted = true;
        return reservation.ToMaybe();
    }

This completely decouples the decision from the effect. By returning Maybe<Reservation>, the TryAccept method communicates the decision it made, while leaving further processing entirely up to the caller.

In this case, the caller is the Post method, which can now compose the result of invoking TryAccept with Repository.Create:

    public async Task<IActionResult> Post(Reservation reservation)
    {
        var reservations = await Repository.ReadReservations(reservation.Date);
        Maybe<Reservation> m = await MaîtreD.TryAccept(reservations, reservation);
        return await m
            .Select(async r => await Repository.Create(r))
            .Match(
                nothing: Task.FromResult(InternalServerError("Table unavailable")),
                just: async id => Ok(await id));
    }

Notice that the Post method never attempts to extract 'the value' from m. Instead, it injects the desired behaviour (Repository.Create) into the monad. The result of calling Select with an asynchronous lambda expression like that is a Maybe<Task<int>>, which is an awkward combination. You can fix that later.

The Match method is the catamorphism for Maybe. It looks exactly like the Match method on the Church-encoded Maybe. It handles both the case when m is empty, and the case when m is populated. In both cases, it returns a Task<IActionResult>.
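The article doesn't show the Maybe type itself. As a rough sketch only, assuming a simple class-based design (the private fields, the constructors, and the null check are assumptions, not the code from the accompanying repository), Select and Match could look like this:

```csharp
using System;

// Hypothetical, minimal Maybe<T>; not the implementation from the article's repository.
public sealed class Maybe<T>
{
    private readonly bool hasItem;
    private readonly T item;

    // Creates an empty Maybe.
    public Maybe()
    {
        hasItem = false;
    }

    // Creates a populated Maybe.
    public Maybe(T item)
    {
        if (item == null)
            throw new ArgumentNullException(nameof(item));

        hasItem = true;
        this.item = item;
    }

    // Functor map: the selector only runs when a value is present.
    public Maybe<TResult> Select<TResult>(Func<T, TResult> selector)
    {
        return hasItem
            ? new Maybe<TResult>(selector(item))
            : new Maybe<TResult>();
    }

    // Catamorphism: reduces both cases to a single TResult.
    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just)
    {
        return hasItem ? just(item) : nothing;
    }
}

public static class Maybe
{
    public static Maybe<T> Empty<T>()
    {
        return new Maybe<T>();
    }

    public static Maybe<T> ToMaybe<T>(this T source)
    {
        return new Maybe<T>(source);
    }
}
```

With that in place, 42.ToMaybe().Select(i => i + 1).Match("none", i => i.ToString()) runs the lambda and yields "43", whereas the same chain on Maybe.Empty<int>() never runs it and yields "none". The caller never asks whether a value is present.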

Synchronous domain logic

At this point, you have a compiler warning in your code:

Warning CS1998 This async method lacks 'await' operators and will run synchronously. Consider using the 'await' operator to await non-blocking API calls, or 'await Task.Run(...)' to do CPU-bound work on a background thread.

Indeed, the current incarnation of TryAccept is synchronous, so remove the async keyword and change the return type:

    public Maybe<Reservation> TryAccept(
        Reservation[] reservations,
        Reservation reservation)
    {
        int reservedSeats = reservations.Sum(r => r.Quantity);

        if (Capacity < reservedSeats + reservation.Quantity)
            return Maybe.Empty<Reservation>();

        reservation.IsAccepted = true;
        return reservation.ToMaybe();
    }

This requires a minimal change to the Post method: it no longer has to await TryAccept:

    public async Task<IActionResult> Post(Reservation reservation)
    {
        var reservations = await Repository.ReadReservations(reservation.Date);
        Maybe<Reservation> m = MaîtreD.TryAccept(reservations, reservation);
        return await m
            .Select(async r => await Repository.Create(r))
            .Match(
                nothing: Task.FromResult(InternalServerError("Table unavailable")),
                just: async id => Ok(await id));
    }

Apart from that, this version of Post is the same as the one above.

Notice that at this point, the domain logic (TryAccept) is no longer asynchronous. The leaky abstraction is gone.

Redundant abstraction

The overall work is done, but there's some tidying up remaining. If you review the TryAccept method, you'll notice that it no longer uses the injected Repository. You might as well simplify the class by removing the dependency:

    public class MaîtreD : IMaîtreD
    {
        public MaîtreD(int capacity)
        {
            Capacity = capacity;
        }

        public int Capacity { get; }

        public Maybe<Reservation> TryAccept(
            Reservation[] reservations,
            Reservation reservation)
        {
            int reservedSeats = reservations.Sum(r => r.Quantity);

            if (Capacity < reservedSeats + reservation.Quantity)
                return Maybe.Empty<Reservation>();

            reservation.IsAccepted = true;
            return reservation.ToMaybe();
        }
    }

The TryAccept method is now deterministic. The same input will always return the same output. This is not yet a pure function, because it still has a single side effect: it mutates the state of reservation by setting IsAccepted to true. You could, however, without too much trouble refactor Reservation to an immutable Value Object.

This would enable you to write the last part of the TryAccept method like this:

return reservation.Accept().ToMaybe();
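The article doesn't show the immutable Reservation. As one possible sketch, the property names below follow the earlier listing, while the constructor shapes and the body of Accept are assumptions. Structural equality members, which a full Value Object would also define, are omitted for brevity:

```csharp
using System;

// Hypothetical immutable variant of Reservation. Only the property names and
// the Accept method name come from the article; the rest is an assumption.
public sealed class Reservation
{
    public Reservation(DateTimeOffset date, string email, string name, int quantity)
        : this(date, email, name, quantity, false)
    {
    }

    private Reservation(
        DateTimeOffset date, string email, string name, int quantity, bool isAccepted)
    {
        Date = date;
        Email = email;
        Name = name;
        Quantity = quantity;
        IsAccepted = isAccepted;
    }

    public DateTimeOffset Date { get; }
    public string Email { get; }
    public string Name { get; }
    public int Quantity { get; }
    public bool IsAccepted { get; }

    // Returns a copy marked as accepted; the original instance is left untouched.
    public Reservation Accept()
    {
        return new Reservation(Date, Email, Name, Quantity, true);
    }
}
```

Because Accept returns a new object instead of mutating its receiver, a TryAccept method that ends with such a call would no longer have any side effect.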

In any case, the method is close enough to pure that it's testable. The interactions between TryAccept and any client code (including unit tests) are completely controllable and observable by the client.
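To illustrate that testability claim, here is a sketch that exercises TryAccept with plain values and no test doubles. The Maybe type is a pared-down stand-in, the MaîtreD class drops the IMaîtreD interface to stay self-contained, and the MaîtreDChecks class with its method names is hypothetical. A real test suite would use a unit testing framework instead:

```csharp
using System;
using System.Linq;

// Pared-down Maybe<T>; a hypothetical stand-in for the article's type.
public sealed class Maybe<T>
{
    private readonly bool hasItem;
    private readonly T item;

    public Maybe() { }

    public Maybe(T item)
    {
        hasItem = true;
        this.item = item;
    }

    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just)
    {
        return hasItem ? just(item) : nothing;
    }
}

public static class Maybe
{
    public static Maybe<T> Empty<T>() { return new Maybe<T>(); }
    public static Maybe<T> ToMaybe<T>(this T source) { return new Maybe<T>(source); }
}

public class Reservation
{
    public int Quantity { get; set; }
    public bool IsAccepted { get; set; }
}

public class MaîtreD
{
    public MaîtreD(int capacity) { Capacity = capacity; }

    public int Capacity { get; }

    public Maybe<Reservation> TryAccept(Reservation[] reservations, Reservation reservation)
    {
        int reservedSeats = reservations.Sum(r => r.Quantity);
        if (Capacity < reservedSeats + reservation.Quantity)
            return Maybe.Empty<Reservation>();

        reservation.IsAccepted = true;
        return reservation.ToMaybe();
    }
}

// Hypothetical checks: all input is passed as arguments, all output is the return value.
public static class MaîtreDChecks
{
    public static bool AcceptsWhenCapacityIsSufficient()
    {
        var sut = new MaîtreD(capacity: 10);
        var existing = new[] { new Reservation { Quantity = 4 } };
        var actual = sut.TryAccept(existing, new Reservation { Quantity = 6 });
        return actual.Match(false, r => r.IsAccepted);
    }

    public static bool RejectsWhenCapacityIsExceeded()
    {
        var sut = new MaîtreD(capacity: 10);
        var existing = new[] { new Reservation { Quantity = 4 } };
        var actual = sut.TryAccept(existing, new Reservation { Quantity = 7 });
        return actual.Match(true, _ => false);
    }
}
```

Neither check needs a Stub or Mock of a repository, because the existing reservations arrive as an ordinary array.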

This means that there's no reason to Stub it out. You might as well just use the function directly in the Post method:

    public class ReservationsController : ControllerBase
    {
        public ReservationsController(
            int capacity,
            IReservationsRepository repository)
        {
            Capacity = capacity;
            Repository = repository;
        }

        public int Capacity { get; }
        public IReservationsRepository Repository { get; }

        public async Task<IActionResult> Post(Reservation reservation)
        {
            var reservations = await Repository.ReadReservations(reservation.Date);
            Maybe<Reservation> m =
                new MaîtreD(Capacity).TryAccept(reservations, reservation);
            return await m
                .Select(async r => await Repository.Create(r))
                .Match(
                    nothing: Task.FromResult(InternalServerError("Table unavailable")),
                    just: async id => Ok(await id));
        }
    }

Notice that ReservationsController no longer has an IMaîtreD dependency.

All this time, whenever you made a change to the TryAccept method signature, you also had to fix the IMaîtreD interface to make the code compile. If you worried that all of these changes were leaky abstractions, you'll be happy to learn that in the end, it doesn't even matter. No code uses that interface, so you can delete it.

Grooming

The MaîtreD class looks fine, but the Post method could use some grooming. I'm not going to tire you with all the small refactoring steps. You can follow them in the GitHub repository if you're interested. Eventually, you could arrive at an implementation like this:

    public class ReservationsController : ControllerBase
    {
        public ReservationsController(
            int capacity,
            IReservationsRepository repository)
        {
            Capacity = capacity;
            Repository = repository;
            maîtreD = new MaîtreD(capacity);
        }

        public int Capacity { get; }
        public IReservationsRepository Repository { get; }

        private readonly MaîtreD maîtreD;

        public async Task<IActionResult> Post(Reservation reservation)
        {
            return await Repository.ReadReservations(reservation.Date)
                .Select(rs => maîtreD.TryAccept(rs, reservation))
                .SelectMany(m => m.Traverse(Repository.Create))
                .Match(InternalServerError("Table unavailable"), Ok);
        }
    }

Now the Post method is just a single, composed asynchronous pipeline. Is it a coincidence that this is possible?

This is no coincidence. This top-level method executes in the 'Task monad', and a monad is, by definition, composable. You can chain operations together, and they don't all have to be asynchronous. Specifically, maîtreD.TryAccept is a synchronous piece of business logic. It's unaware that it's being injected into an asynchronous context. This type of design would be completely run of the mill in F# with its asynchronous workflows.
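The pipeline relies on Select, SelectMany, Traverse, and Match helpers that this article doesn't list. The following is only a sketch of how such helpers could be written, not the code from the GitHub repository. The AsyncPipeline and PipelineDemo classes, the pared-down Maybe type, and the integer and string stand-ins for reservations and HTTP results are all assumptions made to keep the example self-contained:

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;

// Pared-down Maybe<T>; a hypothetical stand-in for the article's type.
public sealed class Maybe<T>
{
    private readonly bool hasItem;
    private readonly T item;

    public Maybe() { }

    public Maybe(T item)
    {
        hasItem = true;
        this.item = item;
    }

    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just)
    {
        return hasItem ? just(item) : nothing;
    }
}

public static class AsyncPipeline
{
    // Functor map for Task<T>: injects a synchronous function into the Task.
    public static async Task<TResult> Select<T, TResult>(
        this Task<T> source, Func<T, TResult> selector)
    {
        return selector(await source);
    }

    // Monadic bind for Task<T>: flattens Task<Task<TResult>> to Task<TResult>.
    public static async Task<TResult> SelectMany<T, TResult>(
        this Task<T> source, Func<T, Task<TResult>> selector)
    {
        return await selector(await source);
    }

    // Runs an asynchronous function inside a Maybe and turns the nesting
    // inside out, producing Task<Maybe<TResult>> rather than Maybe<Task<TResult>>.
    public static Task<Maybe<TResult>> Traverse<T, TResult>(
        this Maybe<T> source, Func<T, Task<TResult>> selector)
    {
        return source.Match<Task<Maybe<TResult>>>(
            nothing: Task.FromResult(new Maybe<TResult>()),
            just: async x => new Maybe<TResult>(await selector(x)));
    }

    // Match lifted to Task<Maybe<T>>.
    public static async Task<TResult> Match<T, TResult>(
        this Task<Maybe<T>> source, TResult nothing, Func<T, TResult> just)
    {
        return (await source).Match(nothing, just);
    }
}

public static class PipelineDemo
{
    // Stand-ins for Repository.ReadReservations and Repository.Create.
    private static Task<int[]> ReadQuantities() => Task.FromResult(new[] { 4, 3 });
    private static Task<int> Create(int quantity) => Task.FromResult(1); // pretend row ID

    // Synchronous decision logic, unaware of any Task.
    private static Maybe<int> TryAccept(int capacity, int[] reserved, int quantity)
    {
        if (capacity < reserved.Sum() + quantity)
            return new Maybe<int>();
        return new Maybe<int>(quantity);
    }

    public static Task<string> Post(int capacity, int quantity)
    {
        return ReadQuantities()
            .Select(rs => TryAccept(capacity, rs, quantity))
            .SelectMany(m => m.Traverse(Create))
            .Match("Table unavailable", id => "Created " + id);
    }
}
```

In this sketch, awaiting PipelineDemo.Post(10, 3) produces "Created 1", because seven seats are already taken and three more fit exactly, while PipelineDemo.Post(10, 4) produces "Table unavailable". Only the two stand-in repository functions return Tasks; TryAccept stays synchronous.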

Summary

Dependency Injection frequently involves I/O-bound operations. Those typically get hidden behind interfaces so that they can be mocked or stubbed. You may want to access those I/O-bound resources asynchronously, but with C#'s support for asynchronous programming, you'll have to make your abstractions asynchronous.

When you make the leaf nodes in your call graph asynchronous, that design change ripples through the entire code base, forcing you to be async all the way. One result of this is that the domain model must also accommodate asynchrony, although this is rarely required by the logic it implements. These concessions to asynchrony are leaky abstractions.

Pragmatically, it's hardly a big problem. You can use the async and await keywords to deal with the asynchrony, and it's unlikely to, in itself, cause a problem with maintenance.

In functional programming, monads can address asynchrony without introducing sweeping leaky abstractions. Instead of making DI asynchronous, you can inject desired behaviour into an asynchronous context.

Behaviour Injection, not Dependency Injection.

How to get the value out of the monad

How do I get the value out of my monad? You don't. You inject the desired behaviour into the monad.

A frequently asked question about monads can be paraphrased as: How do I get the value out of my monad? This seems to particularly come up when the monad in question is Haskell's IO monad, from which you can't extract the value. This is by design, but then beginners are often stumped on how to write the code they have in mind.

You can encounter variations of the question, or at least the underlying conceptual misunderstanding, with other monads. This seems to be particularly prevalent when object-oriented or procedural programmers start working with Maybe or Either. People really want to extract 'the value' from those monads as well, despite the lack of guarantee that there will be a value.

So how do you extract the value from a monad?

The answer isn't 'use a comonad', although it could be, for a limited set of monads. Rather, the answer is mu.

Unit containers

Before I attempt to address how to work with monads, I think it's worthwhile to speculate on what misleads people into thinking that it makes sense to even contemplate extracting 'the value' from a monad. After all, you rarely encounter the question: How do I get the value out of my collection?

Various collections form monads, but everyone intuitively understands that there isn't a single value in a collection. Collections could be empty, or contain many elements. Collections could easily be the most ordinary monad. Programmers deal with collections all the time.

Yet, I think that most programmers don't realise that collections form monads. The reason for this could be that mainstream languages rarely make this relationship explicit. Even C# query syntax, which is nothing but monads in disguise, hides this fact.
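To make the 'monads in disguise' remark concrete, here is a small sketch (the QuerySyntaxDemo class and its method names are made up for illustration). A query expression with two from clauses is translated by the C# compiler into a call to SelectMany, which is monadic bind for IEnumerable<T>:

```csharp
using System.Collections.Generic;
using System.Linq;

public static class QuerySyntaxDemo
{
    // Query syntax: nothing here mentions SelectMany.
    public static IEnumerable<string> WithQuerySyntax(int[] xs, string[] ys)
    {
        return from x in xs
               from y in ys
               select x + y;
    }

    // The method call that the query expression above is translated into.
    public static IEnumerable<string> WithSelectMany(int[] xs, string[] ys)
    {
        return xs.SelectMany(x => ys, (x, y) => x + y);
    }
}
```

Both methods return the same sequence. For the inputs { 1, 2 } and { "a", "b" }, that sequence is "1a", "1b", "2a", "2b".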

What happens, I think, is that when programmers first come across monads, they often encounter one of a few unit containers.

What's a unit container? I admit that the word is one I made up, because I couldn't detect existing terminology on this topic. The idea, though, is that it's a functor guaranteed to contain exactly one value. Since functors are containers, I call such types unit containers. Examples include Identity, Lazy, and asynchronous functors.

You can extract 'the value' from most unit containers (with IO being the notable exception to the rule). Trivially, you can get the item contained in an Identity container:

    > Identity<string> x = new Identity<string>("bar");
    > x.Item
    "bar"

Likewise, you can extract the value from lazy and asynchronous values:

    > Lazy<int> x = new Lazy<int>(() => 42);
    > x.Value
    42
    > Task<int> y = Task.Run(() => 1337);
    > await y
    1337

My theory, then, is that some programmers are introduced to the concept of monads via lazy or asynchronous computations, and that this could establish incorrect mental models.

Semi-containers

There's another category of monad that we could call semi-containers (again, I'm open to suggestions for a better name). These are data containers that contain either a single value, or no value. In this set of monads, we find Nullable<T>, Maybe, and Either.

Unfortunately, Maybe implementations often come with an API that enables you to ask a Maybe object if it's populated or empty, and a way to extract the value from the Maybe container. This misleads many programmers to write code like this:

    Maybe<int> id = // ...
    if (id.HasItem)
        return new Customer(id.Item);
    else
        throw new DontKnowWhatToDoException();

Granted, in many cases, people do something more reasonable than throwing a useless exception. In a specific context, it may be clear what to do with an empty Maybe object, but there are problems with this Tester-Doer approach:

- It doesn't compose.

- There's no systematic technique to apply. You always need to handle empty objects in a context-specific way.

If you throw an exception when the object is empty, you'll likely have to deal with that exception further up in the call stack.

If you return a magic value (like returning -1 when a natural number is expected), you again force all callers to check for that magic number.

If you set a flag that indicates that an object was empty, again, you put the burden on callers to check for the flag.

This leads to defensive coding, which, at best, makes the code unreadable.

Behaviour Injection

Interestingly, programmers rarely take a Tester-Doer approach to working with collections. Instead, they rely on APIs for collections and arrays.

In C#, LINQ has been around since 2007, and most programmers love it. It's common knowledge that you can use the Select method to, for example, convert an array of numbers to an array of strings:

> new[] { 42, 1337, 2112, 90125 }.Select(i => i.ToString())

string[4] { "42", "1337", "2112", "90125" }

You can do that with all functors, including Maybe:

    Maybe<int> id = // ...
    Maybe<Customer> c = id.Select(x => new Customer(x));

A previous article offers a slightly more compelling example:

var viewModel = repository.Read(id).Select(r => r.ToViewModel());

Common to all three examples above is that instead of trying to extract a value from the monad (which makes no sense in the array example), you inject the desired behaviour into the context of the data container. What that eventually brings about depends on the monad in question.

In the array example, the behaviour being injected is that of turning a number into a string. Since this behaviour is injected into a collection, it's applied to every element in the source array.

In the second example, the behaviour being injected is that of turning an integer into a Customer object. Since this behaviour is injected into a Maybe, it's only applied if the source object is populated.

In the third example, the behaviour being injected is that of turning a Reservation domain object into a View Model. Again, this only happens if the original Maybe object is populated.

Composability

The marvellous quality of a monad is that it's composable. You could, for example, start by attempting to parse a string into a number:

    string candidate = // Some string from application boundary
    Maybe<int> idm = TryParseInt(candidate);

This code could be defined in a part of your code base that deals with user input. Instead of trying to get 'the value' out of idm, you can pass the entire object to other parts of the code. The next step, defined in a different method, in a different class, perhaps even in a different library, then queries a database to read a Reservation object corresponding to that ID - if the ID is there, that is:

Maybe<Reservation> rm = idm.SelectMany(repository.Read);

The Read method on the repository has this signature:

public Maybe<Reservation> Read(int id)

The Read method returns a Maybe<Reservation> object because you could pass any int to the method, but there may not be a row in the database that corresponds to that number. Had you used Select on idm, the return type would have been Maybe<Maybe<Reservation>>. This is a typical example of a nested functor, so instead, you use SelectMany, which flattens the functor. You can do this because Maybe is a monad.
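The difference between Select and SelectMany can be sketched as follows. This is an illustration under assumptions: the Maybe class is a minimal hypothetical implementation, and the ComposeDemo class uses an in-memory dictionary of names in place of a real repository of Reservation objects:

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, minimal Maybe<T> with both functor map and monadic bind.
public sealed class Maybe<T>
{
    private readonly bool hasItem;
    private readonly T item;

    public Maybe() { }

    public Maybe(T item)
    {
        hasItem = true;
        this.item = item;
    }

    // Functor map: wraps whatever the selector returns.
    public Maybe<TResult> Select<TResult>(Func<T, TResult> selector)
    {
        return hasItem ? new Maybe<TResult>(selector(item)) : new Maybe<TResult>();
    }

    // Monadic bind: the selector already returns a Maybe, so no extra wrapping.
    public Maybe<TResult> SelectMany<TResult>(Func<T, Maybe<TResult>> selector)
    {
        return hasItem ? selector(item) : new Maybe<TResult>();
    }

    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just)
    {
        return hasItem ? just(item) : nothing;
    }
}

public static class ComposeDemo
{
    // Stand-in for a database table: one row with ID 1.
    private static readonly Dictionary<int, string> rows =
        new Dictionary<int, string> { { 1, "Ada" } };

    public static Maybe<int> TryParseInt(string candidate)
    {
        int i;
        return int.TryParse(candidate, out i) ? new Maybe<int>(i) : new Maybe<int>();
    }

    public static Maybe<string> Read(int id)
    {
        string name;
        return rows.TryGetValue(id, out name) ? new Maybe<string>(name) : new Maybe<string>();
    }

    public static string Describe(string candidate)
    {
        // Select nests the containers...
        Maybe<Maybe<string>> nested = TryParseInt(candidate).Select(Read);
        // ...whereas SelectMany flattens them.
        Maybe<string> flat = TryParseInt(candidate).SelectMany(Read);
        return flat.Match("not found", name => name);
    }
}
```

Here ComposeDemo.Describe("1") returns "Ada", while both Describe("2") (no such row) and Describe("foo") (not a number) return "not found". The calling code never has to distinguish between the two failure modes.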

The result at this stage is a Maybe<Reservation> object. If all goes according to plan, it's populated with a Reservation object from the database. Two things could go wrong at this stage, though:

- The candidate string didn't represent a number.

- The database didn't contain a row for the parsed ID.

In the first case, idm is empty; in both cases, rm ends up empty.

You can now pass rm to another part of the code base, which then performs this step:

Maybe<ReservationViewModel> vm = rm.Select(r => r.ToViewModel());

Functors and monads are composable (i.e. 'chainable'). This is a fundamental trait of functors; they're (endo)morphisms, which, by definition, are composable. In order to leverage that composability, though, you must retain the monad. If you extract 'the value' from the monad, composability is lost.

For that reason, you're not supposed to 'get the value out of the monad'. Instead, you inject the desired behaviour into the monad in question, so that it stays composable. In the above example, repository.Read and r.ToViewModel() are behaviours injected into the Maybe monad.

Summary

When we learn something new, there's always a phase where we struggle to understand a new concept. Sometimes, we may, inadvertently, erect a tentative, but misleading mental model of a concept. It seems to me that this happens to many people while they're grappling with the concept of functors and monads.

One common mental wrong turn that many people seem to take is to try to 'get the value out of the monad'. This seems to be particularly common with IO in Haskell, where the issue is a frequently asked question.

I've also reviewed enough F# code to have noticed that people often take the imperative, Tester-Doer road to 'a option. That's the reason this article uses a majority of its space on various Maybe examples.

In a future article, I'll show a more complete and compelling example of behaviour injection.

Comments

Hi Mark, was very interested in your post as I do try and use Option Monads in my code, and I think I understand the point you are making about not thinking of an optional value as something that is composable. However, I recently had a couple of situations where I reluctantly had to check the value, would really appreciate any thoughts you may have?

The first example was where I have a UI and the user may specify a Latitude and a Longitude. The user may not yet have specified both values, so each is held as an Option.

    if (latitude.HasValue && longitude.HasValue)
        Bearing = CalculateRhumbBearing(latitude.Value, longitude.Value, fixedLatitude, fixedLongitude).ToOptionMonad();
    else
        Bearing = OptionMonad<double>.None;

Having read your article, I realise I could change this to a Select statement on latitude, but that lambda would still need to check longitude.HasValue. Should I combine the two options somehow before doing a single Select?

The second example again relates to a UI where the user can enter values in a grid, or leave a row blank. I would like to calculate the mean, standard deviation and root mean square of the values, and normally all these functions would have the signature: double Mean(ICollection<double> values)

If I keep this then I need a function like

    foreach (var item in values)
    {
        if (item.HasValue)
        {
            yield return item.Value;
        }
    }

Or some equivalent Where/Select combination. Can you advise me please, how you recommend transforming an IEnumerable<OptionMonad<X>> to an IEnumerable<X>? Or should I write a signature overload double Mean(ICollection<OptionMonad<double>> possibleValues) and ditto for SD and RMS?

Thanks, Sean

Sean, thank you for writing. The first example you give is quite common, and is easily addressed by using the applicative or monadic capabilities of Maybe. Often, in a language like C#, it's easiest to use monadic bind (in C# called SelectMany):

    Bearing = latitude
        .SelectMany(lat => longitude
            .Select(lon => CalculateRhumbBearing(lat, lon, fixedLatitude, fixedLongitude)));

If you find code like that disagreeable, you can also write it with query syntax:

    Bearing =
        from lat in latitude
        from lon in longitude
        select CalculateRhumbBearing(lat, lon, fixedLatitude, fixedLongitude);

Here, Bearing is a Maybe value. As you can see, in neither of the above alternatives is it necessary to check and extract the values. Bearing will be populated when both latitude and longitude are populated, and empty otherwise.
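For the query-syntax version to compile, the Maybe type must offer a SelectMany overload that takes a result selector, because that is the method the C# compiler targets when it translates two from clauses. A sketch of such an overload follows. The Maybe class is a minimal hypothetical implementation, and the Add methods use placeholder arithmetic rather than a real bearing calculation:

```csharp
using System;

// Hypothetical, minimal Maybe<T> that supports C# query syntax.
public sealed class Maybe<T>
{
    private readonly bool hasItem;
    private readonly T item;

    public Maybe() { }

    public Maybe(T item)
    {
        hasItem = true;
        this.item = item;
    }

    public Maybe<TResult> Select<TResult>(Func<T, TResult> selector)
    {
        return hasItem ? new Maybe<TResult>(selector(item)) : new Maybe<TResult>();
    }

    public Maybe<TResult> SelectMany<TResult>(Func<T, Maybe<TResult>> selector)
    {
        return hasItem ? selector(item) : new Maybe<TResult>();
    }

    // The overload that 'from x in a from y in b select f(x, y)' is translated to.
    public Maybe<TResult> SelectMany<U, TResult>(
        Func<T, Maybe<U>> k, Func<T, U, TResult> s)
    {
        return SelectMany(x => k(x).Select(y => s(x, y)));
    }

    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just)
    {
        return hasItem ? just(item) : nothing;
    }
}

public static class TwoMaybesDemo
{
    // Placeholder arithmetic; stands in for a function of two populated values.
    public static Maybe<double> AddWithQuerySyntax(Maybe<double> a, Maybe<double> b)
    {
        return from x in a
               from y in b
               select x + y;
    }

    public static Maybe<double> AddWithMethodSyntax(Maybe<double> a, Maybe<double> b)
    {
        return a.SelectMany(x => b.Select(y => x + y));
    }
}
```

Both methods return a populated Maybe only when both arguments are populated. Passing 1.5 and 2.0 gives a Maybe containing 3.5; if either argument is empty, so is the result.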

Regarding the other question, being able to filter out empty values from a collection is a standard operation in both F# and Haskell. In C#, you can write it like this:

    public static IEnumerable<T> Choose<T>(this IEnumerable<IMaybe<T>> source)
    {
        return source.SelectMany(m => m.Match(new T[0], x => new[] { x }));
    }

This example is based on the Church-encoded Maybe, which is currently my favourite implementation. I decided to call the method Choose, as this is also the name it has in F#. In Haskell, this function is called catMaybes.
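To show Choose in use, here is a self-contained sketch. The IMaybe<T> interface with its Nothing<T> and Just<T> classes is an assumed minimal rendition of a Church-encoded Maybe, and the GridStatistics class with its MeanOfPopulated method is hypothetical, standing in for the grid scenario from the question:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Assumed minimal Church-encoded Maybe: the only member is Match.
public interface IMaybe<T>
{
    TResult Match<TResult>(TResult nothing, Func<T, TResult> just);
}

public sealed class Nothing<T> : IMaybe<T>
{
    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just)
    {
        return nothing;
    }
}

public sealed class Just<T> : IMaybe<T>
{
    private readonly T value;

    public Just(T value)
    {
        this.value = value;
    }

    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just)
    {
        return just(value);
    }
}

public static class MaybeExtensions
{
    // Keeps the populated values and drops the empty ones.
    public static IEnumerable<T> Choose<T>(this IEnumerable<IMaybe<T>> source)
    {
        return source.SelectMany(m => m.Match(new T[0], x => new[] { x }));
    }
}

public static class GridStatistics
{
    // Hypothetical helper: the mean of the rows that have a value.
    // Enumerable.Average throws InvalidOperationException if no row is populated.
    public static double MeanOfPopulated(IEnumerable<IMaybe<double>> rows)
    {
        return rows.Choose().Average();
    }
}
```

Given the rows Just(1), Nothing, Just(3), the MeanOfPopulated method returns 2. Existing functions that take a collection of double values can stay as they are; only the call site applies Choose.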

Hi Mark, did you ever think about publishing a Library containing all these types missing in .net Framework like Either? Or can you recommend an existing library?

Achim, thank you for writing. The thought has crossed my mind, but my position on this question seems to be changing.

Had you asked me one or two years ago, I'd have answered that I hadn't seriously considered doing that, and that I saw little reason to do so. There are, as far as I can tell, plenty of such libraries out there, although I can't recommend any in particular. This seems to be something that many people create as part of a learning exercise. It seems to be a rite of passage for many people, similarly to developing a Dependency Injection container, or an ORM.

Besides, a reusable library would mean another dependency that your code would have to take on.

These days, however, I'm beginning to reconsider my position. It seems that no such library is emerging as dominant, and some of the types involved (particularly Maybe) would really be useful.

Ideally, these types ought to be in the .NET Base Class Library, but perhaps a second-best alternative would be to put them in a commonly-used shared library.

Hi Mark, thank you for the interesting article series.

Can you maybe provide guidance on how asynchronous operations can become part of a chain of operations? How would the 'functor flattening' be combined with the built-in Task/Task<T> types? Extending your example, how would you go about it if we wanted to enrich the reservation retrieved from the repository with that day's special, which happens to be async:

Task<ReservationWithMenuSuggestion> EnrichWithSpecialOfTheDayAsync(Reservation reservation)

I tried with your Church encoded Maybe implementation, but I got stuck with the Task<T> wrapping/unwrapping/awaiting.

Ralph, thank you for writing. Please see if my new article Asynchronous Injection answers your question.

Hi Mark, I'm curious what you think about this approach to monads - Maybe monad through async/await in C#

Dominik, thank you for writing. That's a clever article. As far as I can tell, the approach is similar to Nick Palladinos' Eff library. You can see how he rewrote one of my sample applications using it.

I've no personal experience with this approach, so I could easily be wrong in my assessment. Nick reports that he's had some success getting other people on board with such an approach, because the resulting user code looks like idiomatic C#. That is, I think, one compelling argument.

What I do find less appealing, however, is that, if I understand this correctly, the C# compiler enables you to mix, or interleave, disparate effects. As long as your method returns an awaitable object, you can await true asynchronous tasks, bind Maybe values, and conceivably invoke other effectful operations all in the same method - and you wouldn't be able to tell from the return type what to expect.

In my opinion, one of the most compelling benefits of modelling with universal abstractions is that they provide excellent encapsulation. You can use the type system to communicate the pre- and post-conditions of an operation.

If I see an operation that returns Maybe<User>, I expect that it may or may not return a User object. If I see a return type of Task<User>, I expect that I'm guaranteed to receive a User object, but that this'll happen asynchronously. Only if I see something like Task<Maybe<User>> do I expect the combination of those two effects.

My concern with making Maybe awaitable is that this enables one to return Maybe<User> from a method, but still make the implementation asynchronous (e.g. by querying a database for the user). That effect is now hidden, which in my view breaks encapsulation because you now have to go and read the implementation code in order to discover that this is taking place.

Mark, thanks for the useful response!

You're absolutely right that awaiting a Maybe that wraps a Task has side effects that aren't visible to the caller. I agree that this is the smell I sensed, but you pinpointed its source.

Better abstractions revisited

How do you design better abstractions? A retrospective look at an old article for object-oriented programmers.

About a decade ago, I had already been doing test-driven development (TDD) and used Dependency Injection for many years, but I'd started to notice some patterns about software design. I'd noticed that interfaces aren't abstractions and that TDD isn't a design methodology. Sometimes, I'd arrive at interfaces that turned out to be good abstractions, but at other times, the interfaces I created seemed to serve no other purpose than enabling unit testing.

In 2010 I thought that I'd noticed some patterns for good abstractions, so I wrote an article called Towards better abstractions. I still consider it a decent attempt at communicating my findings, but I don't think that I succeeded. My thinking on the subject was still too immature, and I lacked a proper vocabulary.

While I had hoped that I would be able to elaborate on such observations, and perhaps turn them into heuristics, my efforts petered out soon after. I moved on to other things, and essentially gave up on this particular research programme. Years later, while trying to learn category theory, I suddenly realised that mathematical disciplines like category theory and abstract algebra could supply the vocabulary. After some further work, I started publishing a substantial and long-running article series called From design patterns to category theory. It goes beyond my initial attempt, and it finally enabled me to crystallise those older observations.

In this article, I'll revisit that old article, Towards better abstractions, and translate the vague terminology I used then, to the terminology presented in From design patterns to category theory.

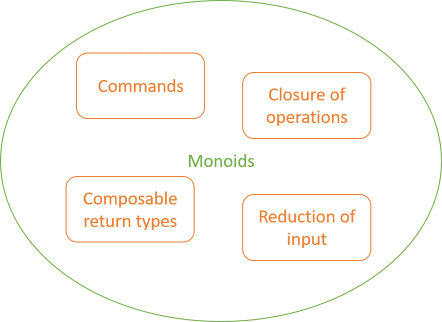

The thrust of the old article is that if you can create a Composite or a Null Object from an interface, then it's likely to be a good abstraction. I still consider that a useful rule of thumb.

When can you create a Composite? When the abstraction gives rise to a monoid. When can you create a Null Object? When the abstraction gives rise to a monoid.

All the 'API shapes' I'd identified in Towards better abstractions form monoids.

Commands #

A Command seems to be universally identified by a method typically called Execute:

public void Execute()

From unit isomorphisms we know that methods with the void return type are isomorphic to (impure) functions that return unit, and that unit forms a monoid.

Furthermore, we know from function monoids that methods that return a monoid themselves form monoids. Therefore, Commands form monoids.

In early 2011 I'd already explicitly noticed that Commands are composable. Now I know the deeper reason for this: they're monoids.
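As a minimal sketch of that claim, the following code treats Commands as a monoid: Append is the binary operation, NullCommand is the identity, and Accumulate is the Composite. The ICommand interface follows the Execute signature above; ActionCommand, NullCommand, Append and Accumulate are hypothetical names chosen for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public interface ICommand
{
    void Execute();
}

// Identity element of the monoid; in pattern terms, the Null Object.
public sealed class NullCommand : ICommand
{
    public void Execute() { }
}

// Hypothetical helper that adapts a delegate to ICommand.
public sealed class ActionCommand : ICommand
{
    private readonly Action action;
    public ActionCommand(Action action) { this.action = action; }
    public void Execute() { action(); }
}

public static class Command
{
    // Binary operation: execute the first command, then the second.
    public static ICommand Append(this ICommand first, ICommand second)
    {
        return new ActionCommand(() => { first.Execute(); second.Execute(); });
    }

    // Composite: start with the identity and combine pairwise.
    public static ICommand Accumulate(this IEnumerable<ICommand> commands)
    {
        return commands.Aggregate(
            (ICommand)new NullCommand(),
            (acc, c) => acc.Append(c));
    }
}
```

Since the commands are impure, the order of execution matters. The operation is still associative, because regrouping the calls to Append doesn't change the order in which the effects run.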

Closure of operations #

In Domain-Driven Design, Eric Evans discusses the benefits of designing APIs that exhibit closure of operations. This means that a method returns the same type as all its input arguments. The simplest example is the one that I show in the old article:

public static T DoIt(T x)

That's just an endomorphism, which forms a monoid.
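Here's a small sketch of the endomorphism monoid, using Func<T, T> instead of a dedicated interface. The names Endomorphism, Append, Identity and Accumulate are assumptions made for this example; the binary operation is function composition, and the identity element is the identity function.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class Endomorphism
{
    // Binary operation: apply f first, then g.
    public static Func<T, T> Append<T>(this Func<T, T> f, Func<T, T> g)
    {
        return x => g(f(x));
    }

    // Identity element: returns its input unchanged.
    public static Func<T, T> Identity<T>()
    {
        return x => x;
    }

    // Combine any number of endomorphisms, starting from the identity.
    public static Func<T, T> Accumulate<T>(this IEnumerable<Func<T, T>> functions)
    {
        return functions.Aggregate(Identity<T>(), (acc, f) => acc.Append(f));
    }
}
```

For example, given Func<int, int> addOne = x => x + 1 and Func<int, int> doubleIt = x => x * 2, the composition addOne.Append(doubleIt) maps 3 to 8, because it first adds one and then doubles.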

Another variation is a method that takes two arguments:

public static T DoIt(T x, T y)

This is a binary operation. While it's certainly a magma, in itself it's not guaranteed to be a monoid. In fact, Evans' colour-mixing example is only a magma, but not a monoid. You can, however, also view this as a special case of the reduction of input shape, below, where the 'extra' arguments just happen to have the same type as the return type. In that interpretation, such a method still forms a monoid, but it's not guaranteed to be meaningful. (Just like modulo 31 addition forms a monoid; it's hardly useful.)

The same sort of argument goes for methods with closure of operations, but more input arguments, like:

public static T DoIt(T x, T y, T z)

This sort of method is, however, rare, unless you're working in a stringly typed code base where methods look like this:

public static string DoIt(string x, string y, string z)

That's a different situation, though, because those strings should probably be turned into domain types that properly communicate their roles. Once you do that, you'll probably find that the method arguments have different types.

In any case, regardless of cardinality, you can view all methods with closure of operations as special cases of the reduction of input shape below.

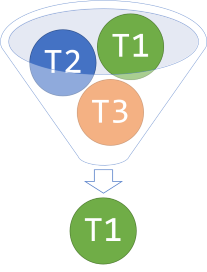

Reduction of input #

This is the part of the original article where my struggles with vocabulary began in earnest. The situation is when you have a method that looks like this, perhaps as an interface method:

    public interface IInputReducer<T1, T2, T3>
    {
        T1 DoIt(T1 x, T2 y, T3 z);
    }

In order to stay true to the terminology of my original article, I've named this reduction of input generic example IInputReducer. The reason I originally called it reduction of input is that such a method takes a set of input types as arguments, but only returns a value of a type that's a subset of the set of input types. Thus, the method looks like it's reducing the range of input types to a single one of those types.

A realistic example could be a piece of HTTP middleware that defines an action filter as an interface that you can implement to intercept each HTTP request:

    public interface IActionFilter
    {
        Task<HttpResponseMessage> ExecuteActionFilterAsync(
            HttpActionContext actionContext,
            CancellationToken cancellationToken,
            Task<HttpResponseMessage> continuation);
    }

This is a slightly modified version of an interface from an earlier version of the ASP.NET Web API. Notice that in this example, it's not the first argument's type that doubles as the return type, but rather the third and last argument. The reduction of input 'shape' can take an arbitrary number of arguments, and any of the argument types can double as a return type, regardless of position.

Returning to the generic IInputReducer example, you can easily make a Composite of it:

    public class CompositeInputReducer<T1, T2, T3> : IInputReducer<T1, T2, T3>
    {
        private readonly IInputReducer<T1, T2, T3>[] reducers;

        public CompositeInputReducer(params IInputReducer<T1, T2, T3>[] reducers)
        {
            this.reducers = reducers;
        }

        public T1 DoIt(T1 x, T2 y, T3 z)
        {
            var acc = x;
            foreach (var reducer in reducers)
                acc = reducer.DoIt(acc, y, z);
            return acc;
        }
    }

Notice that you call DoIt on all the composed reducers. The arguments that aren't part of the return type, y and z, are passed to each call to DoIt unmodified, whereas the T1 value x is only used to initialise the accumulator acc. Each call to DoIt also returns a T1 object, so the acc value is updated to that object, so that you can use it as an input for the next iteration.

This is an imperative implementation, but as you'll see below, you can also implement the same behaviour in a functional manner.

For the sake of argument, pretend that you reorder the method arguments so that the method looks like this:

T1 DoIt(T3 z, T2 y, T1 x);

From Uncurry isomorphisms you know that a method like that is isomorphic to a function with the type 'T3 -> 'T2 -> 'T1 -> 'T1 (F# syntax). You can think of such a curried function as a function that returns a function that returns a function: 'T3 -> ('T2 -> ('T1 -> 'T1)). The rightmost function 'T1 -> 'T1 is clearly an endomorphism, and you already know that an endomorphism gives rise to a monoid. Finally, Function monoids informs us that a function that returns a monoid itself forms a monoid, so 'T2 -> ('T1 -> 'T1) forms a monoid. This argument applies recursively, because if that's a monoid, then 'T3 -> ('T2 -> ('T1 -> 'T1)) is also a monoid.

What does that look like in C#?

In the rest of this article, I'll revert the DoIt method signature to T1 DoIt(T1 x, T2 y, T3 z);. The monoid implementation looks much like the endomorphism code. Start with a binary operation:

    public static IInputReducer<T1, T2, T3> Append<T1, T2, T3>(
        this IInputReducer<T1, T2, T3> r1,
        IInputReducer<T1, T2, T3> r2)
    {
        return new AppendedReducer<T1, T2, T3>(r1, r2);
    }

    private class AppendedReducer<T1, T2, T3> : IInputReducer<T1, T2, T3>
    {
        private readonly IInputReducer<T1, T2, T3> r1;
        private readonly IInputReducer<T1, T2, T3> r2;

        public AppendedReducer(
            IInputReducer<T1, T2, T3> r1,
            IInputReducer<T1, T2, T3> r2)
        {
            this.r1 = r1;
            this.r2 = r2;
        }

        public T1 DoIt(T1 x, T2 y, T3 z)
        {
            return r2.DoIt(r1.DoIt(x, y, z), y, z);
        }
    }

This is similar to the endomorphism Append implementation. When you combine two IInputReducer objects, you receive an AppendedReducer that implements DoIt by first calling DoIt on the first object, and then using the return value from that method call as the input for the second DoIt method call. Notice that y and z are just 'context' variables used for both reducers.

Just like the endomorphism, you can also implement the identity input reducer:

    public class IdentityInputReducer<T1, T2, T3> : IInputReducer<T1, T2, T3>
    {
        public T1 DoIt(T1 x, T2 y, T3 z)
        {
            return x;
        }
    }

This simply returns x while ignoring y and z. The Append method is associative, and the IdentityInputReducer is both left and right identity for the operation, so this is a monoid. Since monoids accumulate, you can also implement an Accumulate extension method:

    public static IInputReducer<T1, T2, T3> Accumulate<T1, T2, T3>(
        this IReadOnlyCollection<IInputReducer<T1, T2, T3>> reducers)
    {
        IInputReducer<T1, T2, T3> identity =
            new IdentityInputReducer<T1, T2, T3>();
        return reducers.Aggregate(identity, (acc, reducer) => acc.Append(reducer));
    }

This implementation follows the overall implementation pattern for accumulating monoidal values: start with the identity and combine pairwise. While I usually show this in a more imperative form, I've here used a proper functional implementation for the method.

The IInputReducer object returned from that Accumulate function has exactly the same behaviour as the CompositeInputReducer.
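To illustrate that equivalence, here's a condensed, self-contained sketch that runs the same three reducers through both the Composite and the monoidal Accumulate. It's a simplification under stated assumptions: DelegateReducer and ReducerDemo are hypothetical helpers, and Append and the identity are expressed via DelegateReducer rather than the AppendedReducer and IdentityInputReducer classes shown above.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public interface IInputReducer<T1, T2, T3>
{
    T1 DoIt(T1 x, T2 y, T3 z);
}

// The imperative Composite from the article.
public class CompositeInputReducer<T1, T2, T3> : IInputReducer<T1, T2, T3>
{
    private readonly IInputReducer<T1, T2, T3>[] reducers;

    public CompositeInputReducer(params IInputReducer<T1, T2, T3>[] reducers)
    {
        this.reducers = reducers;
    }

    public T1 DoIt(T1 x, T2 y, T3 z)
    {
        var acc = x;
        foreach (var reducer in reducers)
            acc = reducer.DoIt(acc, y, z);
        return acc;
    }
}

// Hypothetical helper that adapts a delegate to the interface.
public class DelegateReducer<T1, T2, T3> : IInputReducer<T1, T2, T3>
{
    private readonly Func<T1, T2, T3, T1> f;
    public DelegateReducer(Func<T1, T2, T3, T1> f) { this.f = f; }
    public T1 DoIt(T1 x, T2 y, T3 z) => f(x, y, z);
}

public static class InputReducer
{
    // Binary operation: feed the output of r1 into r2; y and z are shared context.
    public static IInputReducer<T1, T2, T3> Append<T1, T2, T3>(
        this IInputReducer<T1, T2, T3> r1, IInputReducer<T1, T2, T3> r2)
    {
        return new DelegateReducer<T1, T2, T3>(
            (x, y, z) => r2.DoIt(r1.DoIt(x, y, z), y, z));
    }

    // Identity element: returns x and ignores the context.
    public static IInputReducer<T1, T2, T3> Identity<T1, T2, T3>()
    {
        return new DelegateReducer<T1, T2, T3>((x, y, z) => x);
    }

    public static IInputReducer<T1, T2, T3> Accumulate<T1, T2, T3>(
        this IReadOnlyCollection<IInputReducer<T1, T2, T3>> reducers)
    {
        return reducers.Aggregate(Identity<T1, T2, T3>(), (acc, r) => acc.Append(r));
    }
}

public static class ReducerDemo
{
    // Three arbitrary reducers over (string, int, char), made up for this sketch.
    public static IInputReducer<string, int, char>[] Reducers()
    {
        return new IInputReducer<string, int, char>[]
        {
            new DelegateReducer<string, int, char>((x, y, z) => x + new string(z, y)),
            new DelegateReducer<string, int, char>((x, y, z) => x.ToUpperInvariant()),
            new DelegateReducer<string, int, char>((x, y, z) => x + y)
        };
    }
}
```

Calling DoIt("ab", 2, '!') on either new CompositeInputReducer<string, int, char>(ReducerDemo.Reducers()) or the accumulated reducer produces "AB!!2": the first reducer appends two exclamation marks, the second upper-cases the string, and the third appends the number.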

The reduction of input shape forms another monoid, and is therefore composable. The Null Object is the IdentityInputReducer<T1, T2, T3> class. If you set T1 = T2 = T3, you have the closure of operations 'shapes' discussed above; they're just special cases, so they form at least this type of monoid.

Composable return types #

The original article finally discusses methods that in themselves don't look composable, but turn out to be so anyway, because their return types are composable. Without knowing it, I'd figured out that methods that return monoids are themselves monoids.

In 2010 I didn't have the vocabulary to put this into specific language, but that's all it says.

Summary #

In 2010 I apparently discovered an ad-hoc, informally specified, vaguely glimpsed, half-understood description of half of abstract algebra.

Riffs on Greenspun's tenth rule aside, things clicked for me once I started to investigate what category theory was about, and why it seemed so closely linked to Haskell. That's one of the reasons I started writing the From design patterns to category theory article series.

The patterns I thought that I could see in 2010 all form monoids, but there are many other universal abstractions from mathematics that apply to programming as well.

Some thoughts on anti-patterns

What's an anti-pattern? Are there rules to identify them, or is it just name-calling? Before I use the term, I try to apply some rules of thumb.

It takes time to write a book. Months, even years. It took me two years to write the first edition of Dependency Injection in .NET. The second edition of Dependency Injection in .NET is also the result of much work; not so much by me, but by my co-author Steven van Deursen.

When you write a book single-handedly, you can be as opinionated as you'd like. When you have a co-author, regardless of how much you think alike, there's bound to be some disagreements. Steven and I agreed about most of the changes we'd like to make to the second edition, but each of us had to yield or compromise a few times.

An interesting experience has been that on more than one occasion where I've reluctantly had to yield to Steven, over time, I've come to appreciate his position. Two minds think better than one.

Ambient Context #

One of the changes that Steven wanted to make was that he wanted to change the status of the Ambient Context pattern to an anti-pattern. While I never use that pattern myself, I included it in the first edition in the spirit of the original Design Patterns book. The Gang of Four made it clear that the patterns they'd described weren't invented, but rather discovered:

"We have included only designs that have been applied more than once in different systems."

The spirit, as I understand it, is to identify solutions that already exist, and catalogue them. When I wrote the first edition of my book, I tried to do that as well.

I'd noticed what I eventually named the Ambient Context pattern in several places in the .NET Base Class Library. Some of those APIs are still around today: Thread.CurrentPrincipal, CultureInfo.CurrentCulture, thread-local storage, HttpContext.Current, and so on.

None of these really have anything to do with Dependency Injection (DI), but people sometimes attempt to use them to solve problems similar to the problems that DI addresses. For that reason, and because the pattern was so prevalent, I included it in the book - as a pattern, not an anti-pattern.

Steven wanted to make it an anti-pattern, and I conceded. I wasn't sure I was ready to explicitly call it out as an anti-pattern, but I agreed to the change. I'm becoming increasingly happy that Steven talked me into it.

Pareto efficiency #

I've heard said of me that I'm one of those people who call everything I don't like an anti-pattern. I don't think that's true.

I think people's perception of me is skewed because even today, the most visited page (my greatest hit, if you will) is an article called Service Locator is an Anti-Pattern. (It concerns me a bit that an article from 2010 seems to be my crowning achievement. I hope I haven't peaked yet, but the numbers tell a different tale.)

While I've used the term anti-pattern in other connections, I prefer to be conservative with my use of the word. I tend to use it only when I feel confident that something is, indeed, an anti-pattern.

What's an anti-pattern? AntiPatterns defines it like this:

"An AntiPattern is a literary form that describes a commonly occurring solution to a problem that generates decidedly negative consequences."

As definitions go, it's quite amphibolous. Is it the problem that generates negative consequences? Hardly. In the context, it's clear that it's the solution that causes problems. In any case, just because it's in a book doesn't necessarily make it right, but I find it a good start.

I think that the phrase decidedly negative consequences is key. Most solutions come with some disadvantages, but in order for a 'solution' to be an anti-pattern, the disadvantages must clearly outweigh any advantages produced.

I usually look at it another way. If I can solve the problem in a different way that generates at least as many advantages, but fewer disadvantages, then the first 'solution' might be an anti-pattern. This way of viewing the problem may stem from my background in economics. In that perspective, an anti-pattern simply isn't Pareto optimal.

Falsifiability #

Another rule of thumb I employ to determine whether a solution could be an anti-pattern is Popper's concept of falsifiability. As a continuation of the Pareto efficiency perspective, an anti-pattern is a 'solution' that you can improve without any (significant) trade-offs.

That turns claims about anti-patterns into falsifiable statements, which I consider the most intellectually honest way to go about claiming that things are bad.

Take, for example, the claim that Service Locator is an anti-pattern. In light of Pareto efficiency, that's a falsifiable claim. All you have to do to prove me wrong is to present a situation where Service Locator solves a problem, and I can't come up with a better solution.

I made the claim about Service Locator in 2010, and so far, no one has been able to present such a situation, even though several have tried. I'm fairly confident making that claim.

This way of looking at the term anti-pattern, however, makes me wary of declaiming solutions anti-patterns just because I don't like them. Could there be a counter-argument, some niche scenario, where the pattern actually couldn't be improved without trade-offs?

I didn't take it lightly when Steven suggested making Ambient Context an anti-pattern.

Preliminary status #

I've had some time to think about Ambient Context since I had the (civil) discussion with Steven. The more I think about it, the more I think that he's right; that Ambient Context really is an anti-pattern.

I never use that pattern myself, so it's clear to me that for all the situations that I typically encounter, there are always better solutions, with no significant trade-offs.

The question is: could there be some niche scenario that I'm not aware of, where Ambient Context is a bona fide good solution?

The more I think about this, the more I'm beginning to believe that there isn't. It remains to be seen, though. It remains to be falsified.

Summary #

I'm so happy that Steven van Deursen agreed to co-author the second edition of Dependency Injection in .NET with me. The few areas where we've disagreed, I've ultimately come around to agree with him. He's truly taken a good book and made it better.

One of the changes is that Ambient Context is now classified as an anti-pattern. Originally, I wasn't sure that this was the correct thing to do, but I've since changed my mind. I do think that Ambient Context belongs in the anti-patterns chapter.

I could be wrong, though. I was before.

Comments

Thanks for great input for discussion :P

Like with all other patterns and anti-patterns, I think there's a time and a place.

Simply looking at it in a one-dimensional manner, i.e. asking "does there exist a solution to this problem with the same advantages but less downsides?" must be qualified with "IN THIS TIME AND PLACE", in my opinion.

This way, the patterns/anti-patterns distinction does not make that much sense in a global perspective, because all patterns can be anti-patterns in some situations, and vice versa.

For example, I like what Ambient Context does in Rebus: It provides a mechanism that enables user code to transparently enlist its bus operations in a unit of work, without requiring user code to pass that unit of work to each operation.

This is very handy, e.g. in OWIN-based applications, where the unit of work can be managed by an OWIN middleware that uses a RebusTransactionScope, this way enlisting all send/publish operations on the bus in that unit of work.

Had it not been possible to automatically pick up an ongoing ambient Rebus transaction context, one would probably need to pollute the interfaces of one's application with an ITransactionContext argument, thus not handling the cross-cutting concern of managing the unit of work in a cross-cutting manner.

Mogens, thank you for writing. The reason I explicitly framed my treatment in a discourse related to Pareto efficiency is exactly because this view on optima is multi-dimensional. When considering whether a 'solution coordinate' is Pareto-optimal or not, the question is exactly whether or not it's possible to improve at least one dimension without exacerbating any other dimension. If you can make one dimension better without trade-offs, then you can make a Pareto improvement. If you can only make one dimension better at the cost of one or more other dimensions, then you already have a Pareto-optimal solution.

The theory of Pareto efficiency doesn't say anything about the number of dimensions. Usually, as in the linked Wikipedia article, the concept is illustrated in the plane, but conceptually, it applies to an arbitrary number of dimensions.

In the context of anti-patterns, those dimensions include time and place, as you say.

I consider something to be an anti-pattern if I can make a change that constitutes an improvement in at least one dimension, without trading off any other dimension. In other words, in this article, I'm very deliberately not looking at it in a one-dimensional manner.

As I wrote, I'm still not sure that Ambient Context is an anti-pattern (although I increasingly believe it to be). How can we even test that hypothesis when we can't really quantify software design?

On the other hand, if we leave the question about Ambient Context for a moment, I feel confident that Service Locator is an anti-pattern, even in what you call a global perspective. The reason I believe that is that I made that falsifiable claim in 2010, and here, almost nine years later, no-one has successfully produced a valid counter-example.

I don't have the same long history with the claim about Ambient Context, so I could be wrong. Perhaps you are, right now, proving me wrong. I can't tell, though, because I don't (yet) know enough about Rebus to be able to tell whether what you describe is Pareto-optimal.

The question isn't whether the current design is 'handy'. The question is whether it's possible to come up with a design that's 'globally' better; i.e. either has all the advantages of the current design, but fewer disadvantages; or has more advantages, and only the same disadvantages.

I may be able to suggest such an improvement if provided with some code examples, but in the end we may never agree whether one design is better than another. After all, since we can't quantify software design, a subjective judgement will always remain.

Mark,

Thanks for this thoughtful meta-analysis of what it means to be an anti-pattern and how we think about them. What are some considerations when designing a replacement for ambient context objects? What patterns have you found successful in object-oriented code? I'm sure you'd mention Constructor Injection and CQRS with decorators.

How might one refactor ambient context out of a solution? Some say passing around an object, maybe some sort of continuation?

Drew, thank you for writing. Indeed, in object-oriented code, I'd typically replace Ambient Contexts with either injected dependencies or Decorators. I'm not sure I see how CQRS fits into this picture.

When refactoring away an Ambient Context, it's typically, before the refactoring, used like Context.DoSomething(). The first step is often to inject a dependency and make it available to the class as a property called Context. IIRC, the C# overload resolution system should then pick the instance property over the static class, so that Context.DoSomething() calls DoSomething on the injected Context property.

There may be some static members that also use the Ambient Context. If that's the case, you'll first have to make those static members instance members.

Once you've made those replacements everywhere, you should be able to delete the Ambient Context.
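As a rough sketch of that first refactoring step, consider the following before-and-after pair. All names here (the static Context class, IContext, InjectedContext, Before and After) are hypothetical. To the best of my understanding, C# name lookup considers members of the enclosing class before types in the surrounding namespace, so the call site in After binds to the injected property even though the text of the call is unchanged.

```csharp
using System;

public interface IContext
{
    string DoSomething();
}

// The Ambient Context that the refactoring aims to remove.
public static class Context
{
    public static string DoSomething() => "ambient";
}

// The replacement dependency, supplied via Constructor Injection.
public sealed class InjectedContext : IContext
{
    public string DoSomething() => "injected";
}

public sealed class Before
{
    // Here, Context refers to the static class.
    public string Work() => Context.DoSomething();
}

public sealed class After
{
    public After(IContext context)
    {
        Context = context;
    }

    // A property with the same name as the static class.
    public IContext Context { get; }

    // Same call site as in Before, but Context now refers to the property.
    public string Work() => Context.DoSomething();
}
```

Once every consumer looks like After, nothing should reference the static Context class any longer, and it can be deleted.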

An Either functor

Either forms a normal functor. A placeholder article for object-oriented programmers.

This article is an instalment in an article series about functors. As another article explains, Either is a bifunctor. This makes it trivially a functor. As such, this article is mostly a place-holder to fit the spot in the functor table of contents, thereby indicating that Either is a functor.

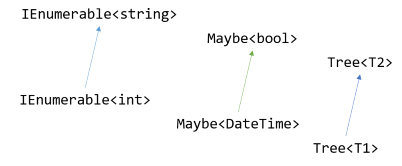

Since Either is a bifunctor, it's actually not one, but two, functors. Many languages, C# included, are best equipped to deal with unambiguous functors. This is also true in Haskell, where Either l r is only a Functor over the right side. Likewise, in C#, you can make IEither<L, R> a functor by implementing Select:

    public static IEither<L, R1> Select<L, R, R1>(
        this IEither<L, R> source,
        Func<R, R1> selector)
    {
        return source.SelectRight(selector);
    }

This method simply delegates all implementation to the SelectRight method; it's just SelectRight by another name. It obeys the functor laws, since these are just specializations of the bifunctor laws, and we know that Either is a proper bifunctor.

It would have been technically possible to instead implement a Select method by calling SelectLeft, but it seems generally more useful to enable syntactic sugar for mapping over 'happy path' scenarios. This enables you to write projections over operations that can fail.

Here are some C# Interactive examples that use the FindWinner helper method from the Church-encoded Either article. Imagine that you're collecting votes; you're trying to pick the highest-voted integer, but in reality, you're only interested in seeing if the number is positive or not. Since FindWinner returns IEither<VoteError, T>, and this type is a functor, you can project the right result, while any left result short-circuits the query. First, here's a successful query:

> from i in FindWinner(1, 2, -3, -1, 2, -1, -1) select i > 0 Right<VoteError, bool>(false)

This query succeeds, resulting in a Right object. The contained value is false because the winner of the vote is -1, which isn't a positive number.

On the other hand, the following query fails because of a tie.

> from i in FindWinner(1, 2, -3, -1, 2, -1) select i > 0 Left<VoteError, bool>(Tie)

Because the result is tied on -1, the return value is a Left object containing the VoteError value Tie.

Another source of error is an empty input collection:

> from i in FindWinner<int>() select i > 0 Left<VoteError, bool>(Empty)

This time, the Left object contains the Empty error value, since no winner can be found from an empty collection.

While the Select method doesn't implement any behaviour that SelectRight doesn't already afford, it enables you to use C# query syntax, as demonstrated by the above examples.
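If you want to experiment without the FindWinner helper, here's a self-contained sketch. It assumes a simplified Church-encoded IEither<L, R>, and it implements Select directly against Match rather than delegating to SelectRight. ParseInt and IsPositive are hypothetical examples of an operation that can fail and a projection over it.

```csharp
using System;

public interface IEither<L, R>
{
    T Match<T>(Func<L, T> onLeft, Func<R, T> onRight);
}

public sealed class Left<L, R> : IEither<L, R>
{
    private readonly L left;
    public Left(L left) { this.left = left; }
    public T Match<T>(Func<L, T> onLeft, Func<R, T> onRight) => onLeft(left);
}

public sealed class Right<L, R> : IEither<L, R>
{
    private readonly R right;
    public Right(R right) { this.right = right; }
    public T Match<T>(Func<L, T> onLeft, Func<R, T> onRight) => onRight(right);
}

public static class Either
{
    // Maps the right case; a left case passes through untouched.
    public static IEither<L, R1> Select<L, R, R1>(
        this IEither<L, R> source, Func<R, R1> selector)
    {
        return source.Match<IEither<L, R1>>(
            onLeft: l => new Left<L, R1>(l),
            onRight: r => new Right<L, R1>(selector(r)));
    }
}

public static class EitherDemo
{
    // Hypothetical operation that can fail: the left case carries an error message.
    public static IEither<string, int> ParseInt(string candidate)
    {
        return int.TryParse(candidate, out var i)
            ? (IEither<string, int>)new Right<string, int>(i)
            : new Left<string, int>("Not an integer: " + candidate);
    }

    // Query syntax compiles to a call to the Select extension method above.
    public static IEither<string, bool> IsPositive(string candidate)
    {
        return from i in ParseInt(candidate)
               select i > 0;
    }
}
```

With this sketch, EitherDemo.IsPositive("42") is a right case containing true, while EitherDemo.IsPositive("foo") is a left case that still carries the error message, because the projection never runs.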

Next: A Tree functor.

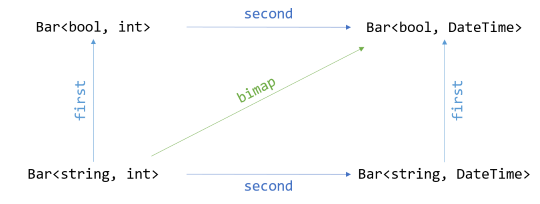

Either bifunctor

Either forms a bifunctor. An article for object-oriented programmers.

This article is an instalment in an article series about bifunctors. As the overview article explains, there are essentially two practically useful bifunctors: pairs and Either. In the previous article, you saw how a pair (a two-tuple) forms a bifunctor. In this article, you'll see how Either also forms a bifunctor.

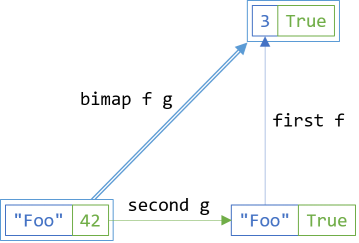

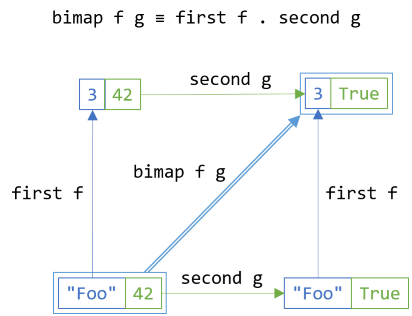

Mapping both dimensions #

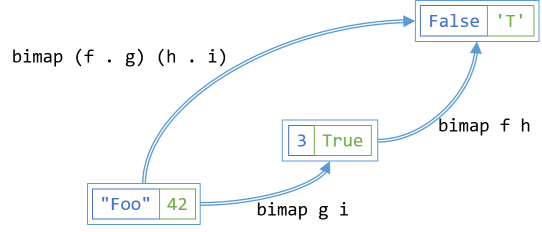

In the previous article, you saw how, if you have maps over both dimensions, you can trivially implement SelectBoth (what Haskell calls bimap):

return source.SelectFirst(selector1).SelectSecond(selector2);

The relationship can, however, go both ways. If you implement SelectBoth, you can derive SelectFirst and SelectSecond from it. In this article, you'll see how to do that for Either.

Given the Church-encoded Either, the implementation of SelectBoth can be achieved in a single expression:

    public static IEither<L1, R1> SelectBoth<L, L1, R, R1>(
        this IEither<L, R> source,
        Func<L, L1> selectLeft,
        Func<R, R1> selectRight)
    {
        return source.Match<IEither<L1, R1>>(
            onLeft: l => new Left<L1, R1>(selectLeft(l)),
            onRight: r => new Right<L1, R1>(selectRight(r)));
    }

Given that the input source is an IEither<L, R> object, there isn't much you can do. That interface only defines a single member, Match, so that's the only method you can call. When you do that, you have to supply the two arguments onLeft and onRight.

The Match method is defined like this:

T Match<T>(Func<L, T> onLeft, Func<R, T> onRight)

Given the desired return type of SelectBoth, you know that T should be IEither<L1, R1>. This means, then, that for onLeft, you must supply a function of the type Func<L, IEither<L1, R1>>. Since a functor is a structure-preserving map, you should translate a left case to a left case, and a right case to a right case. This implies that the concrete return type that matches IEither<L1, R1> for the onLeft argument is Left<L1, R1>.

When you write the function with the type Func<L, IEither<L1, R1>> as a lambda expression, the input argument l has the type L. In order to create a new Left<L1, R1>, however, you need an L1 object. How do you produce an L1 object from an L object? You call selectLeft with l, because selectLeft is a function of the type Func<L, L1>.

You can apply the same line of reasoning to the onRight argument. Write a lambda expression that takes an R object r as input, call selectRight to turn that into an R1 object, and return it wrapped in a new Right<L1, R1> object.

This works as expected:

    > new Left<string, int>("foo").SelectBoth(string.IsNullOrWhiteSpace, i => new DateTime(i))
    Left<bool, DateTime>(false)
    > new Right<string, int>(1337).SelectBoth(string.IsNullOrWhiteSpace, i => new DateTime(i))
    Right<bool, DateTime>([01.01.0001 00:00:00])

Notice that both of the above statements evaluated in C# Interactive use the same projections as input to SelectBoth. Clearly, though, because the inputs are first a Left value, and secondly a Right value, the outputs differ.
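The IEither<L, R>, Left<L, R>, and Right<L, R> types come from an earlier article and aren't repeated here. As a reminder, a minimal sketch of what such a Church-encoded Either could look like follows. Only the Match signature is taken from this article; the private field names and the structural equality overrides are assumptions made for illustration, and the real code base may differ.

```csharp
using System;
using System.Collections.Generic;

// Church-encoded Either: the only member is Match.
public interface IEither<L, R>
{
    T Match<T>(Func<L, T> onLeft, Func<R, T> onRight);
}

public sealed class Left<L, R> : IEither<L, R>
{
    private readonly L left; // hypothetical field name

    public Left(L left)
    {
        this.left = left;
    }

    // A left case always calls onLeft and ignores onRight.
    public T Match<T>(Func<L, T> onLeft, Func<R, T> onRight)
    {
        return onLeft(left);
    }

    // Assumption: structural equality, so that two Left objects
    // with equal contents compare equal.
    public override bool Equals(object obj)
    {
        return obj is Left<L, R> other
            && EqualityComparer<L>.Default.Equals(left, other.left);
    }

    public override int GetHashCode()
    {
        return EqualityComparer<L>.Default.GetHashCode(left);
    }
}

public sealed class Right<L, R> : IEither<L, R>
{
    private readonly R right; // hypothetical field name

    public Right(R right)
    {
        this.right = right;
    }

    // A right case always calls onRight and ignores onLeft.
    public T Match<T>(Func<L, T> onLeft, Func<R, T> onRight)
    {
        return onRight(right);
    }

    // Assumption: structural equality, mirroring Left.
    public override bool Equals(object obj)
    {
        return obj is Right<L, R> other
            && EqualityComparer<R>.Default.Equals(right, other.right);
    }

    public override int GetHashCode()
    {
        return EqualityComparer<R>.Default.GetHashCode(right);
    }
}
```

With definitions like these, two Left<string, int> objects that both contain "foo" are equal, while a Left object never equals a Right object. Some form of value-based equality is presumably what lets a law be expressed as a comparison between two Either objects.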

Mapping the left side #

When you have SelectBoth, you can trivially implement the translations for each dimension in isolation. In the previous article, I called these methods SelectFirst and SelectSecond. In this article, I've chosen to instead name them SelectLeft and SelectRight, but they still correspond to Haskell's first and second Bifunctor functions.

    public static IEither<L1, R> SelectLeft<L, L1, R>(
        this IEither<L, R> source,
        Func<L, L1> selector)
    {
        return source.SelectBoth(selector, r => r);
    }

The method body is literally a one-liner. Just call SelectBoth with selector as the projection for the left side, and the identity function as the projection for the right side. This ensures that if the actual value is a Right<L, R> object, nothing's going to happen. Only if the input is a Left<L, R> object will the projection run:

    > new Left<string, int>("").SelectLeft(string.IsNullOrWhiteSpace)
    Left<bool, int>(true)
    > new Left<string, int>("bar").SelectLeft(string.IsNullOrWhiteSpace)
    Left<bool, int>(false)
    > new Right<string, int>(42).SelectLeft(string.IsNullOrWhiteSpace)
    Right<bool, int>(42)

In the above C# Interactive session, you can see how projecting three different objects using string.IsNullOrWhiteSpace works. When the Left object indeed does contain an empty string, the result is a Left value containing true. When the object contains "bar", however, the result contains false. Furthermore, when the object is a Right value, the mapping has no effect.

Mapping the right side #

Similar to SelectLeft, you can also trivially implement SelectRight:

    public static IEither<L, R1> SelectRight<L, R, R1>(
        this IEither<L, R> source,
        Func<R, R1> selector)
    {
        return source.SelectBoth(l => l, selector);
    }

This is another one-liner calling SelectBoth, with the difference that the identity function l => l is passed as the first argument, instead of as the last. This ensures that only Right values are mapped:

    > new Left<string, int>("baz").SelectRight(i => new DateTime(i))
    Left<string, DateTime>("baz")
    > new Right<string, int>(1_234_567_890).SelectRight(i => new DateTime(i))
    Right<string, DateTime>([01.01.0001 00:02:03])

In the above examples, Right integers are projected into DateTime values, whereas Left strings stay strings.

Identity laws #

Either obeys all the bifunctor laws. While it's formal work to prove that this is the case, you can get an intuition for it via examples. Often, I use a property-based testing library like FsCheck or Hedgehog to demonstrate (not prove) that laws hold, but in this article, I'll keep it simple and only cover each law with a parametrised test.

    private static T Id<T>(T x) => x;

    public static IEnumerable<object[]> BifunctorLawsData
    {
        get
        {
            yield return new[] { new Left<string, int>("foo") };
            yield return new[] { new Left<string, int>("bar") };
            yield return new[] { new Left<string, int>("baz") };
            yield return new[] { new Right<string, int>(42) };
            yield return new[] { new Right<string, int>(1337) };
            yield return new[] { new Right<string, int>(0) };
        }
    }

    [Theory, MemberData(nameof(BifunctorLawsData))]
    public void SelectLeftObeysFirstFunctorLaw(IEither<string, int> e)
    {
        Assert.Equal(e, e.SelectLeft(Id));
    }

This test uses xUnit.net's [Theory] feature to supply a small set of example input values. The input values are defined by the BifunctorLawsData property, since I'll reuse the same values for all the bifunctor law demonstration tests.

The tests also use the identity function implemented as a private function called Id, since C# doesn't come equipped with such a function in the Base Class Library.

For all the IEither<string, int> objects e, the test simply verifies that the original Either e is equal to the Either projected over the first axis with the Id function.

Likewise, the first functor law applies when translating over the second dimension:

    [Theory, MemberData(nameof(BifunctorLawsData))]
    public void SelectRightObeysFirstFunctorLaw(IEither<string, int> e)
    {
        Assert.Equal(e, e.SelectRight(Id));
    }

This is the same test as the previous test, with the only exception that it calls SelectRight instead of SelectLeft.

Both SelectLeft and SelectRight are implemented by SelectBoth, so the real test is whether this method obeys the identity law:

    [Theory, MemberData(nameof(BifunctorLawsData))]
    public void SelectBothObeysIdentityLaw(IEither<string, int> e)
    {
        Assert.Equal(e, e.SelectBoth(Id, Id));
    }

Projecting over both dimensions with the identity function does, indeed, return an object equal to the input object.

Consistency law #

In general, it shouldn't matter whether you map with SelectBoth or a combination of SelectLeft and SelectRight:

    [Theory, MemberData(nameof(BifunctorLawsData))]
    public void ConsistencyLawHolds(IEither<string, int> e)
    {
        bool f(string s) => string.IsNullOrWhiteSpace(s);
        DateTime g(int i) => new DateTime(i);

        Assert.Equal(e.SelectBoth(f, g), e.SelectRight(g).SelectLeft(f));
        Assert.Equal(
            e.SelectLeft(f).SelectRight(g),
            e.SelectRight(g).SelectLeft(f));
    }

This example creates two local functions f and g. The first function, f, just delegates to string.IsNullOrWhiteSpace, although I want to stress that this is just an example. The law should hold for any two (pure) functions. The second function, g, creates a new DateTime object from an integer, using one of the DateTime constructor overloads.

The test then verifies that you get the same result from calling SelectBoth as when you call SelectLeft followed by SelectRight, or the other way around.

Composition laws #

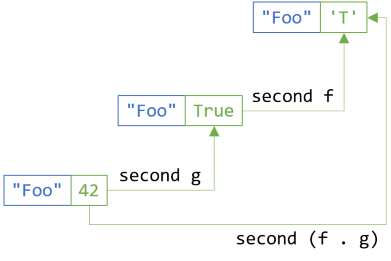

The composition laws insist that you can compose functions, or translations, and that again, the choice to do one or the other doesn't matter. Along each of the axes, it's just the second functor law applied. This parametrised test demonstrates that the law holds for SelectLeft:

    [Theory, MemberData(nameof(BifunctorLawsData))]
    public void SecondFunctorLawHoldsForSelectLeft(IEither<string, int> e)
    {
        bool f(int x) => x % 2 == 0;
        int g(string s) => s.Length;

        Assert.Equal(e.SelectLeft(x => f(g(x))), e.SelectLeft(g).SelectLeft(f));
    }

Here, f is the even function, whereas g is a local function that returns the length of a string. The second functor law states that mapping f(g(x)) in a single step is equivalent to first mapping over g and then mapping the result of that using f.

The same law applies if you fix the first dimension and translate over the second:

    [Theory, MemberData(nameof(BifunctorLawsData))]
    public void SecondFunctorLawHoldsForSelectRight(IEither<string, int> e)
    {
        char f(bool b) => b ? 'T' : 'F';
        bool g(int i) => i % 2 == 0;

        Assert.Equal(e.SelectRight(x => f(g(x))), e.SelectRight(g).SelectRight(f));
    }

Here, f is a local function that returns 'T' for true and 'F' for false, and g is a local function that, as you've seen before, determines whether a number is even. Again, the test demonstrates that the output is the same whether you map over an intermediary step, or whether you map using only a single step.

This generalises to the composition law for SelectBoth:

    [Theory, MemberData(nameof(BifunctorLawsData))]
    public void SelectBothCompositionLawHolds(IEither<string, int> e)
    {
        bool f(int x) => x % 2 == 0;
        int g(string s) => s.Length;
        char h(bool b) => b ? 'T' : 'F';
        bool i(int x) => x % 2 == 0;

        Assert.Equal(
            e.SelectBoth(x => f(g(x)), y => h(i(y))),
            e.SelectBoth(g, i).SelectBoth(f, h));
    }

Again, whether you translate in one or two steps shouldn't affect the outcome.

As all of these tests demonstrate, the bifunctor laws hold for Either. The tests only showcase six examples for either a string or an integer, but I hope they give you an intuition for how any Either object is a bifunctor. After all, the SelectLeft, SelectRight, and SelectBoth methods are all generic, and they behave the same for all generic type arguments.

Summary #

Either objects are bifunctors. You can translate the first and second dimension of an Either object independently of each other, and the bifunctor laws hold for any pure translation, no matter how you compose the projections.

As always, there can be performance differences between the various compositions, but the outputs will be the same regardless of composition.

A functor, and by extension, a bifunctor, is a structure-preserving map. This means that any projection preserves the structure of the underlying container. For Either objects, it means that left objects remain left objects, and right objects remain right objects, even if the contained values change. Either is characterised by containing exactly one value, but it can be either a left value or a right value. No matter how you translate it, it still contains only a single value - left or right.

The other common bifunctor, pair, is complementary. Not only does it also have two dimensions, but a pair always contains both values at once.

Next: Rose tree bifunctor.

Comments

I feel like the concepts of functor and bifunctor were used somewhat interchangeably in this post. Can we clarify this relationship?

To help us with this, consider type variance. The generic type Func<A> is covariant, but more specifically, it is covariant on A. That additional prepositional phrase is often omitted because it can be inferred. In contrast, the generic type Func<A, B> is both covariant and contravariant but (of course) not on the same type parameter. It is covariant in B and contravariant in A.

I feel like saying that a generic type with two type parameters is a (bi)functor also needs an additional prepositional phrase. Like, Either<L, R> is a bifunctor in L and R, so it is also a functor in L and a functor in R.

Does this seem like a clearer way to talk about a specific type being both a bifunctor and a functor?

Tyson, thank you for writing. I find no fault with what you wrote. Is it clearer? I don't know.

One thing that's surprised me throughout this endeavour is exactly what does or doesn't confuse readers. This, I can't predict.

A functor is, by its definition, assumed to be covariant. Contravariant functors also exist, but they're explicitly named contravariant functors to distinguish them from standard functors.

Ultimately, co- or contravariance of generic type arguments is (I think) insufficient to identify a type as a functor. Whether or not something is a functor is determined by whether or not it obeys the functor laws. Can we guarantee that all types with a covariant type argument will obey the functor laws?

I wasn't trying to discuss the relationship between functors and type variance. I just brought up type variance as an example in programming where I think adding additional prepositional phrases to statements can clarify things.

Tuple bifunctor

A Pair (a two-tuple) forms a bifunctor. An article for object-oriented programmers.

This article is an instalment in an article series about bifunctors. In the previous overview, you learned about the general concept of a bifunctor. In practice, there are two useful bifunctor instances: pairs (two-tuples) and Either. In this article, you'll see how a pair is a bifunctor, and in the next article, you'll see how Either fits the same abstraction.

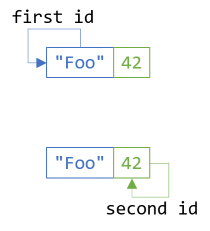

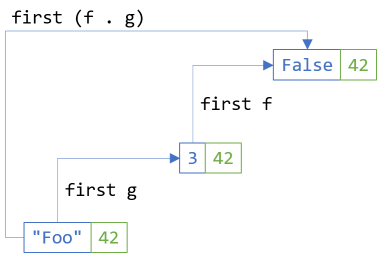

Tuple as a functor #

You can treat a normal pair (two-tuple) as a functor by mapping one of the elements, while keeping the other generic type fixed. In Haskell, when you have types with multiple type arguments, you often 'fix' the types from the left, leaving the right-most type free to vary. For a pair, which in C# has the type Tuple<T, U>, this means that tuples are functors if we keep T fixed and enable translation of the second element from U1 to U2.

This is easy to implement with a standard Select extension method:

    public static Tuple<T, U2> Select<T, U1, U2>(
        this Tuple<T, U1> source,
        Func<U1, U2> selector)
    {
        return Tuple.Create(source.Item1, selector(source.Item2));
    }

You simply return a new tuple by carrying source.Item1 over without modification, while on the other hand calling selector with source.Item2. Here's a simple example, which also highlights that C# understands functors:

    var t = Tuple.Create("foo", 42);
    var actual = from i in t select i % 2 == 0;

Here, actual is a Tuple<string, bool> with the values "foo" and true. Inside the query expression, i is an int, and the select expression returns a bool value indicating whether the number is even or odd. Notice that the string in the first element disappears inside the query expression. It's still there, but the code inside the query expression can't see "foo".
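To get an intuition for why this Select method makes the tuple a lawful functor, the two functor laws can be checked against a concrete value. The following is only a sketch: the class names TupleFunctor and TupleFunctorLawsDemo, the two helper methods, and the functions f and g are hypothetical choices made for illustration. It relies on the fact that Tuple<T1, T2> compares its elements by value in Equals.

```csharp
using System;

public static class TupleFunctor
{
    // The same Select method as shown above.
    public static Tuple<T, U2> Select<T, U1, U2>(
        this Tuple<T, U1> source,
        Func<U1, U2> selector)
    {
        return Tuple.Create(source.Item1, selector(source.Item2));
    }
}

// Hypothetical helper class, only for demonstration.
public static class TupleFunctorLawsDemo
{
    // First functor law: mapping the identity function changes nothing.
    public static bool IdentityLawHolds(Tuple<string, int> t)
    {
        return t.Equals(t.Select(x => x));
    }

    // Second functor law: mapping a composition in one step gives the
    // same result as mapping each function in turn.
    public static bool CompositionLawHolds(Tuple<string, int> t)
    {
        Func<int, bool> g = i => i % 2 == 0;
        Func<bool, char> f = b => b ? 'T' : 'F';

        return t.Select(x => f(g(x))).Equals(t.Select(g).Select(f));
    }
}
```

Calling either method with, say, Tuple.Create("foo", 42) returns true. A handful of examples like this demonstrates the laws rather than proving them.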

Mapping the first element #

There's no technical reason why the mapping has to be over the second element; it's just how Haskell does it by convention. There are other, more philosophical reasons for that convention, but in the end, they boil down to the ultimately arbitrary cultural choice of reading from left to right (in Western scripts).

You can translate the first element of a tuple as easily:

    public static Tuple<T2, U> SelectFirst<T1, T2, U>(
        this Tuple<T1, U> source,
        Func<T1, T2> selector)
    {
        return Tuple.Create(selector(source.Item1), source.Item2);
    }