ploeh blog danish software design

Dependency rejection

In functional programming, the notion of dependencies must be rejected. Instead, applications should be composed from pure and impure functions.

This is the third article in a small article series called from dependency injection to dependency rejection. In the previous article in the series, you learned that dependency injection can't be functional, because it makes everything impure. In this article, you'll see what to do instead.

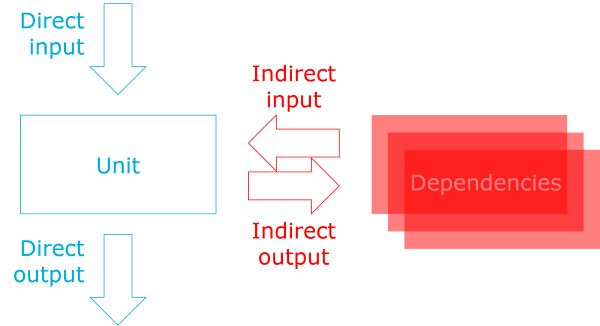

Indirect input and output #

One of the first concepts you learned when you learned to program was that units of operation (functions, methods, procedures) take input and produce output. Input is in the form of input parameters, and output is in the form of return values. (Sometimes, though, a method returns nothing, but we know from category theory that nothing is also a value (called unit).)

In addition to such input and output, a unit with dependencies also takes indirect input, and produces indirect output:

When a unit queries a dependency for data, the data returned from the dependency is indirect input. In the restaurant reservation example used in this article series, when tryAccept calls readReservations, the returned reservations are indirect input.

Likewise, when a unit invokes a dependency, all arguments passed to that dependency constitute indirect output. In the example, when tryAccept calls createReservation, the reservation value it uses as input argument to that function call becomes output. The intent, in this case, is to save the reservation in a database.

From indirect output to direct output #

Instead of producing indirect output, you can refactor functions to produce direct output.

Such a refactoring is often problematic in mainstream object-oriented languages like C# and Java, because you wish to control the circumstances in which the indirect output must be produced. Indirect output often implies side-effects, but perhaps the side-effect must only happen when certain conditions are fulfilled. In the restaurant reservation example, the desired side-effect is to add a reservation to a database, but this must only happen when the restaurant has sufficient remaining capacity to serve the requested number of people. Since languages like C# and Java are statement-based, it can be difficult to separate the decision from the action.

In expression-based languages like F# and Haskell, it's trivial to decouple decisions from effects.

In the previous article, you saw a version of tryAccept with this signature:

    // int -> (DateTimeOffset -> Reservation list) -> (Reservation -> int) -> Reservation
    // -> int option

The second function argument, with the type Reservation -> int, produces indirect output. The Reservation value is the output. The function even violates Command Query Separation and returns the database ID of the added reservation, so that's additional indirect input. The overall function returns int option: the database ID if the reservation was added, and None if it wasn't.

Refactoring the indirect output to direct output is easy, then: just remove the createReservation function and return the Reservation value instead:

    // int -> (DateTimeOffset -> Reservation list) -> Reservation -> Reservation option
    let tryAccept capacity readReservations reservation =
        let reservedSeats =
            readReservations reservation.Date |> List.sumBy (fun x -> x.Quantity)
        if reservedSeats + reservation.Quantity <= capacity
        then { reservation with IsAccepted = true } |> Some
        else None

Notice that this refactored version of tryAccept returns a Reservation option value. The implication is that the reservation was accepted if the return value is a Some case, and rejected if the value is None. The decision is embedded in the value, but decoupled from the side-effect of writing to the database.

This function clearly never writes to the database, so at the boundary of your application, you'll have to connect the decision to the effect. To keep the example consistent with the previous article, you can do this in a tryAcceptComposition function, like this:

    // Reservation -> int option
    let tryAcceptComposition reservation =
        reservation
        |> tryAccept 10 (DB.readReservations connectionString)
        |> Option.map (DB.createReservation connectionString)

Notice that the type of tryAcceptComposition remains Reservation -> int option. This is a true refactoring. The overall API remains the same, as does the behaviour. The reservation is added to the database only if there's sufficient remaining capacity, and in that case, the ID of the reservation is returned.
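To watch Option.map connect the decision to the effect, you can swap the database for an in-memory list. The following is only a sketch: the FakeDB module, its mutable store, the 'ID' it returns, and the sample values are assumptions made for illustration, not part of the article's code base.

```fsharp
open System

type Reservation = { Date : DateTimeOffset; Quantity : int; IsAccepted : bool }

// Hypothetical in-memory stand-in for the article's DB module.
module FakeDB =
    let store = ResizeArray<Reservation> ()

    // DateTimeOffset -> Reservation list
    let readReservations (date : DateTimeOffset) =
        store |> Seq.filter (fun r -> r.Date = date) |> List.ofSeq

    // Reservation -> int (the new item count serves as a pretend ID)
    let createReservation (r : Reservation) =
        store.Add r
        store.Count

// Same shape as the refactored tryAccept: it returns the decision as a value.
let tryAccept capacity readReservations reservation =
    let reservedSeats =
        readReservations reservation.Date |> List.sumBy (fun x -> x.Quantity)
    if reservedSeats + reservation.Quantity <= capacity
    then { reservation with IsAccepted = true } |> Some
    else None

// Reservation -> int option
let tryAcceptComposition reservation =
    reservation
    |> tryAccept 10 FakeDB.readReservations
    |> Option.map FakeDB.createReservation

let date = DateTimeOffset (2017, 2, 2, 19, 0, 0, TimeSpan.Zero)
let request quantity = { Date = date; Quantity = quantity; IsAccepted = false }

let first  = tryAcceptComposition (request 7) // fits, so the write happens
let second = tryAcceptComposition (request 4) // 7 + 4 > 10, so nothing is written
```

In this sketch, the write function only runs when tryAccept returns a Some case; a None value passes through Option.map untouched.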

From indirect input to direct input #

Just as you can refactor from indirect output to direct output, you can refactor from indirect input to direct input.

Again, in statement-based languages like C# and Java, this may be problematic, because you may wish to defer a query, or base it on a decision inside the unit. In expression-based languages you can decouple decisions from effects, and deferred execution can always be done by lazy evaluation, if that's required. In the case of the current example, however, the refactoring is easy:
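If a query should only run when the decision actually needs the data, one option in F# is to pass a Lazy value. The following sketch shows how that might look; tryAcceptLazy, the early exit for parties larger than the entire capacity, and the queryCount counter are all hypothetical, and only exist to make the deferral observable. Keep in mind that forcing a lazy value that wraps a database query is still an impure act in F#; the sketch demonstrates deferral, not purity.

```fsharp
open System

type Reservation = { Date : DateTimeOffset; Quantity : int; IsAccepted : bool }

// Counts how many times the pretend query actually runs.
let mutable queryCount = 0

// Hypothetical query; a real one would go to a database.
let readReservations (date : DateTimeOffset) : Reservation list =
    queryCount <- queryCount + 1
    [ { Date = date; Quantity = 6; IsAccepted = true } ]

// int -> Lazy<Reservation list> -> Reservation -> Reservation option
let tryAcceptLazy capacity (reservations : Lazy<Reservation list>) reservation =
    if reservation.Quantity > capacity then
        None // decided without forcing the lazy value
    else
        let reservedSeats = reservations.Value |> List.sumBy (fun x -> x.Quantity)
        if reservedSeats + reservation.Quantity <= capacity
        then Some { reservation with IsAccepted = true }
        else None

let date = DateTimeOffset (2017, 2, 2, 19, 0, 0, TimeSpan.Zero)
let request quantity = { Date = date; Quantity = quantity; IsAccepted = false }

let tooLarge = tryAcceptLazy 10 (lazy (readReservations date)) (request 12)
let countAfterTooLarge = queryCount // the query never ran

let fits = tryAcceptLazy 10 (lazy (readReservations date)) (request 3)
let countAfterFits = queryCount     // the query ran once
```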

    // int -> Reservation list -> Reservation -> Reservation option
    let tryAccept capacity reservations reservation =
        let reservedSeats = reservations |> List.sumBy (fun x -> x.Quantity)
        if reservedSeats + reservation.Quantity <= capacity
        then { reservation with IsAccepted = true } |> Some
        else None

Instead of calling a (potentially impure) function, this version of tryAccept takes a list of existing reservations as input. It still sums over all the quantities, and the rest of the code is the same as before.
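One consequence is that you can exercise the function with plain values, without any stand-in for a database. Here's a small sketch; the Reservation record is an assumed minimal version of the article's type, and the dates and quantities are made up.

```fsharp
open System

// Assumed minimal version of the Reservation record.
type Reservation = { Date : DateTimeOffset; Quantity : int; IsAccepted : bool }

// Same shape as the pure tryAccept that takes a list as direct input.
let tryAccept capacity reservations reservation =
    let reservedSeats = reservations |> List.sumBy (fun x -> x.Quantity)
    if reservedSeats + reservation.Quantity <= capacity
    then { reservation with IsAccepted = true } |> Some
    else None

let date = DateTimeOffset (2017, 2, 2, 19, 0, 0, TimeSpan.Zero)
let booking quantity = { Date = date; Quantity = quantity; IsAccepted = true }
let request = { Date = date; Quantity = 4; IsAccepted = false }

let accepted = tryAccept 10 [ booking 2; booking 3 ] request // 5 + 4 <= 10
let rejected = tryAccept 10 [ booking 5; booking 3 ] request // 8 + 4 > 10
let boundary = tryAccept 10 [ booking 6 ] request            // 6 + 4 = 10
```

Since the function only depends on its arguments, calling it again with the same values always gives the same result.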

Obviously, the list of existing reservations must come from somewhere, like a database, so tryAcceptComposition will still have to take care of that:

    // ('a -> 'b -> 'c) -> 'b -> 'a -> 'c
    let flip f x y = f y x

    // Reservation -> int option
    let tryAcceptComposition reservation =
        reservation.Date
        |> DB.readReservations connectionString
        |> flip (tryAccept 10) reservation
        |> Option.map (DB.createReservation connectionString)

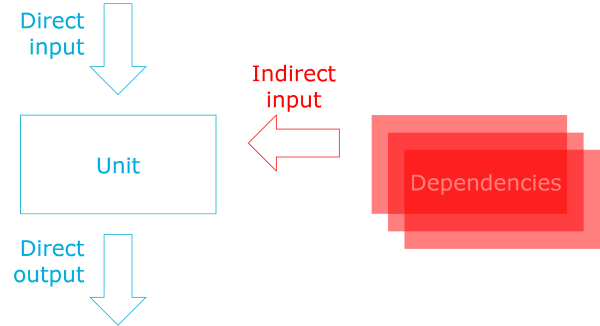

The type and behaviour of this composition is still the same as before, but the data flow is different. First, the function queries the database, which is an impure operation. Then, it pipes the resulting list of reservations to tryAccept, which is now a pure function. It returns a Reservation option that's finally mapped to another impure operation, which writes the reservation to the database if the reservation was accepted.

You'll notice that I also added a flip function in order to make the composition more concise, but I could also have used a lambda expression when invoking tryAccept. The flip function is a part of Haskell's standard library, but isn't in F#'s core library. It's not crucial to the example, though.
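For comparison, here's a sketch of both variants side by side. The readReservations and createReservation functions below are hypothetical stubs that stand in for the partially applied DB functions, and the names withFlip and withLambda are only for illustration.

```fsharp
open System

type Reservation = { Date : DateTimeOffset; Quantity : int; IsAccepted : bool }

let tryAccept capacity reservations reservation =
    let reservedSeats = reservations |> List.sumBy (fun x -> x.Quantity)
    if reservedSeats + reservation.Quantity <= capacity
    then { reservation with IsAccepted = true } |> Some
    else None

// ('a -> 'b -> 'c) -> 'b -> 'a -> 'c
let flip f x y = f y x

// Hypothetical stubs: eight seats are already taken, and every write returns 42.
let readReservations (date : DateTimeOffset) : Reservation list =
    [ { Date = date; Quantity = 8; IsAccepted = true } ]
let createReservation (_ : Reservation) : int = 42

let withFlip reservation =
    reservation.Date
    |> readReservations
    |> flip (tryAccept 10) reservation
    |> Option.map createReservation

let withLambda reservation =
    reservation.Date
    |> readReservations
    |> (fun reservations -> tryAccept 10 reservations reservation)
    |> Option.map createReservation

let date = DateTimeOffset (2017, 2, 2, 19, 0, 0, TimeSpan.Zero)
let small = { Date = date; Quantity = 2; IsAccepted = false }
let large = { Date = date; Quantity = 3; IsAccepted = false }
```

The parentheses around the lambda expression matter: without them, the lambda body would extend to the end of the pipeline and swallow the Option.map step.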

Evaluation #

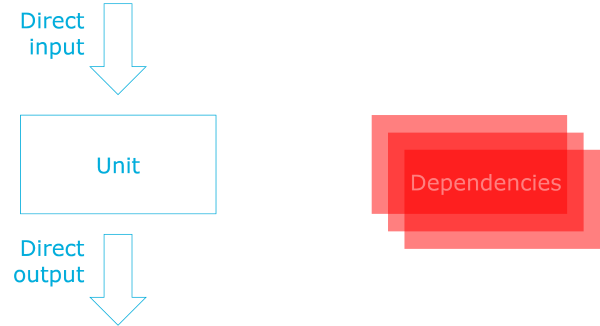

Did you notice that in the previous diagram, above, all arrows between the unit and its dependencies were gone? This means that the unit no longer has any dependencies:

Dependencies are, by their nature, impure, and since pure functions can't call impure functions, functional programming must reject the notion of dependencies. Pure functions can't depend on impure functions.

Instead, pure functions must take direct input and produce direct output, and the impure boundary of an application must compose impure and pure functions together in order to achieve the desired behaviour.

In the previous article, you saw how Haskell can be used to evaluate whether or not an implementation is functional. You can port the above F# code to Haskell to verify that this is the case.

    tryAccept :: Int -> [Reservation] -> Reservation -> Maybe Reservation
    tryAccept capacity reservations reservation =
      let reservedSeats = sum $ map quantity reservations
      in  if reservedSeats + quantity reservation <= capacity
          then Just $ reservation { isAccepted = True }
          else Nothing

This version of tryAccept is pure, and compiles, but as you learned in the previous article, that's not the crucial question. The question is whether the composition compiles.

    tryAcceptComposition :: Reservation -> IO (Maybe Int)
    tryAcceptComposition reservation = runMaybeT $
      liftIO (DB.readReservations connectionString $ date reservation)
      >>= MaybeT . return . flip (tryAccept 10) reservation
      >>= liftIO . DB.createReservation connectionString

This version of tryAcceptComposition compiles, and works as desired. The code exhibits a common pattern for Haskell: First, gather data from impure sources. Second, pass pure data to pure functions. Third, take the pure output from the pure functions, and do something impure with it.

It's like a sandwich, with the best parts in the middle, and some necessary stuff surrounding it.

Summary #

Dependencies are, by nature, impure. They're either non-deterministic, have side-effects, or both. Pure functions can't call impure functions (because that would make them impure as well), so pure functions can't have dependencies. Functional programming must reject the notion of dependencies.

Obviously, software is only useful with impure behaviour, so instead of injecting dependencies, functional programs must be composed in impure contexts. Impure functions can call pure functions, so at the boundary, an application must gather impure data, and use it to call pure functions. This automatically leads to the ports and adapters architecture.

This style of programming is surprisingly often possible, but it's not a universal solution; other alternatives exist.

Partial application is dependency injection

The equivalent of dependency injection in F# is partial function application, but it isn't functional.

This is the second article in a small article series called from dependency injection to dependency rejection.

People often ask me how to do dependency injection in F#. That's only natural, since I wrote Dependency Injection in .NET some years ago, and also since I've increasingly focused my energy on F# and other functional programming languages.

Over the years, I've seen other F# experts respond to that question, and often, the answer is that partial function application is the F# way to do dependency injection. For some years, I believed that as well. It turns out to be true in one sense, but incorrect in another. Partial application is equivalent to dependency injection. It's just not a functional solution to dealing with dependencies.

(To be as clear as I can be: I'm not claiming that partial application isn't functional. What I claim is that partial application used for dependency injection isn't functional.)

Attempted dependency injection using functions #

Returning to the example from the previous article, you could try to rewrite MaîtreD.TryAccept as a function:

    // int -> (DateTimeOffset -> Reservation list) -> (Reservation -> int) -> Reservation
    // -> int option
    let tryAccept capacity readReservations createReservation reservation =
        let reservedSeats =
            readReservations reservation.Date |> List.sumBy (fun x -> x.Quantity)
        if reservedSeats + reservation.Quantity <= capacity
        then createReservation { reservation with IsAccepted = true } |> Some
        else None

You could imagine that this tryAccept function is part of a module called MaîtreD, just to keep the examples as equivalent as possible.

The function takes four arguments. The first is the capacity of the restaurant in question; a primitive integer. The next two arguments, readReservations and createReservation, fill the role of the injected IReservationsRepository in the previous article. In the object-oriented example, the TryAccept method used two methods on the repository: ReadReservations and Create. Instead of using an interface, in the F# function, I make the function take two independent functions. They have (almost) the same types as their C# counterparts.

The first three arguments correspond to the injected dependencies in the previous MaîtreD class. The fourth argument is a Reservation value, which corresponds to the input to the previous TryAccept method.

Instead of returning a nullable integer, this F# version returns an int option.

The implementation is also equivalent to the C# example: Read the relevant reservations from the database using the readReservations function argument, and sum over their quantities. Based on the number of already reserved seats, decide whether or not to accept the reservation. If you can accept the reservation, set IsAccepted to true, call the createReservation function argument, and pipe the returned ID (integer) to Some. If you can't accept the reservation, then return None.

Notice that the first three arguments are 'dependencies', whereas the last argument is the 'actual input', if you will. This means that you can use partial function application to compose this function.

Application #

If you recall the definition of the previous IMaîtreD interface, the TryAccept method was defined like this (C# code snippet):

int? TryAccept(Reservation reservation);

You could attempt to define a similar function with the type Reservation -> int option. Normally, you'd want to do this closer to the boundary of the application, but the following example demonstrates how to 'inject' real database operations into the function.

Imagine that you have a DB module with these functions:

    module DB =
        // string -> DateTimeOffset -> Reservation list
        let readReservations connectionString date = // ..
        // string -> Reservation -> int
        let createReservation connectionString reservation = // ..

The readReservations function takes a connection string and a date as arguments, and returns a list of reservations for that date. The createReservation function also takes a connection string, as well as a reservation. When invoked, it creates a new record for the reservation and returns the ID of the newly created row. (This sort of API violates CQS, so you should consider alternatives.)

If you partially apply these functions with a valid connection string, both have the type desired for their roles in tryAccept. This means that you can create a function from these elements:

    // Reservation -> int option
    let tryAcceptComposition =
        let read   = DB.readReservations connectionString
        let create = DB.createReservation connectionString
        tryAccept 10 read create

Notice how tryAccept itself is partially applied. Only the arguments corresponding to the C# dependencies are passed to it, so the return value is a function that 'waits' for the last argument: the reservation. As I've attempted to indicate by the code comment above the function, it has the desired type of Reservation -> int option.

Equivalence #

Partial application used like this is equivalent to dependency injection. To see how, consider the generated Intermediate Language (IL).

F# is a .NET language, so it compiles to IL. You can decompile that IL to C# to get a sense of what's going on. If you do that with the above tryAcceptComposition, you get something like this:

    internal class tryAcceptComposition@17 : FSharpFunc<Reservation, FSharpOption<int>>
    {
        public int capacity;
        public FSharpFunc<Reservation, int> createReservation;
        public FSharpFunc<DateTimeOffset, FSharpList<Reservation>> readReservations;

        internal tryAcceptComposition@17(
            int capacity,
            FSharpFunc<DateTimeOffset, FSharpList<Reservation>> readReservations,
            FSharpFunc<Reservation, int> createReservation)
        {
            this.capacity = capacity;
            this.readReservations = readReservations;
            this.createReservation = createReservation;
        }

        public override FSharpOption<int> Invoke(Reservation reservation)
        {
            return MaîtreD.tryAccept<int>(
                this.capacity,
                this.readReservations,
                this.createReservation,
                reservation);
        }
    }

I've cleaned it up a bit, mostly by removing all attributes from the various elements. Notice how this is a class, with class fields, and a constructor that takes values for the fields and assigns them. It's constructor injection!
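You can also see the correspondence without decompiling anything, by writing the class by hand in F# and comparing it to the partially applied function. This is only a sketch: the InjectedMaitreD class and the read and create stubs are hypothetical, and they aren't what the compiler generates.

```fsharp
open System

type Reservation = { Date : DateTimeOffset; Quantity : int; IsAccepted : bool }

let tryAccept capacity readReservations createReservation reservation =
    let reservedSeats =
        readReservations reservation.Date |> List.sumBy (fun x -> x.Quantity)
    if reservedSeats + reservation.Quantity <= capacity
    then createReservation { reservation with IsAccepted = true } |> Some
    else None

// Hand-written class using constructor injection (hypothetical, for comparison).
type InjectedMaitreD (capacity : int,
                      readReservations : DateTimeOffset -> Reservation list,
                      createReservation : Reservation -> int) =
    member this.TryAccept (reservation : Reservation) : int option =
        tryAccept capacity readReservations createReservation reservation

// Hypothetical stubs standing in for real database operations.
let read (_ : DateTimeOffset) : Reservation list = []
let create (_ : Reservation) : int = 7

// Dependencies captured by a constructor...
let viaObject = InjectedMaitreD (10, read, create)
// ...and the same dependencies captured by partial application.
let viaPartialApplication = tryAccept 10 read create

let request =
    { Date = DateTimeOffset (2017, 2, 2, 19, 0, 0, TimeSpan.Zero)
      Quantity = 4
      IsAccepted = false }
```

Both viaObject.TryAccept and viaPartialApplication take a Reservation and return an int option, and in both cases the three 'dependencies' were supplied up front and captured.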

Partial application is dependency injection.

It compiles, works as expected, but is it functional?

Evaluation #

People sometimes ask me: How do I know whether my F# code is functional?

I sometimes wonder about that myself, but unfortunately, as nice a language as F# is, it doesn't offer much help in that regard. Its emphasis is on functional programming, but it allows mutation, object-oriented programming, and even procedural programming. It's a friendly and forgiving language. (This also makes it a great 'beginner' functional language, because you can learn functional concepts piecemeal.)

Haskell, on the other hand, is a strictly functional language. In Haskell, you can only write your code in the functional way.

Fortunately, F# and Haskell are similar enough that it's easy to port F# code to Haskell, as long as the F# code already is 'sufficiently functional'. In order to evaluate if my F# code is properly functional, I sometimes port it to Haskell. If I can get it to compile and run in Haskell, I take that as confirmation that my code is functional.

I've previously shown an example similar to this one, but I'll repeat the experiment here. Will porting tryAccept and tryAcceptComposition to Haskell work?

It's easy to port tryAccept:

    tryAccept :: Int -> (ZonedTime -> [Reservation]) -> (Reservation -> Int) -> Reservation
                 -> Maybe Int
    tryAccept capacity readReservations createReservation reservation =
      let reservedSeats = sum $ map quantity $ readReservations $ date reservation
      in  if reservedSeats + quantity reservation <= capacity
          then Just $ createReservation $ reservation { isAccepted = True }
          else Nothing

Clearly, there are differences, but I'm sure that you can also see the similarities. The most important feature of this function is that it's pure. All Haskell functions are pure by default, unless explicitly declared to be impure, and that's not the case here. This function is pure, and so are both readReservations and createReservation.

The Haskell version of tryAccept compiles, but what about tryAcceptComposition?

Like the F# code, the experiment is to see if it's possible to 'inject' functions that actually operate against a database. Equivalent to the F# example, imagine that you have this DB module:

    readReservations :: ConnectionString -> ZonedTime -> IO [Reservation]
    readReservations connectionString date = -- ..

    createReservation :: ConnectionString -> Reservation -> IO Int
    createReservation connectionString reservation = -- ..

Database operations are, by definition, impure, and Haskell admirably models that with the type system. Notice how both functions return IO values.

If you partially apply both functions with a valid connection string, the IO context remains. The type of DB.readReservations connectionString is ZonedTime -> IO [Reservation], and the type of DB.createReservation connectionString is Reservation -> IO Int. You can try to pass them to tryAccept, but the types don't match:

    tryAcceptComposition :: Reservation -> IO (Maybe Int)
    tryAcceptComposition reservation =
      let read   = DB.readReservations connectionString
          create = DB.createReservation connectionString
      in  tryAccept 10 read create reservation

This doesn't compile.

It doesn't compile, because the database operations are impure, and tryAccept wants pure functions.

In short, partial application used for dependency injection isn't functional.

Summary #

Partial application in F# can be used to achieve a result equivalent to dependency injection. It compiles and works as expected, but it's not functional. The reason it's not functional is that (most) dependencies are, by their very nature, impure. They're either non-deterministic, have side-effects, or both, and that's often the underlying reason that they are factored into dependencies in the first place.

Pure functions, however, can't call impure functions. If they could, they would become impure themselves. This rule is enforced by Haskell, but not by F#.

When you inject impure operations into an F# function, that function becomes impure as well. Dependency injection makes everything impure, which explains why it isn't functional.
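To make that concrete, consider a sketch in which the injected function mutates a counter. The createReservation and readReservations functions below are hypothetical stand-ins for database operations; the mutable nextId plays the role of a database-generated ID.

```fsharp
open System

type Reservation = { Date : DateTimeOffset; Quantity : int; IsAccepted : bool }

let tryAccept capacity readReservations createReservation reservation =
    let reservedSeats =
        readReservations reservation.Date |> List.sumBy (fun x -> x.Quantity)
    if reservedSeats + reservation.Quantity <= capacity
    then createReservation { reservation with IsAccepted = true } |> Some
    else None

// Hypothetical impure dependency: every call changes state and returns a new ID.
let mutable nextId = 0
let createReservation (_ : Reservation) : int =
    nextId <- nextId + 1
    nextId

// Hypothetical query stub that always finds an empty restaurant.
let readReservations (_ : DateTimeOffset) : Reservation list = []

let request =
    { Date = DateTimeOffset (2017, 2, 2, 19, 0, 0, TimeSpan.Zero)
      Quantity = 2
      IsAccepted = false }

// Same arguments, different results: tryAccept has become impure.
let firstCall  = tryAccept 10 readReservations createReservation request
let secondCall = tryAccept 10 readReservations createReservation request
```

The F# compiler accepts this without complaint, even though two calls with identical arguments return different values.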

Functional programming solves the problem of decoupling (side) effects from program logic another way. That's the topic of the next article.

Next: Dependency rejection.

Comments

A couple of questions: If you're porting your code from F# to Haskell and back into F#, why not just use Haskell in the first place?

Also, why would you want to mark a function as having side effects in the first place?

Thanks

Kurren, thank you for writing. Why not use Haskell in the first place? In some situations, if that's an option, I'd go for it. In most of my professional work, however, it's not. A bit of background is required, I think. I've spent most of my professional career working with Microsoft technologies. Most of my clients use .NET. The majority of my clients use C#, but some are interested in adopting functional programming. F# is a great bridge to functional programming, because it integrates so well with existing C# code.

Perhaps most important is that while these organisations learn F# as a new programming language, and a new paradigm, all other dimensions of software development remain the same. You can use familiar libraries and frameworks, you can use the same Continuous Integration and build tools as you've been used to, you can deploy like you always do, and you can operate and monitor your software like before.

If you start using Haskell, not only will developers have to learn an entirely new ecosystem, but if you have a separate operations team, they would have to be on board with that as well.

If you can't get the entire organisation to accept Haskell-based software, then F# is a great choice for a .NET shop. Developers will obviously know the difference, but the rest of the organisation can be oblivious to the choice of language.

When it comes to side-effects, that's one of the main motivations behind pure code in the first place. A major source of bugs is that often, programs have unintended side-effects. It's easy to introduce defects in a code base when side-effects are uncontrolled. That's the case for languages like C# and Java, and, unfortunately, F# as well. When you look at a method or function, there's no easy way to determine whether it has side-effects. In other words: all methods or functions could have side effects. (Also: all methods could return null.)

This slows you down when you work on an existing code base, because there's only one way to determine if side-effects or non-deterministic behaviour are part of the code you're about to call: you have to read it all.

On the other hand, if you can tell, just by looking at a function's type, whether or not it has side-effects, you can save yourself a lot of time. By definition, pure functions have no side-effects. You don't need to read the code in order to detect that.

In Haskell, this is guaranteed. In F#, you can design your system that way, but some discipline is required in order to uphold such a guarantee.

Dependency injection is passing an argument

Is dependency injection really just passing an argument? A brief review.

This is the first article in a small article series called from dependency injection to dependency rejection.

In a talk at the 2012 Northeast Scala Symposium, Rúnar Bjarnason casually remarked that dependency injection is "really just a pretentious way to say 'taking an argument'". Given that I've written a 500+ pages book about dependency injection, you might expect me to disagree with that. Yet, there's some truth to that statement, although it's not quite as simple as that.

In this article, I'll show you some simple examples and explain why, on the one hand, Rúnar Bjarnason is right, but also, on the other hand, why there's a bit more to it.

Restaurant reservation example #

Like the other articles in this series, the example scenario is on-line restaurant reservation. Imagine that you've been asked to develop an HTTP-based API that accepts JSON documents containing restaurant reservations. Furthermore, assume that you're using ASP.NET Web API with C# for the job, and that you're aspiring to use domain-driven design.

In order to handle the incoming POST request, you could write an action method like this:

    public IHttpActionResult Post(ReservationRequestDto dto)
    {
        var validationMsg = validator.Validate(dto);
        if (validationMsg != "")
            return this.BadRequest(validationMsg);

        var r = mapper.Map(dto);
        var id = maîtreD.TryAccept(r);
        if (id == null)
            return this.StatusCode(HttpStatusCode.Forbidden);

        return this.Ok();
    }

This method follows a simple and familiar path: validate input, map to a domain model, delegate to said model, examine posterior state, and return a result.

You may have noticed, though, that this method doesn't do all the work itself. It delegates some of the work to collaborators: validator, mapper, and maîtreD. Where do these collaborators come from?

They are dependencies. Could you make the Post method take them as arguments?

Unfortunately, you can't. The Post method constitutes part of the boundary of the HTTP API. ASP.NET Web API routes and dispatches incoming HTTP requests by convention, and action methods must follow that convention. You can't just make the function take any argument you'd like, so you have to find another place to pass those dependencies to the object.

The second-best option (after the Post method itself) is via the constructor:

    public ReservationsController(
        IValidator validator,
        IMapper mapper,
        IMaîtreD maîtreD)
    {
        this.validator = validator;
        this.mapper = mapper;
        this.maîtreD = maîtreD;
    }

This is the application of a design pattern called constructor injection. It captures the dependencies in class fields, making them available for members (like Post) of the class.

This turns out to be a regular pattern.

Turtles all the way down #

You could argue that the Post method is a special case, since it's part of the boundary of the system, and therefore must adhere to specific rules. On the other hand, these rules don't apply deeper in the implementation, so could you implement other objects by simply passing in dependencies as arguments?

Consider, as an example, the implementation of IMaîtreD.TryAccept:

    public int? TryAccept(Reservation reservation)
    {
        var reservedSeats = reservationsRepository
            .ReadReservations(reservation.Date)
            .Sum(r => r.Quantity);

        if (reservedSeats + reservation.Quantity <= capacity)
        {
            reservation.IsAccepted = true;
            return reservationsRepository.Create(reservation);
        }

        return null;
    }

This method has another collaborator: reservationsRepository. It's another dependency. Where does it come from?

Could you make the TryAccept method take reservationsRepository as an argument?

Unfortunately, that's not possible either, because the method is defined by the IMaîtreD interface:

    public interface IMaîtreD
    {
        int? TryAccept(Reservation reservation);
    }

You may recall that the above Post method is programmed against the IMaîtreD interface, and not the concrete class. It'd be a leaky abstraction to add IReservationsRepository as an argument to IMaîtreD.TryAccept, because not all implementations of the interface may need that dependency. Or perhaps another implementation has another dependency. Should we add that to the parameter list of IMaîtreD.TryAccept as well?

Surely, that's not a tenable design principle. On the other hand, by using constructor injection, you can decouple implementation details from your abstractions:

    public MaîtreD(int capacity, IReservationsRepository reservationsRepository)
    {
        this.capacity = capacity;
        this.reservationsRepository = reservationsRepository;
    }

This constructor not only takes an IReservationsRepository object, but also an integer that represents the capacity of the restaurant in question. This demonstrates that dependencies can also be primitive values.

Summary #

Dependency injection is, in a sense, only a specific way for objects to take arguments. Often, however, objects have roles defined by the interfaces they implement. Such objects may need collaborators that are not available via the APIs defined by these interfaces, so you'll have to supply dependencies via members that belong to the concrete class in question. Passing dependencies via a class' constructor is the best way to do that.

Comments

Hey Mark. You may want to update the article slightly to cover the use of the [FromServices] attribute, which allows you to inject any service into a controller action as method-level injection. The main article on it is this one.

I'm personally not a fan of using it, but it is an option that transforms standard injection into parameter-passing for controllers.

Juliano, thank you for writing. I wasn't aware of that particular capability, so thank you for bringing it to my attention.

My article attempts to explain a general software design problem, as well as a possible solution. While it uses ASP.NET as an example context, the main point is independent of any particular framework, or language, for that matter.

From dependency injection to dependency rejection

The problem typically solved by dependency injection in object-oriented programming is solved in a completely different way in functional programming.

Several years ago, I wrote a book called Dependency Injection in .NET, which was published in 2011. The book contains examples in C#, but since then I've increasingly become interested in functional programming to the extent that I now consider F# my primary language.

With that combination, it's no wonder that people often ask me how to do dependency injection in functional programming.

I've seen more than one answer, from other people, explaining how partial function application is equivalent to dependency injection. In a small series of articles, I'll explain both why this is true and why it's not functional. I'll conclude by showing a functional alternative to decoupling logic and (side) effects.

(Comic courtesy of John Muellerleile and Igal Tabachnik.)

There's another school of functional programmers who believe that dependency injection in functional programming involves a Free monad.

You can often make do with less, though.

In my experience, it's usually enough to refactor a unit to take only direct input and output, and then compose an impure/pure/impure 'sandwich'. You'll see an example later.

This article series contains the following parts:

- Dependency injection is passing an argument

- Partial application is dependency injection

- Dependency rejection

- Pure interactions

The scenario is to implement an HTTP-based API that can accept incoming JSON documents that represent restaurant reservations.

The fourth article on pure interactions is a gateway to another article series on free monads.

I should point out that nowhere in this article series do I reject dependency injection as a set of object-oriented patterns. In object-oriented programming, dependency injection is a well-known and comprehensively described way to achieve decoupling and testability. In the next article, you'll see a brief review of dependency injection in C#.

Decoupling application errors from domain models

How to prevent application-specific error cases from infecting your domain models.

Functional error-handling is often done with the Either monad. If all is good, the right case is returned, but if things go wrong, you'll want to return a value that indicates the error. In an application, you'll often need to be able to distinguish between different kinds of errors.

From application errors to HTTP responses #

When an application encounters an error, it should respond appropriately. A GUI-based application should inform the user about the error, a batch job should log it, and a REST API should return the appropriate HTTP status code.

Regular readers of this blog will know that I write many RESTful APIs in F#, using ASP.NET Web API. Since I like to write functional F#, but ASP.NET Web API is an object-oriented framework, I prefer to escape the object-oriented framework as soon as possible. (In general, it makes good architectural sense to write most of your code as framework-independent as possible.)

In my Test-Driven Development with F# Pluralsight course (a free, condensed version is also available), I demonstrate how to handle various error cases in a Controller class:

    type ReservationsController (imp) =
        inherit ApiController ()
        member this.Post (dtr : ReservationDtr) : IHttpActionResult =
            match imp dtr with
            | Failure (ValidationError msg) -> this.BadRequest msg :> _
            | Failure CapacityExceeded -> this.StatusCode HttpStatusCode.Forbidden :> _
            | Success () -> this.Ok () :> _

The injected imp function is a complete, composed, vertical feature implementation that performs both input validation, business logic, and data access. If input validation fails, it'll return Failure (ValidationError msg), and that value is translated to a 400 Bad Request response. Likewise, if the business logic returns Failure CapacityExceeded, the response becomes 403 Forbidden, and a success is returned as 200 OK.

Both ValidationError and CapacityExceeded are cases of an Error type. This is only a simple example, so these are the only cases defined by that type:

    type Error =
        | ValidationError of string
        | CapacityExceeded

This seems reasonable, but there's a problem.

Error infection #

In F#, a function can't use a type unless that type is already defined. This is a problem because the Error type defined above mixes different concerns. If you seek to make illegal states unrepresentable, it follows that validation is not a concern in your domain model. Validation is still important at the boundary of an application, so you can't just ignore it. The ValidationError case relates to the application boundary, while CapacityExceeded relates to the domain model.

Still, when implementing your domain model, you may want to return a CapacityExceeded value from time to time:

    // int -> int -> Reservation -> Result<Reservation,Error>
    let checkCapacity capacity reservedSeats reservation =
        if capacity < reservation.Quantity + reservedSeats
        then Failure CapacityExceeded
        else Success reservation

Notice how the return type of this function is Result<Reservation,Error>. In order to be able to implement your domain model, you've now pulled in the Error type, which also defines the ValidationError case. Your domain model is now polluted by an application boundary concern.

I think many developers would consider this trivial, but in my experience, failure to manage dependencies is the dominant reason for code rot. It makes the code less general, and less reusable, because it's now coupled to something that may not fit into a different context.

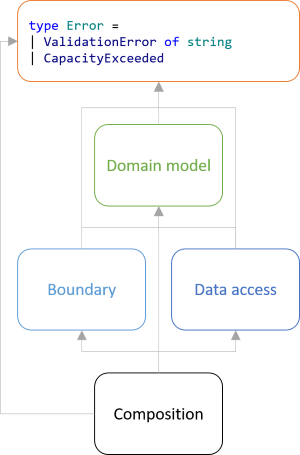

Particularly, the situation in the example looks like this:

Boundary and data access modules depend on the domain model, as they should, but everything depends on the Error type. This is wrong. Modules or libraries should be able to define their own error types.

The Error type belongs in the Composition Root, but it's impossible to put it there because F# prevents circular dependencies (a treasured language feature).

Fortunately, the fix is straightforward.

Mapped Either values #

A domain model should be self-contained. As Robert C. Martin puts it in APPP:

"Abstractions should not depend upon details. Details should depend upon abstractions."

Your domain model is an abstraction of the real world (that's why it's called a model), and is the reason you're developing a piece of software in the first place. So start with the domain model:

    type BookingError = CapacityExceeded

    // int -> int -> Reservation -> Result<Reservation,BookingError>
    let checkCapacity capacity reservedSeats reservation =
        if capacity < reservation.Quantity + reservedSeats
        then Failure CapacityExceeded
        else Success reservation

In this example, there's only a single type of domain error (CapacityExceeded), but that's mostly because this is an example. Real production code could define a domain error union with several cases. The crux of the matter is that BookingError isn't infected with irrelevant implementation details like validation error types.

You're still going to need an exhaustive discriminated union to model all possible error cases for your particular application, but that type belongs in the Composition Root. Accordingly, you also need a way to return validation errors in your validation module. Often, a string is all you need:

    // ReservationDtr -> Result<Reservation,string>
    let validateReservation (dtr : ReservationDtr) =
        match dtr.Date |> DateTimeOffset.TryParse with
        | (true, date) ->
            Success {
                Reservation.Date = date
                Name = dtr.Name
                Email = dtr.Email
                Quantity = dtr.Quantity }
        | _ -> Failure "Invalid date."

The validateReservation function returns a Reservation value when validation succeeds, and a simple string with an error message if it fails.

You could, conceivably, return string values for errors from many different places in your code, so you're going to map them into an appropriate error case that makes sense in your application.

In this particular example, the Controller shown above should still look like this:

    type Error =
        | ValidationError of string
        | DomainError

    type ReservationsController (imp) =
        inherit ApiController ()
        member this.Post (dtr : ReservationDtr) : IHttpActionResult =
            match imp dtr with
            | Failure (ValidationError msg) -> this.BadRequest msg :> _
            | Failure DomainError -> this.StatusCode HttpStatusCode.Forbidden :> _
            | Success () -> this.Ok () :> _

Notice how similar this is to the initial example. The important difference, however, is that Error is defined in the same module that also implements ReservationsController. This is part of the composition of the specific application.

In order to make that work, you're going to need to map from one failure type to another. This is trivial to do with an extra function belonging to your Result (or Either) module:

    // ('a -> 'b) -> Result<'c,'a> -> Result<'c,'b>
    let mapFailure f x =
        match x with
        | Success succ -> Success succ
        | Failure fail -> Failure (f fail)

This function takes any Result value and maps the failure case instead of the success case. It enables you to transform e.g. a BookingError into a DomainError:

    let imp candidate =
        either {
            let! r = validateReservation candidate |> mapFailure ValidationError
            let i = SqlGateway.getReservedSeats connectionString r.Date
            let! r = checkCapacity 10 i r |> mapFailure (fun _ -> DomainError)
            return SqlGateway.saveReservation connectionString r }

This composition is a variation of the composition I've previously published. The only difference is that the error cases are now mapped into the application-specific Error type.
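If you'd like to experiment with the error mapping in isolation, here's a minimal, self-contained sketch of the pure part of such a composition. It's only an approximation under some assumptions: the Result type, the bind function, the simplified Reservation record, the validate function, and the decide function are hypothetical stand-ins, and the database interactions are left out, so the number of reserved seats is passed in as a plain value.

```fsharp
// Hypothetical, minimal stand-ins so that the sketch compiles on its own.
type Result<'a, 'e> =
    | Success of 'a
    | Failure of 'e

type Reservation = { Quantity : int }

type BookingError = CapacityExceeded

type Error =
    | ValidationError of string
    | DomainError

// ('a -> 'b) -> Result<'c,'a> -> Result<'c,'b>
let mapFailure f x =
    match x with
    | Success succ -> Success succ
    | Failure fail -> Failure (f fail)

// ('a -> Result<'b,'e>) -> Result<'a,'e> -> Result<'b,'e>
let bind f x =
    match x with
    | Success succ -> f succ
    | Failure fail -> Failure fail

// int -> Result<Reservation,string>
// Simplified validation (an assumption): only the quantity is checked.
let validate quantity =
    if quantity > 0
    then Success { Quantity = quantity }
    else Failure "Quantity must be positive."

// int -> int -> Reservation -> Result<Reservation,BookingError>
let checkCapacity capacity reservedSeats reservation =
    if capacity < reservation.Quantity + reservedSeats
    then Failure CapacityExceeded
    else Success reservation

// int -> int -> int -> Result<Reservation,Error>
// Each module-specific failure is mapped into the application's Error type.
let decide capacity reservedSeats quantity =
    validate quantity
    |> mapFailure ValidationError
    |> bind (checkCapacity capacity reservedSeats
             >> mapFailure (fun _ -> DomainError))
```

Notice that neither validate nor checkCapacity knows about Error; only decide, which plays the role of the composition, does.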

Conclusion #

Errors can occur in diverse places in your code base: when validating input, when making business decisions, when writing to, or reading from, databases, and so on.

When you use the Either monad for error handling, in a strongly typed language like F#, you'll need to define a discriminated union that models all the error cases you care about in the specific application. You can map module-specific error types into such a comprehensive error type using a function like mapFailure. In Haskell, it would be the first function of the Bifunctor typeclass, so this is a well-known function.

Comments

Mark,

Why is it a problem to use HttpStatusCode in the domain model? They appear to be a standard way of categorizing errors.

David, thank you for writing. The answer depends on your goals and definition of domain model.

I usually think of domain models in terms of separation of concerns. The purpose of a domain model is to model the business logic, and as Martin Fowler writes in PoEAA about the Domain Model pattern, "you'll want the minimum of coupling from the Domain Model to other layers in the system. You'll notice that a guiding force of many layering patterns is to keep as few dependencies as possible between the domain model and the other parts of the system."

In other words, you're separating the concern of implementing the business rules from the concerns of being able to save data in a database, render it on a screen, send emails, and so on. While also important, these are separate concerns, and I want to be able to vary those independently.

People often hear statements like that as though I want to reserve myself the right to replace my SQL Server database with Neo4J (more on that later, though!). That's actually not my main goal, but I find that if concerns are mixed, all change becomes harder. It becomes more difficult to change how data is saved in a database, and it becomes harder to change business rules.

The Dependency Inversion Principle tries to address such problems by advising that abstractions shouldn't depend on implementation details, but instead, implementation details should depend on abstractions.

This is where the goals come in. I find Robert C. Martin's definition of software architecture helpful. Paraphrased from memory, he defines a software architect's role as enabling change; not predicting change, but making sure that when change has to happen, it's as economical as possible.

As an architect, one of the heuristics I use is that I try to imagine how easily I can replace one component with another. It's not that I really believe that I may have to replace the SQL Server database with Neo4J, but thinking about how hard it would be gives me some insights about how to structure a software solution.

I also imagine what it'd be like to port an application to another environment. Can I port my web site's business rules to a batch job? Can I port my desktop client to a smart phone app? Again, it's not that I necessarily predict that I'll have to do this, but it tells me something about the degrees of freedom offered by the architecture.

If not explicitly addressed, the opposite of freedom tends to happen. In APPP, Robert C. Martin describes a number of design smells, one of them Immobility: "A design is immobile when it contains parts that could be useful in other systems, but the effort and risk involved with separating those parts from the original system are too great. This is an unfortunate, but very common occurrence."

Almost as a side-effect, an immobile system is difficult to test. A unit test is a different environment than the intended environment. Well-architected systems are easy to unit test.

HTTP is a communications protocol. Its purpose is to enable exchange of information over networks. While it does that well, it's specifically concerned with that purpose. This includes HTTP status codes.

If you use the heuristic of imagining that you'd have to move the heart of your application to a batch job, status codes like 301 Moved Permanently, 404 Not Found, or 405 Method Not Allowed make little sense.

Using HTTP status codes in a domain model couples the model to a particular environment, at least conceptually. It has little to do with the ubiquitous language that Eric Evans discusses in DDD.
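To make the heuristic concrete, here's a small sketch of how the same domain error could be translated at two different boundaries. The function names and the exit code value are assumptions made for the sake of illustration; they aren't taken from the article's code base.

```fsharp
// The domain model only knows about its own error type.
type BookingError = CapacityExceeded

// BookingError -> int
// HTTP boundary: translate the domain error to a status code (403 Forbidden).
let toHttpStatus error =
    match error with
    | CapacityExceeded -> 403

// BookingError -> int
// Batch job boundary: translate the same error to a process exit code.
// The value 2 is an arbitrary, hypothetical choice.
let toExitCode error =
    match error with
    | CapacityExceeded -> 2
```

Since BookingError carries no HTTP-specific information, the domain model can be moved to the batch job without change.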

From REST to algebraic data

Mapping RESTful HTTP requests to values of algebraic data types is easy.

In previous articles, you've seen how to easily model a simple domain model with algebraic data types, and how to use RESTful API design to surface such a model at the boundary of an application. In this article, you'll see how trivial it is to map incoming HTTP requests back to values of algebraic data types.

The advantage of REST is that you can make illegal states unrepresentable. Clients follow links, and while clients are supposed to treat links as opaque values, URLs still contain information your API can use.

Routing and dispatching #

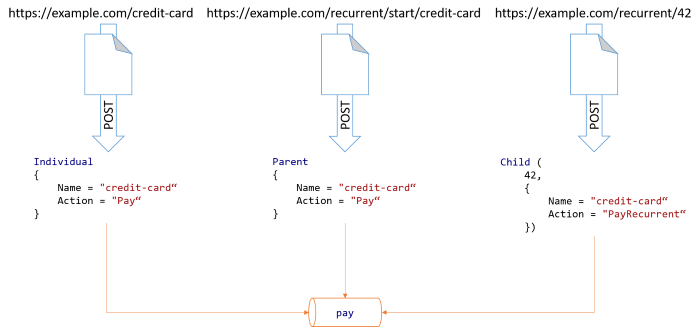

Continuing where the previous article left off, clients can issue POST requests against a URL like https://example.com/credit-card. On the server, a well-known piece of code handles such requests. (In the example code base I've used so far, I've been using ASP.NET Web API, so the code that handles such a request is a Controller.) Since you know that URLs like that are always routed to that particular piece of code, you can create a new PaymentType value that specifically represents an individual payment with a credit card:

let paymentType = Individual { Name = "credit-card"; Action = "Pay" }

If, on the other hand, the client is using a provided link to POST a representation against the URL https://example.com/recurrent/start/credit-card, your server-side dispatcher will route the request to a different handler (Controller), in which case you can create a PaymentType value like this:

let paymentType = Parent { Name = "credit-card"; Action = "Pay" }

Finally, if the client has already created a parent payment and is now using the resulting link to create child payments, it may be POSTing to a URL like https://example.com/recurrent/42. Your server-side dispatcher will route that request to a third handler. Most web frameworks, including ASP.NET Web API, will be able to pull values out of URLs. In this case, you can configure it so that it pulls the value 42 out of the URL and binds it to a value called transactionKey. With this, again it's trivial to create a PaymentType value:

let paymentType = Child (transactionKey, { Name = "credit-card"; Action = "PayRecurrent" })

Notice that, despite containing different data, and being created in three different places in the code base, they all have the same type: PaymentType. This means that you can pass these values to a common pay function, which handles the actual communication with the third-party payment service.
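The following sketch gathers the three values in one place in order to show that a single function can consume them all. The describe function is a hypothetical, pure stand-in for pay, and the three value names are assumptions; the real handlers live in separate Controllers.

```fsharp
type PaymentService = { Name : string; Action : string }

type PaymentType =
    | Individual of PaymentService
    | Parent of PaymentService
    | Child of originalTransactionKey : string * paymentService : PaymentService

// One value per route. In the real code base, each is created in its own
// handler.
let fromCreditCardRoute =
    Individual { Name = "credit-card"; Action = "Pay" }
let fromRecurrentStartRoute =
    Parent { Name = "credit-card"; Action = "Pay" }
let fromRecurrentRoute transactionKey =
    Child (transactionKey, { Name = "credit-card"; Action = "PayRecurrent" })

// PaymentType -> string
// Hypothetical stand-in for pay: it only describes the payment.
let describe paymentType =
    match paymentType with
    | Individual ps -> sprintf "individual %s payment" ps.Name
    | Parent ps -> sprintf "parent %s payment" ps.Name
    | Child (key, ps) -> sprintf "child %s payment of %s" ps.Name key

let descriptions =
    [ fromCreditCardRoute; fromRecurrentStartRoute; fromRecurrentRoute "42" ]
    |> List.map describe
```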

Code reuse #

Independent of the route the data arrived at, a central, reusable function named pay handles all such payments. This is still an impure boundary function that takes various other input apart from PaymentType. Without going into too much detail, it has a type like Config -> PaymentType -> Result<PaymentDtr,BoundaryFailure>. Don't worry if some of the details look obscure; the important point is that pay is a function that takes a PaymentType value as input. You can visualise the transition from HTTP requests to a function call like this:

The pay function is composed from various smaller functions, some pure and some impure. Ultimately, it transforms all the input data to the format required by the third-party payment service, and forwards the transaction information. Inside that function you'll find the pattern match that you saw in my previous article.

Summary #

By making good use of routing and dispatching, you can easily map incoming HTTP requests to values of algebraic data types. This enables you to close the loop on exposing your domain model at the boundary of your system. Not only can clients request data from your API in terms of your model, but when clients send data to your API, you can translate that data back to your model.

Domain modelling with REST

Make illegal states unrepresentable by using hyperlinks as the engine of application state.

Every piece of software, whether it's a web service, smart phone app, batch job, or speech recognition system, interfaces with the world in some way. Sometimes, that interface is a user interface, sometimes it's a machine-readable interface; sometimes it involves rendering pixels on a screen, and sometimes it involves writing to files, selecting records from a database, sending emails, and so on.

Programmers often struggle with how to model these interactions. This is particularly difficult because at the boundaries, systems no longer adhere to popular programming paradigms. Previously, I've explained why, at the boundaries, applications aren't object-oriented. By the same type of argument, neither are they functional (as in 'functional programming').

If that's the case, why should you even bother with 'domain modelling'? Particularly, does it even matter that, with algebraic data types, you can make illegal states unrepresentable? If you need to compromise once you hit the boundary of your application, is it worth the effort?

It is, if you structure your application correctly. Proper (level 3) REST architecture gives you one way to structure applications in such a way that you can surface the constraints of your domain model to the interface layer. When done correctly, you can also make illegal states unrepresentable at the boundary.

A payment example #

In my previous article, I demonstrated how to use (static) types to model an on-line payment domain. To summarise, my task was to model three types of payments:

- Individual payments, which happen only once.

- Parent payments, which start a long-term payment relationship.

- Child payments, which are automated payments originally authorised by an initial parent payment.

| | "StartRecurrent" : "false" | "StartRecurrent" : "true" |
|---|---|---|
| "OriginalTransactionKey" : null | Individual | Parent |
| "OriginalTransactionKey" : "1234ABCD" | Child | (Illegal) |

It's illegal to combine an OriginalTransactionKey with setting StartRecurrent to true. The other three combinations, on the other hand, are valid.

As I demonstrated in my previous article, you can easily model this with algebraic data types.
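As a reminder, here's a sketch of that model, together with a function that translates the two raw dimensions into a PaymentType value. The tryCreate function is a hypothetical helper that I've added for illustration; it isn't part of the original code base.

```fsharp
type PaymentService = { Name : string; Action : string }

type PaymentType =
    | Individual of PaymentService
    | Parent of PaymentService
    | Child of originalTransactionKey : string * paymentService : PaymentService

// bool -> string option -> PaymentService -> PaymentType option
// Hypothetical helper: maps the two raw dimensions to the three legal
// cases, and returns None for the illegal combination.
let tryCreate startRecurrent originalTransactionKey service =
    match startRecurrent, originalTransactionKey with
    | false, None -> Some (Individual service)
    | true, None -> Some (Parent service)
    | false, Some key -> Some (Child (key, service))
    | true, Some _ -> None // no PaymentType case exists for this combination
```

The important point is the last line of the match: there's no PaymentType case that the illegal combination could map to.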

At the boundary, however, there are no static types, so how could you model something like that as a web service?

A RESTful solution #

A major advantage of REST is that it gives you a way to realise your domain model at the application boundary. It does require, though, that you design the API according to level 3 of the Richardson maturity model. In other words, it's not REST if you're merely tunnelling JSON (or XML) through HTTP. It's still not REST if you publish URL templates and expect clients to fill data into specific place-holders of those URLs.

It's REST if the only way a client can interact with your API is by following hyperlinks.

If you follow those design principles, however, it's easy to model the above payment domain as a RESTful API.

In the following, I will show examples in XML, but it could as well have been JSON. After all, a true REST API must support content negotiation. One of the reasons that I prefer XML is that I can use XPath to point out various nodes.

A client must begin at a pre-published 'home' resource, just like the home page of a web site. This resource presents affordances in the shape of hyperlinks. As recommended by the RESTful Web Services Cookbook, I always use ATOM links:

    <home xmlns="http://example.com/payment"
          xmlns:atom="http://www.w3.org/2005/Atom">
      <payment-methods>
        <payment-method>
          <links>
            <atom:link rel="example:pay-individual"
                       href="https://example.com/gift-card" />
          </links>
          <id>gift-card</id>
        </payment-method>
        <payment-method>
          <links>
            <atom:link rel="example:pay-individual"
                       href="https://example.com/credit-card" />
            <atom:link rel="example:pay-parent"
                       href="https://example.com/recurrent/start/credit-card" />
          </links>
          <id>credit-card</id>
        </payment-method>
      </payment-methods>
    </home>

A client receiving the above response is effectively presented with a choice. It can choose to pay with a gift card or credit card, and nothing else, however much it'd like to pay with, say, PayPal. For each of these payment methods, zero or more links are available.

Specifically, there are links to create either an individual or a parent payment with a credit card, but it's only possible to make an individual payment with a gift card. You can't set up a long-term, automated payment relationship with a gift card. (This may or may not make sense, depending on how you look at it, but it's fundamentally a business decision.)

Links are defined by relationship types, which are unique identifiers present in the rel attributes. You can think of them as equivalent to the human-readable text in an HTML a tag that suggests to a human user what to expect from clicking the link; only, rel attribute values are machine-readable and part of the contract between client and service.

Notice how the above XML representation only gives a client the option of making an individual or a parent payment with a credit card. A client can't make a child payment at this point. This follows the domain model, because you can't make a child payment without first having made a parent payment.
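On the server side, deciding which links to offer can be a pure function. Here's a minimal sketch, assuming a hypothetical PaymentMethod record with a SupportsRecurrent flag; the real service may well make this decision differently.

```fsharp
// Hypothetical description of a payment method.
type PaymentMethod = { Id : string; SupportsRecurrent : bool }

// PaymentMethod -> (string * string) list
// Returns (rel, href) pairs. A link that isn't returned can't be followed.
let links (paymentMethod : PaymentMethod) =
    [ yield ("example:pay-individual",
             sprintf "https://example.com/%s" paymentMethod.Id)
      if paymentMethod.SupportsRecurrent then
          yield ("example:pay-parent",
                 sprintf "https://example.com/recurrent/start/%s" paymentMethod.Id) ]
```

With this, a gift card only gets the example:pay-individual link, while a credit card gets both.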

RESTful individual payments #

If a client wishes to make an individual payment, it follows the link identified by

/home/payment-methods/payment-method[id = 'credit-card']/links/atom:link[@rel = 'example:pay-individual']/@href

In the above XPath query, I've ignored the default document namespace in order to make the expression more readable. The query returns https://example.com/credit-card, and the client can now make an HTTP POST request against that URL with a JSON or XML document containing details about the payment (not shown here, because it's not important for this particular discussion).
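If you want to see how a client could evaluate such a query without ignoring the default namespace, here's a sketch that uses System.Xml. The p prefix, the tryFindLink function, and the abbreviated homeXml document are assumptions made for this example.

```fsharp
open System.Xml

// The home resource, abbreviated to the credit-card entry.
let homeXml = """<home xmlns="http://example.com/payment"
      xmlns:atom="http://www.w3.org/2005/Atom">
  <payment-methods>
    <payment-method>
      <links>
        <atom:link rel="example:pay-individual"
                   href="https://example.com/credit-card" />
        <atom:link rel="example:pay-parent"
                   href="https://example.com/recurrent/start/credit-card" />
      </links>
      <id>credit-card</id>
    </payment-method>
  </payment-methods>
</home>"""

// string -> string -> string option
// Hypothetical helper: finds the href for a payment method and a rel.
let tryFindLink methodId rel =
    let doc = XmlDocument ()
    doc.LoadXml homeXml
    // The default document namespace needs a prefix in XPath 1.0.
    let ns = XmlNamespaceManager (doc.NameTable)
    ns.AddNamespace ("p", "http://example.com/payment")
    ns.AddNamespace ("atom", "http://www.w3.org/2005/Atom")
    let query =
        sprintf "/p:home/p:payment-methods/p:payment-method[p:id = '%s']/p:links/atom:link[@rel = '%s']/@href"
            methodId rel
    match doc.SelectSingleNode (query, ns) with
    | null -> None
    | node -> Some node.Value
```

If the link isn't there, the function returns None, which is exactly how a client discovers that an option isn't available.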

RESTful parent payments #

If a client wishes to make a parent payment, the initial procedure is the same. First, it follows the link identified by

/home/payment-methods/payment-method[id = 'credit-card']/links/atom:link[@rel = 'example:pay-parent']/@href

The result of that XPath query is https://example.com/recurrent/start/credit-card, so the client can make an HTTP POST request against that URL with the payment details. Unlike the response for an individual payment, the response for a parent payment contains another link:

    <payment xmlns="http://example.com/payment"
             xmlns:atom="http://www.w3.org/2005/Atom">
      <links>
        <atom:link rel="example:pay-child"
                   href="https://example.com/recurrent/42" />
        <atom:link rel="example:payment-details"
                   href="https://example.com/42" />
      </links>
      <amount>13.37</amount>
      <currency>EUR</currency>
      <invoice>1234567890</invoice>
    </payment>

This response echoes the details of the payment: this is a payment of 13.37 EUR for invoice 1234567890. It also includes some links that a client can use to further interact with the payment:

- The example:payment-details link can be used to query the API for details about the payment, for example its status.

- The example:pay-child link can be used to make a child payment.

The example:pay-child link is only returned if the previous payment was a parent payment. When a client makes an individual payment, this link isn't present in the response, but when the client makes a parent payment, it is.

Another design principle of REST is that cool URIs don't change; once the API has shown a URL like https://example.com/recurrent/42 to a client, it should honour that URL indefinitely. The upshot of that is that a client can save that URL for later use. If a client wants to, say, renew a subscription, it can make a new HTTP POST request to that URL a month later, and that's going to be a child payment. Clients don't have to hack the URL in order to figure out what the transaction key is; they can simply store the complete URL as is and use it later.

A network of options #

Using a design like the one sketched above, you can make illegal states unrepresentable. There's no way for a client to make a payment with StartRecurrent = true and a non-null transaction key; there's no link to that combination. Such an API uses hypermedia as the engine of application state.

It shouldn't be surprising that proper RESTful design works that way. After all, REST is essentially a distillate of the properties that make the World Wide Web work. On a human-readable web page, the user follows links to other pages, and a well-designed web site will only enable a link if the destination exists.

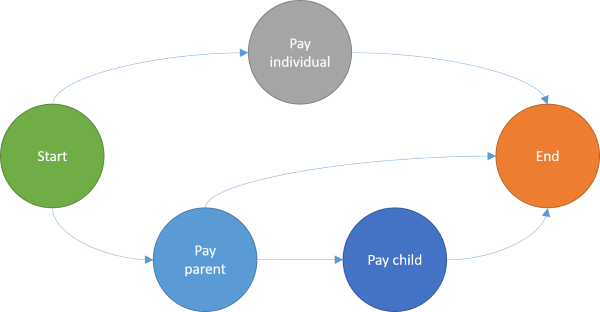

You can even draw a graph of the API I've sketched above:

In this diagram, you can see that when you make an individual payment, that's all you can do. You can also see that the only way to make a child payment is by first making a parent payment. There's also a path from parent payments directly to the end node, because a client doesn't have to make a child payment just because it made a parent payment.

If you think that this looks like a finite state machine, then that's no coincidence. That's exactly what it is. You have states (the nodes) and paths between them. If a state is illegal, then don't add that node; only add nodes for legal states, then add links between the nodes that model legal transitions.
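As a rough sketch, such a state machine could be modelled like this. The State and Rel names are my own invention for this example, and I'm assuming that the response to a child payment offers no further payment links.

```fsharp
// The legal states (nodes).
type State =
    | Home
    | IndividualPaid
    | ParentPaid
    | ChildPaid

// The relationship types a client can follow.
type Rel =
    | PayIndividual
    | PayParent
    | PayChild

// Rel -> State -> State option
// Only legal transitions are listed; everything else is None.
let follow rel state =
    match state, rel with
    | Home, PayIndividual -> Some IndividualPaid
    | Home, PayParent -> Some ParentPaid
    | ParentPaid, PayChild -> Some ChildPaid
    | _ -> None
```

There's no transition from Home directly to ChildPaid; the only path goes through ParentPaid.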

Incidentally, languages like F# excel at implementing finite state machines, so it's no wonder I like to implement RESTful APIs in F#.

Summary #

Truly RESTful design enables you to make illegal states unrepresentable by using hypermedia as the engine of application state. This gives you a powerful design tool to ensure that clients can only perform correct operations.

As I also wrote in my previous article, this, too, is no silver bullet. You can turn an API into a pit of success, but there are still many fault scenarios that you can't prevent.

If you were intrigued by this article, but are having trouble applying these design techniques to your own field, I'm available for hire for short or long-term engagements.

Easy domain modelling with types

Algebraic data types make domain modelling easy.

People often ask me if I think that F# is a good general-purpose language, and when I emphatically answer yes!, the natural next question is: why?

For years, I've been able to answer this question in the abstract, but I've been looking for a good concrete example with which I could illustrate the answer. I believe that I've now found such an example.

The abstract answer, by the way, is that F# has algebraic data types, which makes domain modelling much easier than in languages that don't have such types. Don't worry if the word 'algebraic' sounds scary, though. It's not at all difficult to understand, and I'll show you a simple example.

Payment types #

At the moment, I'm working on an integration project: I'm developing a RESTful API that serves as Facade in front of a third-party payment provider. The third-party provider exposes their own API and web-based GUI that enable our end users to pay for services using credit cards, PayPal, and so on. The API that I'm developing presents a simplified, RESTful API to other clients in our organisation.

The example you're going to see here is real code that I'm writing in order to implement the desired functionality.

The system must be able to handle several different types of payment:

- Sometimes, a user pays for a single thing, and that's the end of that transaction.

- Other times, however, a user enters into a long-term payment relationship. This could be, for example, a subscription, or an 'auto-fill' style of relationship. This is handled in two distinct phases:

- An initial payment (can sometimes be for a zero amount) that authorises the merchant to make further transactions.

- Subsequent payments, based off that initial payment. These payments can be automated, because they require no further user interaction than the initial authorisation.

You can indicate the type of payment when interacting with the payment service's JSON-based API, like this:

    {
      ...
      "StartRecurrent": "false"
      ...
    }

Obviously, as the (illegal) ellipses suggest, there's much more data associated with a payment, but that's not important in this example. Since StartRecurrent is false, this is either an individual payment, or a child payment. If you want to start a long-term relationship, you must create a parent payment and set StartRecurrent to true.

Child payments, however, are a bit different, because you have to tell the payment service about the parent payment:

    {
      ...
      "OriginalTransactionKey": "1234ABCD",
      "StartRecurrent": "false"
      ...
    }

As you can see, when making a child payment, you supply the transaction ID for the parent payment. (This ID is given to you by the payment service when you initiate the parent payment.)

In this case, you're clearly not starting a new recurrent transaction.

There are two dimensions of variation in this example: StartRecurrent and OriginalTransactionKey. Let's put them in a table:

| | "StartRecurrent" : "false" | "StartRecurrent" : "true" |
|---|---|---|
| "OriginalTransactionKey" : null | Individual | Parent |
| "OriginalTransactionKey" : "1234ABCD" | Child | (Illegal) |

Combining an OriginalTransactionKey with setting StartRecurrent to true is illegal, or, at best, meaningless.

How would you model the rules laid out in the above table? In languages like C#, it's difficult, but in F# it's easy.

C# attempts #

Most C# developers would, I think, attempt to model a payment transaction with a class. If they aim for poka-yoke design, they might come up with a design like this:

    public class PaymentType
    {
        public PaymentType(bool startRecurrent)
        {
            this.StartRecurrent = startRecurrent;
        }

        public PaymentType(string originalTransactionKey)
        {
            if (originalTransactionKey == null)
                throw new ArgumentNullException(nameof(originalTransactionKey));

            this.StartRecurrent = false;
            this.OriginalTransactionKey = originalTransactionKey;
        }

        public bool StartRecurrent { private set; get; }
        public string OriginalTransactionKey { private set; get; }
    }

This goes a fair way towards making illegal states unrepresentable, but it doesn't communicate to a fellow programmer how it should be used.

Code that uses instances of this PaymentType class could attempt to read the OriginalTransactionKey, which, depending on the type of payment, could return null. That sort of design leads to defensive coding.

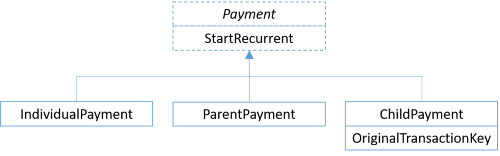

Other people might attempt to solve the problem by designing a class hierarchy:

(A variation on this design is to define an IPayment interface, and three concrete classes that implement that interface.)

This design trades better protection of invariants for violations of the Liskov Substitution Principle. Clients will have to (attempt to) downcast to subtypes in order to access all relevant data (particularly OriginalTransactionKey).

For completeness' sake, I can think of at least one other option with significantly different trade-offs: applying the Visitor design pattern. This is, however, quite a complex solution, and most people will find the disadvantages greater than the benefits.

Is it such a big deal, then? After all, it's only a single data value (OriginalTransactionKey) that may or may not be there. Surely, most programmers will be able to deal with that.

This may be true in this isolated case, but keep in mind that this is only a motivating example. In many other situations, the domain you're trying to model is much more intricate, with many more exceptions to general rules. The more dimensions you add, the more difficult it becomes to reason about the code.

F# model #

F#, on the other hand, makes dealing with such problems so simple that it's almost anticlimactic. The reason is that F#'s type system enables you to model alternatives of data, in addition to the combinations of data that C# (or Java) enables. Such alternatives are called discriminated unions.

In the code base I'm currently developing, I model the various payment types like this:

    type PaymentService = { Name : string; Action : string }

    type PaymentType =
        | Individual of PaymentService
        | Parent of PaymentService
        | Child of originalTransactionKey : string * paymentService : PaymentService

Here, PaymentService is a record type with some data about the payment (e.g. which credit card to use).

Even if you're not used to reading F# code, you can see three alternatives outlined on each of the three lines of code that start with a vertical bar (|). The PaymentType type has exactly three 'subtypes' (they're called cases, though). The illegal state of a non-null OriginalTransactionKey combined with a StartRecurrent value of true is not possible. It can't be compiled.

Not only that, but all clients given a PaymentType value must deal with all three cases (or the compiler will issue a warning). Here's one example where our code is creating the JSON document to send to the payment service:

    let name, action, startRecurrent, transaction =
        match req.PaymentType with
        | Individual { Name = name; Action = action } ->
            name, action, false, None
        | Parent { Name = name; Action = action } ->
            name, action, true, None
        | Child (transactionKey, { Name = name; Action = action }) ->
            name, action, false, Some transactionKey

This code example also extracts name and action from the PaymentType value, but the relevant values to be aware of are startRecurrent and transaction.

- For an individual payment, startRecurrent becomes false and transaction becomes None (meaning that the value is missing).
- For a parent payment, startRecurrent becomes true and transaction becomes None.
- For a child payment, startRecurrent becomes false and transaction becomes Some transactionKey.

transactionKey is only available when the payment is a child payment.

The values startRecurrent and transaction (as well as name and action) are then used to create a JSON document. I'm not showing that part of the code here, since there's actually a lot going on in the real code base, and it's not related to how to model the domain. Imagine that these values are passed to a constructor.

This is a real-world example that, I hope, demonstrates why I prefer F# over C# for domain modelling. The type system enables me to model alternatives as well as combinations of data, thereby making illegal states unrepresentable, all in only a few lines of code.

Summary #

Classes, in languages like C# and Java, enable you to model combinations of data. The more fields or properties you add to a class, the more combinations are possible. This is often useful, but sometimes you need to be able to model alternatives, rather than combinations.

Some languages, like F#, Haskell, OCaml, Elm, Kotlin, and many others, have type systems that give you the power to model both combinations and alternatives. Such type systems are said to have algebraic data types, but while the term sounds 'mathy', such types make it much easier to model complex domains.

There are more reasons to love F# than only its algebraic data types, but this is the foremost reason I find it a better language for mainstream development work than C#.

If you want to see a more complex example of modelling with types, a good next step would be the first article in my Types + Properties = Software article series.

Finally, I should be careful that I don't oversell the idea of making illegal states unrepresentable. Algebraic data types give you an extra dimension in which you can model domains, but there are still rules that they can't enforce. As an example, you can't state that integers must only fall in a certain range (e.g. only positive integers allowed). There are other type systems, such as dependent types, that give you even more power to embed domain rules into types, but as far as I know, there are no type systems that can fully model all rules as types. You'll still have to write some code as well.
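To illustrate the kind of code you'd still have to write, here's a minimal sketch of a range rule enforced by a function instead of by the type itself. The `PositiveInt` type and its `tryCreate` and `value` functions are hypothetical names invented for this example.

```fsharp
// Hypothetical single-case union. The constructor is private, so code outside
// this file or module has to go through tryCreate to get a value.
type PositiveInt = private PositiveInt of int

module PositiveInt =
    // The rule 'only positive integers' is ordinary code, checked at run time.
    let tryCreate i = if i > 0 then Some (PositiveInt i) else None

    // Unwrap the underlying integer.
    let value (PositiveInt i) = i

let accepted = PositiveInt.tryCreate 3 |> Option.map PositiveInt.value
let rejected = PositiveInt.tryCreate 0 |> Option.map PositiveInt.value
```

The type only records that the check has happened. The check itself is still a conditional that you have to write, and ought to test.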

The article is an instalment in the 2016 F# Advent calendar.

Comments

Mark,

I must be missing something important but it seems to me that the only advantage of using F# in this case is that the match is enforced to be exhaustive by the compiler. And of course the syntax is also nicer than a bunch of if's. In all other respects, the solution is basically equivalent to the C# class hierarchy approach.

Am I mistaken?

Botond, thank you for writing. The major advantage is that enumeration of all possible cases is available at compile-time. One derived advantage of that is that the compiler can check whether a piece of code handles all cases. That's already, in my experience, a big deal. The sooner you can get feedback on your work, the better, and it doesn't get faster than compile-time feedback.
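As a small sketch of that compile-time check, consider a simplified, hypothetical version of the payment type that carries no data. The names `PaymentKind` and `startsRecurrence` are invented for this illustration.

```fsharp
// Simplified, hypothetical variant of the article's PaymentType, without data.
type PaymentKind =
    | Individual
    | Parent
    | Child

// All three cases are handled, so this compiles without warnings.
let startsRecurrence kind =
    match kind with
    | Individual -> false
    | Parent -> true
    | Child -> false

// Deleting the Child line above makes the compiler issue warning FS0025
// (incomplete pattern matches) for the match expression.
```

If you later add a fourth case to `PaymentKind`, every match expression that doesn't handle it gets the same warning, so the compiler tells you where to look.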

Another advantage of having all cases encoded in the type system is that it gives you better tool support. Imagine that you're looking at the return value of a function, and that this is the first time you're encountering that return type. If the return value is an abstract base class (or interface), you'll need to resort to either the documentation or reflection in order to figure out which subtypes exist. There can be arbitrarily many subtypes, and they can be scattered over arbitrarily many libraries (assemblies). Figuring out what to do with an abstract base class introduces a context switch that could have been avoided.

This is another advantage offered by discriminated unions: when a function returns a discriminated union, you can immediately get tool support to figure out what to do with it, even if you've never encountered the type before.

The problem with examples such as the above is that I'm trying to explain how a language feature can help you with modelling complex domains, but if I try to present a really complex problem, no-one will have the patience to read the article. Instead, I have to come up with an example that's so simple that the reader doesn't give up, and hopefully still complex enough that the reader can imagine how it's a stand-in for a more complex problem.

When you look at the problem presented above, it's not that complex, so you can still keep a C# implementation in your head. As you add more variability to the problem, however, you can easily find yourself in a situation where the combinatorial explosion of possible values makes it difficult to ensure that you've dealt with all edge cases. This is one of the main reasons that C# and Java code often throws run-time exceptions (particularly null-reference exceptions).

It did, in fact, turn out that the above example domain became more complex as I learned more about the entire range of problems I had to solve. When I described the problem above, I thought that all payments would have pre-selected payment methods. In other words, when a user is presented with a check-out page, he or she selects the payment method (PayPal, direct debit, and so on), and only then, when we know the payment method, do we initiate the payment flow. It turns out, though, that in some cases, we should start the payment flow first, and then let the user pick the payment method from a list of options. It should be noted, however, that user-selection only makes sense for interactive payments, so a child payment can never be user-selectable (since it's automated).

It was trivial to extend the domain model with that new requirement:

    type PaymentService = { Name : string; Action : string }

    type PaymentMethod =
        | PreSelected of PaymentService
        | UserSelectable of string list

    type TransactionKey = TransactionKey of string with
        override this.ToString () = match this with TransactionKey s -> s

    type PaymentType =
        | Individual of PaymentMethod
        | Parent of PaymentMethod
        | Child of TransactionKey * PaymentService

This effectively uses the static type system to state that both the Individual and Parent cases can be defined in one of two ways: PreSelected or UserSelectable, each of which, again, contains heterogeneous data (PaymentService versus string list). Child payments, on the other hand, can't be user-selectable, but must be defined by a PaymentService value, as well as a transaction key (at this point, I'd also created a single-case union for the transaction key, but that's a different topic; it's still a string).

Handling all the different combinations was equally easy, and the compiler guarantees that I've handled all possible combinations:

    let services, selectables, startRecurrent, transaction =
        match req.PaymentType with
        | Individual (PreSelected ps) -> service ps, None, false, None
        | Individual (UserSelectable us) ->
            [||], us |> String.concat ", " |> Some, false, None
        | Parent (PreSelected ps) -> service ps, None, true, None
        | Parent (UserSelectable us) ->
            [||], us |> String.concat ", " |> Some, true, None
        | Child (TransactionKey transactionKey, ps) ->
            service ps, None, false, Some transactionKey

How would you handle this with a class hierarchy, and what would the consuming code look like?

When variable names are in the way

While Clean Code recommends using good variable names to communicate the intent of code, sometimes, variable names can be in the way.

Good guides to more readable code, like Clean Code, explain how explicitly introducing variables with descriptive names can make the code easier to understand. There's much literature on the subject, so I'm not going to reiterate it here. It's not the topic of this article.

In the majority of cases, introducing a well-named variable will make the code more readable. There are, however, no rules without exceptions. After all, one of the hardest tasks in programming is naming things. In this article, I'll show you an example of such an exception. While the example is slightly elaborate, it's a real-world example I recently ran into.

Escaping object-orientation #

Regular readers of this blog will know that I write many RESTful APIs in F#, but using ASP.NET Web API. Since I like to write functional F#, but ASP.NET Web API is an object-oriented framework, I prefer to escape the object-oriented framework as soon as possible. (In general, it makes good architectural sense to write most of your code as framework-independent as possible.)

ASP.NET Web API expects you to handle HTTP requests using Controllers, so I use Constructor Injection to inject a function that will do all the actual work, and delegate each request to a function call. It often looks like this:

    type PushController (imp) =
        inherit ApiController ()

        member this.Post (portalId : string, req : PushRequestDtr)
            : IHttpActionResult =

            match imp req with
            | Success () -> this.Ok () :> _
            | Failure (ValidationFailure msg) -> this.BadRequest msg :> _
            | Failure (IntegrationFailure msg) ->
                this.InternalServerError (InvalidOperationException msg) :> _

This particular Controller only handles HTTP POST requests, and it does it by delegating to the injected imp function and translating the return value of that function call to various HTTP responses. This enables me to compose imp from F# functions, and thereby escape the object-oriented design of ASP.NET Web API. In other words, each Controller is an Adapter over a functional implementation.

For good measure, though, this Controller implementation ought to be unit tested.

A naive unit test attempt #

Each HTTP request is handled at the boundary of the system, and the boundary of the system is always impure - even in Haskell. This is particularly clear in the case of the above PushController, because it handles Success (). In success cases, the result is () (unit), which strongly implies a side effect. Thus, a unit test ought to care not only about what imp returns, but also the input to the function.

While you could write a unit test like the following, it'd be naive.

    [<Property(QuietOnSuccess = true)>]
    let ``Post returns correct result on validation failure`` req (NonNull msg) =
        let imp _ = Result.fail (ValidationFailure msg)
        use sut = new PushController (imp)

        let actual = sut.Post req

        test <@ actual
                |> convertsTo<Results.BadRequestErrorMessageResult>
                |> Option.map (fun r -> r.Message)
                |> Option.exists ((=) msg) @>

This unit test uses FsCheck's integration for xUnit.net, and Unquote for assertions. Additionally, it uses a convertsTo function that I've previously described.

The imp function for PushController must have the type PushRequestDtr -> Result<unit, BoundaryFailure>. In the unit test, it uses a wild-card (_) for the input value, so its type is 'a -> Result<'b, BoundaryFailure>. That's a wider type, but it matches the required type, so the test compiles (and passes).
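The following minimal sketch shows the same kind of type unification in isolation. It's an approximation under stated assumptions: it uses F#'s built-in Result type (with Ok and Error) instead of the Success and Failure cases shown above, and `PushRequest`, `run`, and the sample values are hypothetical stand-ins.

```fsharp
// Hypothetical stand-ins for the types used by the Controller.
type BoundaryFailure =
    | ValidationFailure of string
    | IntegrationFailure of string

type PushRequest = { Invoice : string }

// Requires a function of one specific type, as the Controller does.
let run (imp : PushRequest -> Result<unit, BoundaryFailure>) req = imp req

// Ignores its input and never produces a success value, so the compiler
// infers the generic type 'a -> Result<'b, BoundaryFailure>.
let imp _ = Error (ValidationFailure "invalid")

// The generic function fits the specific type that run requires...
let actual = run imp { Invoice = "42" }

// ...and the same function also fits an entirely different instantiation.
let other : Result<int, BoundaryFailure> = imp "anything"
```

The compiler specialises the generic `imp` at each use site, which is why the wild-card version is accepted wherever the more specific function type is expected.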

FsCheck populates the req argument to the test function itself. This value is passed to sut.Post. If you look at the definition of sut.Post, you may wonder what happened to the individual (and currently unused) portalId argument. The explanation is that the Post method can be viewed as a method with two parameters, but it can also be viewed as an impure function that takes a single argument of the type string * PushRequestDtr - a tuple. In other words, the test function's req argument is a tuple. The test is not only concise, but also robust against refactorings. If you change the signature of the Post method, odds are that the test will still compile. (This is one of the many benefits of type inference.)
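Here's a minimal sketch of calling a two-parameter method with a single tuple value. The `Greeter` class and its `Greet` method are hypothetical and exist only to demonstrate the call syntax.

```fsharp
// Hypothetical class with a two-parameter method, shaped like Post above.
type Greeter () =
    member this.Greet (greeting : string, name : string) : string =
        greeting + ", " + name

let greeter = Greeter ()

// The conventional call, with both arguments written out.
let direct = greeter.Greet ("Hello", "World")

// The same call, passing one value of the type string * string.
let args = "Hello", "World"
let viaTuple = greeter.Greet args
```

Both calls invoke the same method, so a test that passes a single tuple, like `sut.Post req` above, doesn't need to name the individual arguments.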

The problem with the test is that it doesn't verify the data flow into imp, so this version of PushController also passes the test:

    type PushController (imp) =
        inherit ApiController ()

        member this.Post (portalId : string, req : PushRequestDtr)
            : IHttpActionResult =

            let minimalReq =
                { Transaction = { Invoice = ""; Status = { Code = { Code = 0 } } } }
            match imp minimalReq with
            | Success () -> this.Ok () :> _
            | Failure (ValidationFailure msg) -> this.BadRequest msg :> _
            | Failure (IntegrationFailure msg) ->
                this.InternalServerError (InvalidOperationException msg) :> _

As the name implies, the minimalReq value is the 'smallest' value I can create of the PushRequestDtr type. As you can see, this implementation ignores the req method argument and instead passes minimalReq to imp. This is clearly wrong, but it passes the unit test.

Data flow testing #

Not only should you care about the output of imp, but you should also care about the input. This is because imp is inherently impure, so it'd be conceivable that the input values matter in some way.

As xUnit Test Patterns explains, automated tests should contain no branching, so I don't think it's a good idea to define a test-specific imp function using conditionals. Instead, we can use guard assertions to verify that the input is as expected:

    [<Property(QuietOnSuccess = true)>]
    let ``Post returns correct result on validation failure`` req (NonNull msg) =
        let imp candidate =
            candidate =! snd req
            Result.fail (ValidationFailure msg)
        use sut = new PushController (imp)

        let actual = sut.Post req

        test <@ actual
                |> convertsTo<Results.BadRequestErrorMessageResult>
                |> Option.map (fun r -> r.Message)
                |> Option.exists ((=) msg) @>