NuGet Package Restore considered harmful by Mark Seemann

The NuGet Package Restore feature is a really bad idea; this post explains why.

One of the first things I do with a new installation of Visual Studio is to disable the NuGet Package Restore feature. There are many reasons for that, but it all boils down to this:

NuGet Package Restore introduces more problems than it solves.

Before I tell you about all those problems, I'll share the solution with you: check your NuGet packages into source control. Yes, it's that simple.

Storage implications #

If you're like most other people, you don't like that solution, because it feels inefficient. And so what? Let's look at some numbers.

- The AutoFixture repository is 28.6 MB, and that's a pretty big code base (181,479 lines of code).

- The Hyprlinkr repository is 32.2 MB.

- The Albedo repository is 8.85 MB.

- The ZeroToNine repository is 4.91 MB.

- The sample code repository for my new Pluralsight course is 69.9 MB.

- The repository for Grean's largest production application is 32.5 MB.

- Last year I helped one of my clients build a big, scalable REST API. We had several repositories, of which the largest one takes up 95.3 MB on my disk.

On my laptops I'm using Lenovo-supported SSDs, so they're fairly expensive drives. Looking up current prices, a rough estimate puts those disks at approximately 1 USD per GB.

On average, each of my repositories containing NuGet packages costs me four cents of disk drive space.
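As a quick sanity check of that figure, here is a minimal sketch of the arithmetic in Python. The repository sizes and the price of 1 USD per GB come from the text above; treating 1 GB as 1000 MB is an assumption, and the function name is only illustrative.

```python
# Repository sizes in MB, as listed above.
repo_sizes_mb = {
    "AutoFixture": 28.6,
    "Hyprlinkr": 32.2,
    "Albedo": 8.85,
    "ZeroToNine": 4.91,
    "Pluralsight sample code": 69.9,
    "Grean production application": 32.5,
    "Client REST API (largest repository)": 95.3,
}

USD_PER_GB = 1.0  # rough SSD price quoted above
MB_PER_GB = 1000  # assumption: decimal units


def average_cost_cents(sizes_mb, usd_per_gb=USD_PER_GB, mb_per_gb=MB_PER_GB):
    """Return the average storage cost per repository, in US cents."""
    mean_mb = sum(sizes_mb) / len(sizes_mb)
    return mean_mb / mb_per_gb * usd_per_gb * 100


cents = average_cost_cents(list(repo_sizes_mb.values()))
print(f"{cents:.1f} cents per repository")  # prints "3.9 cents per repository"
```

With 1 GB taken as 1024 MB instead, the result is slightly lower, but it still rounds to four cents.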

Perhaps I could have saved some of this money with Package Restore...

Clone time #

Another problem that the Package Restore feature seems to address is the long time it takes to clone a repository, for instance if you're on a shaky internet connection on a train. While it can be annoying to wait for a repository to clone, how often do you do that, compared to normal synchronization operations such as pull, push or fetch?

What should you be optimizing for? Cloning, which you do once in a while? Or fetch, pull, and push, which you do several times a day?

In most cases, the amount of time it takes to clone a repository is irrelevant.

To summarize so far: the problems that Package Restore solves are a couple of cents of disk cost, as well as making a rarely performed operation faster. From where I stand, it doesn't take a lot of problems before they outweigh the benefits - and there are plenty of problems with this feature.

Fragility #

The more moving parts you add to a system, the greater the risk of failure. If you use a Distributed Version Control System (DVCS) and keep all NuGet packages in the repository, you can work when you're off-line. With Package Restore, you've added a dependency on at least one package source.

- What happens if you have no network connection?

- What happens if your package source (e.g. NuGet.org) is down?

- What happens if you use multiple package sources (e.g. both NuGet.org and MyGet.org)?

This is a well-known trait of any distributed system: the system is only as strong as its weakest link. The more services you add, the higher the risk that something breaks.
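To make that point concrete, here is a minimal sketch that multiplies the availabilities of the services a restore depends on. It assumes that failures are independent, and the 99.9 % figures are purely hypothetical. They are not measured uptimes for NuGet.org, MyGet.org, or any other service.

```python
from math import prod


def restore_availability(source_availabilities):
    """Probability that every required service is reachable at once.

    Assumes the services fail independently of each other.
    """
    return prod(source_availabilities)


# Hypothetical availability figures, for illustration only.
packages_in_repo = restore_availability([])           # no external dependency
one_source = restore_availability([0.999])            # a single package source
two_sources = restore_availability([0.999, 0.999])    # two package sources

print(f"packages in repository: {packages_in_repo:.4f}")
print(f"one package source:     {one_source:.4f}")
print(f"two package sources:    {two_sources:.4f}")
```

Under these assumptions, every additional service can only lower the combined figure, whereas a build that needs no package source does not depend on any of them.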

Custom package sources #

NuGet itself is a nice system, and I often encourage organizations to adopt it for internal use. You may have reusable components that you want to share within your organization, but not with the whole world. In Grean, we have such components, and we use MyGet to host the packages. This is great, but if you use Package Restore, now you depend on multiple services (NuGet.org and MyGet.org) to be available at the same time.

While MyGet is a nice and well-behaved NuGet host, I've also worked with internal NuGet package sources, set up as an internal service in an organization. Some of these are not as well-behaved. In one case, 'old' packages were deleted from the package source, which had the consequence that when I later wanted to use an older version of the source code, I couldn't complete a Package Restore because the package with the desired version number was no longer available. There was simply no way to build that version of the code base!

Portability #

One of the many nice things about a DVCS is that you can xcopy your repository and move it to another machine. You can also copy it and give it to someone else. You could, for example, zip it and hand it over to an external consultant. If you use Package Restore and internal package sources, the consultant will not be able to compile the code you gave him or her.

Setup #

Perhaps you don't use external consultants, but maybe you set up a new developer machine once in a while. Perhaps you occasionally get a new colleague, who needs help with setting up the development environment. Particularly if you use custom package feeds, making it all work is yet another custom configuration step you need to remember.

Bandwidth cost #

As far as I've been able to tell, the purpose of Package Restore is efficiency. However, every time you compile with Package Restore enabled, you're using the network.

Consider a Build Server. Every time it makes a build, it should start with a clean slate. It can get the latest deltas from the shared source control repository, but it should start with a clean working folder. This means that every time it builds, it'll need to download all the NuGet packages via Package Restore. This not only wastes bandwidth, but takes time. In contrast, if you keep NuGet packages in the repository itself, the Build Server has everything it needs as soon as it has the latest version of the repository.

The same goes for your own development machine. Package Restore will make your compile process slower.

Glitches #

Finally, Package Restore simply doesn't work very well. Personally, I've wasted many hours troubleshooting problems that turned out to be related to Package Restore. Allow me to share one of these stories.

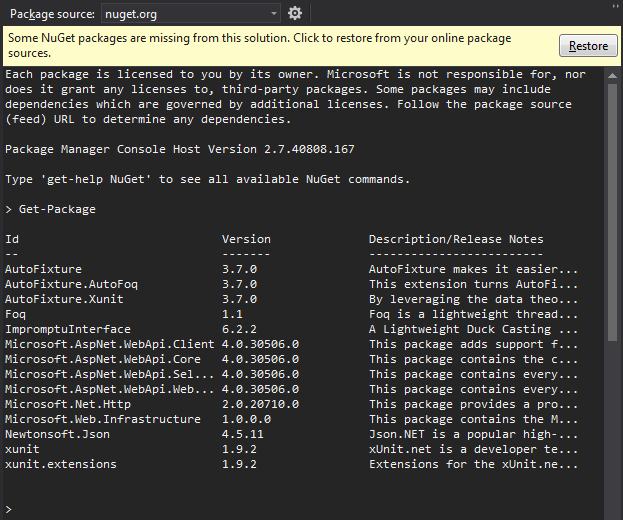

Recently, I encountered this sight when I opened a solution in Visual Studio:

My problem was that at first, I didn't understand what was wrong. Even though I store NuGet packages in my repositories, all of a sudden I got this error message. It turned out that this happened at the time when NuGet switched to enabling Package Restore by default, and I hadn't gotten around to disabling it again.

The strange thing was that everything compiled and worked just great, so why was I getting that error message?

After much digging around, it turned out that the ImpromptuInterface.FSharp package was missing a .nuspec file. You may notice that ImpromptuInterface.FSharp is also missing in the package list above. All binaries, as well as the .nupkg file, were in the repository, but the ImpromptuInterface.FSharp.1.2.13.nuspec was missing. I hadn't noticed for weeks, because I didn't need it, but NuGet complained.

After I added the appropriate .nuspec file, the error message went away.

The resolution to this problem turned out to be easy, and benign, but I wasted an hour or two troubleshooting. It didn't make me feel productive at all.

This story is just one among many run-ins I've had with NuGet Package Restore, before I decided to ditch it.

Just say no #

The Package Restore feature solves these problems:

- It saves a nickel per repository in storage costs.

- It saves time when you clone a new repository, which you shouldn't be doing that often.

On the other hand, it

- adds complexity

- makes it harder to use custom package sources

- couples your ability to compile to having a network connection

- makes it more difficult to copy a code base

- makes it more difficult to set up your development environment

- uses more bandwidth

- leads to slower build times

- just overall wastes your time

For me, the verdict is clear. The benefits of Package Restore don't warrant the disadvantages. Personally, I always disable the feature and instead check in all packages in my repositories. This never gives me any problems.

Comments

So going on two years from when you wrote this post, is this still how you feel about NuGet packages being included in the repository? I have to say, all the points do seem to still apply, and I found myself agreeing with many of them, but I haven't been able to find many opinions that mirror it. Most advice on the subject seems to be firmly in the other camp (not including NuGet packages in the repo), though, as you note, the tradeoff doesn't seem to be a favorable one.

Blake, thank you for writing. Yes, this is still how I feel; nothing has changed.

Mark, completely agree with all your points, however in the future, not using package restore will no longer be an option. See Project.json all the things, most notably "Packages are now stored in a per-user cache instead of alongside the solution".

Like Peter, I am also interested in what you do now.

When you wrote that post, NuGet package dependencies were specified (in part) by packages.config files. Then came project.json. The Microsoft-recommended approach these days is PackageReference. The first approach caches the "restored" NuGet packages in the repository, but the latter two (as Peter said) only cache in a global location (namely %userprofile%\.nuget\packages). I expect that you are using the PackageReference approach now, is that correct?

I see where Peter is coming from. It does seem at first like NuGet restore is now "necessary". Of course it is still possible to commit the NuGet packages in the repository. Then I could add this directory as a local NuGet package source and restore the NuGet packages, which will copy them from the repository to the global cache (so that the build can copy the corresponding DLLs from the global cache to the output directory).

However, maybe it is possible to specify the location of the cached NuGet packages when building the solution. I just thought of this possibility while writing this, so I haven't been able to fully investigate it. This seems reasonable to me, and my initial searches also seem to point toward this being possible.

So how do you handle NuGet dependencies now? Does your build obtain them from the global cache or have you found a way to point the build to a directory of your choice?

Tyson, thank you for writing. Currently, I use the standard tooling. I've lost that battle.

My opinion hasn't changed, but while it's possible to avoid package restore on .NET, I'm not aware of how to do that on .NET Core. I admit, however, that I haven't investigated this much.

I haven't done that much .NET Core development, and when I do, I typically do it to help other people. The things I help with typically relate to architecture, modelling, or testing. It can be hard for people to learn new things, so I aim at keeping the level of new things people have to absorb as low as possible.

Since people rarely come to me to learn package management, I don't want to rock that boat while I attempt to help people with something completely different. Therefore, I let them use their preferred approach, which is almost always the standard way.