The Liskov Substitution Principle as a profunctor by Mark Seemann

With a realistic example in C#.

Like the previous article on Postel's law as a profunctor, this article is part of a series titled Some design patterns as universal abstractions. And like the previous article, it's a bit of a stretch to include the present article in that series, since the Liskov Substitution Principle (LSP) isn't a design pattern, but rather a software design principle or heuristic. I still think, however, that the article fits the spirit of the article series, if not the letter.

As was also the case for the previous article, I don't claim that any of this is new. Michael Feathers and Rúnar Bjarnason blazed that trail long before me.

LSP distilled #

In more or less natural language, the LSP states that subtypes must preserve correctness. A subtype isn't allowed to change behaviour in such a way that client code breaks.

Note that subtypes are allowed to change behaviour. That's often the point of subtyping. By providing a subtype, you can change the behaviour of a system. You can, for example, override how messages are sent, so that an SMS becomes a Slack notification or a Tweet.

If client code 'originally' supplied correct input for sending an SMS, this input should also be valid for posting a Tweet.

Specifically (paraphrasing the Wikipedia entry as of early November 2021):

- Subtypes must be contravariant in input

- Subtypes must be covariant in output

- Preconditions must not be strengthened in the subtype

- Postconditions must not be weakened in the subtype

There's a bit more, but in this article, I'll focus on those rules. The first two we already know. Since any function is already a profunctor, we know that functions are contravariant in input and covariant in output.

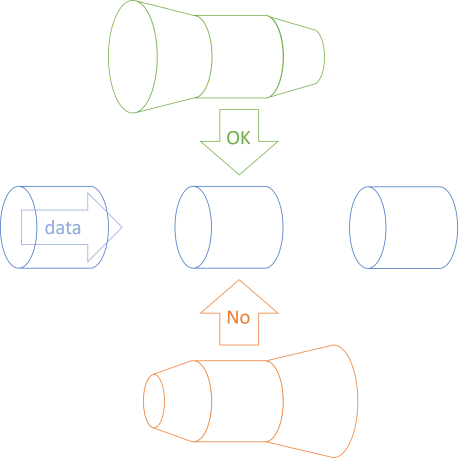

The LSP, however, isn't a rule about a single function. Rather, it's a rule about a family of functions. Think about a function a -> b as a pipe. You can replace the pipe segment with another pipe segment that has exactly the same shape, but replacing it with a flanged pipe also works, as long as the input flange is wider, and the nozzle narrower than the original pipe shape.

On the other hand, if you narrow the pipe at the input and widen it at the output, spillage will happen. That's essentially what the LSP states: The upper, green, flanged pipe is a good replacement for the supertype (the blue pipe in the middle), while the lower, orange, flanged pipe is not useful.

The previous article already described that visual metaphor when it comes to co- and contravariance, so this article will focus on pre- and postconditions. My conjecture is that this is just another take on co- and contravariance.

Supertype example #

When encountering statements about subtypes and supertypes, most people tend to think about object inheritance, but that's just one example. As I've previously outlined, anything that can 'act as' something else is a subtype of that something else. Specifically, an interface implementation is a subtype of the interface, and the interface itself the supertype.

Consider, as a supertype example, this interface from my book Code That Fits in Your Head:

public interface IReservationsRepository { Task Create(int restaurantId, Reservation reservation); Task<IReadOnlyCollection<Reservation>> ReadReservations( int restaurantId, DateTime min, DateTime max); Task<Reservation?> ReadReservation(int restaurantId, Guid id); Task Update(int restaurantId, Reservation reservation); Task Delete(int restaurantId, Guid id); }

Specifically, in this article, I'll focus exclusively on the ReadReservations method. You can imagine that there's an interface with only that method, or that when subtyping the interface in the following, we vary only that method and keep everything else fixed.

What might be the pre- and postconditions of the ReadReservations method?

ReadReservations preconditions #

The most basic kind of precondition is captured by the parameter types. In order to be able to call the method, you must supply an int and two DateTime instances. You can't omit any of them or replace one of the DateTime values with a Guid. In a statically typed language, this is obvious, and the compiler will take care of that.

Both int and DateTime are value types (structs), so they can't be null. Had one of the parameters been a reference type, it'd be appropriate to consider whether or not null constitutes valid input.

So far, we've only discussed static types. Of course a subtype must satisfy the compiler, but what other pre-conditions might be implied by ReadReservations?

The purpose of the method is to enable client code to query a data store and retrieve the reservations for a particular restaurant and in a particular time interval.

Is any restaurantId acceptable? 1? 0? -235?

It's probably a distraction that the restaurant ID is even an int. After all, you don't add IDs together, or multiply one with another one. That an ID is an integer is really just a leaky implementation detail - databases like it best when IDs are integers. I should actually have defined the restaurant ID as an opaque object with Value Object semantics, but I didn't (like other humans, I'm imperfect and lazy). The bottom line is that any number is as good as any other number. No precondition there.

What about the two DateTime parameters? Are DateTime.MinValue or DateTime.MaxValue valid values? Probably: If you'd like to retrieve all reservations in the past, you could ask for DateTime.MinValue as min and DateTime.Now as max. On the other hand, it'd be reasonable to require that min should be less than (or equal to?) max. That sounds like a proper precondition, and one that's difficult to express as a type.

We may also consider it a precondition that the object that implements the ReadReservations method is properly initialised, but I'll take that as a given. Making sure of that is the responsibility of the constructor, not the ReadReservations method.

To summarise, apart from the types and other things handled by the compiler, there's only one additional pre-condition: that min must be less than max.

Are there any postconditions?

ReadReservations postconditions #

The code base for the book obeys Command-Query Separation. Since ReadReservations returns data, it must be a Query. Thus, we can assume that calling it isn't supposed to change state. Thus, postconditions can only be statements about the return value.

Again, static typing takes care of the most fundamental postconditions. An implementation can't return a double or an UriBuilder. It must be a Task, and that task must compute an IReadOnlyCollection<Reservation>.

Why IReadOnlyCollection, by the way? That's my attempt at describing a particular postcondition as a type.

The IReadOnlyCollection<T> interface is a restriction of IEnumerable<T> that adds a Count. By adding the Count property, the interface strongly suggests that the collection is finite.

IEnumerable<T> implementations can be infinite sequences. These can be useful as functional alternatives to infinite loops, but are clearly not appropriate when retrieving reservations from a database.

The use of IReadOnlyCollection tells us about a postcondition: The collection of reservations is finite. This is, however, captured by the type. Any valid implementation of the interface ought to make that guarantee.

Is there anything else? Is it okay if the collection is empty? Yes, that could easily happen, if you have no reservations in the requested interval.

What else? Not much comes to mind, only that we'd expect the collection to be 'stable'. Technically, you could implement the GetEnumerator method so that it generates Count random Reservation objects every time you enumerate it, but none of the built-in implementations do that; that's quite a low bar, as postconditions go.

To summarise the postconditions: None, apart from a well-behaved implementation of IReadOnlyCollection<Reservation>.

SQL implementation #

According to the LSP, a subtype should be allowed to weaken preconditions. Keep in mind that I consider an interface implementation a subtype, so every implementation of ReadReservations constitutes a subtype. Consider the SQL Server-based implementation:

public async Task<IReadOnlyCollection<Reservation>> ReadReservations( int restaurantId, DateTime min, DateTime max) { var result = new List<Reservation>(); using var conn = new SqlConnection(ConnectionString); using var cmd = new SqlCommand(readByRangeSql, conn); cmd.Parameters.AddWithValue("@RestaurantId", restaurantId); cmd.Parameters.AddWithValue("@Min", min); cmd.Parameters.AddWithValue("@Max", max); await conn.OpenAsync().ConfigureAwait(false); using var rdr = await cmd.ExecuteReaderAsync().ConfigureAwait(false); while (await rdr.ReadAsync().ConfigureAwait(false)) result.Add(ReadReservationRow(rdr)); return result.AsReadOnly(); } private const string readByRangeSql = @" SELECT [PublicId], [At], [Name], [Email], [Quantity] FROM [dbo].[Reservations] WHERE [RestaurantId] = @RestaurantId AND @Min <= [At] AND [At] <= @Max";

This implementation actually doesn't enforce the precondition that min ought to be less than max. It doesn't have to, since the code will run even if that's not the case - the result set, if min is greater than max, will always be empty.

While perhaps not useful, weakening this precondition doesn't adversely affect well-behaved clients, and buggy clients are always going to receive empty results. If this implementation also fulfils all postconditions, it's already LSP-compliant.

Still, could it weaken preconditions even more, or in a different way?

Weakening of preconditions #

As Postel's law suggests, a method should be liberal in what it accepts. If it understands 'what the caller meant', it should perform the desired operation instead of insisting on the letter of the law.

Imagine that you receive a call where min is midnight June 6 and max is midnight June 5. While wrong, what do you think that the caller 'meant'?

The caller probably wanted to retrieve the reservations for June 5.

You could weaken that precondition by swapping back min and max if you detect that they've been swapped.

Let's assume, for the sake of argument, that we make the above ReadReservations implementation virtual. This enables us to inherit from SqlReservationsRepository and override the method:

public override Task<IReadOnlyCollection<Reservation>> ReadReservations( int restaurantId, DateTime min, DateTime max) { if (max < min) return base.ReadReservations(restaurantId, max, min); return base.ReadReservations(restaurantId, min, max); }

While this weakens preconditions, it breaks no existing clients because they all 'know' that they must pass the lesser value before the greater value.

Strengthening of postconditions #

Postel's law also suggests that a method should be conservative in what it sends. What could that mean?

In the case of ReadReservations, you may notice that the result set isn't explicitly sorted. Perhaps we'd like to also sort it on the date and time:

public override async Task<IReadOnlyCollection<Reservation>> ReadReservations( int restaurantId, DateTime min, DateTime max) { var query = min < max ? base.ReadReservations(restaurantId, min, max) : base.ReadReservations(restaurantId, max, min); var reservations = await query.ConfigureAwait(false); return reservations.OrderBy(r => r.At).ToList(); }

This implementation retains the weakened precondition from before, but now it also explicitly sorts the reservations on At.

Since no client code relies on sorting, this breaks no existing clients.

While the behaviour changes, it does so in a way that doesn't violate the original contract.

Profunctor #

While we've used terms such as weaken preconditions and strengthen postconditions, doesn't this look an awful lot like co- and contravariance?

I think it does, so let's rewrite the above implementation using the Reader profunctor.

First, we'll need to express the original method in the shape of a function like a -> b - that is: a function that takes a single input and returns a single output. While ReadReservations return a single value (a Task), it takes three input arguments. To make it fit the a -> b mould, we have to convert those three parameters to a Parameter Object.

This enables us to write the original implementation as a function:

Func<QueryParameters, Task<IReadOnlyCollection<Reservation>>> imp = q => base.ReadReservations(q.RestaurantId, q.Min, q.Max);

If we didn't want to weaken any preconditions or strengthen any postconditions, we could simply call imp and return its output.

The above weakened precondition can be expressed like this:

Func<QueryParameters, QueryParameters> pre = q => q.Min < q.Max ? new QueryParameters(q.RestaurantId, q.Min, q.Max) : new QueryParameters(q.RestaurantId, q.Max, q.Min);

Notice that this is a function from QueryParameters to QueryParameters. As above, it simply swaps Min and Max if required.

Likewise, we can express the strengthened postcondition as a function:

Func<Task<IReadOnlyCollection<Reservation>>, Task<IReadOnlyCollection<Reservation>>> post = t => t.Select(rs => rs.OrderBy(r => r.At).ToReadOnly());

The Select method exists because Task forms an asynchronous functor.

It's now possible to compose imp with pre and post using DiMap:

Func<QueryParameters, Task<IReadOnlyCollection<Reservation>>> composition = imp.DiMap(pre, post);

You can now call composition with the original arguments:

composition(new QueryParameters(restaurantId, min, max))

The output of such a function call is entirely equivalent to the above, subtyped ReadReservations implementation.

In case you've forgotten, the presence of a lawful DiMap function is what makes something a profunctor. We already knew that functions are profunctors, but now we've also seen that we can use this knowledge to weaken preconditions and strengthen postconditions.

It seems reasonable to conjecture that the LSP actually describes a profunctor.

It seems to me that the profunctor composition involved with the LSP always takes the specialised form where, for a function a -> b, the preprocessor (the contravariant mapping) always takes the form a -> a, and the postprocessor (the covariant mapping) always takes the form b -> b. This is because polymorphism must preserve the shape of the original function (a -> b).

Conclusion #

We already know that something contravariant in input and covariant in output is a profunctor candidate, but the Liskov Substitution Principle is usually expressed in terms of pre- and postconditions. Subtypes may weaken preconditions and strengthen postconditions, but not the other way around.

Evidence suggests that you can phrase these rules as a profunctor specialisation.

Comments

I think this depends on the language and your perspective on subtype polymorphism. In Java, a subclass

Yof a superclassXcan override a methodmonXthat has return typebwith a method onYthat has return typecprovidedcis a subtype ofb. To be clear, I am not saying that the instance returned fromY::mcan have runtime-typec, though that is also true. I am saying that the compile-time return type ofY::mcan bec.With this in mind, I am willing to argue that the postprocessor can take the form

b -> cprovidedcis a subtype ofb. As the programmer, that is how I think ofY::m. And yet, if someone has a instance ofYwith compile-time typeX, then callingmreturns something with compile-time typebby composing myb -> cfunction with the naturalc -> bfunction from subtype polymorphism to get theb -> bfunction that you suggested is required.Tyson, thank you for writing. Yes, true, that nuance may exist. This implies that the LSP is recursive, which really isn't surprising.

A function

a -> bmay be defined for typesaandb, where both are, themselves, polymorphic. So, ifais HttpStyleUriParser andbis MemberInfo, we'd have a functionFunc<HttpStyleUriParser, MemberInfo>(orHttpStyleUriParser -> MemberInfoif we want to stick with an ML-style type signature - we can imagine that it's an F# function).If

Func<HttpStyleUriParser, MemberInfo>is our polymorphic target signature, according to C# co- and contravariance, we're allowed to vary both input and output. We can, for example, widen the input type to UriParser and restrict the output type to Type. Thus, aspecificfunction of the typeFunc<UriParser, Type>is compatible with a general function of the typeFunc<HttpStyleUriParser, MemberInfo>:Func<HttpStyleUriParser, MemberInfo> general = specific;Thus, you're correct that both pre- and postprocessor may take the form

a' -> aandb -> b', wherea'is a supertype ofa, andbis a supertype ofb'. This is true for C# function delegates, since they're defined with theinandoutkeywords. You can putinandouton your own interface definitions as well, but most people don't bother (I rarely do, either).As with all practical recursion you need base cases to stop the recursion. While you can define polymorphic functions (or classes) where input parameters and return types are themselves polymorphic, etc., these should still obey the LSP.