ploeh blog danish software design

Serializing restaurant tables in F#

Using System.Text.Json, with and without Reflection.

This article is part of a short series of articles about serialization with and without Reflection. In this instalment I'll explore some options for serializing JSON with F# using the API built into .NET: System.Text.Json. I'm not going to use Json.NET in this article, but I've done similar things with that library in the past, so what's here is, at least, somewhat generalizable.

Natural numbers #

Before we start investigating how to serialize to and from JSON, we must have something to serialize. As described in the introductory article we'd like to parse and write restaurant table configurations like this:

{
  "singleTable": {
    "capacity": 16,
    "minimalReservation": 10
  }
}

On the other hand, I'd like to represent the Domain Model in a way that encapsulates the rules governing the model, making illegal states unrepresentable.

As the first step, we observe that the numbers involved are all natural numbers. In F# it's both idiomatic and easy to define a predicative data type:

type NaturalNumber = private NaturalNumber of int

Since it's defined with a private constructor we need to also supply a way to create valid values of the type:

module NaturalNumber =
    let tryCreate n = if n < 1 then None else Some (NaturalNumber n)

In this, as well as the other articles in this series, I've chosen to model the potential for errors with Option values. I could also have chosen to use Result if I wanted to communicate information along the 'error channel', but sticking with Option makes the code a bit simpler. Not so much in F# or Haskell, but once we reach C#, applicative validation becomes complicated.

There's no loss of generality in this decision, since both Option and Result are applicative functors.

> NaturalNumber.tryCreate -1;;

val it: NaturalNumber option = None

> let x = NaturalNumber.tryCreate 42;;

val x: NaturalNumber option = Some NaturalNumber 42

The tryCreate function enables client developers to create NaturalNumber values, and due to F#'s default equality and comparison implementation, you can even compare them:

> let y = NaturalNumber.tryCreate 2112;;

val y: NaturalNumber option = Some NaturalNumber 2112

> x < y;;

val it: bool = true

That's it for natural numbers. Three lines of code. Compare that to the Haskell implementation, which required eight lines of code. This is mostly due to F#'s private keyword, which Haskell doesn't have.

Domain Model #

Modelling a restaurant table follows in the same vein. One invariant I would like to enforce is that for a 'single' table, the minimal reservation should be a NaturalNumber less than or equal to the table's capacity. It doesn't make sense to configure a table for four with a minimum reservation of six.

In the same spirit as above, then, define this type:

type Table =
    private
    | SingleTable of NaturalNumber * NaturalNumber
    | CommunalTable of NaturalNumber

Once more the private keyword makes it impossible for client code to create instances directly, so we need a pair of functions to create values:

module Table =
    let trySingle capacity minimalReservation = option {
        let! cap = NaturalNumber.tryCreate capacity
        let! min = NaturalNumber.tryCreate minimalReservation
        if cap < min then return! None else return SingleTable (cap, min) }

    let tryCommunal = NaturalNumber.tryCreate >> Option.map CommunalTable

Notice that trySingle checks the invariant that the capacity must be greater than or equal to the minimalReservation.

Again, notice how much easier it is to define a predicative type in F#, compared to Haskell.

This isn't a competition between languages, and while F# certainly scores a couple of points here, Haskell has other advantages.

The point of this little exercise, so far, is that it encapsulates the contract implied by the Domain Model. It does this by using the static type system to its advantage.

JSON serialization by hand #

At the boundaries of applications, however, there are no static types. Is the static type system still useful in that situation?

For a long time, the most popular .NET library for JSON serialization was Json.NET, but these days I find the built-in API offered in the System.Text.Json namespace adequate. This is also the case here.

The original rationale for this article series was to demonstrate how serialization can be done without Reflection, so I'll start there and return to Reflection later.

In this article series, I consider the JSON format fixed. A single table should be rendered as shown above, and a communal table should be rendered like this:

{ "communalTable": { "capacity": 42 } }

Often in the real world you'll have to conform to a particular protocol format, or, even if that's not the case, being able to control the shape of the wire format is important to deal with backwards compatibility.

As I outlined in the introduction article you can usually find a more weakly typed API to get the job done. For serializing Table to JSON it looks like this:

let serializeTable = function
    | SingleTable (NaturalNumber capacity, NaturalNumber minimalReservation) ->
        let j = JsonObject ()
        j["singleTable"] <- JsonObject ()
        j["singleTable"]["capacity"] <- capacity
        j["singleTable"]["minimalReservation"] <- minimalReservation
        j.ToJsonString ()
    | CommunalTable (NaturalNumber capacity) ->
        let j = JsonObject ()
        j["communalTable"] <- JsonObject ()
        j["communalTable"]["capacity"] <- capacity
        j.ToJsonString ()

In order to separate concerns, I've defined this functionality in a new module that references the module that defines the Domain Model. The serializeTable function pattern-matches on SingleTable and CommunalTable to write two different JsonObject objects, using the JSON API's underlying Document Object Model (DOM).

JSON deserialization by hand #

You can also go the other way, and when it looks more complicated, it's because it is. When serializing an encapsulated value, not a lot can go wrong because the value is already valid. When deserializing a JSON string, on the other hand, all sorts of things can go wrong: It might not even be a valid string, or the string may not be valid JSON, or the JSON may not be a valid Table representation, or the values may be illegal, etc.

Here I found it appropriate to first define a small API of parsing functions, mostly in order to make the object-oriented API more composable. First, I need some code that looks at the root JSON object to determine which kind of table it is (if it's a table at all). I found it appropriate to do that as a pair of active patterns:

let private (|Single|_|) (node : JsonNode) =
    match node["singleTable"] with
    | null -> None
    | tn -> Some tn

let private (|Communal|_|) (node : JsonNode) =
    match node["communalTable"] with
    | null -> None
    | tn -> Some tn

It turned out that I also needed a function to even check if a string is a valid JSON document:

let private tryParseJson (candidate : string) =
    try JsonNode.Parse candidate |> Some
    with | :? System.Text.Json.JsonException -> None

If there's a way to do that without a try/with expression, I couldn't find it. Likewise, trying to parse an integer turns out to be surprisingly complicated:

let private tryParseInt (node : JsonNode) =
    match node with
    | null -> None
    | _ ->
        if node.GetValueKind () = JsonValueKind.Number
        then
            try node |> int |> Some
            with | :? FormatException -> None // Thrown on decimal numbers
        else None

Both tryParseJson and tryParseInt are, however, general-purpose functions, so if you have a lot of JSON you need to parse, you can put them in a reusable library.

With those building blocks you can now define a function to parse a Table:

let tryDeserializeTable (candidate : string) =
    match tryParseJson candidate with
    | Some (Single node) -> option {
        let! capacity = node["capacity"] |> tryParseInt
        let! minimalReservation = node["minimalReservation"] |> tryParseInt
        return! Table.trySingle capacity minimalReservation }
    | Some (Communal node) -> option {
        let! capacity = node["capacity"] |> tryParseInt
        return! Table.tryCommunal capacity }
    | _ -> None

Since both serialisation and deserialization are based on string values, you should write automated tests that verify that the code works, and in fact, I did. Here are a few examples:

[<Fact>]
let ``Deserialize single table for 4`` () =
    let json = """{"singleTable":{"capacity":4,"minimalReservation":3}}"""
    let actual = tryDeserializeTable json
    Table.trySingle 4 3 =! actual

[<Fact>]
let ``Deserialize non-table`` () =
    let json = """{"foo":42}"""
    let actual = tryDeserializeTable json
    None =! actual

Apart from module declaration and imports etc. this hand-written JSON capability requires 46 lines of code, although, to be fair, some of that code (tryParseJson and tryParseInt) are general-purpose functions that belong in a reusable library. Can we do better with static types and Reflection?

JSON serialisation based on types #

The static JsonSerializer class comes with Serialize<T> and Deserialize<T> methods that use Reflection to convert a statically typed object to and from JSON. You can define a type (a Data Transfer Object (DTO) if you will) and let Reflection do the hard work.

In Code That Fits in Your Head I explain how you're usually better off separating the role of serialization from the role of Domain Model. One way to do that is exactly by defining a DTO for serialisation, and let the Domain Model remain exclusively to model the rules of the application. The above Table type plays the latter role, so we need new DTO types:

type CommunalTableDto = { Capacity : int }

type SingleTableDto = { Capacity : int; MinimalReservation : int }

type TableDto = {
    CommunalTable : CommunalTableDto option
    SingleTable : SingleTableDto option }

One way to model a sum type with a DTO is to declare both cases as option fields. While it does allow illegal states to be representable (i.e. both kinds of tables defined at the same time, or none of them present) this is only par for the course at the application boundary.

While you can serialize values of that type, by default the generated JSON doesn't have the right format:

> val dto: TableDto = { CommunalTable = Some { Capacity = 42 }

SingleTable = None }

> JsonSerializer.Serialize dto;;

val it: string = "{"CommunalTable":{"Capacity":42},"SingleTable":null}"

There are two problems with the generated JSON document:

- The casing is wrong

- The null value shouldn't be there

Neither of those is too hard to address, but it does make the API a bit more awkward to use, as this test demonstrates:

[<Fact>]
let ``Serialize communal table via reflection`` () =
    let dto = { CommunalTable = Some { Capacity = 42 }; SingleTable = None }

    let actual =
        JsonSerializer.Serialize (
            dto,
            JsonSerializerOptions (
                PropertyNamingPolicy = JsonNamingPolicy.CamelCase,
                IgnoreNullValues = true ))

    """{"communalTable":{"capacity":42}}""" =! actual

You can, of course, define this particular serialization behaviour as a reusable function, so it's not a problem that you can't address. I just wanted to include this, since it's part of the overall work that you have to do in order to make this work.

JSON deserialisation based on types #

To allow parsing of JSON into the above DTO the Reflection-based Deserialize method pretty much works out of the box, although again, it needs to be configured. Here's a passing test that demonstrates how that works:

[<Fact>]
let ``Deserialize single table via reflection`` () =
    let json = """{"singleTable":{"capacity":4,"minimalReservation":2}}"""

    let actual =
        JsonSerializer.Deserialize<TableDto> (
            json,
            JsonSerializerOptions ( PropertyNamingPolicy = JsonNamingPolicy.CamelCase ))

    {
        CommunalTable = None
        SingleTable = Some { Capacity = 4; MinimalReservation = 2 }
    } =! actual

There's only a difference in casing, so you'd expect the Deserialize method to be a Tolerant Reader, but no. It's very particular about that, so the JsonNamingPolicy.CamelCase configuration is necessary. Perhaps the API designers found that explicit is better than implicit.

In any case, you could package that in a reusable Deserialize function that has all the options that are appropriate in a particular code context, so not a big deal. That takes care of actually writing and parsing JSON, but that's only half the battle. This only gives you a way to parse and serialize the DTO. What you ultimately want is to persist or dehydrate Table data.

Converting DTO to Domain Model, and vice versa #

As usual, converting a nice, encapsulated value to a more relaxed format is safe and trivial:

let toTableDto = function
    | SingleTable (NaturalNumber capacity, NaturalNumber minimalReservation) ->
        {
            CommunalTable = None
            SingleTable =
                Some
                    {
                        Capacity = capacity
                        MinimalReservation = minimalReservation
                    }
        }
    | CommunalTable (NaturalNumber capacity) ->
        { CommunalTable = Some { Capacity = capacity }; SingleTable = None }

Going the other way is fundamentally a parsing exercise:

let tryParseTableDto candidate =
    match candidate.CommunalTable, candidate.SingleTable with
    | Some { Capacity = capacity }, None -> Table.tryCommunal capacity
    | None, Some { Capacity = capacity; MinimalReservation = minimalReservation } ->
        Table.trySingle capacity minimalReservation
    | _ -> None

Such an operation may fail, so the result is a Table option. It could also have been a Result<Table, 'something>, if you wanted to return information about errors when things go wrong. It makes the code marginally more complex, but doesn't change the overall thrust of this exploration.

Ironically, while tryParseTableDto is actually more complex than toTableDto it looks smaller, or at least denser.

Let's take stock of the type-based alternative. It requires 26 lines of code, distributed over three DTO types and the two conversions tryParseTableDto and toTableDto, but here I haven't counted configuration of Serialize and Deserialize, since I left that to each test case that I wrote. Since all of this code generally stays within 80 characters in line width, that would realistically add another 10 lines of code, for a total around 36 lines.

This is smaller than the DOM-based code, although of the same order of magnitude.

Conclusion #

In this article I've explored two alternatives for converting a well-encapsulated Domain Model to and from JSON. One option is to directly manipulate the DOM. Another option is to take a more declarative approach and define types that model the shape of the JSON data, and then leverage type-based automation (here, Reflection) to automatically parse and write the JSON.

I've deliberately chosen a Domain Model with some constraints, in order to demonstrate how persisting a non-trivial data model might work. With that setup, writing 'loosely coupled' code directly against the DOM requires 46 lines of code, while taking advantage of type-based automation requires 36 lines of code. Contrary to the Haskell example, Reflection does seem to edge out a win this round.

Serializing restaurant tables in Haskell

Using Aeson, with and without generics.

This article is part of a short series of articles about serialization with and without Reflection. In this instalment I'll explore some options for serializing JSON using Aeson.

The source code is available on GitHub.

Natural numbers #

Before we start investigating how to serialize to and from JSON, we must have something to serialize. As described in the introductory article we'd like to parse and write restaurant table configurations like this:

{
  "singleTable": {
    "capacity": 16,
    "minimalReservation": 10
  }
}

On the other hand, I'd like to represent the Domain Model in a way that encapsulates the rules governing the model, making illegal states unrepresentable.

As the first step, we observe that the numbers involved are all natural numbers. While I'm aware that Haskell has a built-in Nat type, I choose not to use it here, for a couple of reasons. One is that Nat is intended for type-level programming, and while this might be useful here, I don't want to pull in more exotic language features than are required. Another reason is that, in this domain, I want to model natural numbers as excluding zero (and I honestly don't remember if Nat allows zero, but I think that it does..?).

Another option is to use Peano numbers, but again, for didactic reasons, I'll stick with something a bit more idiomatic.

You can easily introduce a wrapper over, say, Integer, to model natural numbers:

newtype Natural = Natural Integer deriving (Eq, Ord, Show)

This, however, doesn't prevent you from writing Natural (-1), so we need to make this a predicative data type. The first step is to only export the type, but not its data constructor:

module Restaurants (
  Natural,
  -- More exports here...
  ) where

But this makes it impossible for client code to create values of the type, so we need to supply a smart constructor:

tryNatural :: Integer -> Maybe Natural
tryNatural n
  | n < 1 = Nothing
  | otherwise = Just (Natural n)

In this, as well as the other articles in this series, I've chosen to model the potential for errors with Maybe values. I could also have chosen to use Either if I wanted to communicate information along the 'error channel', but sticking with Maybe makes the code a bit simpler. Not so much in Haskell or F#, but once we reach C#, applicative validation becomes complicated.

There's no loss of generality in this decision, since both Maybe and Either are Applicative instances.
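To illustrate that claim, here is a minimal sketch of what an Either-based variant might look like. The names NaturalError, tryNaturalE and tryPairE are hypothetical and not part of the article's code base; the Natural wrapper is restated only so that the fragment compiles on its own.

```haskell
-- Restated from the article so that this sketch is self-contained.
newtype Natural = Natural Integer deriving (Eq, Ord, Show)

-- Hypothetical error type (an assumption, not from the article).
newtype NaturalError = NotPositive Integer deriving (Eq, Show)

-- Either-based counterpart of tryNatural: same check, but the failure
-- case carries the offending input along the 'error channel'.
tryNaturalE :: Integer -> Either NaturalError Natural
tryNaturalE n
  | n < 1 = Left (NotPositive n)
  | otherwise = Right (Natural n)

-- Applicative composition looks the same as it would for Maybe.
-- For Either, the first Left encountered wins.
tryPairE :: Integer -> Integer -> Either NaturalError (Natural, Natural)
tryPairE x y = (,) <$> tryNaturalE x <*> tryNaturalE y
```

With these definitions, tryPairE 4 3 produces a Right value holding both numbers, while tryPairE 4 0 produces Left (NotPositive 0). The shape of the composing code is unchanged, which is why Maybe is enough for the purposes of this series.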

With the tryNatural function you can now (attempt to) create Natural values:

ghci> tryNatural (-1)
Nothing
ghci> x = tryNatural 42
ghci> x
Just (Natural 42)

This enables client developers to create Natural values, and due to the type's Ord instance, you can even compare them:

ghci> y = tryNatural 2112
ghci> x < y
True

Even so, there will be cases when you need to extract the underlying Integer from a Natural value. You could supply a normal function for that purpose, but in order to make some of the following code a little more elegant, I chose to do it with pattern synonyms:

{-# COMPLETE N #-}
pattern N :: Integer -> Natural
pattern N i <- Natural i

That needs to be exported as well.

So, eight lines of code to declare a predicative type that models a natural number. Incidentally, this'll be 2-3 lines of code in F#.

Domain Model #

Modelling a restaurant table follows in the same vein. One invariant I would like to enforce is that for a 'single' table, the minimal reservation should be a Natural number less than or equal to the table's capacity. It doesn't make sense to configure a table for four with a minimum reservation of six.

In the same spirit as above, then, define this type:

data SingleTable = SingleTable
  { singleCapacity :: Natural
  , minimalReservation :: Natural
  } deriving (Eq, Ord, Show)

Again, only export the type, but not its data constructor. In order to extract values, then, supply another pattern synonym:

{-# COMPLETE SingleT #-}
pattern SingleT :: Natural -> Natural -> SingleTable
pattern SingleT c m <- SingleTable c m

Finally, define a Table type and two smart constructors:

data Table = Single SingleTable | Communal Natural deriving (Eq, Show)

trySingleTable :: Integer -> Integer -> Maybe Table
trySingleTable capacity minimal = do
  c <- tryNatural capacity
  m <- tryNatural minimal
  if c < m then Nothing else Just (Single (SingleTable c m))

tryCommunalTable :: Integer -> Maybe Table
tryCommunalTable = fmap Communal . tryNatural

Notice that trySingleTable checks the invariant that the capacity must be greater than or equal to the minimal reservation.

The point of this little exercise, so far, is that it encapsulates the contract implied by the Domain Model. It does this by using the static type system to its advantage.

JSON serialization by hand #

At the boundaries of applications, however, there are no static types. Is the static type system still useful in that situation?

For Haskell, the most common JSON library is Aeson, and I admit that I'm no expert. Thus, it's possible that there's an easier way to serialize to and deserialize from JSON. If so, please leave a comment explaining the alternative.

The original rationale for this article series was to demonstrate how serialization can be done without Reflection, or, in the case of Haskell, Generics (not to be confused with .NET generics, which in Haskell usually is called parametric polymorphism). We'll return to Generics later in this article.

In this article series, I consider the JSON format fixed. A single table should be rendered as shown above, and a communal table should be rendered like this:

{ "communalTable": { "capacity": 42 } }

Often in the real world you'll have to conform to a particular protocol format, or, even if that's not the case, being able to control the shape of the wire format is important to deal with backwards compatibility.

As I outlined in the introduction article you can usually find a more weakly typed API to get the job done. For serializing Table to JSON it looks like this:

newtype JSONTable = JSONTable Table deriving (Eq, Show)

instance ToJSON JSONTable where
  toJSON (JSONTable (Single (SingleT (N c) (N m)))) =
    object ["singleTable" .= object [
      "capacity" .= c,
      "minimalReservation" .= m]]
  toJSON (JSONTable (Communal (N c))) =
    object ["communalTable" .= object ["capacity" .= c]]

In order to separate concerns, I've defined this functionality in a new module that references the module that defines the Domain Model. Thus, to avoid orphan instances, I've defined a JSONTable newtype wrapper that I then make a ToJSON instance.

The toJSON function pattern-matches on Single and Communal to write two different Values, using Aeson's underlying Document Object Model (DOM).

JSON deserialization by hand #

You can also go the other way, and when it looks more complicated, it's because it is. When serializing an encapsulated value, not a lot can go wrong because the value is already valid. When deserializing a JSON string, on the other hand, all sorts of things can go wrong: It might not even be a valid string, or the string may not be valid JSON, or the JSON may not be a valid Table representation, or the values may be illegal, etc.

It's no surprise, then, that the FromJSON instance is bigger:

instance FromJSON JSONTable where
  parseJSON (Object v) = do
    single <- v .:? "singleTable"
    communal <- v .:? "communalTable"
    case (single, communal) of
      (Just s, Nothing) -> do
        capacity <- s .: "capacity"
        minimal <- s .: "minimalReservation"
        case trySingleTable capacity minimal of
          Nothing -> fail "Expected natural numbers."
          Just t -> return $ JSONTable t
      (Nothing, Just c) -> do
        capacity <- c .: "capacity"
        case tryCommunalTable capacity of
          Nothing -> fail "Expected a natural number."
          Just t -> return $ JSONTable t
      _ -> fail "Expected exactly one of singleTable or communalTable."
  parseJSON _ = fail "Expected an object."

I could probably have done this more succinctly if I'd spent even more time on it than I already did, but it gets the job done and demonstrates the point. Instead of relying on run-time Reflection, the FromJSON instance is, unsurprisingly, a parser, composed from Aeson's specialised parser combinator API.
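As one possible direction for tightening such a parser, the following sketch uses Aeson's withObject and a small helper that lifts a Maybe into the Parser monad. It's only an illustration under stated assumptions: orFail, tryPositive and parseCommunalCapacity are hypothetical names, tryPositive is a stand-in for tryNatural so that the fragment doesn't depend on the Restaurants module, and only the communal case is handled.

```haskell
{-# LANGUAGE OverloadedStrings #-}

import Data.Aeson
import Data.Aeson.Types (Parser, parseMaybe)

-- Hypothetical helper (not from the article): turn a Maybe into a Parser,
-- failing with the supplied message on Nothing.
orFail :: String -> Maybe a -> Parser a
orFail msg = maybe (fail msg) return

-- Stand-in for the article's tryNatural. It returns the Integer unchanged
-- instead of wrapping it, to keep the sketch self-contained.
tryPositive :: Integer -> Maybe Integer
tryPositive n
  | n < 1 = Nothing
  | otherwise = Just n

-- Parses only documents shaped like {"communalTable":{"capacity":n}}.
-- withObject rejects non-object values, which replaces the catch-all
-- 'parseJSON _ = fail ...' clause.
parseCommunalCapacity :: Value -> Parser Integer
parseCommunalCapacity = withObject "table" $ \v -> do
  c <- v .: "communalTable"
  capacity <- c .: "capacity"
  orFail "Expected a natural number." (tryPositive capacity)
```

You can try it with an expression such as decode "{\"communalTable\":{\"capacity\":42}}" >>= parseMaybe parseCommunalCapacity, which should succeed for a positive capacity and produce Nothing otherwise. Whether this is really an improvement over nested case expressions is a matter of taste.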

Since both serialisation and deserialization are based on string values, you should write automated tests that verify that the code works.

Apart from module declaration and imports etc. this hand-written JSON capability requires 27 lines of code. Can we do better with static types and Generics?

JSON serialisation based on types #

The intent with the Aeson library is that you define a type (a Data Transfer Object (DTO) if you will), and then let 'compiler magic' do the rest. In Haskell, it's not run-time Reflection, but a compilation technology called Generics. As I understand it, it automatically 'writes' the serialization and parsing code and turns it into machine code as part of normal compilation.

You're supposed to first turn on the {-# LANGUAGE DeriveGeneric #-} language pragma and then tell the compiler to automatically derive Generic for the DTO in question. You'll see an example of that shortly.

It's a fairly flexible system that you can tweak in various ways, but if it's possible to do it directly with the above Table type, please leave a comment explaining how. I tried, but couldn't make it work. To be clear, I could make it serializable, but not to the above JSON format. After enough Aeson Whac-A-Mole I decided to change tactics.

In Code That Fits in Your Head I explain how you're usually better off separating the role of serialization from the role of Domain Model. The way to do that is exactly by defining a DTO for serialisation, and let the Domain Model remain exclusively to model the rules of the application. The above Table type plays the latter role, so we need new DTO types.

We may start with the building blocks:

newtype CommunalDTO = CommunalDTO
  { communalCapacity :: Integer
  } deriving (Eq, Show, Generic)

Notice how it declaratively derives Generic, which works because of the DeriveGeneric language pragma.

From here, in principle, all that you need is just a single declaration to make it serializable:

instance ToJSON CommunalDTO

While it does serialize to JSON, it doesn't have the right format:

{ "communalCapacity": 42 }

The property name should be capacity, not communalCapacity. Why did I call the record field communalCapacity instead of capacity? Can't I just fix my CommunalDTO record?

Unfortunately, I can't just do that, because I also need a capacity JSON property for the single-table case, and Haskell isn't happy about duplicated field names in the same module. (This language feature truly is one of the weak points of Haskell.)

Instead, I can tweak the Aeson rules by supplying an Options value to the instance definition:

communalJSONOptions :: Options
communalJSONOptions =
  defaultOptions {
    fieldLabelModifier = \s -> case s of
      "communalCapacity" -> "capacity"
      _ -> s }

instance ToJSON CommunalDTO where
  toJSON = genericToJSON communalJSONOptions
  toEncoding = genericToEncoding communalJSONOptions

This instructs the compiler to modify how it generates the serialization code, and the generated JSON fragment is now correct.

We can do the same with the single-table case:

data SingleDTO = SingleDTO
  { singleCapacity :: Integer
  , minimalReservation :: Integer
  } deriving (Eq, Show, Generic)

singleJSONOptions :: Options
singleJSONOptions =
  defaultOptions {
    fieldLabelModifier = \s -> case s of
      "singleCapacity" -> "capacity"
      "minimalReservation" -> "minimalReservation"
      _ -> s }

instance ToJSON SingleDTO where
  toJSON = genericToJSON singleJSONOptions
  toEncoding = genericToEncoding singleJSONOptions

This takes care of that case, but we still need a container type that will hold either one or the other:

data TableDTO = TableDTO
  { singleTable :: Maybe SingleDTO
  , communalTable :: Maybe CommunalDTO
  } deriving (Eq, Show, Generic)

tableJSONOptions :: Options
tableJSONOptions =
  defaultOptions { omitNothingFields = True }

instance ToJSON TableDTO where
  toJSON = genericToJSON tableJSONOptions
  toEncoding = genericToEncoding tableJSONOptions

One way to model a sum type with a DTO is to declare both cases as Maybe fields. While it does allow illegal states to be representable (i.e. both kinds of tables defined at the same time, or none of them present) this is only par for the course at the application boundary.

That's quite a bit of infrastructure to stand up, but at least most of it can be reused for parsing.

JSON deserialisation based on types #

To allow parsing of JSON into the above DTO we can make them all FromJSON instances, e.g.:

instance FromJSON CommunalDTO where
  parseJSON = genericParseJSON communalJSONOptions

Notice that you can reuse the same communalJSONOptions used for the ToJSON instance. Repeat that exercise for the two other record types.
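For completeness, here is a sketch of what repeating the exercise might look like. The DTO types and Options values are restated (in slightly condensed form, with a local rename helper that is my own shorthand) so that the fragment compiles on its own with only aeson as a dependency. The FromJSON instances for SingleDTO and TableDTO are an assumption about what the remaining two instances look like, following the pattern of the CommunalDTO instance.

```haskell
{-# LANGUAGE DeriveGeneric #-}
{-# LANGUAGE OverloadedStrings #-}

import Data.Aeson
import GHC.Generics (Generic)

-- DTO types, restated from the article.
newtype CommunalDTO = CommunalDTO { communalCapacity :: Integer }
  deriving (Eq, Show, Generic)

data SingleDTO = SingleDTO
  { singleCapacity :: Integer
  , minimalReservation :: Integer
  } deriving (Eq, Show, Generic)

data TableDTO = TableDTO
  { singleTable :: Maybe SingleDTO
  , communalTable :: Maybe CommunalDTO
  } deriving (Eq, Show, Generic)

-- Options values, equivalent to the ones defined for the ToJSON instances.
communalJSONOptions :: Options
communalJSONOptions = defaultOptions { fieldLabelModifier = rename }
  where
    rename "communalCapacity" = "capacity"
    rename s = s

singleJSONOptions :: Options
singleJSONOptions = defaultOptions { fieldLabelModifier = rename }
  where
    rename "singleCapacity" = "capacity"
    rename s = s

tableJSONOptions :: Options
tableJSONOptions = defaultOptions { omitNothingFields = True }

-- One FromJSON instance per DTO, each reusing its Options value.
instance FromJSON CommunalDTO where
  parseJSON = genericParseJSON communalJSONOptions

instance FromJSON SingleDTO where
  parseJSON = genericParseJSON singleJSONOptions

instance FromJSON TableDTO where
  parseJSON = genericParseJSON tableJSONOptions
```

With these instances in scope, an expression like decode "{\"communalTable\":{\"capacity\":42}}" :: Maybe TableDTO should produce a TableDTO whose singleTable field is Nothing and whose communalTable field holds a CommunalDTO 42.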

That's only half the battle, though, since this only gives you a way to parse and serialize the DTO. What you ultimately want is to persist or dehydrate Table data.

Converting DTO to Domain Model, and vice versa #

As usual, converting a nice, encapsulated value to a more relaxed format is safe and trivial:

toTableDTO :: Table -> TableDTO
toTableDTO (Single (SingleT (N c) (N m))) =
  TableDTO (Just (SingleDTO c m)) Nothing
toTableDTO (Communal (N c)) = TableDTO Nothing (Just (CommunalDTO c))

Going the other way is fundamentally a parsing exercise:

tryParseTable :: TableDTO -> Maybe Table
tryParseTable (TableDTO (Just (SingleDTO c m)) Nothing) = trySingleTable c m
tryParseTable (TableDTO Nothing (Just (CommunalDTO c))) = tryCommunalTable c
tryParseTable _ = Nothing

Such an operation may fail, so the result is a Maybe Table. It could also have been an Either something Table, if you wanted to return information about errors when things go wrong. It makes the code marginally more complex, but doesn't change the overall thrust of this exploration.

Let's take stock of the type-based alternative. It requires 62 lines of code, distributed over three DTO types, their Options, their ToJSON and FromJSON instances, and finally the two conversions tryParseTable and toTableDTO.

Conclusion #

In this article I've explored two alternatives for converting a well-encapsulated Domain Model to and from JSON. One option is to directly manipulate the DOM. Another option is to take a more declarative approach and define types that model the shape of the JSON data, and then leverage type-based automation (here, Generics) to automatically produce the code that parses and writes the JSON.

I've deliberately chosen a Domain Model with some constraints, in order to demonstrate how persisting a non-trivial data model might work. With that setup, writing 'loosely coupled' code directly against the DOM requires 27 lines of code, while 'taking advantage' of type-based automation requires 62 lines of code.

To be fair, the dice don't always land that way. You can't infer a general rule from a single example, and it's possible that I could have done something clever with Aeson to reduce the code. Even so, I think that there's a conclusion to be drawn, and it's this:

Type-based automation (Generics, or run-time Reflection) may seem simple at first glance. Just declare a type and let some automation library do the rest. It may happen, however, that you need to tweak the defaults so much that it would be easier to skip the type-based approach and instead manipulate the DOM directly.

I love static type systems, but I'm also watchful of their limitations. There's likely to be an inflection point where, on the one side, a type-based declarative API is best, while on the other side of that point, a more 'lightweight' approach is better.

The position of such an inflection point will vary from context to context. Just be aware of the possibility, and explore alternatives if things begin to feel awkward.

Serialization with and without Reflection

An investigation of alternatives.

I recently wrote a tweet that caused more responses than usual:

"A decade ago, I used .NET Reflection so often that I know most the the API by heart.

"Since then, I've learned better ways to solve my problems. I can't remember when was the last time I used .NET Reflection. I never need it.

"Do you?"

Most people who read my tweets are programmers, and some are, perhaps, not entirely neurotypical, but I intended the last paragraph to be a rhetorical question. My point, really, was that if I tell you it's possible to do without Reflection, one or two readers might keep that in mind and at least explore options the next time the urge to use Reflection arises.

A common response was that Reflection is useful for (de)serialization of data. These days, the most common case is going to and from JSON, but the problem is similar if the format is XML, CSV, or another format. In a sense, even reading from and writing to a database is a kind of serialization.

In this little series of articles, I'm going to explore some alternatives to Reflection. I'll use the same example throughout, and I'll stick to JSON, but you can easily extrapolate to other serialization formats.

Table layouts #

As always, I find the example domain of online restaurant reservation systems to be so rich as to furnish a useful example. Imagine a multi-tenant service that enables restaurants to take and manage reservations.

When a new reservation request arrives, the system has to make a decision on whether to accept or reject the request. The layout, or configuration, of tables plays a role in that decision.

Such a multi-tenant system may have an API for configuring the restaurant; essentially, entering data into the system about the size and policies regarding tables in a particular restaurant.

Most restaurants have 'normal' tables where, if you reserve a table for three, you'll have the entire table for a duration. Some restaurants also have one or more communal tables, typically bar seating where you may get a view of the kitchen. Quite a few high-end restaurants have tables like these, because it enables them to cater to single diners without reserving an entire table that could instead have served two paying customers.

In Copenhagen, on the other hand, it's also not uncommon to have a special room for larger parties. I think this has something to do with the general age of the buildings in the city. Most establishments are situated in older buildings, with all the trappings, including load-bearing walls, cellars, etc. As part of a restaurant's location, there may be a big cellar room, second-story room, or other room that's not practical for the daily operation of the place, but which works for parties of, say, 15-30 people. Such 'private dining' rooms can be used for private occasions or company outings.

A maître d'hôtel may wish to configure the system with a variety of tables, including communal tables, and private dining tables as described above.

One way to model such requirements is to distinguish between two kinds of tables: communal tables and 'single' tables, where single tables come with an additional property that models the minimal reservation required to reserve that table. A JSON representation might look like this:

    {
      "singleTable": {
        "capacity": 16,
        "minimalReservation": 10
      }
    }

This may represent a private dining table that seats up to sixteen people, and where the maître d'hôtel has decided to only accept reservations for at least ten guests.

A singleTable can also be used to model 'normal' tables without special limits. If the restaurant has a table for four, but is ready to accept a reservation for one person, you can configure a table for four, with a minimum reservation of one.

Communal tables are different, though:

{ "communalTable": { "capacity": 10 } }

Why not just model that as ten single tables that each seat one?

You don't want to do that because you want to make sure that parties can eat together. Some restaurants have more than one communal table. Imagine that you only have two communal tables of ten seats each. What happens if you model this as twenty single-person tables?

If you do that, you may accept reservations for parties of six, six, and six, because 6 + 6 + 6 = 18 < 20. When those three groups arrive, however, you discover that you have to split one of the parties! The party getting separated may not like that at all, and you are, after all, in the hospitality business.
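The arithmetic above can be checked with a small sketch. The `SeatingCheck` class and its method names are hypothetical, invented for this illustration only. The first method treats every seat as its own table, while the second tries to place each party at a single communal table without splitting it.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Three parties of six, two communal tables of ten seats each.
var parties = new[] { 6, 6, 6 };
var tables = new[] { 10, 10 };

Console.WriteLine(SeatingCheck.FitsAsSingleSeats(parties, tables));    // True
Console.WriteLine(SeatingCheck.FitsWithoutSplitting(parties, tables)); // False

// Hypothetical helper, for illustration only.
public static class SeatingCheck
{
    // Naive model: every seat is a one-person table, so only the totals count.
    public static bool FitsAsSingleSeats(
        IReadOnlyCollection<int> parties, IReadOnlyCollection<int> tables)
    {
        return parties.Sum() <= tables.Sum();
    }

    // Communal model: each party must sit together at one table.
    // Tries every assignment recursively, which is fine for a handful of parties.
    public static bool FitsWithoutSplitting(
        IReadOnlyList<int> parties, IReadOnlyList<int> tables)
    {
        return Place(parties, 0, tables.ToArray());
    }

    private static bool Place(IReadOnlyList<int> parties, int index, int[] free)
    {
        if (index == parties.Count)
            return true;
        for (var t = 0; t < free.Length; t++)
        {
            if (free[t] < parties[index])
                continue;
            free[t] -= parties[index];
            var ok = Place(parties, index + 1, free);
            free[t] += parties[index]; // undo before trying the next table
            if (ok)
                return true;
        }
        return false;
    }
}
```

The naive model accepts all three reservations because it only compares 18 to 20, whereas the communal model rejects the third party: after seating two parties, each table has only four free seats.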

Exploration #

In each article in this short series, I'll explore serialization with and without Reflection in a few languages. I'll start with Haskell, since that language doesn't have run-time Reflection. It does have a related facility called generics, not to be confused with .NET or Java generics, which in Haskell are called parametric polymorphism. It's confusing, I know.

Haskell generics look a bit like .NET Reflection, and there's some overlap, but it's not quite the same. The main difference is that Haskell generic programming all 'resolves' at compile time, so there's no run-time Reflection in Haskell.

If you don't care about Haskell, you can skip that article.

- Serializing restaurant tables in Haskell

- Serializing restaurant tables in F#

- Serializing restaurant tables in C#

As you can see, the next article repeats the exercise in F#, and if you also don't care about that language, you can skip that article as well.

The C# article, on the other hand, should be readable to not only C# programmers, but also developers who work in sufficiently equivalent languages.

Descriptive, not prescriptive #

The purpose of this article series is only to showcase alternatives. Based on the reactions my tweet elicited I take it that some people can't imagine how serialisation might look without Reflection.

It is not my intent that you should eschew the Reflection-based APIs available in your languages. In .NET, for example, a framework like ASP.NET MVC expects you to model JSON or XML as Data Transfer Objects. This gives you an illusion of static types at the boundary.

Even a Haskell web library like Servant expects you to model web APIs with static types.

When working with such a framework, it doesn't always pay to fight against its paradigm. When I work with ASP.NET, I define DTOs just like everyone else. On the other hand, if communicating with a backend system, I sometimes choose to skip static types and instead work directly with a JSON Document Object Model (DOM).

I occasionally find that it better fits my use case, but it's not the majority of times.
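To give an impression of what working directly with a JSON DOM can look like in .NET, here's a minimal sketch based on `System.Text.Json.Nodes`. The `TableJson` class and its two methods are hypothetical names chosen for this illustration; they aren't taken from the articles in the series, which may well structure things differently.

```csharp
using System;
using System.Text.Json.Nodes;

var json = "{ \"singleTable\": { \"capacity\": 16, \"minimalReservation\": 10 } }";

Console.WriteLine(TableJson.TryReadSingleTable(json)); // (16, 10)
Console.WriteLine(TableJson.WriteCommunalTable(10));   // {"communalTable":{"capacity":10}}

// Hypothetical helper, for illustration only.
public static class TableJson
{
    // Returns (capacity, minimalReservation), or null if a property is missing.
    // Input that isn't valid JSON, or has values of the wrong kind, still throws.
    public static (int Capacity, int MinimalReservation)? TryReadSingleTable(string json)
    {
        var table = JsonNode.Parse(json)?["singleTable"];
        var capacity = table?["capacity"];
        var minimal = table?["minimalReservation"];
        if (capacity is null || minimal is null)
            return null;
        return (capacity.GetValue<int>(), minimal.GetValue<int>());
    }

    // Builds the DOM by hand; no DTO and no Reflection-based serializer involved.
    public static string WriteCommunalTable(int capacity)
    {
        var node = new JsonObject
        {
            ["communalTable"] = new JsonObject { ["capacity"] = capacity }
        };
        return node.ToJsonString();
    }
}
```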

Conclusion #

While some sort of Reflection or metadata-driven mechanism is often used to implement serialisation, it often turns out that such convenient language capabilities are programmed on top of an ordinary object model. Even isolated to .NET, I think I'm on my third JSON library, and most (all?) turned out to have an underlying DOM that you can manipulate.

In this article I've set the stage for exploring how serialisation can work, with or (mostly) without Reflection.

If you're interested in the philosophy of science and epistemology, you may have noticed a recurring discussion in academia: A wider society benefits not only from learning what works, but also from learning what doesn't work. It would be useful if researchers published their failures along with their successes, yet few do (for fairly obvious reasons).

Well, I depend neither on research grants nor salary, so I'm free to publish negative results, such as they are.

Not that I want to go so far as to categorize what I present in the present articles as useless, but they're probably best applied in special circumstances. On the other hand, I don't know your context, and perhaps you're doing something I can't even imagine, and what I present here is just what you need.

Synchronizing concurrent teams

Or, rather: Try not to.

A few months ago I visited a customer and as the day was winding down we got to talk more informally. One of the architects mentioned, in an almost off-hand manner, "we've embarked on a SAFe journey..."

"Yes..?" I responded, hoping that my inflection would sound enough like a question that he'd elaborate.

Unfortunately, I'm apparently sometimes too subtle when dealing with people face-to-face, so I never got to hear just how that 'SAFe journey' was going. Instead, the conversation shifted to the adjacent topic of how to coordinate independent teams.

I told them that, in my opinion, the best way to coordinate independent teams is to not coordinate them. I don't remember exactly how I proceeded from there, but I probably said something along the lines that I consider coordination meetings between teams to be an 'architecture smell'. That the need to talk to other teams was a symptom that teams were too tightly coupled.

I don't remember if I said exactly that, but it would have been in character.

The architect responded: "I don't like silos."

How do you respond to that?

Autonomous teams #

I couldn't very well respond that silos are great. First, it doesn't sound very convincing. Second, it'd be an argument suitable only in a kindergarten. Are not! -Are too! -Not! -Too! etc.

After feeling momentarily checked, for once I managed to think on my feet, so I replied, "I don't suggest that your teams should be isolated from each other. I do encourage people to talk to each other, but I don't think that teams should coordinate much. Rather, think of each team as an organism on the savannah. They interact, and what they do impacts others, but in the end they're autonomous life forms. I believe an architect's job is like a ranger's. You can't control the plants or animals, but you can nurture the ecosystem, herding it in a beneficial direction."

That ranger metaphor is an old pet peeve of mine, originating from what I consider one of my most under-appreciated articles: Zookeepers must become Rangers. It's closely related to the more popular metaphor of software architecture as gardening, but I like the wildlife variation because it emphasizes an even more hands-off approach. It removes the illusion that you can control a fundamentally unpredictable process, but replaces it with the hopeful emphasis on stewardship.

How do ecosystems thrive? A software architect (or ranger) should nurture resilience in each subsystem, just like evolution has promoted plants' and animals' ability to survive a variety of unforeseen circumstances: Flood, drought, fire, predators, lack of prey, disease, etc.

You want teams to work independently. This doesn't mean that they work in isolation, but rather that they are free to act according to their abilities and understanding of the situation. An architect can help them understand the wider ecosystem and predict tomorrow's weather, so to speak, but the team should remain autonomous.

Concurrent work #

I'm assuming that an organisation has multiple teams because they're supposed to work concurrently. While team A is off doing one thing, team B is doing something else. You can attempt to herd them in the same general direction, but beware of tight coordination.

What's the problem with coordination? Isn't it a kind of collaboration? Don't we consider that beneficial?

I'm not arguing that teams should be antagonistic. Like all metaphors, we should be careful not to take the savannah metaphor too far. I'm not imagining that one team consists of lions, apex predators, killing and devouring other teams.

Rather, the reason I'm wary of coordination is that it seems synonymous with synchronisation.

In Code That Fits in Your Head I've already discussed how good practices for Continuous Integration are similar to earlier lessons about optimistic concurrency. It recently struck me that we can draw a similar parallel between concurrent team work and parallel computing.

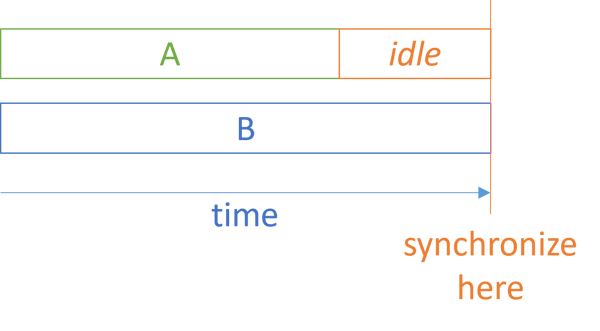

For decades we've known that the less synchronization, the faster parallel code is. Synchronization is costly.

In team work, coordination is like thread synchronization. Instead of doing work, you stop in order to coordinate. This implies that one thread or team has to wait for the other to catch up.

Unless work is perfectly evenly divided, team A may finish before team B. In order to coordinate, team A must sit idle for a while, waiting for B to catch up. (In development organizations, idleness is rarely allowed, so in practice, team A embarks on some other work, with consequences that I've already outlined.)

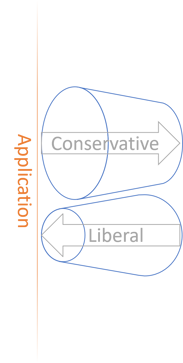

If you have more than two teams, this phenomenon only becomes worse. You'll have more idle time. This reminds me of Amdahl's law, which, briefly put, expresses that there's a limit to how much of a speed improvement you can get from concurrent work. The limit is related to the percentage of the work that cannot be parallelized. The greater the need to synchronize work, the lower the ceiling. Conversely, the more you can let concurrent processes run without coordination, the more you gain from parallelization.
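As a rough illustration, Amdahl's law is usually stated as speedup = 1 / ((1 - p) + p / n), where p is the fraction of the work that can run in parallel and n is the number of workers. The following sketch simply evaluates that formula; the `Amdahl` class name is hypothetical, and the 90% figure is an arbitrary example value, not a measurement.

```csharp
using System;

// With 10% of the work being serial, the speedup can never exceed 1 / 0.1 = 10,
// no matter how many workers are added.
foreach (var workers in new[] { 2, 4, 8, 1000 })
    Console.WriteLine($"{workers} workers: {Amdahl.Speedup(0.9, workers):F2}x");

// Hypothetical helper, for illustration only.
public static class Amdahl
{
    // parallelFraction: the share of the work (0 to 1) that can be parallelized.
    public static double Speedup(double parallelFraction, int workers)
    {
        var serialFraction = 1 - parallelFraction;
        return 1 / (serialFraction + parallelFraction / workers);
    }
}
```

Going from two to eight workers helps noticeably, but going from eight to a thousand only moves the result from somewhat below five to just under ten. The serial (synchronized) part dominates.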

It seems to me that there's a direct counterpart in team organization. The more teams need to coordinate, the less is gained from having multiple teams.

But really, Fred Brooks could have told you so in 1975.

Versioning #

A small development team may organize work informally. Work may be divided along 'natural' lines, each developer taking on tasks best suited to his or her abilities. If working in a code base with shared ownership, one developer doesn't have to wait on the work done by another developer. Instead, a programmer may complete the required work individually, or working together with a colleague. Coordination happens, but is both informal and frequent.

As development organizations grow, teams are formed. Separate teams are supposed to work independently, but may in practice often depend on each other. Team A may need team B to make a change before they can proceed with their own work. The (felt) need to coordinate team activities arises.

In my experience, this happens for a number of reasons. One is that teams may be divided along wrong lines; this is a socio-technical problem. Another, more technical, reason is that zookeepers rarely think explicitly about versioning or avoiding breaking changes. Imagine that team A needs team B to develop a new capability. This new capability implies a breaking change, so the teams will now need to coordinate.

Instead, team B should develop the new feature in such a way that it doesn't break existing clients. If all else fails, the new feature must exist side-by-side with the old way of doing things. With Continuous Deployment the new feature becomes available when it's ready. Team A still has to wait for the feature to become available, but no synchronization is required.
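A minimal sketch of the side-by-side idea might look as follows. Everything here is hypothetical (the `TableCatalog` class, the `TableInfo` record, and both method names); it only illustrates that a new capability can be added next to an existing member instead of replacing it.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

var catalog = new TableCatalog();

// An existing client keeps calling the original member, unchanged.
Console.WriteLine(string.Join(", ", catalog.GetCapacities())); // 4, 10

// A newer client opts in to the richer member whenever it's ready.
Console.WriteLine(string.Join(", ", catalog.GetTables().Select(t => t.Kind))); // single, communal

// Hypothetical types, for illustration only.
public sealed record TableInfo(string Kind, int Capacity);

public sealed class TableCatalog
{
    private readonly IReadOnlyList<TableInfo> tables = new[]
    {
        new TableInfo("single", 4),
        new TableInfo("communal", 10)
    };

    // Original member: same signature and behaviour as before the change.
    public IReadOnlyList<int> GetCapacities()
    {
        return tables.Select(t => t.Capacity).ToList();
    }

    // New capability, added alongside rather than as a breaking replacement.
    public IReadOnlyList<TableInfo> GetTables()
    {
        return tables;
    }
}
```

Since `GetCapacities` never changed, the team that owns the old client has nothing to synchronize on; it can adopt `GetTables` on its own schedule.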

Conclusion #

Yet another lesson about thread-safety and concurrent transactions seems to apply to people and processes. Parallel processes should be autonomous, with as little synchronization as possible. The more you coordinate development teams, the more you limit the speed of overall work. This seems to suggest that something akin to Amdahl's law also applies to development organizations.

Instead of coordinating teams, encourage them to exist as autonomous entities, but set things up so that not breaking compatibility is a major goal for each team.

Trimming a Fake Object

A refactoring example.

When I introduce the Fake Object testing pattern to people, a common concern is the maintenance burden of it. The point of the pattern is that you write some 'working' code only for test purposes. At a glance, it seems as though it'd be more work than using a dynamic mock library like Moq or Mockito.

This article isn't really about that, but the benefit of a Fake Object is that it has a lower maintenance footprint because it gives you a single class to maintain when you change interfaces or base classes. Dynamic mock objects, on the contrary, lead to Shotgun Surgery because every time you change an interface or base class, you have to revisit multiple tests.

In a recent article I presented a Fake Object that may have looked bigger than most people would find comfortable for test code. In this article I discuss how to trim it via a set of refactorings.

Original Fake read registry #

The article presented this FakeReadRegistry, repeated here for your convenience:

    internal sealed class FakeReadRegistry : IReadRegistry
    {
        private readonly IReadOnlyCollection<Room> rooms;
        private readonly IDictionary<DateOnly, IReadOnlyCollection<Room>> views;

        public FakeReadRegistry(params Room[] rooms)
        {
            this.rooms = rooms;
            views = new Dictionary<DateOnly, IReadOnlyCollection<Room>>();
        }

        public IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure)
        {
            return EnumerateDates(arrival, departure)
                .Select(GetView)
                .Aggregate(rooms.AsEnumerable(), Enumerable.Intersect)
                .ToList();
        }

        public void RoomBooked(Booking booking)
        {
            foreach (var d in EnumerateDates(booking.Arrival, booking.Departure))
            {
                var view = GetView(d);
                var newView = QueryService.Reserve(booking, view);
                views[d] = newView;
            }
        }

        private static IEnumerable<DateOnly> EnumerateDates(DateOnly arrival, DateOnly departure)
        {
            var d = arrival;
            while (d < departure)
            {
                yield return d;
                d = d.AddDays(1);
            }
        }

        private IReadOnlyCollection<Room> GetView(DateOnly date)
        {
            if (views.TryGetValue(date, out var view))
                return view;
            else
                return rooms;
        }
    }

This is 47 lines of code, spread over five members (including the constructor). Three of the methods have a cyclomatic complexity (CC) of 2, which is the maximum for this class. The remaining two have a CC of 1.

While you can play some CC golf with those CC-2 methods, that tends to pull the code in a direction of being less idiomatic. For that reason, I chose to present the code as above. Perhaps more importantly, it doesn't save that many lines of code.

Had this been a piece of production code, no-one would bat an eye at size or complexity, but this is test code. To add insult to injury, those 47 lines of code implement this two-method interface:

    public interface IReadRegistry
    {
        IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure);
        void RoomBooked(Booking booking);
    }

Can we improve the situation?

Root cause analysis #

Before you rush to 'improve' code, it pays to understand why it looks the way it looks.

Code is a wonderfully malleable medium, so you should regard nothing as set in stone. On the other hand, there's often a reason it looks like it does. It may be that the previous programmers were incompetent ogres for hire, but often there's a better explanation.

I've outlined my thinking process in the previous article, and I'm not going to repeat it all here. To summarise, though, I've applied the Dependency Inversion Principle.

"clients [...] own the abstract interfaces"

In other words, I let the needs of the clients guide the design of the IReadRegistry interface, and then the implementation (FakeReadRegistry) had to conform.

But that's not the whole truth.

I was doing a programming exercise - the CQRS booking kata - and I was following the instructions given in the description. They quite explicitly outline the two dependencies and their methods.

When trying a new exercise, it's a good idea to follow instructions closely, so that's what I did. Once you get a sense of a kata, though, there's no law saying that you have to stick to the original rules. After all, the purpose of an exercise is to train, and in programming, trying new things is training.

Test code that wants to be production code #

A major benefit of test-driven development (TDD) is that it provides feedback. It pays to be tuned in to that channel. The above FakeReadRegistry seems to be trying to tell us something.

Consider the GetFreeRooms method. I'll repeat the single-expression body here for your convenience:

    return EnumerateDates(arrival, departure)
        .Select(GetView)
        .Aggregate(rooms.AsEnumerable(), Enumerable.Intersect)
        .ToList();

Why is that the implementation? Why does it need to first enumerate the dates in the requested interval? Why does it need to call GetView for each date?

Why don't I just do the following and be done with it?

    internal sealed class FakeStorage : Collection<Booking>, IWriteRegistry, IReadRegistry
    {
        private readonly IReadOnlyCollection<Room> rooms;

        public FakeStorage(params Room[] rooms)
        {
            this.rooms = rooms;
        }

        public IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure)
        {
            var booked = this.Where(b => b.Overlaps(arrival, departure)).ToList();
            return rooms
                .Where(r => !booked.Any(b => b.RoomName == r.Name))
                .ToList();
        }

        public void Save(Booking booking)
        {
            Add(booking);
        }
    }

To be honest, that's what I did first.

While there are two interfaces, there's only one Fake Object implementing both. That's often an easy way to address the Interface Segregation Principle and still keep the Fake Object simple.

This is much simpler than FakeReadRegistry, so why didn't I just keep that?

I didn't feel it was an honest attempt at CQRS. In CQRS you typically write the data changes to one system, and then you have another logical process that propagates the information about the data modification to the read subsystem. There's none of that here. Instead of being based on one or more 'materialised views', the query is just that: A query.

That was what I attempted to address with FakeReadRegistry, and I think it's a much more faithful CQRS implementation. It's also more complex, as CQRS tends to be.

In both cases, however, it seems that there's some production logic trapped in the test code. Shouldn't EnumerateDates be production code? And how about the general 'algorithm' of RoomBooked:

- Enumerate the relevant dates

- Get the 'materialised' view for each date

- Calculate the new view for that date

- Update the collection of views for that date

That seems like just enough code to warrant moving it to the production code.

A word of caution before we proceed. When deciding to pull some of that test code into the production code, I'm making a decision about architecture.

Until now, I'd been following the Dependency Inversion Principle closely. The interfaces exist because the client code needs them. Those interfaces could be implemented in various ways: You could use a relational database, a document database, files, blobs, etc.

Once I decide to pull the above algorithm into the production code, I'm choosing a particular persistent data structure. This now locks the data storage system into a design where there's a persistent view per date, and another database of bookings.

Now that I'd learned some more about the exercise, I felt confident making that decision.

Template Method #

The first move I made was to create a superclass so that I could employ the Template Method pattern:

    public abstract class ReadRegistry : IReadRegistry
    {
        public IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure)
        {
            return EnumerateDates(arrival, departure)
                .Select(GetView)
                .Aggregate(Rooms.AsEnumerable(), Enumerable.Intersect)
                .ToList();
        }

        public void RoomBooked(Booking booking)
        {
            foreach (var d in EnumerateDates(booking.Arrival, booking.Departure))
            {
                var view = GetView(d);
                var newView = QueryService.Reserve(booking, view);
                UpdateView(d, newView);
            }
        }

        protected abstract void UpdateView(DateOnly date, IReadOnlyCollection<Room> view);

        protected abstract IReadOnlyCollection<Room> Rooms { get; }

        protected abstract bool TryGetView(DateOnly date, out IReadOnlyCollection<Room> view);

        private static IEnumerable<DateOnly> EnumerateDates(DateOnly arrival, DateOnly departure)
        {
            var d = arrival;
            while (d < departure)
            {
                yield return d;
                d = d.AddDays(1);
            }
        }

        private IReadOnlyCollection<Room> GetView(DateOnly date)
        {
            if (TryGetView(date, out var view))
                return view;
            else
                return Rooms;
        }
    }

This looks similar to FakeReadRegistry, so how is this an improvement?

The new ReadRegistry class is production code. It can, and should, be tested. (Due to the history of how we got here, it's already covered by tests, so I'm not going to repeat that effort here.)

True to the Template Method pattern, three abstract members await a child class' implementation. These are the UpdateView and TryGetView methods, as well as the Rooms read-only property (glorified getter method).

Imagine that in the production code, these are implemented based on file/document/blob storage - one per date. TryGetView would attempt to read the document from storage, UpdateView would create or modify the document, while Rooms returns a default set of rooms.

A Test Double, however, can still use an in-memory dictionary:

    internal sealed class FakeReadRegistry : ReadRegistry
    {
        private readonly IReadOnlyCollection<Room> rooms;
        private readonly IDictionary<DateOnly, IReadOnlyCollection<Room>> views;

        protected override IReadOnlyCollection<Room> Rooms => rooms;

        public FakeReadRegistry(params Room[] rooms)
        {
            this.rooms = rooms;
            views = new Dictionary<DateOnly, IReadOnlyCollection<Room>>();
        }

        protected override void UpdateView(DateOnly date, IReadOnlyCollection<Room> view)
        {
            views[date] = view;
        }

        protected override bool TryGetView(DateOnly date, out IReadOnlyCollection<Room> view)
        {
            return views.TryGetValue(date, out view);
        }
    }

Each override is a one-liner with cyclomatic complexity 1.

First round of clean-up #

An abstract class is already a polymorphic object, so we no longer need the IReadRegistry interface. Delete that, and update all code accordingly. Particularly, the QueryService now depends on ReadRegistry rather than IReadRegistry:

    private readonly ReadRegistry readRegistry;

    public QueryService(ReadRegistry readRegistry)
    {
        this.readRegistry = readRegistry;
    }

Now move the Reserve function from QueryService to ReadRegistry. Once this is done, the QueryService looks like this:

    public sealed class QueryService
    {
        private readonly ReadRegistry readRegistry;

        public QueryService(ReadRegistry readRegistry)
        {
            this.readRegistry = readRegistry;
        }

        public IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure)
        {
            return readRegistry.GetFreeRooms(arrival, departure);
        }
    }

That class is only passing method calls along, so it clearly no longer serves any purpose. Delete it.

This is not uncommon in CQRS. One might even argue that if CQRS is done right, there's almost no code on the query side, since all the data view updates happen as events propagate.

From abstract class to Dependency Injection #

While the current state of the code is based on an abstract base class, the overall architecture of the system doesn't hinge on inheritance. From Abstract class isomorphism we know that it's possible to refactor an abstract class to Constructor Injection. Let's do that.

First add an IViewStorage interface that mirrors the three abstract members defined by ReadRegistry:

    public interface IViewStorage
    {
        IReadOnlyCollection<Room> Rooms { get; }

        void UpdateView(DateOnly date, IReadOnlyCollection<Room> view);

        bool TryGetView(DateOnly date, out IReadOnlyCollection<Room> view);
    }

Then implement it with a Fake Object:

    public sealed class FakeViewStorage : IViewStorage
    {
        private readonly IDictionary<DateOnly, IReadOnlyCollection<Room>> views;

        public IReadOnlyCollection<Room> Rooms { get; }

        public FakeViewStorage(params Room[] rooms)
        {
            Rooms = rooms;
            views = new Dictionary<DateOnly, IReadOnlyCollection<Room>>();
        }

        public void UpdateView(DateOnly date, IReadOnlyCollection<Room> view)
        {
            views[date] = view;
        }

        public bool TryGetView(DateOnly date, out IReadOnlyCollection<Room> view)
        {
            return views.TryGetValue(date, out view);
        }
    }

Notice the similarity to FakeReadRegistry, which we'll get rid of shortly.

Now inject IViewStorage into ReadRegistry, and make ReadRegistry a regular (sealed) class:

    public sealed class ReadRegistry
    {
        private readonly IViewStorage viewStorage;

        public ReadRegistry(IViewStorage viewStorage)
        {
            this.viewStorage = viewStorage;
        }

        public IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure)
        {
            return EnumerateDates(arrival, departure)
                .Select(GetView)
                .Aggregate(viewStorage.Rooms.AsEnumerable(), Enumerable.Intersect)
                .ToList();
        }

        public void RoomBooked(Booking booking)
        {
            foreach (var d in EnumerateDates(booking.Arrival, booking.Departure))
            {
                var view = GetView(d);
                var newView = Reserve(booking, view);
                viewStorage.UpdateView(d, newView);
            }
        }

        public static IReadOnlyCollection<Room> Reserve(
            Booking booking,
            IReadOnlyCollection<Room> existingView)
        {
            return existingView
                .Where(r => r.Name != booking.RoomName)
                .ToList();
        }

        private static IEnumerable<DateOnly> EnumerateDates(DateOnly arrival, DateOnly departure)
        {
            var d = arrival;
            while (d < departure)
            {
                yield return d;
                d = d.AddDays(1);
            }
        }

        private IReadOnlyCollection<Room> GetView(DateOnly date)
        {
            if (viewStorage.TryGetView(date, out var view))
                return view;
            else
                return viewStorage.Rooms;
        }
    }

You can now delete the FakeReadRegistry Test Double, since FakeViewStorage has now taken its place.

Finally, we may consider if we can make FakeViewStorage even slimmer. While I usually favour composition over inheritance, I've found that deriving Fake Objects from collection base classes is often an efficient way to get a lot of mileage out of a few lines of code. FakeReadRegistry, however, had to inherit from ReadRegistry, so it couldn't derive from any other class.

FakeViewStorage isn't constrained in that way, so it's free to inherit from Dictionary<DateOnly, IReadOnlyCollection<Room>>:

    public sealed class FakeViewStorage : Dictionary<DateOnly, IReadOnlyCollection<Room>>, IViewStorage
    {
        public IReadOnlyCollection<Room> Rooms { get; }

        public FakeViewStorage(params Room[] rooms)
        {
            Rooms = rooms;
        }

        public void UpdateView(DateOnly date, IReadOnlyCollection<Room> view)
        {
            this[date] = view;
        }

        public bool TryGetView(DateOnly date, out IReadOnlyCollection<Room> view)
        {
            return TryGetValue(date, out view);
        }
    }

This last move isn't strictly necessary, but I found it worth at least mentioning.

I hope you'll agree that this is a Fake Object that looks maintainable.
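To show how the slimmed-down Fake Object might be used, here's a self-contained sketch that wires `FakeViewStorage` into `ReadRegistry`. The `Room` and `Booking` records are assumed minimal shapes, since the article doesn't show those types, and `ReadRegistry` is condensed compared to the listing above (a lambda replaces the `Enumerable.Intersect` method group, and `Reserve` is inlined), so treat it as an approximation and not as the actual code base.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

var storage = new FakeViewStorage(new Room("A"), new Room("B"));
var sut = new ReadRegistry(storage);

// Book room A for the nights of 10 and 11 January.
sut.RoomBooked(new Booking("A", new DateOnly(2024, 1, 10), new DateOnly(2024, 1, 12)));

// A stay that overlaps the booking only sees room B.
var free = sut.GetFreeRooms(new DateOnly(2024, 1, 11), new DateOnly(2024, 1, 13));
Console.WriteLine(string.Join(", ", free.Select(r => r.Name))); // B

// The Fake is also a dictionary, so a test can inspect the stored views.
Console.WriteLine(storage.Count); // 2

// Assumed minimal shapes; the real Room and Booking types may differ.
public sealed record Room(string Name);
public sealed record Booking(string RoomName, DateOnly Arrival, DateOnly Departure);

public interface IViewStorage
{
    IReadOnlyCollection<Room> Rooms { get; }
    void UpdateView(DateOnly date, IReadOnlyCollection<Room> view);
    bool TryGetView(DateOnly date, out IReadOnlyCollection<Room> view);
}

public sealed class FakeViewStorage : Dictionary<DateOnly, IReadOnlyCollection<Room>>, IViewStorage
{
    public IReadOnlyCollection<Room> Rooms { get; }
    public FakeViewStorage(params Room[] rooms) { Rooms = rooms; }
    public void UpdateView(DateOnly date, IReadOnlyCollection<Room> view) { this[date] = view; }
    public bool TryGetView(DateOnly date, out IReadOnlyCollection<Room> view)
    {
        return TryGetValue(date, out view);
    }
}

// Condensed version of the ReadRegistry class shown above.
public sealed class ReadRegistry
{
    private readonly IViewStorage viewStorage;
    public ReadRegistry(IViewStorage viewStorage) { this.viewStorage = viewStorage; }

    public IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure)
    {
        return EnumerateDates(arrival, departure)
            .Select(GetView)
            .Aggregate(viewStorage.Rooms.AsEnumerable(), (acc, view) => acc.Intersect(view))
            .ToList();
    }

    public void RoomBooked(Booking booking)
    {
        foreach (var d in EnumerateDates(booking.Arrival, booking.Departure))
        {
            var newView = GetView(d).Where(r => r.Name != booking.RoomName).ToList();
            viewStorage.UpdateView(d, newView);
        }
    }

    private static IEnumerable<DateOnly> EnumerateDates(DateOnly arrival, DateOnly departure)
    {
        for (var d = arrival; d < departure; d = d.AddDays(1))
            yield return d;
    }

    private IReadOnlyCollection<Room> GetView(DateOnly date)
    {
        return viewStorage.TryGetView(date, out var view) ? view : viewStorage.Rooms;
    }
}
```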

Conclusion #

Test-driven development is a feedback mechanism. If something is difficult to test, it tells you something about your System Under Test (SUT). If your test code looks bloated, that tells you something too. Perhaps part of the test code really belongs in the production code.

In this article, we started with a Fake Object that looked like it contained too much production code. Via a series of refactorings I moved the relevant parts to the production code, leaving me with a more idiomatic and conforming implementation.

CC golf

Noun. Game in which the goal is to minimise cyclomatic complexity.

Cyclomatic complexity (CC) is a rare code metric in that it can actually be useful. In general, it's a good idea to minimise it as much as possible.

In short, CC measures looping and branching in code, and this is often where bugs lurk. While it's only a rough measure, I nonetheless find the metric useful as a general guideline. Lower is better.

Golf #

I'd like to propose the term "CC golf" for the activity of minimising cyclomatic complexity in an area of code. The name derives from code golf, in which you have to implement some behaviour (typically an algorithm) in the fewest possible characters.

Such games can be useful because they enable you to explore different ways to express yourself in code. It's always a good kata constraint. The first time I tried that was in 2011, and when looking back on that code today, I'm not that impressed. Still, it taught me a valuable lesson about the Visitor pattern that I never forgot, and that later enabled me to connect some important dots.

But don't limit CC golf to katas and the like. Try it in your production code too. Most production code I've seen could benefit from some CC golf, and if you use Git tactically you can always stash the changes if they're no good.

Idiomatic tension #

Alternative expressions with lower cyclomatic complexity may not always be idiomatic. Let's look at a few examples. In my previous article, I listed some test code where some helper methods had a CC of 2. Here's one of them:

    private static IEnumerable<DateOnly> EnumerateDates(DateOnly arrival, DateOnly departure)
    {
        var d = arrival;
        while (d < departure)
        {
            yield return d;
            d = d.AddDays(1);
        }
    }

Can you express this functionality with a CC of 1? In Haskell it's essentially built in as (. pred) . enumFromTo, and in F# it's also idiomatic, although more verbose:

    let enumerateDates (arrival : DateOnly) departure =
        Seq.initInfinite id |> Seq.map arrival.AddDays |> Seq.takeWhile (fun d -> d < departure)

Can we do the same in C#?

If there's a general API in .NET that corresponds to the F#-specific Seq.initInfinite I haven't found it, but we can do something like this:

    private static IEnumerable<DateOnly> EnumerateDates(DateOnly arrival, DateOnly departure)
    {
        const int infinity = int.MaxValue; // As close as int gets, at least
        return Enumerable.Range(0, infinity).Select(arrival.AddDays).TakeWhile(d => d < departure);
    }

In C# infinite sequences are generally unusual, but if you were to create one, a combination of while true and yield return would be the most idiomatic. The problem with that, though, is that such a construct has a cyclomatic complexity of 2.

The above suggestion gets around that problem by pretending that int.MaxValue is infinity. Practically, at least, a 32-bit signed integer can't get larger than that anyway. I haven't tried to let F#'s Seq.initInfinite run out, but by its type it seems int-bound as well, so in practice it, too, probably isn't infinite. (Or, if it is, the index that it supplies will have to overflow and wrap around to a negative value.)

Is this alternative C# code better than the first? You be the judge of that. It has a lower cyclomatic complexity, but is less idiomatic. This isn't uncommon. In languages with a procedural background, there's often tension between lower cyclomatic complexity and how 'things are usually done'.

Checking for null #

Is there a way to reduce the cyclomatic complexity of the GetView helper method?

    private IReadOnlyCollection<Room> GetView(DateOnly date)
    {
        if (views.TryGetValue(date, out var view))
            return view;
        else
            return rooms;
    }

This is an example of the built-in API being in the way. In F#, you naturally write the same behaviour with a CC of 1:

    let getView (date : DateOnly) =
        views |> Map.tryFind date |> Option.defaultValue rooms |> Set.ofSeq

The TryGet idiom seems to stand in the way of further CC reduction. It is possible to reach a CC of 1, but it's neither pretty nor idiomatic:

    private IReadOnlyCollection<Room> GetView(DateOnly date)
    {
        views.TryGetValue(date, out var view);
        return new[] { view, rooms }.Where(x => x is { }).First()!;
    }

Perhaps there's a better way, but if so, it escapes me. Here, I use my knowledge that view is going to remain null if TryGetValue doesn't find the dictionary entry. Thus, I can put it in front of an array where I put the fallback value rooms as the second element. Then I filter the array by only keeping the elements that are not null (that's what the x is { } pun means; I usually read it as x is something). Finally, I return the first of these elements.

I know that rooms is never null, but apparently the compiler can't tell. Thus, I have to suppress its anxiety with the ! operator, telling it that this will result in a non-null value.

I would never use such a code construct in a professional C# code base.

Side effects #

The third helper method suggests another kind of problem that you may run into:

    public void RoomBooked(Booking booking)
    {
        foreach (var d in EnumerateDates(booking.Arrival, booking.Departure))
        {
            var view = GetView(d);
            var newView = QueryService.Reserve(booking, view);
            views[d] = newView;
        }
    }

Here the higher-than-one CC stems from the need to loop through dates in order to produce a side effect for each. Even in F# I do that:

    member this.RoomBooked booking =
        for d in enumerateDates booking.Arrival booking.Departure do
            let newView = getView d |> QueryService.reserve booking |> Seq.toList
            views <- Map.add d newView views

This also has a cyclomatic complexity of 2. You could do something like this:

    member this.RoomBooked booking =
        enumerateDates booking.Arrival booking.Departure
        |> Seq.iter (fun d ->
            let newView = getView d |> QueryService.reserve booking |> Seq.toList in
            views <- Map.add d newView views)

but while that nominally has a CC of 1, it has the same level of indentation as the previous attempt. This seems to indicate, at least, that it doesn't really address any complexity issue.

You could also try something like this:

    member this.RoomBooked booking =
        enumerateDates booking.Arrival booking.Departure
        |> Seq.map (fun d -> d, getView d |> QueryService.reserve booking |> Seq.toList)
        |> Seq.iter (fun (d, newView) -> views <- Map.add d newView views)

which, again, may be nominally better, but forced me to wrap the map output in a tuple so that both d and newView are available to Seq.iter. I tend to regard that as a code smell.

This latter version is, however, fairly easily translated to C#:

    public void RoomBooked(Booking booking)
    {
        EnumerateDates(booking.Arrival, booking.Departure)
            .Select(d => (d, view: QueryService.Reserve(booking, GetView(d))))
            .ToList()
            .ForEach(x => views[x.d] = x.view);
    }

The standard .NET API doesn't have something equivalent to Seq.iter (although you could trivially write such an action), but you can convert any sequence to a List<T> and use its ForEach method.
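Such an action could look like the following sketch. The name Iter and the EnumerableExtensions class are placeholders of my own choosing, not part of the .NET base class library. Notice that the foreach loop inside the helper still has a cyclomatic complexity of 2; the helper only moves the loop out of the calling code.

```csharp
using System;
using System.Collections.Generic;

// Usage: run a side effect for each element, without ToList().ForEach.
var log = new List<string>();
new[] { "foo", "bar" }.Iter(log.Add);
Console.WriteLine(string.Join(", ", log)); // foo, bar

public static class EnumerableExtensions
{
    // Hypothetical counterpart to F#'s Seq.iter: invokes the action once
    // per element, in order, purely for its side effect.
    public static void Iter<T>(this IEnumerable<T> source, Action<T> action)
    {
        foreach (var item in source)
            action(item);
    }
}
```

Unlike the ToList().ForEach combination, this doesn't copy the sequence into an intermediate list before iterating.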

In practice, though, I tend to agree with Eric Lippert. There's already an idiomatic way to iterate over each item in a collection, and being explicit is generally helpful to the reader.

Church encoding #

There's a general solution to most of CC golf: Whenever you need to make a decision and branch between two or more pathways, you can model that with a sum type. In C# you can mechanically model that with Church encoding or the Visitor pattern. If you haven't tried that, I recommend it for the exercise, but once you've done it enough times, you realise that it requires little creativity.

As an example, in 2021 I revisited the Tennis kata with the explicit purpose of translating my usual F# approach to the exercise to C# using Church encoding and the Visitor pattern.

Once you've got a sense for how Church encoding enables you to simulate pattern matching in C#, there are few surprises. You may also rightfully question what is gained from such an exercise:

    public IScore VisitPoints(IPoint playerOnePoint, IPoint playerTwoPoint)
    {
        return playerWhoWinsBall.Match(
            playerOne: playerOnePoint.Match<IScore>(
                love: new Points(new Fifteen(), playerTwoPoint),
                fifteen: new Points(new Thirty(), playerTwoPoint),
                thirty: new Forty(playerWhoWinsBall, playerTwoPoint)),
            playerTwo: playerTwoPoint.Match<IScore>(
                love: new Points(playerOnePoint, new Fifteen()),
                fifteen: new Points(playerOnePoint, new Thirty()),
                thirty: new Forty(playerWhoWinsBall, playerOnePoint)));
    }

Believe it or not, that method has a CC of 1 despite the double indentation strongly suggesting that there's some branching going on. To a degree, this also highlights the limitations of the cyclomatic complexity metric. Conversely, stupidly simple code may have a high CC rating.
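If you haven't seen the technique before, it may help to see how a Match method for a two-case sum type can be defined without any branching keywords. The following is a minimal sketch rather than the actual code from the Tennis kata; the IPlayer, PlayerOne, and PlayerTwo names are assumptions made for illustration. Each case is a class, and each class 'chooses' by returning the argument that corresponds to itself.

```csharp
using System;

// Usage: the decision is made by polymorphism, not by if or switch.
IPlayer winner = new PlayerTwo();
string description = winner.Match(
    playerOne: "Player one wins the ball",
    playerTwo: "Player two wins the ball");
Console.WriteLine(description); // Player two wins the ball

// Hypothetical Church-encoded sum type with two cases.
public interface IPlayer
{
    T Match<T>(T playerOne, T playerTwo);
}

public sealed class PlayerOne : IPlayer
{
    // The first case always returns the first argument.
    public T Match<T>(T playerOne, T playerTwo) => playerOne;
}

public sealed class PlayerTwo : IPlayer
{
    // The second case always returns the second argument.
    public T Match<T>(T playerOne, T playerTwo) => playerTwo;
}
```

Every method in this sketch has a CC of 1, which is why the metric can't 'see' the decision.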

Most of the examples in this article border on the pathological, and I don't recommend that you write code like that. I recommend that you do the exercise. In less pathological scenarios, there are real benefits to be reaped.

Idioms #

In 2015 I published an article titled Idiomatic or idiosyncratic? In it, I tried to explore the idea that the notion of idiomatic code can sometimes hold you back. I revisited that idea in 2021 in an article called Against consistency. The point in both cases is that just because something looks unfamiliar, it doesn't mean that it's bad.

Coding idioms somehow arose. If you believe that there's a portion of natural selection involved in the development of coding idioms, you may assume by default that idioms represent good ways of doing things.

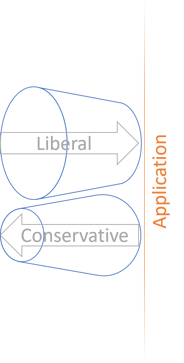

To a degree I believe this to be true. Many idioms represent the best way of doing things at the time they settled into the shape in which we now know them. Languages and contexts change, however. Just look at the many approaches to data lookups there have been over the years. For many years now, C# has settled into the so-called TryParse idiom to solve that problem. In my opinion this represents a local maximum.

Languages that provide Maybe (AKA option) and Either (AKA Result) types offer a superior alternative. These types naturally compose into CC 1 pipelines, whereas TryParse requires you to stop what you're doing in order to check a return value. How very C-like.
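To make the contrast concrete in C#, here's a sketch of a Church-encoded Maybe. C# has no such type built in, so everything here (IMaybe, Nothing, Just, the Select extension, and the Parse.Int adapter) is a hypothetical illustration rather than an existing API. The TryParse check is confined to a single adapter method, which has a CC of 2; the pipeline that consumes the result has a CC of 1.

```csharp
using System;

// Usage: a CC 1 pipeline that never stops to check a return value.
string Describe(string input) =>
    Parse.Int(input)
        .Select(i => i * 2)
        .Match(nothing: "not a number", just: i => $"twice is {i}");

Console.WriteLine(Describe("21"));  // twice is 42
Console.WriteLine(Describe("foo")); // not a number

// Hypothetical Church-encoded Maybe.
public interface IMaybe<T>
{
    TResult Match<TResult>(TResult nothing, Func<T, TResult> just);
}

public sealed class Nothing<T> : IMaybe<T>
{
    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just) => nothing;
}

public sealed class Just<T> : IMaybe<T>
{
    private readonly T value;

    public Just(T value) { this.value = value; }

    public TResult Match<TResult>(TResult nothing, Func<T, TResult> just) => just(value);
}

public static class Maybe
{
    // Maps the contained value, if any; an empty Maybe stays empty.
    public static IMaybe<TResult> Select<T, TResult>(
        this IMaybe<T> source, Func<T, TResult> selector) =>
        source.Match<IMaybe<TResult>>(
            nothing: new Nothing<TResult>(),
            just: x => new Just<TResult>(selector(x)));
}

public static class Parse
{
    // The one place where the TryParse return value is checked (CC 2).
    public static IMaybe<int> Int(string candidate)
    {
        if (int.TryParse(candidate, out var i))
            return new Just<int>(i);
        return new Nothing<int>();
    }
}
```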

All that said, I still think you should write idiomatic code by default, but don't be a slave to what's considered idiomatic, just as you shouldn't be a slave to consistency. If there's a better way of doing things, choose the better way.

Conclusion #

While cyclomatic complexity is a rough measure, it's one of the few useful programming metrics I know of. It should be as low as possible.

Most professional code I encounter implements decisions almost exclusively with language primitives: if, for, switch, while, etc. Once, an organisation hired me to give a one-day anti-if workshop. There are other ways to make decisions in code. Most of those alternatives reduce cyclomatic complexity.

That's not really a goal by itself, but reducing cyclomatic complexity tends to produce the beneficial side effect of structuring the code in a more sustainable way. It becomes easier to understand and change.

As the cliché goes: Choose the right tool for the job. You can't, however, do that if you have nothing to choose from. If you only know of one way to do a thing, you have no choice.

Play a little CC golf with your code from time to time. It may improve the code, or it may not. If it doesn't, just stash those changes. Either way, you've probably learned something.

Fakes are Test Doubles with contracts

Contracts of Fake Objects can be described by properties.

The first time I tried my hand with the CQRS Booking kata, I abandoned it after 45 minutes because I found that I had little to learn from it. After all, I've already done umpteen variations of (restaurant) booking code examples, in several programming languages. The code example that accompanies my book Code That Fits in Your Head is only the largest and most complete of those.

I also wrote an MSDN Magazine article in 2011 about CQRS, so I think I have that angle covered as well.

Still, while at first glance the kata seemed to have little to offer me, I've found myself coming back to it a few times. It does enable me to focus on something other than the 'production code'. In fact, it turns out that even if (or perhaps particularly when) you use test-driven development (TDD), there's precious little production code. Let's get that out of the way first.

Production code #

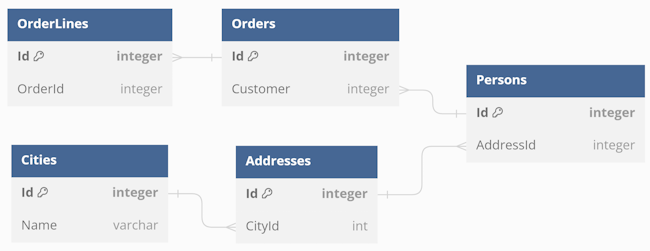

The few times I've now done the kata, there's been almost no 'production code'. The implied CommandService has two lines of effective code:

    public sealed class CommandService
    {
        private readonly IWriteRegistry writeRegistry;
        private readonly IReadRegistry readRegistry;

        public CommandService(IWriteRegistry writeRegistry, IReadRegistry readRegistry)
        {
            this.writeRegistry = writeRegistry;
            this.readRegistry = readRegistry;
        }

        public void BookARoom(Booking booking)
        {
            writeRegistry.Save(booking);
            readRegistry.RoomBooked(booking);
        }
    }

The QueryService class isn't much more exciting:

    public sealed class QueryService
    {
        private readonly IReadRegistry readRegistry;

        public QueryService(IReadRegistry readRegistry)
        {
            this.readRegistry = readRegistry;
        }

        public static IReadOnlyCollection<Room> Reserve(
            Booking booking,
            IReadOnlyCollection<Room> existingView)
        {
            return existingView.Where(r => r.Name != booking.RoomName).ToList();
        }

        public IReadOnlyCollection<Room> GetFreeRooms(DateOnly arrival, DateOnly departure)
        {
            return readRegistry.GetFreeRooms(arrival, departure);
        }
    }

The kata only suggests the GetFreeRooms method, which is only a single line. The only reason the Reserve function also exists is to pull a bit of testable logic back from the below Fake object. I'll return to that shortly.
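To illustrate what that bit of testable logic does, here's a small sketch that exercises Reserve in isolation. The Room and Booking records are assumed shapes; only the Name, RoomName, Arrival, and Departure members can be inferred from the code shown here, and the real types may well have more. For brevity the sketch also declares QueryService as a static class holding only Reserve.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Three rooms are free; a booking for "Red" should leave the other two.
IReadOnlyCollection<Room> view =
    new[] { new Room("Blue"), new Room("Red"), new Room("Green") };
var booking = new Booking(
    "Red", new DateOnly(2023, 11, 13), new DateOnly(2023, 11, 15));

var actual = QueryService.Reserve(booking, view);

Console.WriteLine(string.Join(", ", actual.Select(r => r.Name))); // Blue, Green

// Assumed shapes, for illustration only.
public sealed record Room(string Name);
public sealed record Booking(string RoomName, DateOnly Arrival, DateOnly Departure);

public static class QueryService
{
    // Same body as the Reserve function shown above: removes the booked
    // room from the view and keeps the rest in their original order.
    public static IReadOnlyCollection<Room> Reserve(
        Booking booking,
        IReadOnlyCollection<Room> existingView)
    {
        return existingView.Where(r => r.Name != booking.RoomName).ToList();
    }
}
```

Since Reserve is a pure function, a test needs no Test Doubles at all.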

I've also done the exercise in F#, essentially porting the C# implementation, which only highlights how simple it all is:

    module CommandService =
        let bookARoom (writeRegistry : IWriteRegistry) (readRegistry : IReadRegistry) booking =
            writeRegistry.Save booking
            readRegistry.RoomBooked booking

    module QueryService =
        let reserve booking existingView =
            existingView |> Seq.filter (fun r -> r.Name <> booking.RoomName)

        let getFreeRooms (readRegistry : IReadRegistry) arrival departure =
            readRegistry.GetFreeRooms arrival departure

That's both the Command side and the Query side!

This represents my honest interpretation of the kata. Really, there's nothing to it.

The reason I still find the exercise interesting is that it explores other aspects of TDD than most katas. The most common katas require you to write a little algorithm: Bowling, Word wrap, Roman Numerals, Diamond, Tennis, etc.

The CQRS Booking kata suggests no interesting algorithm, but rather teaches some important lessons about software architecture, separation of concerns, and, if you approach it with TDD, real-world test automation. In contrast to all those algorithmic exercises, this one strongly suggests the use of Test Doubles.

Fakes #

You could attempt the kata with a dynamic 'mocking' library such as Moq or Mockito, but I haven't tried. Since Stubs and Mocks break encapsulation I favour Fake Objects instead.

Creating a Fake write registry is trivial:

    internal sealed class FakeWriteRegistry : Collection<Booking>, IWriteRegistry
    {
        public void Save(Booking booking)
        {
            Add(booking);
        }
    }
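Because this Fake derives from Collection<Booking>, a test can verify the outcome by inspecting state instead of setting up expectations about method calls. The following sketch shows the idea; the Booking record and the single-method IWriteRegistry interface are assumptions based only on the members used in this article.

```csharp
using System;
using System.Collections.ObjectModel;

// State-based verification: save a booking, then ask the Fake whether
// it now contains that booking.
var registry = new FakeWriteRegistry();
var booking = new Booking(
    "Room 101", new DateOnly(2023, 11, 13), new DateOnly(2023, 11, 15));

registry.Save(booking);

Console.WriteLine(registry.Contains(booking)); // True

// Assumed shapes, for illustration only.
public sealed record Booking(string RoomName, DateOnly Arrival, DateOnly Departure);

public interface IWriteRegistry
{
    void Save(Booking booking);
}

internal sealed class FakeWriteRegistry : Collection<Booking>, IWriteRegistry
{
    public void Save(Booking booking)
    {
        Add(booking);
    }
}
```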

Its counterpart, the Fake read registry, turns out to be much more involved: