ploeh blog danish software design

A restaurant sandwich

An Impureim Sandwich example in C#.

When learning functional programming (FP) people often struggle with how to organize code. How do you discern and maintain purity? How do you do Dependency Injection in FP? What does a functional architecture look like?

A common FP design pattern is the Impureim Sandwich. The entry point of an application is always impure, so you push all impure actions to the boundary of the system. This is also known as Functional Core, Imperative Shell. If you have a micro-operation-based architecture, which includes all web-based systems, you can often get by with a 'sandwich'. Perform impure actions to collect all the data you need. Pass all data to a pure function. Finally, use impure actions to handle the referentially transparent return value from the pure function.

No design pattern applies universally, and neither does this one. In my experience, however, it's surprisingly often possible to apply this architecture. We're far past the Pareto principle's 80 percent.

Examples may help illustrate the pattern, as well as explore its boundaries. In this article you'll see how I refactored an entry point of a REST API, specifically the PUT handler in the sample code base that accompanies Code That Fits in Your Head.

Starting point #

As discussed in the book, the architecture of the sample code base is, in fact, Functional Core, Imperative Shell. This isn't, however, the main theme of the book, and the code doesn't explicitly apply the Impureim Sandwich. In spirit, that's actually what's going on, but it isn't clear from looking at the code. This was a deliberate choice I made, because I wanted to highlight other software engineering practices. This does have the effect, though, that the Impureim Sandwich is invisible.

For example, the book follows the 80/24 rule closely. This was a didactic choice on my part. Most code bases I've seen in the wild have far too big methods, so I wanted to hammer home the message that it's possible to develop and maintain a non-trivial code base with small code blocks. This meant, however, that I had to split up HTTP request handlers (in ASP.NET known as action methods on Controllers).

The most complex HTTP handler is the one that handles PUT requests for reservations. Clients use this action when they want to make changes to a restaurant reservation.

The action method actually invoked by an HTTP request is this Put method:

[HttpPut("restaurants/{restaurantId}/reservations/{id}")] public async Task<ActionResult> Put( int restaurantId, string id, ReservationDto dto) { if (dto is null) throw new ArgumentNullException(nameof(dto)); if (!Guid.TryParse(id, out var rid)) return new NotFoundResult(); Reservation? reservation = dto.Validate(rid); if (reservation is null) return new BadRequestResult(); var restaurant = await RestaurantDatabase .GetRestaurant(restaurantId).ConfigureAwait(false); if (restaurant is null) return new NotFoundResult(); return await TryUpdate(restaurant, reservation).ConfigureAwait(false); }

Since I, for pedagogical reasons, wanted to fit each method inside an 80x24 box, I made a few somewhat unnatural design choices. The above code is one of them. While I don't consider it completely indefensible, this method does a bit of up-front input validation and verification, and then delegates execution to the TryUpdate method.

This may seem all fine and dandy until you realize that the only caller of TryUpdate is that Put method. A similar thing happens in TryUpdate: It calls a method that has only that one caller. We may try to inline those two methods to see if we can spot the Impureim Sandwich.

Inlined Transaction Script #

Inlining those two methods leave us with a larger, Transaction Script-like entry point:

[HttpPut("restaurants/{restaurantId}/reservations/{id}")] public async Task<ActionResult> Put( int restaurantId, string id, ReservationDto dto) { if (dto is null) throw new ArgumentNullException(nameof(dto)); if (!Guid.TryParse(id, out var rid)) return new NotFoundResult(); Reservation? reservation = dto.Validate(rid); if (reservation is null) return new BadRequestResult(); var restaurant = await RestaurantDatabase .GetRestaurant(restaurantId).ConfigureAwait(false); if (restaurant is null) return new NotFoundResult(); using var scope = new TransactionScope( TransactionScopeAsyncFlowOption.Enabled); var existing = await Repository .ReadReservation(restaurant.Id, reservation.Id) .ConfigureAwait(false); if (existing is null) return new NotFoundResult(); var reservations = await Repository .ReadReservations(restaurant.Id, reservation.At) .ConfigureAwait(false); reservations = reservations.Where(r => r.Id != reservation.Id).ToList(); var now = Clock.GetCurrentDateTime(); var ok = restaurant.MaitreD.WillAccept( now, reservations, reservation); if (!ok) return NoTables500InternalServerError(); await Repository.Update(restaurant.Id, reservation) .ConfigureAwait(false); scope.Complete(); return new OkObjectResult(reservation.ToDto()); }

While I've definitely seen longer methods in the wild, this variation is already so big that it no longer fits on my laptop screen. I have to scroll up and down to read the whole thing. When looking at the bottom of the method, I have to remember what was at the top, because I can no longer see it.

A major point of Code That Fits in Your Head is that what limits programmer productivity is human cognition. If you have to scroll your screen because you can't see the whole method at once, does that fit in your brain? Chances are, it doesn't.

Can you spot the Impureim Sandwich now?

If you can't, that's understandable. It's not really clear because there's quite a few small decisions being made in this code. You could argue, for example, that this decision is referentially transparent:

if (existing is null) return new NotFoundResult();

These two lines of code are deterministic and have no side effects. The branch only returns a NotFoundResult when existing is null. Additionally, these two lines of code are surrounded by impure actions both before and after. Is this the Sandwich, then?

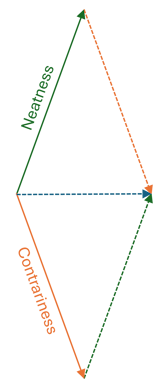

No, it's not. This is how idiomatic imperative code looks. To borrow a diagram from another article, pure and impure code is interleaved without discipline:

Even so, the above Put method implements the Functional Core, Imperative Shell architecture. The Put method is the Imperative Shell, but where's the Functional Core?

Shell perspective #

One thing to be aware of is that when looking at the Imperative Shell code, the Functional Core is close to invisible. This is because it's typically only a single function call.

In the above Put method, this is the Functional Core:

var ok = restaurant.MaitreD.WillAccept( now, reservations, reservation); if (!ok) return NoTables500InternalServerError();

It's only a few lines of code, and had I not given myself the constraint of staying within an 80 character line width, I could have instead laid it out like this and inlined the ok flag:

if (!restaurant.MaitreD.WillAccept(now, reservations, reservation)) return NoTables500InternalServerError();

Now that I try this, in fact, it turns out that this actually still stays within 80 characters. To be honest, I don't know exactly why I had that former code instead of this, but perhaps I found the latter alternative too dense. Or perhaps I simply didn't think of it. Code is rarely perfect. Usually when I revisit a piece of code after having been away from it for some time, I find some thing that I want to change.

In any case, that's beside the point. What matters here is that when you're looking through the Imperative Shell code, the Functional Core looks insignificant. Blink and you'll miss it. Even if we ignore all the other small pure decisions (the if statements) and pretend that we already have an Impureim Sandwich, from this viewpoint, the architecture looks like this:

It's tempting to ask, then: What's all the fuss about? Why even bother?

This is a natural experience for a code reader. After all, if you don't know a code base well, you often start at the entry point to try to understand how the application handles a certain stimulus. Such as an HTTP PUT request. When you do that, you see all of the Imperative Shell code before you see the Functional Core code. This could give you the wrong impression about the balance of responsibility.

After all, code like the above Put method has inlined most of the impure code so that it's right in your face. Granted, there's still some code hiding behind, say, Repository.ReadReservations, but a substantial fraction of the imperative code is visible in the method.

On the other hand, the Functional Core is just a single function call. If we inlined all of that code, too, the picture might rather look like this:

This obviously depends on the de-facto ratio of pure to imperative code. In any case, inlining the pure code is a thought experiment only, because the whole point of functional architecture is that a referentially transparent function fits in your head. Regardless of the complexity and amount of code hiding behind that MaitreD.WillAccept function, the return value is equal to the function call. It's the ultimate abstraction.

Standard combinators #

As I've already suggested, the inlined Put method looks like a Transaction Script. The cyclomatic complexity fortunately hovers on the magical number seven, and branching is exclusively organized around Guard Clauses. Apart from that, there are no nested if statements or for loops.

Apart from the Guard Clauses, this mostly looks like a procedure that runs in a straight line from top to bottom. The exception is all those small conditionals that may cause the procedure to exit prematurely. Conditions like this:

if (!Guid.TryParse(id, out var rid)) return new NotFoundResult();

or

if (reservation is null) return new BadRequestResult();

Such checks occur throughout the method. Each of them are actually small pure islands amidst all the imperative code, but each is ad hoc. Each checks if it's possible for the procedure to continue, and returns a kind of error value if it decides that it's not.

Is there a way to model such 'derailments' from the main flow?

If you've ever encountered Scott Wlaschin's Railway Oriented Programming you may already see where this is going. Railway-oriented programming is a fantastic metaphor, because it gives you a way to visualize that you have, indeed, a main track, but then you have a side track that you may shuffle some trains too. And once the train is on the side track, it can't go back to the main track.

That's how the Either monad works. Instead of all those ad-hoc if statements, we should be able to replace them with what we may call standard combinators. The most important of these combinators is monadic bind. Composing a Transaction Script like Put with standard combinators will 'hide away' those small decisions, and make the Sandwich nature more apparent.

If we had had pure code, we could just have composed Either-valued functions. Unfortunately, most of what's going on in the Put method happens in a Task-based context. Thankfully, Either is one of those monads that nest well, implying that we can turn the combination into a composed TaskEither monad. The linked article shows the core TaskEither SelectMany implementations.

The way to encode all those small decisions between 'main track' or 'side track', then, is to wrap 'naked' values in the desired Task<Either<L, R>> container:

Task.FromResult(id.TryParseGuid().OnNull((ActionResult)new NotFoundResult()))

This little code snippet makes use of a few small building blocks that we also need to introduce. First, .NET's standard TryParse APIs don't, compose, but since they're isomorphic to Maybe-valued functions, you can write an adapter like this:

public static Guid? TryParseGuid(this string candidate) { if (Guid.TryParse(candidate, out var guid)) return guid; else return null; }

In this code base, I treat nullable reference types as equivalent to the Maybe monad, but if your language doesn't have that feature, you can use Maybe instead.

To implement the Put method, however, we don't want nullable (or Maybe) values. We need Either values, so we may introduce a natural transformation:

public static Either<L, R> OnNull<L, R>(this R? candidate, L left) where R : struct { if (candidate.HasValue) return Right<L, R>(candidate.Value); return Left<L, R>(left); }

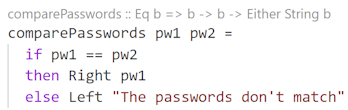

In Haskell one might just make use of the built-in Maybe catamorphism:

ghci> maybe (Left "boo!") Right $ Just 123 Right 123 ghci> maybe (Left "boo!") Right $ Nothing Left "boo!"

Such conversions from Maybe to Either hover just around the Fairbairn threshold, but since we are going to need it more than once, it makes sense to add a specialized OnNull transformation to the C# code base. The one shown here handles nullable value types, but the code base also includes an overload that handles nullable reference types. It's almost identical.

Support for query syntax #

There's more than one way to consume monadic values in C#. While many C# developers like LINQ, most seem to prefer the familiar method call syntax; that is, just call the Select, SelectMany, and Where methods as the normal extension methods they are. Another option, however, is to use query syntax. This is what I'm aiming for here, since it'll make it easier to spot the Impureim Sandwich.

You'll see the entire sandwich later in the article. Before that, I'll highlight details and explain how to implement them. You can always scroll down to see the end result, and then scroll back here, if that's more to your liking.

The sandwich starts by parsing the id into a GUID using the above building blocks:

var sandwich = from rid in Task.FromResult(id.TryParseGuid().OnNull((ActionResult)new NotFoundResult()))

It then immediately proceeds to Validate (parse, really) the dto into a proper Domain Model:

from reservation in dto.Validate(rid).OnNull((ActionResult)new BadRequestResult())

Notice that the second from expression doesn't wrap the result with Task.FromResult. How does that work? Is the return value of dto.Validate already a Task? No, this works because I added 'degenerate' SelectMany overloads:

public static Task<Either<L, R1>> SelectMany<L, R, R1>( this Task<Either<L, R>> source, Func<R, Either<L, R1>> selector) { return source.SelectMany(x => Task.FromResult(selector(x))); } public static Task<Either<L, R1>> SelectMany<L, U, R, R1>( this Task<Either<L, R>> source, Func<R, Either<L, U>> k, Func<R, U, R1> s) { return source.SelectMany(x => k(x).Select(y => s(x, y))); }

Notice that the selector only produces an Either<L, R1> value, rather than Task<Either<L, R1>>. This allows query syntax to 'pick up' the previous value (rid, which is 'really' a Task<Either<ActionResult, Guid>>) and continue with a function that doesn't produce a Task, but rather just an Either value. The first of these two overloads then wraps that Either value and wraps it with Task.FromResult. The second overload is just the usual ceremony that enables query syntax.

Why, then, doesn't the sandwich use the same trick for rid? Why does it explicitly call Task.FromResult?

As far as I can tell, this is because of type inference. It looks as though the C# compiler infers the monad's type from the first expression. If I change the first expression to

from rid in id.TryParseGuid().OnNull((ActionResult)new NotFoundResult())

the compiler thinks that the query expression is based on Either<L, R>, rather than Task<Either<L, R>>. This means that once we run into the first Task value, the entire expression no longer works.

By explicitly wrapping the first expression in a Task, the compiler correctly infers the monad we'd like it to. If there's a more elegant way to do this, I'm not aware of it.

Values that don't fail #

The sandwich proceeds to query various databases, using the now-familiar OnNull combinators to transform nullable values to Either values.

from restaurant in RestaurantDatabase .GetRestaurant(restaurantId) .OnNull((ActionResult)new NotFoundResult()) from existing in Repository .ReadReservation(restaurant.Id, reservation.Id) .OnNull((ActionResult)new NotFoundResult())

This works like before because both GetRestaurant and ReadReservation are queries that may fail to return a value. Here's the interface definition of ReadReservation:

Task<Reservation?> ReadReservation(int restaurantId, Guid id);

Notice the question mark that indicates that the result may be null.

The GetRestaurant method is similar.

The next query that the sandwich has to perform, however, is different. The return type of the ReadReservations method is Task<IReadOnlyCollection<Reservation>>. Notice that the type contained in the Task is not nullable. Barring database connection errors, this query can't fail. If it finds no data, it returns an empty collection.

Since the value isn't nullable, we can't use OnNull to turn it into a Task<Either<L, R>> value. We could try to use the Right creation function for that.

public static Either<L, R> Right<L, R>(R right) { return Either<L, R>.Right(right); }

This works, but is awkward:

from reservations in Repository .ReadReservations(restaurant.Id, reservation.At) .Traverse(rs => Either.Right<ActionResult, IReadOnlyCollection<Reservation>>(rs))

The problem with calling Either.Right is that while the compiler can infer which type to use for R, it doesn't know what the L type is. Thus, we have to tell it, and we can't tell it what L is without also telling it what R is. Even though it already knows that.

In such scenarios, the F# compiler can usually figure it out, and GHC always can (unless you add some exotic language extensions to your code). C# doesn't have any syntax that enables you to tell the compiler about only the type that it doesn't know about, and let it infer the rest.

All is not lost, though, because there's a little trick you can use in cases such as this. You can let the C# compiler infer the R type so that you only have to tell it what L is. It's a two-stage process. First, define an extension method on R:

public static RightBuilder<R> ToRight<R>(this R right) { return new RightBuilder<R>(right); }

The only type argument on this ToRight method is R, and since the right parameter is of the type R, the C# compiler can always infer the type of R from the type of right.

What's RightBuilder<R>? It's this little auxiliary class:

public sealed class RightBuilder<R> { private readonly R right; public RightBuilder(R right) { this.right = right; } public Either<L, R> WithLeft<L>() { return Either.Right<L, R>(right); } }

The code base for Code That Fits in Your Head was written on .NET 3.1, but today you could have made this a record instead. The only purpose of this class is to break the type inference into two steps so that the R type can be automatically inferred. In this way, you only need to tell the compiler what the L type is.

from reservations in Repository .ReadReservations(restaurant.Id, reservation.At) .Traverse(rs => rs.ToRight().WithLeft<ActionResult>())

As indicated, this style of programming isn't language-neutral. Even if you find this little trick neat, I'd much rather have the compiler just figure it out for me. The entire sandwich query expression is already defined as working with Task<Either<ActionResult, R>>, and the L type can't change like the R type can. Functional compilers can figure this out, and while I intend this article to show object-oriented programmers how functional programming sometimes work, I don't wish to pretend that it's a good idea to write code like this in C#. I've covered that ground already.

Not surprisingly, there's a mirror-image ToLeft/WithRight combo, too.

Working with Commands #

The ultimate goal with the Put method is to modify a row in the database. The method to do that has this interface definition:

Task Update(int restaurantId, Reservation reservation);

I usually call that non-generic Task class for 'asynchronous void' when explaining it to non-C# programmers. The Update method is an asynchronous Command.

Task and void aren't legal values for use with LINQ query syntax, so you have to find a way to work around that limitation. In this case I defined a local helper method to make it look like a Query:

async Task<Reservation> RunUpdate(int restaurantId, Reservation reservation, TransactionScope scope) { await Repository.Update(restaurantId, reservation).ConfigureAwait(false); scope.Complete(); return reservation; }

It just echoes back the reservation parameter once the Update has completed. This makes it composable in the larger query expression.

You'll probably not be surprised when I tell you that both F# and Haskell handle this scenario gracefully, without requiring any hoop-jumping.

Full sandwich #

Those are all the building block. Here's the full sandwich definition, colour-coded like the examples in Impureim sandwich.

Task<Either<ActionResult, OkObjectResult>> sandwich = from rid in Task.FromResult( id.TryParseGuid().OnNull((ActionResult)new NotFoundResult())) from reservation in dto.Validate(rid).OnNull( (ActionResult)new BadRequestResult()) from restaurant in RestaurantDatabase .GetRestaurant(restaurantId) .OnNull((ActionResult)new NotFoundResult()) from existing in Repository .ReadReservation(restaurant.Id, reservation.Id) .OnNull((ActionResult)new NotFoundResult()) from reservations in Repository .ReadReservations(restaurant.Id, reservation.At) .Traverse(rs => rs.ToRight().WithLeft<ActionResult>()) let now = Clock.GetCurrentDateTime() let reservations2 = reservations.Where(r => r.Id != reservation.Id) let ok = restaurant.MaitreD.WillAccept( now, reservations2, reservation) from reservation2 in ok ? reservation.ToRight().WithLeft<ActionResult>() : NoTables500InternalServerError().ToLeft().WithRight<Reservation>() from reservation3 in RunUpdate(restaurant.Id, reservation2, scope) .Traverse(r => r.ToRight().WithLeft<ActionResult>()) select new OkObjectResult(reservation3.ToDto());

As is evident from the colour-coding, this isn't quite a sandwich. The structure is honestly more accurately depicted like this:

As I've previously argued, while the metaphor becomes strained, this still works well as a functional-programming architecture.

As defined here, the sandwich value is a Task that must be awaited.

Either<ActionResult, OkObjectResult> either = await sandwich.ConfigureAwait(false); return either.Match(x => x, x => x);

By awaiting the task, we get an Either value. The Put method, on the other hand, must return an ActionResult. How do you turn an Either object into a single object?

By pattern matching on it, as the code snippet shows. The L type is already an ActionResult, so we return it without changing it. If C# had had a built-in identity function, I'd used that, but idiomatically, we instead use the x => x lambda expression.

The same is the case for the R type, because OkObjectResult inherits from ActionResult. The identity expression automatically performs the type conversion for us.

This, by the way, is a recurring pattern with Either values that I run into in all languages. You've essentially computed an Either<T, T>, with the same type on both sides, and now you just want to return whichever T value is contained in the Either value. You'd think this is such a common pattern that Haskell has a nice abstraction for it, but even Hoogle fails to suggest a commonly-accepted function that does this. Apparently, either id id is considered below the Fairbairn threshold, too.

Conclusion #

This article presents an example of a non-trivial Impureim Sandwich. When I introduced the pattern, I gave a few examples. I'd deliberately chosen these examples to be simple so that they highlighted the structure of the idea. The downside of that didactic choice is that some commenters found the examples too simplistic. Therefore, I think that there's value in going through more complex examples.

The code base that accompanies Code That Fits in Your Head is complex enough that it borders on the realistic. It was deliberately written that way, and since I assume that the code base is familiar to readers of the book, I thought it'd be a good resource to show how an Impureim Sandwich might look. I explicitly chose to refactor the Put method, since it's easily the most complicated process in the code base.

The benefit of that code base is that it's written in a programming language that reach a large audience. Thus, for the reader curious about functional programming I thought that this could also be a useful introduction to some intermediate concepts.

As I've commented along the way, however, I wouldn't expect anyone to write production C# code like this. If you're able to do this, you're also able to do it in a language better suited for this programming paradigm.

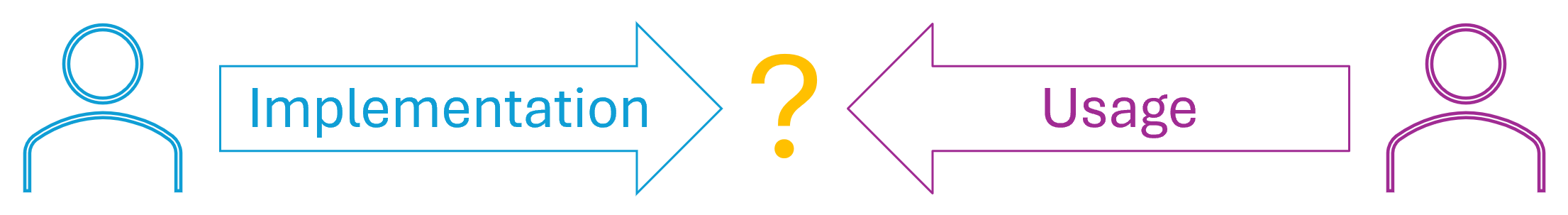

Implementation and usage mindsets

A one-dimensional take on the enduring static-versus-dynamic debate.

It recently occurred to me that one possible explanation for the standing, and probably never-ending, debate about static versus dynamic types may be that each camp have disjoint perspectives on the kinds of problems their favourite languages help them solve. In short, my hypothesis is that perhaps lovers of dynamically-typed languages often approach a problem from an implementation mindset, whereas proponents of static types emphasize usage.

I'll expand on this idea here, and then provide examples in two subsequent articles.

Background #

For years I've struggled to understand 'the other side'. While I'm firmly in the statically typed camp, I realize that many highly skilled programmers and thought leaders enjoy, or get great use out of, dynamically typed languages. This worries me, because it might indicate that I'm stuck in a local maximum.

In other words, just because I, personally, prefer static types, it doesn't follow that static types are universally better than dynamic types.

In reality, it's probably rather the case that we're dealing with a false dichotomy, and that the problem is really multi-dimensional.

"Let me stop you right there: I don't think there is a real dynamic typing versus static typing debate.

"What such debates normally are is language X vs language Y debates (where X happens to be dynamic and Y happens to be static)."

Even so, I can't help thinking about such things. Am I missing something?

For the past few years, I've dabbled with Python to see what writing in a popular dynamically typed language is like. It's not a bad language, and I can clearly see how it's attractive. Even so, I'm still frustrated every time I return to some Python code after a few weeks or more. The lack of static types makes it hard for me to pick up, or revisit, old code.

A question of perspective? #

Whenever I run into a difference of opinion, I often interpret it as a difference in perspective. Perhaps it's my academic background as an economist, but I consider it a given that people have different motivations, and that incentives influence actions.

A related kind of analysis deals with problem definitions. Are we even trying to solve the same problem?

I've discussed such questions before, but in a different context. Here, it strikes me that perhaps programmers who gravitate toward dynamically typed languages are focused on another problem than the other group.

Again, I'd like to emphasize that I don't consider the world so black and white in reality. Some developers straddle the two camps, and as the above Kevlin Henney quote suggests, there really aren't only two kinds of languages. C and Haskell are both statically typed, but the similarities stop there. Likewise, I don't know if it's fair to put JavaScript and Clojure in the same bucket.

That said, I'd still like to offer the following hypothesis, in the spirit that although all models are wrong, some are useful.

The idea is that if you're trying to solve a problem related to implementation, dynamically typed languages may be more suitable. If you're trying to implement an algorithm, or even trying to invent one, a dynamic language seems useful. One year, I did a good chunk of Advent of Code in Python, and didn't find it harder than in Haskell. (I ultimately ran out of steam for reasons unrelated to Python.)

On the other hand, if your main focus may be usage of your code, perhaps you'll find a statically typed language more useful. At least, I do. I can use the static type system to communicate how my APIs work. How to instantiate my classes. How to call my functions. How return values are shaped. In other words, the preconditions, invariants, and postconditions of my reusable code: Encapsulation.

Examples #

Some examples may be in order. In the next two articles, I'll first examine how easy it is to implement an algorithm in various programming languages. Then I'll discuss how to encapsulate that algorithm.

The articles will both discuss the rod-cutting problem from Introduction to Algorithms, but I'll introduce the problem in the next article.

Conclusion #

I'd be naive if I believed that a single model can fully explain why some people prefer dynamically typed languages, and others rather like statically typed languages. Even so, suggesting a model helps me understand how to analyze problems.

My hypothesis is that dynamically typed languages may be suitable for implementing algorithms, whereas statically typed languages offer better encapsulation.

This may be used as a heuristic for 'picking the right tool for the job'. If I need to suss out an algorithm, perhaps I should do it in Python. If, on the other hand, I need to publish a reusable library, perhaps Haskell is a better choice.

Next: Implementing rod-cutting.

Short-circuiting an asynchronous traversal

Another C# example.

This article is a continuation of an earlier post about refactoring a piece of imperative code to a functional architecture. It all started with a Stack Overflow question, but read the previous article, and you'll be up to speed.

Imperative outset #

To begin, consider this mostly imperative code snippet:

var storedItems = new List<ShoppingListItem>(); var failedItems = new List<ShoppingListItem>(); var state = (storedItems, failedItems, hasError: false); foreach (var item in itemsToUpdate) { OneOf<ShoppingListItem, NotFound, Error> updateResult = await UpdateItem(item, dbContext); state = updateResult.Match<(List<ShoppingListItem>, List<ShoppingListItem>, bool)>( storedItem => { storedItems.Add(storedItem); return state; }, notFound => { failedItems.Add(item); return state; }, error => { state.hasError = true; return state; } ); if (state.hasError) return Results.BadRequest(); } await dbContext.SaveChangesAsync(); return Results.Ok(new BulkUpdateResult([.. storedItems], [.. failedItems]));

I'll recap a few points from the previous article. Apart from one crucial detail, it's similar to the other post. One has to infer most of the types and APIs, since the original post didn't show more code than that. If you're used to engaging with Stack Overflow questions, however, it's not too hard to figure out what most of the moving parts do.

The most non-obvious detail is that the code uses a library called OneOf, which supplies general-purpose, but rather abstract, sum types. Both the container type OneOf, as well as the two indicator types NotFound and Error are defined in that library.

The Match method implements standard Church encoding, which enables the code to pattern-match on the three alternative values that UpdateItem returns.

One more detail also warrants an explicit description: The itemsToUpdate object is an input argument of the type IEnumerable<ShoppingListItem>.

The major difference from before is that now the update process short-circuits on the first Error. If an error occurs, it stops processing the rest of the items. In that case, it now returns Results.BadRequest(), and it doesn't save the changes to dbContext.

The implementation makes use of mutable state and undisciplined I/O. How do you refactor it to a more functional design?

Short-circuiting traversal #

The standard Traverse function isn't lazy, or rather, it does consume the entire input sequence. Even various Haskell data structures I investigated do that. And yes, I even tried to traverse ListT. If there's a data structure that you can traverse with deferred execution of I/O-bound actions, I'm not aware of it.

That said, all is not lost, but you'll need to implement a more specialized traversal. While consuming the input sequence, the function needs to know when to stop. It can't do that on just any IEnumerable<T>, because it has no information about T.

If, on the other hand, you specialize the traversal to a sequence of items with more information, you can stop processing if it encounters a particular condition. You could generalize this to, say, IEnumerable<Either<L, R>>, but since I already have the OneOf library in scope, I'll use that, instead of implementing or pulling in a general-purpose Either data type.

In fact, I'll just use a three-way OneOf type compatible with the one that UpdateItem returns.

internal static async Task<IEnumerable<OneOf<T1, T2, Error>>> Sequence<T1, T2>( this IEnumerable<Task<OneOf<T1, T2, Error>>> tasks) { var results = new List<OneOf<T1, T2, Error>>(); foreach (var task in tasks) { var result = await task; results.Add(result); if (result.IsT2) break; } return results; }

This implementation doesn't care what T1 or T2 is, so they're free to be ShoppingListItem and NotFound. The third type argument, on the other hand, must be Error.

The if conditional looks a bit odd, but as I wrote, the types that ship with the OneOf library have rather abstract APIs. A three-way OneOf value comes with three case tests called IsT0, IsT1, and IsT2. Notice that the library uses a zero-indexed naming convention for its type parameters. IsT2 returns true if the value is the third kind, in this case Error. If a task turns out to produce an Error, the Sequence method adds that one error, but then stops processing any remaining items.

Some readers may complain that the entire implementation of Sequence is imperative. It hardly matters that much, since the mutation doesn't escape the method. The behaviour is as functional as it's possible to make it. It's fundamentally I/O-bound, so we can't consider it a pure function. That said, if we hypothetically imagine that all the tasks are deterministic and have no side effects, the Sequence function does become a pure function when viewed as a black box. From the outside, you can't tell that the implementation is imperative.

It is possible to implement Sequence in a proper functional style, and it might make a good exercise. I think, however, that it'll be difficult in C#. In F# or Haskell I'd use recursion, and while you can do that in C#, I admit that I've lost sight of whether or not tail recursion is supported by the C# compiler.

Be that as it may, the traversal implementation doesn't change.

internal static Task<IEnumerable<OneOf<TResult, T2, Error>>> Traverse<T1, T2, TResult>( this IEnumerable<T1> items, Func<T1, Task<OneOf<TResult, T2, Error>>> selector) { return items.Select(selector).Sequence(); }

You can now Traverse the itemsToUpdate:

// Impure IEnumerable<OneOf<ShoppingListItem, NotFound<ShoppingListItem>, Error>> results = await itemsToUpdate.Traverse(item => UpdateItem(item, dbContext));

As the // Impure comment may suggest, this constitutes the first impure layer of an Impureim Sandwich.

Aggregating the results #

Since the above statement awaits the traversal, the results object is a 'pure' object that can be passed to a pure function. This does, however, assume that ShoppingListItem is an immutable object.

The next step must collect results and NotFound-related failures, but contrary to the previous article, it must short-circuit if it encounters an Error. This again suggests an Either-like data structure, but again I'll repurpose a OneOf container. I'll start by defining a seed for an aggregation (a left fold).

var seed = OneOf<(IEnumerable<ShoppingListItem>, IEnumerable<ShoppingListItem>), Error> .FromT0(([], []));

This type can be either a tuple or an error. The .NET tendency is often to define an explicit Result<TSuccess, TFailure> type, where TSuccess is defined to the left of TFailure. This, for example, is how F# defines Result types, and other .NET libraries tend to emulate that design. That's also what I've done here, although I admit that I'm regularly confused when going back and forth between F# and Haskell, where the Right case is idiomatically considered to indicate success.

As already discussed, OneOf follows a zero-indexed naming convention for type parameters, so FromT0 indicates the first (or leftmost) case. The seed is thus initialized with a tuple that contains two empty sequences.

As in the previous article, you can now use the Aggregate method to collect the result you want.

OneOf<BulkUpdateResult, Error> result = results .Aggregate( seed, (state, result) => result.Match( storedItem => state.MapT0( t => (t.Item1.Append(storedItem), t.Item2)), notFound => state.MapT0( t => (t.Item1, t.Item2.Append(notFound.Item))), e => e)) .MapT0(t => new BulkUpdateResult(t.Item1.ToArray(), t.Item2.ToArray()));

This expression is a two-step composition. I'll get back to the concluding MapT0 in a moment, but let's first discuss what happens in the Aggregate step. Since the state is now a discriminated union, the big lambda expression not only has to Match on the result, but it also has to deal with the two mutually exclusive cases in which state can be.

Although it comes third in the code listing, it may be easiest to explain if we start with the error case. Keep in mind that the seed starts with the optimistic assumption that the operation is going to succeed. If, however, we encounter an error e, we now switch the state to the Error case. Once in that state, it stays there.

The two other result cases map over the first (i.e. the success) case, appending the result to the appropriate sequence in the tuple t. Since these expressions map over the first (zero-indexed) case, these updates only run as long as the state is in the success case. If the state is in the error state, these lambda expressions don't run, and the state doesn't change.

After having collected the tuple of sequences, the final step is to map over the success case, turning the tuple t into a BulkUpdateResult. That's what MapT0 does: It maps over the first (zero-indexed) case, which contains the tuple of sequences. It's a standard functor projection.

Saving the changes and returning the results #

The final, impure step in the sandwich is to save the changes and return the results:

// Impure return await result.Match( async bulkUpdateResult => { await dbContext.SaveChangesAsync(); return Results.Ok(bulkUpdateResult); }, _ => Task.FromResult(Results.BadRequest()));

Note that it only calls dbContext.SaveChangesAsync() in case the result is a success.

Accumulating the bulk-update result #

So far, I've assumed that the final BulkUpdateResult class is just a simple immutable container without much functionality. If, however, we add some copy-and-update functions to it, we can use that to aggregate the result, instead of an anonymous tuple.

internal BulkUpdateResult Store(ShoppingListItem item) => new([.. StoredItems, item], FailedItems); internal BulkUpdateResult Fail(ShoppingListItem item) => new(StoredItems, [.. FailedItems, item]);

I would have personally preferred the name NotFound instead of Fail, but I was going with the original post's failedItems terminology, and I thought that it made more sense to call a method Fail when it adds to a collection called FailedItems.

Adding these two instance methods to BulkUpdateResult simplifies the composing code:

// Pure OneOf<BulkUpdateResult, Error> result = results .Aggregate( OneOf<BulkUpdateResult, Error>.FromT0(new([], [])), (state, result) => result.Match( storedItem => state.MapT0(bur => bur.Store(storedItem)), notFound => state.MapT0(bur => bur.Fail(notFound.Item)), e => e));

This variation starts with an empty BulkUpdateResult and then uses Store or Fail as appropriate to update the state. The final, impure step of the sandwich remains the same.

Conclusion #

It's a bit more tricky to implement a short-circuiting traversal than the standard traversal. You can, still, implement a specialized Sequence or Traverse method, but it requires that the input stream carries enough information to decide when to stop processing more items. In this article, I used a specialized three-way union, but you could generalize this to use a standard Either or Result type.

Nested monads

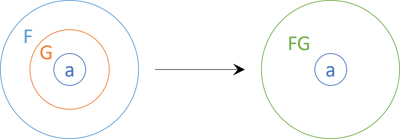

You can stack some monads in such a way that the composition is also a monad.

This article is part of a series of articles about functor relationships. In a previous article you learned that nested functors form a functor. You may have wondered if monads compose in the same way. Does a monad nested in a monad form a monad?

As far as I know, there's no universal rule like that, but some monads compose well. Fortunately, it's been my experience that the combinations that you need in practice are among those that exist and are well-known. In a Haskell context, it's often the case that you need to run some kind of 'effect' inside IO. Perhaps you want to use Maybe or Either nested within IO.

In .NET, you may run into a similar need to compose task-based programming with an effect. This happens more often in F# than in C#, since F# comes with other native monads (option and Result, to name the most common).

Abstract shape #

You'll see some real examples in a moment, but as usual it helps to outline what it is that we're looking for. Imagine that you have a monad. We'll call it F in keeping with tradition. In this article series, you've seen how two or more functors compose. When discussing the abstract shapes of things, we've typically called our two abstract functors F and G. I'll stick to that naming scheme here, because monads are functors (that you can flatten).

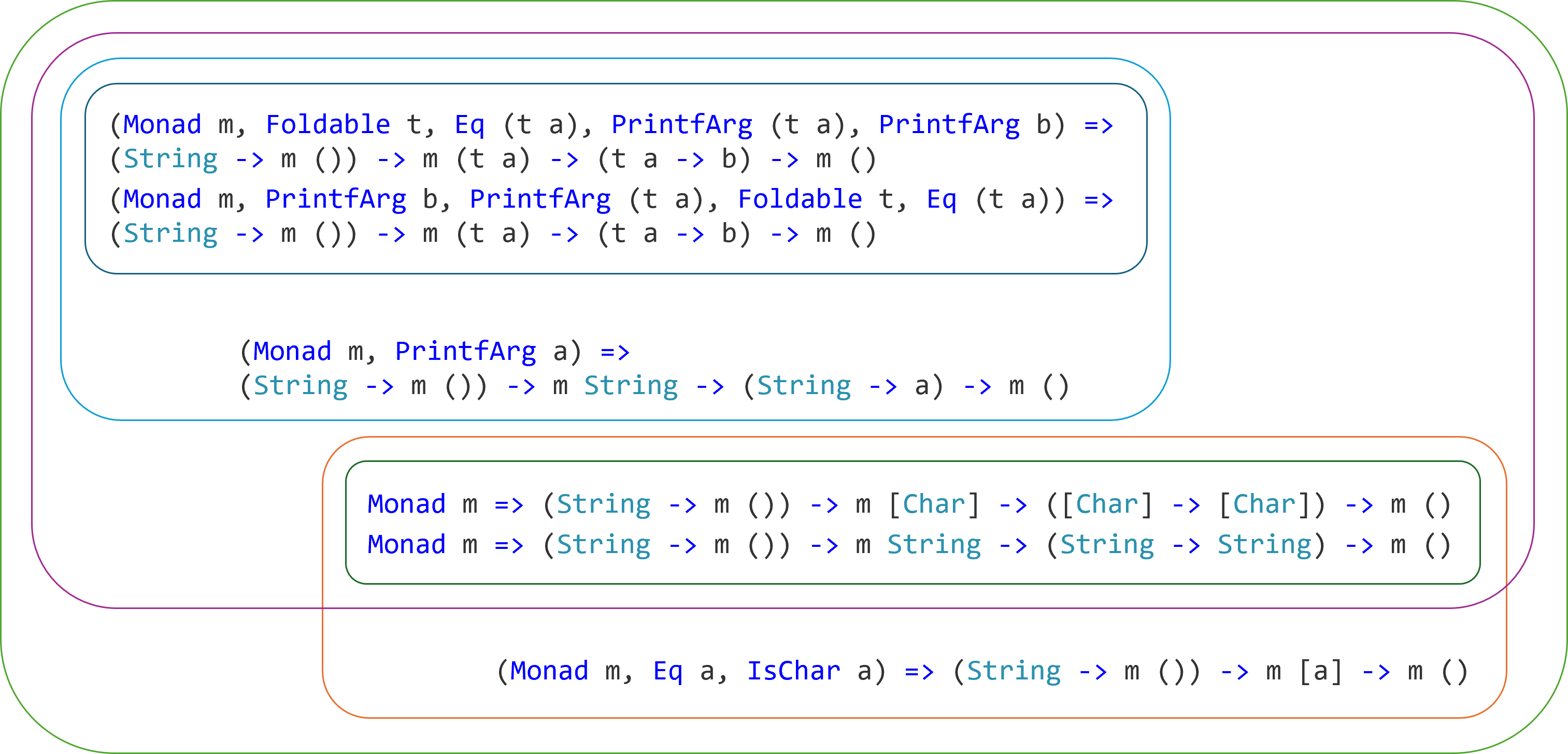

Now imagine that you have a value that stacks two monads: F<G<T>>. If the inner monad G is the 'right' kind of monad, that configuration itself forms a monad.

In the diagram, I've simply named the combined monad FG, which is a naming strategy I've seen in the real world, too: TaskResult, etc.

As I've already mentioned, if there's a general theorem that says that this is always possible, I'm not aware of it. To the contrary, I seem to recall reading that this is distinctly not the case, but the source escapes me at the moment. One hint, though, is offered in the documentation of Data.Functor.Compose:

"The composition of applicative functors is always applicative, but the composition of monads is not always a monad."

Thankfully, the monads that you mostly need to compose do, in fact, compose. They include Maybe, Either, State, Reader, and Identity (okay, that one maybe isn't that useful). In other words, any monad F that composes with e.g. Maybe, that is, F<Maybe<T>>, also forms a monad.

Notice that it's the 'inner' monad that determines whether composition is possible. Not the 'outer' monad.

For what it's worth, I'm basing much of this on my personal experience, which was again helpfully guided by Control.Monad.Trans.Class. I don't, however, wish to turn this article into an article about monad transformers, because if you already know Haskell, you can read the documentation and look at examples. And if you don't know Haskell, the specifics of monad transformers don't readily translate to languages like C# or F#.

The conclusions do translate, but the specific language mechanics don't.

Let's look at some common examples.

TaskMaybe monad #

We'll start with a simple, yet realistic example. The article Asynchronous Injection shows a simple operation that involves reading from a database, making a decision, and potentially writing to the database. The final composition, repeated here for your convenience, is an asynchronous (that is, Task-based) process.

return await Repository.ReadReservations(reservation.Date) .Select(rs => maîtreD.TryAccept(rs, reservation)) .SelectMany(m => m.Traverse(Repository.Create)) .Match(InternalServerError("Table unavailable"), Ok);

The problem here is that TryAccept returns Maybe<Reservation>, but since the overall workflow already 'runs in' an asynchronous monad (Task), the monads are now nested as Task<Maybe<T>>.

The way I dealt with that issue in the above code snippet was to rely on a traversal, but it's actually an inelegant solution. The way that the SelectMany invocation maps over the Maybe<Reservation> m is awkward. Instead of composing a business process, the scaffolding is on display, so to speak. Sometimes this is unavoidable, but at other times, there may be a better way.

In my defence, when I wrote that article in 2019 I had another pedagogical goal than teaching nested monads. It turns out, however, that you can rewrite the business process using the Task<Maybe<T>> stack as a monad in its own right.

A monad needs two functions: return and either bind or join. In C# or F#, you can often treat return as 'implied', in the sense that you can always wrap new Maybe<T> in a call to Task.FromResult. You'll see that in a moment.

While you can be cavalier about monadic return, you'll need to explicitly implement either bind or join. In this case, it turns out that the sample code base already had a SelectMany implementation:

public static async Task<Maybe<TResult>> SelectMany<T, TResult>( this Task<Maybe<T>> source, Func<T, Task<Maybe<TResult>>> selector) { Maybe<T> m = await source; return await m.Match( nothing: Task.FromResult(new Maybe<TResult>()), just: x => selector(x)); }

The method first awaits the Maybe value, and then proceeds to Match on it. In the nothing case, you see the implicit return being used. In the just case, the SelectMany method calls selector with whatever x value was contained in the Maybe object. The result of calling selector already has the desired type Task<Maybe<TResult>>, so the implementation simply returns that value without further ado.

This enables you to rewrite the SelectMany call in the business process so that it instead looks like this:

return await Repository.ReadReservations(reservation.Date) .Select(rs => maîtreD.TryAccept(rs, reservation)) .SelectMany(r => Repository.Create(r).Select(i => new Maybe<int>(i))) .Match(InternalServerError("Table unavailable"), Ok);

At first glance, it doesn't look like much of an improvement. To be sure, the lambda expression within the SelectMany method no longer operates on a Maybe value, but rather on the Reservation Domain Model r. On the other hand, we're now saddled with that graceless Select(i => new Maybe<int>(i)).

Had this been Haskell, we could have made this more succinct by eta reducing the Maybe case constructor and used the <$> infix operator instead of fmap; something like Just <$> create r. In C#, on the other hand, we can do something that Haskell doesn't allow. We can overload the SelectMany method:

public static Task<Maybe<TResult>> SelectMany<T, TResult>( this Task<Maybe<T>> source, Func<T, Task<TResult>> selector) { return source.SelectMany(x => selector(x).Select(y => new Maybe<TResult>(y))); }

This overload generalizes the 'pattern' exemplified by the above business process composition. Instead of a specific method call, it now works with any selector function that returns Task<TResult>. Since selector only returns a Task<TResult> value, and not a Task<Maybe<TResult>> value, as actually required in this nested monad, the overload has to map (that is, Select) the result by wrapping it in a new Maybe<TResult>.

This now enables you to improve the business process composition to something more readable.

return await Repository.ReadReservations(reservation.Date) .Select(rs => maîtreD.TryAccept(rs, reservation)) .SelectMany(Repository.Create) .Match(InternalServerError("Table unavailable"), Ok);

It even turned out to be possible to eta reduce the lambda expression instead of the (also valid, but more verbose) r => Repository.Create(r).

If you're interested in the sample code, I've pushed a branch named use-monad-stack to the GitHub repository.

Not surprisingly, the F# bind function is much terser:

let bind f x = async { match! x with | Some x' -> return! f x' | None -> return None }

You can find that particular snippet in the code base that accompanies the article Refactoring registration flow to functional architecture, although as far as I can tell, it's not actually in use in that code base. I probably just added it because I could.

You can find Haskell examples of combining MaybeT with IO in various articles on this blog. One of them is Dependency rejection.

TaskResult monad #

A similar, but slightly more complex, example involves nesting Either values in asynchronous workflows. In some languages, such as F#, Either is rather called Result, and asynchronous workflows are modelled by a Task container, as already demonstrated above. Thus, on .NET at least, this nested monad is often called TaskResult, but you may also see AsyncResult, AsyncEither, or other combinations. Depending on the programming language, such names may be used only for modules, and not for the container type itself. In C# or F# code, for example, you may look in vain after a class called TaskResult<T>, but rather find a TaskResult static class or module.

In C# you can define monadic bind like this:

public static async Task<Either<L, R1>> SelectMany<L, R, R1>( this Task<Either<L, R>> source, Func<R, Task<Either<L, R1>>> selector) { if (source is null) throw new ArgumentNullException(nameof(source)); Either<L, R> x = await source.ConfigureAwait(false); return await x.Match( l => Task.FromResult(Either.Left<L, R1>(l)), selector).ConfigureAwait(false); }

Here I've again passed the eta-reduced selector straight to the right case of the Either value, but r => selector(r) works, too.

The left case shows another example of 'implicit monadic return'. I didn't bother defining an explicit Return function, but rather use Task.FromResult(Either.Left<L, R1>(l)) to return a Task<Either<L, R1>> value.

As is the case with C#, you'll also need to add a special overload to enable the syntactic sugar of query expressions:

public static Task<Either<L, R1>> SelectMany<L, U, R, R1>( this Task<Either<L, R>> source, Func<R, Task<Either<L, U>>> k, Func<R, U, R1> s) { return source.SelectMany(x => k(x).Select(y => s(x, y))); }

You'll see a comprehensive example using these functions in a future article.

In F# I'd often first define a module with a few functions including bind, and then use those implementations to define a computation expression, but in one article, I jumped straight to the expression builder:

type AsyncEitherBuilder () = // Async<Result<'a,'c>> * ('a -> Async<Result<'b,'c>>) // -> Async<Result<'b,'c>> member this.Bind(x, f) = async { let! x' = x match x' with | Success s -> return! f s | Failure f -> return Failure f } // 'a -> 'a member this.ReturnFrom x = x let asyncEither = AsyncEitherBuilder ()

That article also shows usage examples. Another article, A conditional sandwich example, shows more examples of using this nested monad, although there, the computation expression is named taskResult.

Stateful computations that may fail #

To be honest, you mostly run into a scenario where nested monads are useful when some kind of 'effect' (errors, mostly) is embedded in an I/O-bound computation. In Haskell, this means IO, in C# Task, and in F# either Task or Async.

Other combinations are possible, however, but I've rarely encountered a need for additional nested monads outside of Haskell. In multi-paradigmatic languages, you can usually find other good designs that address issues that you may occasionally run into in a purely functional language. The following example is a Haskell-only example. You can skip it if you don't know or care about Haskell.

Imagine that you want to keep track of some statistics related to a software service you offer. If the variance of some number (say, response time) exceeds 10 then you want to issue an alert that the SLA was violated. Apparently, in your system, reliability means staying consistent.

You have millions of observations, and they keep arriving, so you need an online algorithm. For average and variance we'll use Welford's algorithm.

The following code uses these imports:

import Control.Monad import Control.Monad.Trans.State.Strict import Control.Monad.Trans.Maybe

First, you can define a data structure to hold the aggregate values required for the algorithm, as well as an initial, empty value:

data Aggregate = Aggregate { count :: Int, meanA :: Double, m2 :: Double } deriving (Eq, Show) emptyA :: Aggregate emptyA = Aggregate 0 0 0

You can also define a function to update the aggregate values with a new observation:

update :: Aggregate -> Double -> Aggregate update (Aggregate count mean m2) x = let count' = count + 1 delta = x - mean mean' = mean + delta / fromIntegral count' delta2 = x - mean' m2' = m2 + delta * delta2 in Aggregate count' mean' m2'

Given an existing Aggregate record and a new observation, this function implements the algorithm to calculate a new Aggregate record.

The values in an Aggregate record, however, are only intermediary values that you can use to calculate statistics such as mean, variance, and sample variance. You'll need a data type and function to do that, as well:

data Statistics = Statistics { mean :: Double, variance :: Double, sampleVariance :: Maybe Double } deriving (Eq, Show) extractStatistics :: Aggregate -> Maybe Statistics extractStatistics (Aggregate count mean m2) = if count < 1 then Nothing else let variance = m2 / fromIntegral count sampleVariance = if count < 2 then Nothing else Just $ m2 / fromIntegral (count - 1) in Just $ Statistics mean variance sampleVariance

This is where the computation becomes 'failure-prone'. Granted, we only have a real problem when we have zero observations, but this still means that we need to return a Maybe Statistics value in order to avoid division by zero.

(There might be other designs that avoid that problem, or you might simply decide to tolerate that edge case and code around it in other ways. I've decided to design the extractStatistics function in this particular way in order to furnish an example. Work with me here.)

Let's say that as the next step, you'd like to compose these two functions into a single function that both adds a new observation, computes the statistics, but also returns the updated Aggregate.

You could write it like this:

addAndCompute :: Double -> Aggregate -> Maybe (Statistics, Aggregate) addAndCompute x agg = do let agg' = update agg x stats <- extractStatistics agg' return (stats, agg')

This implementation uses do notation to automate handling of Nothing values. Still, it's a bit inelegant with its two agg values only distinguishable by the prime sign after one of them, and the need to explicitly return a tuple of the value and the new state.

This is the kind of problem that the State monad addresses. You could instead write the function like this:

addAndCompute :: Double -> State Aggregate (Maybe Statistics) addAndCompute x = do modify $ flip update x gets extractStatistics

You could actually also write it as a one-liner, but that's already a bit too terse to my liking:

addAndCompute :: Double -> State Aggregate (Maybe Statistics) addAndCompute x = modify (`update` x) >> gets extractStatistics

And if you really hate your co-workers, you can always visit pointfree.io to entirely obscure that expression, but I digress.

The point is that the State monad amplifies the essential and eliminates the irrelevant.

Now you'd like to add a function that issues an alert if the variance is greater than 10. Again, you could write it like this:

monitor :: Double -> State Aggregate (Maybe String) monitor x = do stats <- addAndCompute x case stats of Just Statistics { variance } -> return $ if 10 < variance then Just "SLA violation" else Nothing Nothing -> return Nothing

But again, the code is graceless with its explicit handling of Maybe cases. Whenever you see code that matches Maybe cases and maps Nothing to Nothing, your spider sense should be tingling. Could you abstract that away with a functor or monad?

Yes you can! You can use the MaybeT monad transformer, which nests Maybe computations inside another monad. In this case State:

monitor :: Double -> State Aggregate (Maybe String) monitor x = runMaybeT $ do Statistics { variance } <- MaybeT $ addAndCompute x guard (10 < variance) return "SLA Violation"

The function type is the same, but the implementation is much simpler. First, the code lifts the Maybe-valued addAndCompute result into MaybeT and pattern-matches on the variance. Since the code is now 'running in' a Maybe-like context, this line of code only executes if there's a Statistics value to extract. If, on the other hand, addAndCompute returns Nothing, the function already short-circuits there.

The guard works just like imperative Guard Clauses. The third line of code only runs if the variance is greater than 10. In that case, it returns an alert message.

The entire do workflow gets unwrapped with runMaybeT so that we return back to a normal stateful computation that may fail.

Let's try it out:

ghci> (evalState $ monitor 1 >> monitor 7) emptyA Nothing ghci> (evalState $ monitor 1 >> monitor 8) emptyA Just "SLA Violation"

Good, rigorous testing suggests that it's working.

Conclusion #

You sometimes run into situations where monads are nested. This mostly happens in I/O-bound computations, where you may have a Maybe or Either value embedded inside Task or IO. This can sometimes make working with the 'inner' monad awkward, but in many cases there's a good solution at hand.

Some monads, like Maybe, Either, State, Reader, and Identity, nest nicely inside other monads. Thus, if your 'inner' monad is one of those, you can turn the nested arrangement into a monad in its own right. This may help simplify your code base.

In addition to the common monads listed here, there are few more exotic ones that also play well in a nested configuration. Additionally, if your 'inner' monad is a custom data structure of your own creation, it's up to you to investigate if it nests nicely in another monad. As far as I can tell, though, if you can make it nest in one monad (e.g Task, Async, or IO) you can probably make it nest in any monad.

Next: Software design isomorphisms.

Collecting and handling result values

The answer is traverse. It's always traverse.

I recently came across a Stack Overflow question about collecting and handling sum types (AKA discriminated unions or, in this case, result types). While the question was tagged functional-programming, the overall structure of the code was so imperative, with so much interleaved I/O, that it hardly qualified as functional architecture.

Instead, I gave an answer which involved a minimal change to the code. Subsequently, the original poster asked to see a more functional version of the code. That's a bit too large a task for a Stack Overflow answer, I think, so I'll do it here on the blog instead.

Further comments and discussion on the original post reveal that the poster is interested in two alternatives. I'll start with the alternative that's only discussed, but not shown, in the question. The motivation for this ordering is that this variation is easier to implement than the other one, and I consider it pedagogical to start with the simplest case.

I'll do that in this article, and then follow up with another article that covers the short-circuiting case.

Imperative outset #

To begin, consider this mostly imperative code snippet:

var storedItems = new List<ShoppingListItem>(); var failedItems = new List<ShoppingListItem>(); var errors = new List<Error>(); var state = (storedItems, failedItems, errors); foreach (var item in itemsToUpdate) { OneOf<ShoppingListItem, NotFound, Error> updateResult = await UpdateItem(item, dbContext); state = updateResult.Match<(List<ShoppingListItem>, List<ShoppingListItem>, List<Error>)>( storedItem => { storedItems.Add(storedItem); return state; }, notFound => { failedItems.Add(item); return state; }, error => { errors.Add(error); return state; } ); } await dbContext.SaveChangesAsync(); return Results.Ok(new BulkUpdateResult([.. storedItems], [.. failedItems], [.. errors]));

There's quite a few things to take in, and one has to infer most of the types and APIs, since the original post didn't show more code than that. If you're used to engaging with Stack Overflow questions, however, it's not too hard to figure out what most of the moving parts do.

The most non-obvious detail is that the code uses a library called OneOf, which supplies general-purpose, but rather abstract, sum types. Both the container type OneOf, as well as the two indicator types NotFound and Error are defined in that library.

The Match method implements standard Church encoding, which enables the code to pattern-match on the three alternative values that UpdateItem returns.

One more detail also warrants an explicit description: The itemsToUpdate object is an input argument of the type IEnumerable<ShoppingListItem>.

The implementation makes use of mutable state and undisciplined I/O. How do you refactor it to a more functional design?

Standard traversal #

I'll pretend that we only need to turn the above code snippet into a functional design. Thus, I'm ignoring that the code is most likely part of a larger code base. Because of the implied database interaction, the method isn't a pure function. Unless it's a top-level method (that is, at the boundary of the application), it doesn't exemplify larger-scale functional architecture.

That said, my goal is to refactor the code to an Impureim Sandwich: Impure actions first, then the meat of the functionality as a pure function, and then some more impure actions to complete the functionality. This strongly suggests that the first step should be to map over itemsToUpdate and call UpdateItem for each.

If, however, you do that, you get this:

IEnumerable<Task<OneOf<ShoppingListItem, NotFound, Error>>> results = itemsToUpdate.Select(item => UpdateItem(item, dbContext));

The results object is a sequence of tasks. If we consider Task as a surrogate for IO, each task should be considered impure, as it's either non-deterministic, has side effects, or both. This means that we can't pass results to a pure function, and that frustrates the ambition to structure the code as an Impureim Sandwich.

This is one of the most common problems in functional programming, and the answer is usually: Use a traversal.

IEnumerable<OneOf<ShoppingListItem, NotFound<ShoppingListItem>, Error>> results = await itemsToUpdate.Traverse(item => UpdateItem(item, dbContext));

Because this first, impure layer of the sandwich awaits the task, results is now an immutable value that can be passed to the pure step. This, by the way, assumes that ShoppingListItem is immutable, too.

Notice that I adjusted one of the cases of the discriminated union to NotFound<ShoppingListItem> rather than just NotFound. While the OneOf library ships with a NotFound type, it doesn't have a generic container of that name, so I defined it myself:

internal sealed record NotFound<T>(T Item);

I added it to make the next step simpler.

Aggregating the results #

The next step is to sort the results into three 'buckets', as it were.

// Pure var seed = ( Enumerable.Empty<ShoppingListItem>(), Enumerable.Empty<ShoppingListItem>(), Enumerable.Empty<Error>() ); var result = results.Aggregate( seed, (state, result) => result.Match( storedItem => (state.Item1.Append(storedItem), state.Item2, state.Item3), notFound => (state.Item1, state.Item2.Append(notFound.Item), state.Item3), error => (state.Item1, state.Item2, state.Item3.Append(error))));

It's also possible to inline the seed value, but here I defined it in a separate expression in an attempt at making the code a little more readable. I don't know if I succeeded, because regardless of where it goes, it's hardly idiomatic to break tuple initialization over multiple lines. I had to, though, because otherwise the code would run too far to the right.

The lambda expression handles each result in results and uses Match to append the value to its proper 'bucket'. The outer result is a tuple of the three collections.

Saving the changes and returning the results #

The final, impure step in the sandwich is to save the changes and return the results:

// Impure await dbContext.SaveChangesAsync(); return new OkResult( new BulkUpdateResult([.. result.Item1], [.. result.Item2], [.. result.Item3]));

To be honest, the last line of code is pure, but that's not unusual when it comes to Impureim Sandwiches.

Accumulating the bulk-update result #

So far, I've assumed that the final BulkUpdateResult class is just a simple immutable container without much functionality. If, however, we add some copy-and-update functions to it, we can use them to aggregate the result, instead of an anonymous tuple.

internal BulkUpdateResult Store(ShoppingListItem item) => new([.. StoredItems, item], FailedItems, Errors); internal BulkUpdateResult Fail(ShoppingListItem item) => new(StoredItems, [.. FailedItems, item], Errors); internal BulkUpdateResult Error(Error error) => new(StoredItems, FailedItems, [.. Errors, error]);

I would have personally preferred the name NotFound instead of Fail, but I was going with the original post's failedItems terminology, and I thought that it made more sense to call a method Fail when it adds to a collection called FailedItems.

Adding these three instance methods to BulkUpdateResult simplifies the composing code:

// Impure IEnumerable<OneOf<ShoppingListItem, NotFound<ShoppingListItem>, Error>> results = await itemsToUpdate.Traverse(item => UpdateItem(item, dbContext)); // Pure var result = results.Aggregate( new BulkUpdateResult([], [], []), (state, result) => result.Match( storedItem => state.Store(storedItem), notFound => state.Fail(notFound.Item), error => state.Error(error))); // Impure await dbContext.SaveChangesAsync(); return new OkResult(result);

This variation starts with an empty BulkUpdateResult and then uses Store, Fail, or Error as appropriate to update the state.

Parallel Sequence #

If the tasks you want to traverse are thread-safe, you might consider making the traversal concurrent. You can use Task.WhenAll for that. It has the same type as Sequence, so if you can live with the extra non-determinism that comes with parallel execution, you can use that instead:

internal static async Task<IEnumerable<T>> Sequence<T>(this IEnumerable<Task<T>> tasks) { return await Task.WhenAll(tasks); }

Since the method signature doesn't change, the rest of the code remains unchanged.

Conclusion #

One of the most common stumbling blocks in functional programming is when you have a collection of values, and you need to perform an impure action (typically I/O) for each. This leaves you with a collection of impure values (Task in C#, Task or Async in F#, IO in Haskell, etc.). What you actually need is a single impure value that contains the collection of results.

The solution to this kind of problem is to traverse the collection, rather than mapping over it (with Select, map, fmap, or similar). Note that computer scientists often talk about traversing a data structure like a tree. This is a less well-defined use of the word, and not directly related. That said, you can also write Traverse and Sequence functions for trees.

This article used a Stack Overflow question as the starting point for an example showing how to refactor imperative code to an Impureim Sandwich.

This completes the first variation requested in the Stack Overflow question.

Traversals

How to convert a list of tasks into an asynchronous list, and similar problems.

This article is part of a series of articles about functor relationships. In a previous article you learned about natural transformations, and then how functors compose. You can skip several of them if you like, but you might find the one about functor compositions relevant. Still, this article can be read independently of the rest of the series.

You can go a long way with just a single functor or monad. Consider how useful C#'s LINQ API is, or similar kinds of APIs in other languages - typically map and flatMap methods. These APIs work exclusively with the List monad (which is also a functor). Working with lists, sequences, or collections is so useful that many languages have other kinds of special syntax specifically aimed at working with multiple values: List comprehension.

Asynchronous monads like Task<T> or F#'s Async<'T> are another kind of functor so useful in their own right that languages have special async and await keywords to compose them.

Sooner or later, though, you run into situations where you'd like to combine two different functors.

Lists and tasks #

It's not unusual to combine collections and asynchrony. If you make an asynchronous database query, you could easily receive something like Task<IEnumerable<Reservation>>. This, in isolation, hardly causes problems, but things get more interesting when you need to compose multiple reads.

Consider a query like this:

public static Task<Foo> Read(int id)

What happens if you have a collection of IDs that you'd like to read? This happens:

var ids = new[] { 42, 1337, 2112 }; IEnumerable<Task<Foo>> fooTasks = ids.Select(id => Foo.Read(id));

You get a collection of Tasks, which may be awkward because you can't await it. Perhaps you'd rather prefer a single Task that contains a collection: Task<IEnumerable<Foo>>. In other words, you'd like to flip the functors:

IEnumerable<Task<Foo>> Task<IEnumerable<Foo>>

The top type is what you have. The bottom type is what you'd like to have.

The combination of asynchrony and collections is so common that .NET has special methods to do that. I'll briefly mention one of these later, but what's the general solution to this problem?

Whenever you need to flip two functors, you need a traversal.

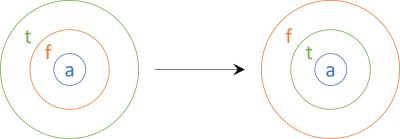

Sequence #

As is almost always the case, we can look to Haskell for a canonical definition of traversals - or, as the type class is called: Traversable.

A traversable functor is a functor that enables you to flip that functor and another functor, like the above C# example. In more succinct syntax:

t (f a) -> f (t a)

Here, t symbolises any traversable functor (like IEnumerable<T> in the above C# example), and f is another functor (like Task<T>, above). By flipping the functors I mean making t and f change places; just like IEnumerable and Task, above.

Thinking of functors as containers we might depict the function like this:

To the left, we have an outer functor t (e.g. IEnumerable) that contains another functor f (e.g. Task) that again 'contains' values of type a (in C# typically called T). We'd like to flip how the containers are nested so that f contains t.

Contrary to what you might expect, the function that does that isn't called traverse; it's called sequence. (For those readers who are interested in Haskell specifics, the function I'm going to be talking about is actually called sequenceA. There's also a function called sequence, but it's not as general. The reason for the odd names are related to the evolution of various Haskell type classes.)

The sequence function doesn't work for any old functor. First, t has to be a traversable functor. We'll get back to that later. Second, f has to be an applicative functor. (To be honest, I'm not sure if this is always required, or if it's possible to produce an example of a specific functor that isn't applicative, but where it's still possible to implement a sequence function. The Haskell sequenceA function has Applicative f as a constraint, but as far as I can tell, this only means that this is a sufficient requirement - not that it's necessary.)

Since tasks (e.g. Task<T>) are applicative functors (they are, because they are monads, and all monads are applicative functors), that second requirement is fulfilled for the above example. I'll show you how to implement a Sequence function in C# and how to use it, and then we'll return to the general discussion of what a traversable functor is:

public static Task<IEnumerable<T>> Sequence<T>( this IEnumerable<Task<T>> source) { return source.Aggregate( Task.FromResult(Enumerable.Empty<T>()), async (acc, t) => { var xs = await acc; var x = await t; return xs.Concat(new[] { x }); }); }

This Sequence function enables you to flip any IEnumerable<Task<T>> to a Task<IEnumerable<T>>, including the above fooTasks:

Task<IEnumerable<Foo>> foosTask = fooTasks.Sequence();

You can also implement sequence in F#:

// Async<'a> list -> Async<'a list> let sequence asyncs = let go acc t = async { let! xs = acc let! x = t return List.append xs [x] } List.fold go (fromValue []) asyncs

and use it like this:

let fooTasks = ids |> List.map Foo.Read let foosTask = fooTasks |> Async.sequence

For this example, I put the sequence function in a local Async module; it's not part of any published Async module.

These C# and F# examples are specific translations: From lists of tasks to a task of list. If you need another translation, you'll have to write a new function for that particular combination of functors. Haskell has more general capabilities, so that you don't have to write functions for all combinations. I'm not assuming that you know Haskell, however, so I'll proceed with the description.

Traversable functor #

The sequence function requires that the 'other' functor (the one that's not the traversable functor) is an applicative functor, but what about the traversable functor itself? What does it take to be a traversable functor?

I have to admit that I have to rely on Haskell specifics to a greater extent than normal. For most other concepts and abstractions in the overall article series, I've been able to draw on various sources, chief of which are Category Theory for Programmers. In various articles, I've cited my sources whenever possible. While I've relied on Haskell libraries for 'canonical' ways to represent concepts in a programming language, I've tried to present ideas as having a more universal origin than just Haskell.

When it comes to traversable functors, I haven't come across universal reasoning like that which gives rise to concepts like monoids, functors, Church encodings, or catamorphisms. This is most likely a failing on my part.

Traversals of the Haskell kind are, however, so useful that I find it appropriate to describe them. When consulting, it's a common solution to a lot of problems that people are having with functional programming.

Thus, based on Haskell's Data.Traversable, a traversable functor must:

- be a functor

- be a 'foldable' functor

- define a sequence or traverse function

Haskell comes with a Foldable type class. It defines a class of data that has a particular type of catamorphism. As I've outlined in my article on catamorphisms, Haskell's notion of a fold sometimes coincides with a (or 'the') catamorphism for a type, and sometimes not. For Maybe and List they do coincide, while they don't for Either or Tree. It's not that you can't define Foldable for Either or Tree, it's just that it's not 'the' general catamorphism for that type.

I can't tell whether Foldable is a universal abstraction, or if it's just an ad-hoc API that turns out to be useful in practice. It looks like the latter to me, but my knowledge is only limited. Perhaps I'll be wiser in a year or two.

I will, however, take it as licence to treat this topic a little less formally than I've done with other articles. While there are laws associated with Traversable, they are rather complex, so I'm going to skip them.

The above requirements will enable you to define traversable functors if you run into some more exotic ones, but in practice, the common functors List, Maybe, Either, Tree, and Identity are all traversable. That it useful to know. If any of those functors is the outer functor in a composition of functors, then you can flip them to the inner position as long as the other functor is an applicative functor.

Since IEnumerable<T> is traversable, and Task<T> (or Async<'T>) is an applicative functor, it's possible to use Sequence to convert IEnumerable<Task<Foo>> to Task<IEnumerable<Foo>>.

Traverse #

The C# and F# examples you've seen so far arrive at the desired type in a two-step process. First they produce the 'wrong' type with ids.Select(Foo.Read) or ids |> List.map Foo.Read, and then they use Sequence to arrive at the desired type.

When you use two expressions, you need two lines of code, and you also need to come up with a name for the intermediary value. It might be easier to chain the two function calls into a single expression:

Task<IEnumerable<Foo>> foosTask = ids.Select(Foo.Read).Sequence();

Or, in F#:

let foosTask = ids |> List.map Foo.Read |> Async.sequence

Chaining Select/map with Sequence/sequence is so common that it's a named function: traverse. In C#:

public static Task<IEnumerable<TResult>> Traverse<T, TResult>( this IEnumerable<T> source, Func<T, Task<TResult>> selector) { return source.Select(selector).Sequence(); }

This makes usage a little easier:

Task<IEnumerable<Foo>> foosTask = ids.Traverse(Foo.Read);

In F# the implementation might be similar:

// ('a -> Async<'b>) -> 'a list -> Async<'b list> let traverse f xs = xs |> List.map f |> sequence

Usage then looks like this:

let foosTask = ids |> Async.traverse Foo.Read